Ask a candidate how they handle conflict, and they will describe a version of themselves that handles it exceptionally well. Ask them to describe a specific time they navigated one, and you will learn something real. That is the entire logic of the behavioral interview.

A behavioral interview is a structured methodology that asks candidates to describe specific past experiences in order to predict future performance. It operates on a straightforward premise: past behavior in a comparable situation is the strongest available predictor of future behavior. In an era of AI hiring and automated screening, it remains the gold standard of evidence-based candidate assessment.

In 2026, behavioral interviewing sits at the center of serious candidate evaluation frameworks. It improves candidate experience by creating fairer, more consistent conversations, strengthens the candidate journey by replacing gut-feel with structured evidence, and gives hiring teams a defensible, repeatable process that holds up long after the offer is signed.

What is a Behavioral Interview?

A behavioral interview is a competency-based evaluation method in which an interviewer asks candidates to provide specific, concrete examples from their professional history that demonstrate particular skills, behaviors, or qualities relevant to the role being assessed.

The defining characteristic is specificity. A behavioral question does not ask for a candidate’s philosophy, preference, or theoretical approach. It asks for a situation, a task, a set of actions, and a measurable outcome. This structure, most commonly formalized as the STAR framework (Situation, Task, Action, Result), ensures that every response contains the same structural elements and can therefore be evaluated against a consistent rubric.

In practice, behavioral interview questions typically begin with phrases like “Tell me about a time when,” “Describe a situation in which,” or “Give me an example of.” The signal quality of the response depends heavily on two variables: the precision of the question design (does it target a specific, role-relevant competency?) and the interviewer’s skill at probing for completeness when a candidate’s initial response is thin, vague, or hypothetical.

In 2026, the format has evolved to include asynchronous behavioral interviews (recorded video responses to written behavioral questions, reviewed at the hiring team’s convenience) and AI-scored behavioral assessments that analyze the structural completeness and competency relevance of responses at scale.

Are You Running a Behavioral Interview or Just Asking Better Small Talk?

There is a version of the behavioral interview that exists in name only. The job posting says “competency-based interview.” The candidate receives a confirmation email referencing “behavioral questions.” And then the interviewer spends 45 minutes asking about career trajectory, hypothetical scenarios, and what the candidate likes about the company’s mission. The questions start with “Tell me about a time” but trail into “so what would you do if” when the first answer feels thin. The interviewer makes a note that says “great communication” and moves to the next candidate. This is not a behavioral interview. It is an unstructured conversation with behavioral vocabulary layered over it.

The failure mode matters because the predictive validity that makes behavioral interviewing worth the investment depends entirely on structural integrity. An interview that mixes behavioral and hypothetical questions, uses no scoring rubric, and allows interviewers to follow different conversational paths with different candidates is not measuring competencies consistently. It is measuring a combination of communication fluency, relatability to the interviewer, and the candidate’s familiarity with the behavioral interview format, none of which are reliable proxies for job performance.

The research on unstructured versus structured interviewing is among the most replicated findings in industrial-organizational psychology. Unstructured interviews, which feel natural and productive to the people conducting them, have a predictive validity coefficient of approximately $r = 0.38$. Fully structured behavioral interviews with standardized questions, consistent rubrics, and calibrated scoring achieve $r = 0.55$ or higher. The difference is not in the quality of the interviewers. It is in the design of the process they are following.

Companies using structured behavioral interview programs report a 37% improvement in hiring manager satisfaction with the quality of candidates hired compared to those using unstructured or semi-structured formats. They also report lower rates of first-year attrition, fewer performance improvement plans in the 12-month post-hire window, and significantly stronger inter-rater reliability when multiple interviewers are assessing the same candidate.

For TA leaders, the practical challenge is not convincing anyone that behavioral interviews are better. Most hiring leaders already believe this. The challenge is maintaining structural integrity across a distributed interviewing organization where every hiring manager has their own preferred conversation style, every recruiter has their own interpretation of “competency-based,” and no one is consistently scoring responses against a rubric because no one ever built one.

Consider the scenario that makes the cost of this gap concrete. A professional services firm hires a senior consultant who performed brilliantly in the interview: articulate, confident, clearly intelligent, and enthusiastic about the role. Six months later, the consultant is on a performance improvement plan. The competency that is failing is the one the interview never actually tested: the ability to manage ambiguity and deliver under conditions of incomplete information.

The interview asked about project experience, client relationships, and career goals. It did not include a single structured behavioral question targeting problem-solving under uncertainty, because no one had mapped that competency to the interview before the process started. The PIP costs the firm an estimated $47,000 in manager time, HR involvement, and delayed project delivery. The behavioral question that would have surfaced the gap costs nothing to write.

The ROI math on behavioral interview quality is real. Organizations that implement structured behavioral interview training for hiring managers and standardized question banks by role report a measurable improvement in quality of hire scores at 12 months. If that improvement reduces performance-related attrition by just 8 percentage points across 100 hires per year, and the average replacement cost is $30,000 per departure, the annual saving is $240,000, before accounting for the productivity and morale costs of managing underperforming hires.
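
The arithmetic behind that figure can be checked in a few lines of Python. This is a minimal sketch: the function name `annual_attrition_saving` and its parameter names are illustrative assumptions, and the inputs are the ones quoted in the paragraph above.

```python
def annual_attrition_saving(hires_per_year: int,
                            attrition_reduction_points: float,
                            replacement_cost: float) -> float:
    """Estimate the yearly saving from avoided departures.

    attrition_reduction_points is expressed in percentage points,
    so 8 means attrition falls by 8 hires per 100.
    """
    avoided_departures = hires_per_year * attrition_reduction_points / 100
    return avoided_departures * replacement_cost


# Inputs quoted in the text: 100 hires, 8 points, $30,000 per departure.
saving = annual_attrition_saving(100, 8, 30_000)
print(f"${saving:,.0f}")  # $240,000
```

Changing any single input shows how sensitive the estimate is: halving the attrition reduction to 4 points halves the saving.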

The Psychology Behind Behavioral Interviews

Behavioral interviewing works because of a well-established principle in personality psychology: behavioral consistency. People tend to respond to similar situations in similar ways because their habitual approaches to challenges are rooted in skills, values, and cognitive patterns that are relatively stable over time. The behavioral interview accesses those patterns through evidence rather than assertion.

The STAR Framework and Response Architecture

The STAR framework (Situation, Task, Action, Result) is the structural backbone of behavioral interview design and response evaluation. Each element serves a distinct analytical purpose. The Situation and Task establish context and complexity. The Action is the analytically richest component: it is where the candidate’s actual behavioral choices, rather than the circumstances they inherited, are visible. The Result provides outcome data that allows an assessor to evaluate whether the actions taken were effective.

A well-structured behavioral question produces responses that move linearly through all four elements. A poorly probed response frequently stalls at the Situation level or skips to the Result without describing what the candidate specifically did. The interviewer’s job is to recognize which element is missing and probe directly: “That’s helpful context. Tell me specifically what you did next” or “What was the measurable outcome?” In 2026, AI scoring of asynchronous behavioral responses uses the STAR framework as its primary analytical lens, flagging responses that are hypothetical rather than experience-based and scoring relevance to the target competency.

Attribution Error and the Halo Effect

Unstructured interviews are particularly vulnerable to attribution error (attributing outcomes to personal qualities rather than the circumstances the candidate was operating in) and the halo effect (allowing one strong impression to color assessment of all competencies). Both biases are significantly mitigated in behavioral interviews by the structural requirement to evaluate specific actions rather than general impressions, and by competency-specific rubrics that force assessors to rate each dimension independently.

The residual risk is “good story” bias: a compelling narrative about a moderately relevant experience can outperform direct evidence from a less polished candidate. Behaviorally Anchored Rating Scales (BARS), which define what strong, average, and weak responses look like per competency with specific behavioral descriptors, are the most effective counter.

Introversion, Performance Anxiety, and Interview Equity

Live behavioral interviews systematically advantage fluent verbal performers. The ability to recall and narrate a specific experience under time pressure, in front of evaluators, while simultaneously monitoring reactions and calibrating the narrative requires real-time communication skills that are not equivalent to the skills most roles actually require. Asynchronous behavioral formats, which allow candidates to record responses without a live audience, partially mitigate this limitation.

Candidates who think in writing, who need a moment to access specific memories, or who are less comfortable in high-stimulus social evaluations perform significantly more consistently in asynchronous formats, producing a more accurately and more equitably filtered candidate pool at the live interview stage.

Behavioral Interview vs. Other Interview Formats

The behavioral interview occupies a specific and evidence-backed position within the broader landscape of interview methodologies:

| Interview Format | Core Question Type | Predictive Validity | Best Use |
|---|---|---|---|
| Behavioral Interview | “Tell me about a time when…” | $r = 0.51$ to $0.61$ | Competency-based screening at any funnel stage |
| Situational Interview | “What would you do if…” | $r = 0.35$ to $0.45$ | Assessing judgment in hypothetical role scenarios |
| Unstructured Interview | Open-ended conversation | $r = 0.28$ to $0.38$ | Relationship building; poor standalone predictor |
| Case Interview | Real-time problem-solving exercise | $r = 0.40$ to $0.50$ | Analytical and consulting role assessment |
| Competency Panel Interview | Multiple assessors rating against a competency framework | $r = 0.55$ to $0.65$ | Senior roles requiring multi-assessor validation |
| Structured Interview (General) | Standardized questions with rubric scoring | $r = 0.44$ to $0.54$ | Consistent early-funnel screening at volume |

The research consensus is that behavioral interviewing outperforms situational interviewing for most roles because past behavior provides empirical evidence rather than hypothetical judgment. Situational interviews are useful for roles where the candidate cannot have prior experience in the specific scenario (newly qualified graduates, career changers entering a domain), but should not be the primary format when relevant behavioral history exists.

The highest validity scores are achieved when behavioral interviews are combined with structured competency panels, in which multiple assessors independently rate the same candidate against the same competency framework and then calibrate scores before reaching a consensus. This approach sharply reduces the single-assessor halo effect and produces inter-rater reliability scores that justify the additional time investment for senior and high-impact roles.

What the Experts Say

The behavioral interview, done properly, is not a conversation. It is a structured investigation. The interviewer is not learning who this person is. They are gathering evidence about what this person does when it matters.

– Bradford Smart, author of “Topgrading” and pioneer of structured interview methodology in executive hiring

How to Design and Measure Behavioral Interview Effectiveness

Designing effective behavioral interviews requires connecting the question design to the role’s actual competency requirements, not to a generic list of “good interview questions” available from any HR website.

Formula: Inter-Rater Reliability

$\kappa = \frac{P_o - P_e}{1 - P_e}$

Where $P_o$ is the observed agreement between assessors and $P_e$ is the expected agreement by chance. A Cohen’s kappa of 0.61 or above indicates substantial agreement between assessors and suggests that the rubric is sufficiently specific for consistent application. Below 0.40 indicates that assessors are applying the rubric differently, which signals a need for calibration training or rubric redesign.
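
To make the formula concrete, here is a minimal Python sketch for two assessors scoring the same candidates on a rubric. The function name `cohens_kappa` and the sample scores are illustrative assumptions, not data from any program described here. The sketch computes unweighted kappa, which treats every disagreement as equally serious; for ordinal rubric scores, a weighted variant is sometimes preferred.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of items")
    n = len(rater_a)

    # P_o: share of items on which the two raters gave the same score.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # P_e: agreement expected by chance, from each rater's score frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    if p_e == 1:
        raise ValueError("Kappa is undefined when both raters use one identical score")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical rubric scores (1-5) from two assessors for ten candidates.
assessor_1 = [3, 4, 2, 5, 3, 4, 1, 3, 4, 2]
assessor_2 = [3, 4, 3, 5, 3, 3, 1, 3, 4, 2]
print(round(cohens_kappa(assessor_1, assessor_2), 2))  # 0.73
```

In this made-up example the assessors agree on 8 of 10 candidates ($P_o = 0.80$) and chance agreement is $P_e = 0.25$, so kappa falls in the substantial-agreement band described above.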

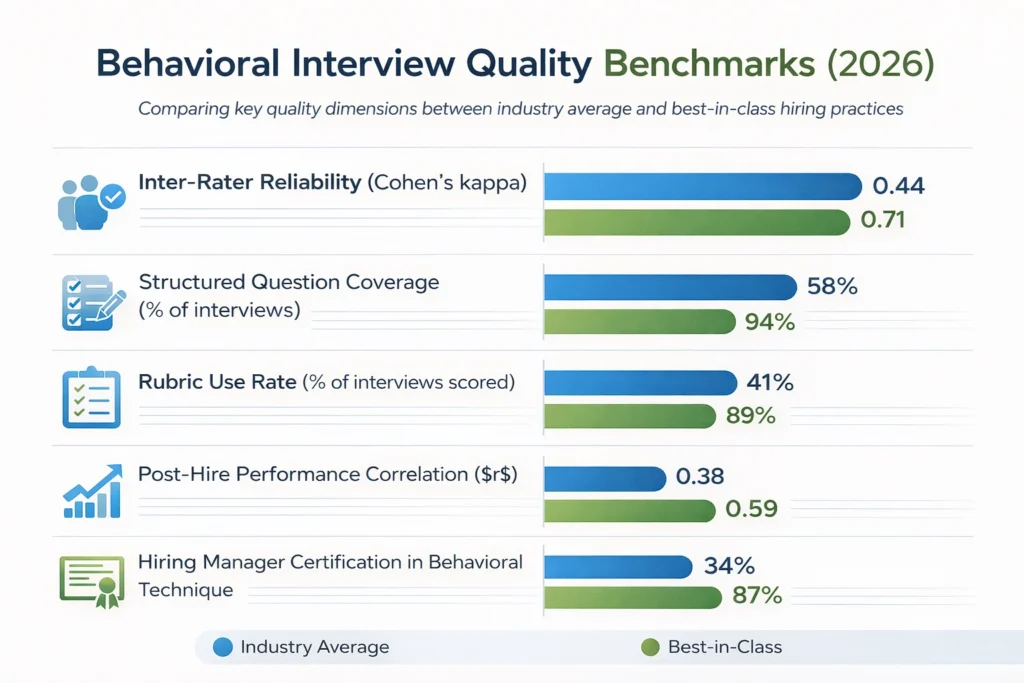

Behavioral Interview Quality Benchmarks (2026)

| Quality Dimension | Industry Average | Best-in-Class |
|---|---|---|
| Inter-Rater Reliability (Cohen’s kappa) | 0.44 | 0.71 |
| Structured Question Coverage (% of interviews) | 58% | 94% |
| Rubric Use Rate (% of interviews scored) | 41% | 89% |
| Post-Hire Performance Correlation ($r$) | 0.38 | 0.59 |
| Hiring Manager Certification in Behavioral Technique | 34% | 87% |

The gap between industry average and best-in-class maps almost entirely to training and process discipline. Organizations that train hiring managers in behavioral interview technique, provide standardized question banks by role, and require rubric-based scoring achieve validity coefficients that approach the theoretical ceiling for interview-based prediction. Organizations that assume behavioral interviewing is intuitive and requires no structural support produce results that are only marginally better than unstructured conversation.

Key Strategies for Improving Behavioral Interview Quality

How AI Enhances Behavioral Interview Design and Scoring

AI-Assisted Question Generation

Building a behavioral interview question bank for a new role from scratch requires deep competency analysis, question design expertise, and validation work that most hiring teams do not have the time to perform. AI-powered question generators, trained on validated behavioral interview research and organized around established competency frameworks, produce a draft question bank for any role in minutes. The draft requires human review against the specific role context, but it eliminates the blank-page problem that causes most organizations to default to generic behavioral questions not actually measuring the right competencies.

NLP-Based Response Analysis

For asynchronous behavioral interview responses, NLP analysis evaluates structural completeness (does the response include situation, task, action, and result?), relevance of the example to the target competency, specificity of behavioral description, and measurability of the stated outcome. This does not replace human judgment on qualitative dimensions, but it flags structurally incomplete responses for closer human review and ranks the completeness of a candidate pool’s responses so reviewers can prioritize their time efficiently.
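
The triage idea can be illustrated with a deliberately crude Python sketch. It is a keyword heuristic, not an NLP model, and it is far simpler than anything a real scoring product would use. The function name `screen_response`, the regular expressions, and the flag names are all assumptions made for illustration. It checks only two things: whether the response uses hypothetical phrasing, and whether it contains any number that might indicate a measurable outcome.

```python
import re

# Illustrative cue patterns only; a real system would need a trained model.
HYPOTHETICAL = re.compile(r"\b(?:i|we)\s+would\b|\bif\s+i\s+were\b", re.IGNORECASE)
QUANTIFIED = re.compile(r"\d")


def screen_response(text: str) -> dict:
    """Flag a transcript for closer human review using two surface checks."""
    flags = {
        # "I would..." suggests the candidate is describing a hypothetical.
        "hypothetical_phrasing": bool(HYPOTHETICAL.search(text)),
        # Any digit is treated as a weak hint of a measurable result.
        "quantified_result": bool(QUANTIFIED.search(text)),
    }
    flags["needs_human_review"] = (
        flags["hypothetical_phrasing"] or not flags["quantified_result"]
    )
    return flags


print(screen_response("I would sit down with both parties and listen."))
print(screen_response("I rebuilt the onboarding checklist and cut ramp time by 30%."))
```

The first response is flagged for review and the second is not. The output is only a prioritization signal for reviewers, consistent with the point above that this kind of analysis does not replace human judgment.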

Post-Hire Validation and Question Improvement

The most powerful long-term AI application in behavioral interview design is the closed-loop validation cycle: tracking the correlation between candidates’ behavioral interview scores on specific competencies and their subsequent performance ratings on those same competencies at 6 and 12 months. Questions where high scores consistently predict high performance are retained. Questions where scores do not correlate with performance are redesigned. Over time, this produces a behavioral question bank empirically validated against actual job performance data within the organization, rather than relying solely on external research or general competency theory.

Bias Detection in Question Design

AI can audit behavioral interview question banks for language patterns associated with adverse impact: questions implicitly referencing experiences more common in certain demographic groups, terminology more accessible to specific educational backgrounds, and phrasing that triggers performance-impairing anxiety in particular populations. This audit does not guarantee bias-free question design, but it identifies a category of design problems that is extremely difficult to detect through human review of familiar-seeming text.

Behavioral Interviews and Diversity and Inclusion

The behavioral interview is one of the more equitable interview formats available, but its equity credentials depend entirely on how it is designed and administered.

Standardization as an Equalizer

When every candidate for a given role is asked the same behavioral questions in the same sequence and scored against the same rubric, the structural advantages that accrue to practiced interview performers are reduced. The well-coached candidate from an elite institution and the candidate from a non-traditional background who has never had a formal mock interview face the same question set. The framework does not eliminate preparation advantages, but it reduces their leverage relative to the underlying behavioral evidence.

Experience Breadth and Access to Compelling Stories

A structural equity concern is that the quality of a candidate’s behavioral examples is partly a function of the richness and variety of their professional experiences, which is itself a function of the access they have had to meaningful opportunities. Candidates from resource-rich organizations have had access to high-stakes situations that produce compelling narratives. Candidates from under-resourced environments may have demonstrated equivalent competence under more constrained conditions, but their stories may sound less impressive in a conventional rubric.

The mitigation is competency-level rubric design that rewards the quality of judgment and action demonstrated, not the scale or resources of the situation. A response about resolving a difficult team dynamic in a three-person organization can score as high as one about managing a global restructuring if the rubric evaluates sophistication of problem-solving rather than impressiveness of setting.

Language and Cultural Communication Norms

Behavioral interview conventions are culturally specific. The expectation that a candidate will confidently narrate their individual contribution, frame themselves as the decisive actor, and claim credit for specific outcomes reflects a communication norm more natural in some cultural contexts than others. Candidates from cultures where individual achievement is discussed with more restraint or where team contribution is framed collectively may systematically under-perform in behavioral formats relative to their actual competency level.

Interviewer training that includes cultural communication norm awareness, rubric language that accommodates collective framing, and asynchronous formats that remove real-time social pressure are practical accommodations that preserve structural validity while reducing cultural access barriers.

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| Candidates Giving Hypothetical Rather Than Real Responses | Redirect immediately: “I am looking for a specific example from your experience. Can you think of a time when this actually happened?” |
| Interviewers Accepting Thin or Vague Responses | Train in STAR probing technique; use a mandatory question card that prompts follow-up for each missing element |
| Inconsistent Scoring Across Assessors | Implement BARS rubrics and run pre-cycle calibration sessions; track inter-rater reliability as a program quality metric |
| Behavioral Question Banks That Are Too Generic | Map every question to a specific role-level competency; review and update banks at least annually against role performance data |
| Candidate Anxiety Suppressing Response Quality | Provide explicit behavioral interview guidance and sample questions in the candidate preparation email; consider asynchronous format for early screening stages |

Real-World Case Studies

Case Study 1: The Consulting Firm

A mid-size management consulting firm was experiencing a recurring pattern: candidates who performed impressively in their first-round interviews were failing in the role at a rate that hiring managers found inexplicable. A structured audit of their interview process revealed that first-round interviews were entirely unstructured, with each partner conducting a different conversation and arriving at scores based on personal impression.

They redesigned the first-round interview as a structured behavioral format with six role-specific competency areas, a standardized five-question bank per competency area, and a BARS rubric for scoring. They also required all partners conducting first-round interviews to complete a four-hour behavioral interviewing certification. Within two hiring cohorts, 12-month performance ratings for new hires improved significantly. Regrettable attrition in the first year dropped from 22% to 11%. Partner confidence in the hiring process, measured by an internal survey, rose from 51% to 79%.

Case Study 2: The Technology Company

A technology company that had been using behavioral interviews informally for several years implemented a formal AI-assisted post-hire validation cycle: for every hire over a 24-month period, they tracked the correlation between first-round behavioral interview scores on five target competencies and 12-month manager performance ratings on those same competencies. The analysis revealed that three of their five behavioral questions had strong predictive validity ($r$ above 0.50) and two had essentially no correlation with downstream performance ($r$ below 0.15).

The two non-predictive questions were redesigned based on the performance data. In the following hiring cycle, the average correlation between behavioral interview scores and 12-month performance improved from $r = 0.41$ to $r = 0.58$, approaching the theoretical ceiling for single-interview prediction.

Case Study 3: The Retail Chain

A national retail chain was trying to improve diversity of hire at the store manager level while maintaining the performance quality of its management cohort. They had observed that diverse candidates were advancing through the resume screening stage at reasonable rates but were being screened out at the behavioral interview stage at significantly higher rates than otherwise comparable candidates.

A bias audit of their behavioral question bank found that two of their eight standard questions were implicitly referencing situations more common in candidates who had worked in corporate retail environments, which correlated with a demographic profile that underrepresented the diversity they were seeking. They replaced those two questions with competency-equivalent questions drawn from a broader range of professional contexts. The pass rate for diverse candidates through the behavioral interview stage improved by 28% in the following two hiring cycles, with no measurable change in the 12-month performance of the resulting hires.

Building a Behavioral Interview Quality Dashboard: What to Track?

A behavioral interview program without measurement infrastructure is a process masquerading as a system. Six metrics form the core of a useful performance dashboard:

Behavioral Interview Across the Hiring Funnel

Early-Funnel Behavioral Screening

Asynchronous behavioral interviews used as early-funnel screening tools allow TA teams to assess behavioral competencies at volume before investing recruiter and hiring manager time in live conversations. A two- to three-question asynchronous behavioral screen targeting the one or two competencies most critical for performance can replace the first-round phone screen with significantly richer data. The candidate pool that advances to live interview stages has already demonstrated, in their own words, that they can produce behavioral evidence in the target competency areas.

Mid-Funnel Structured Behavioral Interview

The primary deployment: a standardized behavioral interview guide with four to six questions, each targeting a distinct role-relevant competency, conducted by a trained interviewer or panel, scored against a BARS rubric. This is the stage where behavioral interviewing’s predictive validity is most directly realized, and where the investment in question design, rubric development, and interviewer training pays the most consistent dividend.

Final Panel Behavioral Calibration

For senior and high-impact roles, final-stage panel interviews include a calibration session in which each assessor shares their evidence-based scores, discusses discrepancies, and reaches a consensus position. Provided each assessor scores independently before the session, this reduces anchoring to the first assessor’s opinion, surfaces evidence that individual assessors may have weighted differently, and produces a documented, defensible hiring decision.
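
One way to prepare for such a session is to collect the independent scores first and list the competencies where assessors diverge most, so discussion time goes to real discrepancies. The Python sketch below is an assumption-laden illustration: the function name `calibration_agenda`, the one-point spread threshold, and the sample panel scores are all hypothetical.

```python
def calibration_agenda(panel_scores, max_spread=1):
    """List competencies whose score spread exceeds max_spread.

    panel_scores maps competency -> {assessor: score}. Returns
    (competency, spread) pairs, widest disagreement first.
    """
    agenda = []
    for competency, by_assessor in panel_scores.items():
        values = list(by_assessor.values())
        spread = max(values) - min(values)
        if spread > max_spread:
            agenda.append((competency, spread))
    return sorted(agenda, key=lambda item: item[1], reverse=True)


# Hypothetical independent scores (1-5) from three assessors.
panel_scores = {
    "managing_ambiguity": {"assessor_a": 2, "assessor_b": 5, "assessor_c": 3},
    "client_relationships": {"assessor_a": 4, "assessor_b": 4, "assessor_c": 5},
}
print(calibration_agenda(panel_scores))  # [('managing_ambiguity', 3)]
```

Here only the first competency reaches the agenda. The one-point gap on client relationships is within the assumed tolerance and would not need discussion.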

Post-Hire Behavioral Onboarding

The behavioral examples a candidate provides during their interview are a resource, not just an evaluation instrument. High-quality behavioral responses identify the kinds of situations the candidate has navigated effectively and the contexts in which they do their best work. Organizations that share de-identified interview insights with onboarding managers use this information to design early assignments that play to the new hire’s demonstrated strengths, accelerating time-to-contribution and reducing the early-tenure friction that drives first-year attrition.

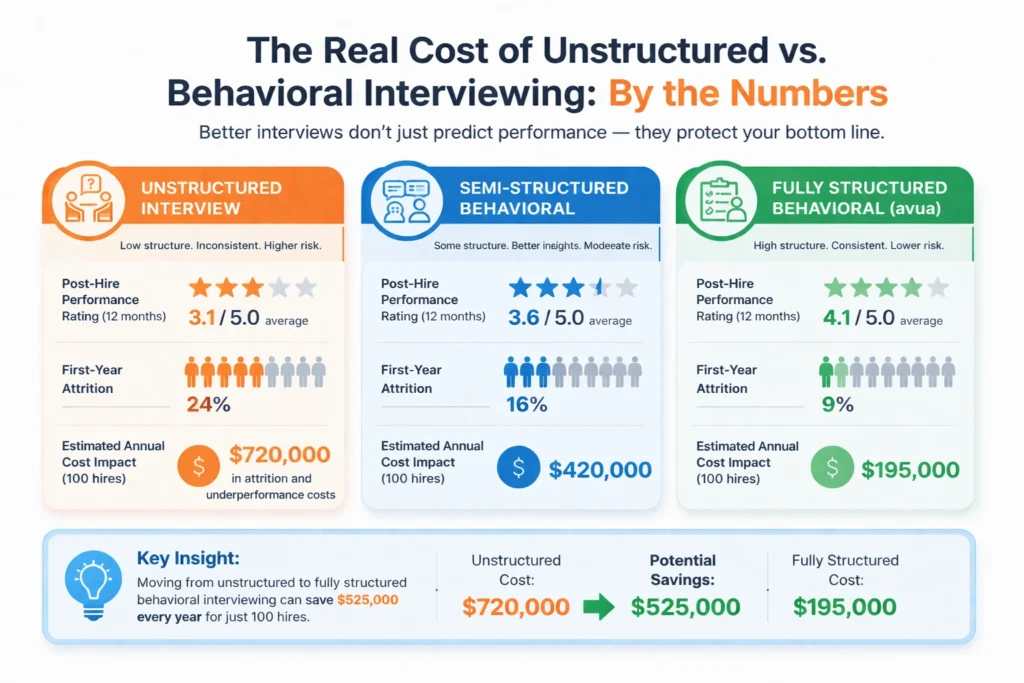

The Real Cost of Unstructured vs. Behavioral Interviewing: By the Numbers

| Interview Approach | Post-Hire Performance Rating (12 months) | First-Year Attrition | Estimated Annual Cost Impact (100 hires) |
|---|---|---|---|
| Unstructured Interview | 3.1 / 5.0 average | 24% | $720,000 in attrition and underperformance costs |
| Semi-Structured Behavioral | 3.6 / 5.0 average | 16% | $420,000 |
| Fully Structured Behavioral (avua) | 4.1 / 5.0 average | 9% | $195,000 |

The cost calculation uses a blended replacement cost of $30,000 per departure and a productivity gap cost of $15,000 per underperforming hire in the first 12 months. The improvement from unstructured to fully structured behavioral interviewing represents a recoverable cost of $525,000 annually at 100 hires per year, from a process change that requires training investment and rubric development but no additional headcount.

Related Terms

| Term | Definition |
|---|---|
| STAR Framework | Situation, Task, Action, Result: the structural model for both asking and evaluating behavioral interview questions |
| Competency Framework | The defined set of skills, behaviors, and knowledge areas that predict performance in a given role or organization |
| Behaviorally Anchored Rating Scale (BARS) | A scoring rubric that defines what strong, average, and weak behavioral responses look like with specific descriptors |
| Structured Interview | Any interview format that uses standardized questions, consistent sequencing, and defined scoring criteria |
| Inter-Rater Reliability | The degree of agreement between independent assessors scoring the same candidate, used as a quality metric for interview programs |
| Situational Interview | An interview format using hypothetical scenarios rather than past experience, often paired with behavioral questions for junior roles |

Frequently Asked Questions

What makes a behavioral interview question good?

A good behavioral question targets a single, specific competency that is relevant to performance in the role. It is unambiguous enough that all candidates interpret it the same way. It cannot be answered credibly with a hypothetical. And it produces responses that are evaluable against an anchored rubric, meaning there is a clear difference between a strong response and a weak one that assessors can apply consistently. Generic questions that target vague qualities like “leadership” or “communication” rarely meet all four criteria.

How many behavioral questions should an interview include?

Four to six questions per interview session is the evidence-based standard. With fewer than four questions, you may not have sufficient behavioral evidence to make a defensible competency assessment across the required dimensions. With more than six, candidate fatigue and time constraints reduce the quality of later responses even as the number of data points increases. If more competencies need to be assessed than one session can cover, distribute them across multiple interview sessions or assessors rather than compressing them into a single extended interview.

What should an interviewer do when a candidate cannot think of a specific example?

First, check whether the question is genuinely role-relevant and realistic for the candidate’s experience level. If a junior candidate genuinely has not faced the situation the question describes, the question may not be appropriate for their stage. If the question is appropriate, allow the candidate a moment to think, and if needed, offer a reframe: “Take a moment. This could be from any professional context, including internships, volunteer work, or academic projects.” If the candidate still cannot provide an example, move to the next question and return at the end if time permits. A pattern of inability to provide behavioral examples across multiple competencies is itself a signal worth documenting.

Can behavioral interviews be legally challenged?

Like all employment practices, behavioral interview processes can be subject to legal scrutiny if they produce adverse impact outcomes (certain protected groups failing at disproportionately higher rates) or if the questions themselves touch on protected characteristics. The defenses against legal challenge are the same as those that produce better interview outcomes: standardized questions applied consistently to all candidates, scoring rubrics based on job-relevant competencies, documented evidence of the job-relatedness of the competency framework, and calibrated assessors applying consistent standards. A well-designed behavioral interview program that documents its validity and applies it consistently is more legally defensible than an unstructured process, not less.
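
A common first screen for adverse impact in the United States is the four-fifths rule of thumb from the Uniform Guidelines on Employee Selection Procedures, which this article does not otherwise cover: if one group’s pass rate is below 80% of the highest group’s pass rate, the stage merits closer review. The Python sketch below is illustrative only. The function name `impact_ratio` and the pass counts are hypothetical, and a ratio under 0.8 is a prompt for investigation, not a legal finding.

```python
def impact_ratio(passed_focal, total_focal, passed_reference, total_reference):
    """Ratio of the focal group's pass rate to the reference group's pass rate."""
    if total_focal <= 0 or total_reference <= 0 or passed_reference <= 0:
        raise ValueError("Totals and reference passes must be positive")
    rate_focal = passed_focal / total_focal
    rate_reference = passed_reference / total_reference
    return rate_focal / rate_reference


# Hypothetical interview-stage outcomes: 18 of 60 versus 45 of 100 pass.
ratio = impact_ratio(18, 60, 45, 100)
print(round(ratio, 2), "review" if ratio < 0.8 else "ok")  # 0.67 review
```

Tracking this ratio per interview stage, rather than only at offer, shows which stage is producing the disparity.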

How should candidates prepare for a behavioral interview?

Effective preparation involves identifying four to six professional experiences that demonstrate strong performance across different competency areas (leadership, problem-solving, communication, conflict navigation, delivery under pressure) and structuring each as a clear STAR narrative. The goal is not to script answers word-for-word but to have a library of well-organized experiences that can be deployed and adapted across different behavioral questions. The most common preparation failure is being able to recall what happened but not having thought through the specific actions taken and the measurable outcomes achieved, which are the elements the interviewer most needs to evaluate.

Conclusion

The behavioral interview is the closest thing the hiring profession has to a scientific instrument. It is not perfect, and no honest assessment of predictive validity should suggest it is. Candidates can over-prepare, interviewers can be inconsistent, and past behavior in one context does not always translate perfectly to performance in another. But among the tools available for evaluating candidate suitability before making a hiring decision, it is the one most firmly grounded in evidence, most reliably consistent when properly administered, and most defensible when the hiring decision is later scrutinized.

The gap between the behavioral interview as it is designed to work and the behavioral interview as it actually functions in most organizations is not a gap in theory. It is a gap in execution: in question design rigor, in rubric development, in interviewer training, and in the organizational will to apply a structured process consistently rather than defaulting to the conversational comfort of an unstructured chat.

In 2026, closing that execution gap is not just a best practice. It is a competitive advantage. The organizations that hire from evidence rather than impression make fewer mistakes, recover faster from the ones they do make, and build interviewing cultures in which candidates feel genuinely assessed rather than socially auditioned. That matters for quality. It also matters for equity. And it matters for the organization’s ability to defend every hire it makes to the people who funded the headcount and the people who will work alongside the hire every day.