The candidates hiring professionals feel best about after an interview are not always the most qualified. They are the most familiar. The ones who reflect back an image of competence that matches a template the interviewer did not know they were carrying.

This is hiring bias. Not malice. A systematic, often invisible set of cognitive shortcuts that cause decision-makers to consistently favor certain candidates for reasons unrelated to their ability to do the job.

Bias operates at every stage: in how job descriptions are written, which resumes survive automated and AI-assisted screening, how behavioral interviews are scored, and which offers get extended at what salary. It is not a single act. It is a pattern produced by a process not designed to prevent it.

In 2026, reducing bias is a legal obligation, an ethical imperative, and a competitive advantage. Organizations that address it access a broader applicant pool, make more accurate assessments, and build workforces that actually reflect the markets they serve.

The core metric for tracking bias at the process level is the Adverse Impact Ratio (AIR): the selection rate for a protected group divided by the selection rate for the highest-passing group at a given hiring stage. An AIR below 0.80 (the “four-fifths rule” widely referenced in EEOC guidance) indicates potential adverse impact that warrants investigation. It is a rule of thumb, not in itself a test of statistical significance.

Adverse Impact Ratio = (Selected % of protected group) ÷ (Selected % of top group)
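As a minimal sketch of the formula above, the calculation for a single hiring stage might look like the following. The function names and the applicant counts are hypothetical, chosen only to illustrate the arithmetic.

```python
def selection_rate(selected: int, applicants: int) -> float:
    """Share of a group's applicants who passed a given stage."""
    if applicants <= 0:
        raise ValueError("applicants must be positive")
    return selected / applicants


def adverse_impact_ratio(group_rate: float, reference_rate: float) -> float:
    """AIR = group's selection rate / highest-passing group's selection rate."""
    if reference_rate <= 0:
        raise ValueError("reference_rate must be positive")
    return group_rate / reference_rate


# Hypothetical counts for one stage (illustrative only, not real data).
group_rate = selection_rate(selected=30, applicants=200)      # 15% selected
reference_rate = selection_rate(selected=50, applicants=200)  # 25% selected

air = adverse_impact_ratio(group_rate, reference_rate)
print(f"AIR = {air:.2f}")  # AIR = 0.60, below the 0.80 threshold
```

An AIR of 0.60 in this made-up example would fall below the four-fifths threshold and flag the stage for review.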

What is Bias in Hiring?

Bias in hiring is a pattern of systematic deviation from merit-based candidate evaluation, in which irrelevant personal characteristics, cognitive shortcuts, or structural process failures cause employment decisions to consistently favor or disfavor candidates based on factors unconnected to job performance.

The critical word is systematic. Individual errors in judgment are inevitable. Bias in hiring refers to patterns that emerge across many decisions, made by many people, over time.

When a demographic group consistently passes resume screening at lower rates than comparable candidates, that is a bias signal. When certain interviewers consistently rate candidates from specific backgrounds lower than their colleagues rate the same candidate, that is a bias signal. When salary offers differ by gender or ethnicity after controlling for legitimate pay factors, that is a bias signal.
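The second signal, an interviewer who rates certain candidates lower than co-panelists rate the same candidate, can be approximated with a simple gap calculation. This is a rough sketch: the data layout, the names, and the 1 to 5 rubric scale are assumptions, and a gap on a handful of interviews is a prompt for review, not proof of bias.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical panel scores: (candidate_id, candidate_group, interviewer, score).
scores = [
    ("c1", "group_a", "alice", 4), ("c1", "group_a", "bob", 4), ("c1", "group_a", "chen", 4),
    ("c2", "group_b", "alice", 4), ("c2", "group_b", "bob", 2), ("c2", "group_b", "chen", 4),
    ("c3", "group_b", "alice", 3), ("c3", "group_b", "bob", 2), ("c3", "group_b", "chen", 4),
    ("c4", "group_a", "alice", 3), ("c4", "group_a", "bob", 3), ("c4", "group_a", "chen", 3),
]


def interviewer_gaps(scores):
    """Mean gap between an interviewer's score and the co-panelists' mean
    for the same candidate, keyed by (interviewer, candidate_group)."""
    by_candidate = defaultdict(list)
    for candidate, group, interviewer, score in scores:
        by_candidate[candidate].append((group, interviewer, score))

    gaps = defaultdict(list)
    for panel in by_candidate.values():
        for group, interviewer, score in panel:
            others = [s for _, other, s in panel if other != interviewer]
            if others:  # skip single-interviewer panels
                gaps[(interviewer, group)].append(score - mean(others))
    return {key: mean(values) for key, values in gaps.items()}


gaps = interviewer_gaps(scores)
for (interviewer, group), gap in sorted(gaps.items()):
    print(f"{interviewer:6s} {group}: {gap:+.2f}")
```

In this invented data, one interviewer scores group_b candidates well below co-panelists while matching them on group_a candidates, which is the kind of pattern worth a closer look.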

Bias can be explicit (conscious and deliberate) or implicit (unconscious). Both forms exist. Implicit bias is harder to detect and harder to address because it is invisible to the person exhibiting it, which is why structural process design matters more than individual awareness training in producing measurable change.

Is Your Hiring Process Selecting the Best Candidates or the Most Familiar Ones?

There is a version of bias management that involves sending the hiring team to a half-day unconscious bias workshop, feeling thoughtful about it for a week, and then returning to the same process with the same outcomes. Research consistently shows this produces minimal long-term impact. Awareness without structural change does not produce behavioral change at scale.

Organizations that actually move the needle have redesigned their processes: writing job descriptions against minimum viable requirements, implementing blind resume review, using structured behavioral interviews with standardized rubrics, and running adverse impact analysis at every funnel stage before gaps compound.

The cost of failing to do this is financial and competitive, not just ethical. A process that systematically filters out qualified candidates is not maintaining quality standards. It is shrinking the effective talent pool and hiring from the remaining fraction, under the impression that the selection was rigorous.

The evidence is consistent. Resume audit studies have found callback rate differentials of 30–50% based on name alone. Studies on gender and interview assessment have found identical verbal responses rated differently based on perceived gender. Research on educational prestige has found hiring managers in elite firms can distinguish between target and non-target university candidates in under six seconds, and that this distinction drives more of the screening decision than any specific qualification.

The talent being filtered out is not marginal. In many cases, it is the strongest candidate in the pool, who happened to trigger a cognitive shortcut in the reviewer.

The reframe for TA leaders: bias in hiring is not a diversity program. It is a quality problem. A process contaminated by bias produces systematically inaccurate assessments of candidate quality. Debiasing it does not lower the bar; it raises the accuracy of the assessment that determines who clears it.

Types of Bias in Hiring

Bias in hiring is not a single phenomenon. It operates through distinct cognitive and structural mechanisms, each requiring a specific design response.

Affinity Bias

The most pervasive and consequential form of hiring bias. Affinity bias is the tendency to favor candidates who share characteristics, backgrounds, interests, or experiences with the evaluator. It is the hiring manager who gravitates toward the candidate who attended their university, the recruiter who connects most easily with someone from their hometown, the panel who ranks highest the candidate whose communication style matches the team’s existing register. Affinity bias feels like good judgment because the familiarity it generates is experienced as competence recognition. It is not. It is pattern matching against a template that happens to be the evaluator themselves.

Confirmation Bias

Once a hiring decision-maker forms an initial impression of a candidate, confirmation bias causes them to seek evidence that confirms it and discount evidence that contradicts it. A candidate who makes a strong first impression receives the benefit of the doubt on ambiguous responses. A candidate who stumbles early is interpreted unfavorably throughout regardless of subsequent performance. This is why panel interviewers should not share scores until each has rated independently, and why behavioral rubrics requiring evidence-based justification for every score produce more accurate results than holistic impression ratings.

Halo and Horn Effects

The halo effect occurs when one strong positive quality creates a generalized positive assessment across all dimensions. The horn effect is the inverse. Both are particularly destructive in unstructured interviews, where evaluators have latitude to construct a narrative around an initial impression rather than evaluating each competency independently against a defined standard. A candidate who is exceptionally well-presented may receive high scores on problem-solving and leadership that reflect their appearance rather than their capabilities.

Attribution Bias

Attribution bias refers to the tendency to explain the same behavior or outcome differently depending on who is exhibiting it. A candidate who describes a project failure is “self-aware and growth-oriented” if they fit the evaluator’s prototype and “unreliable” if they do not. A candidate who advocates assertively for their perspective is “confident and decisive” in one demographic context and “aggressive” in another. The behavioral evidence is identical. The interpretation is where bias enters.

Recency Bias

Candidates interviewed most recently are disproportionately salient in evaluators’ memories when the hiring decision is made. This produces systematically unfair outcomes for candidates interviewed early in the process. The structural counter is scoring each candidate immediately after the interview, before the next interview begins, and using those contemporaneous scores rather than end-of-process impressions as the primary evaluation data.

Structural Bias

Beyond individual cognitive biases, hiring processes embed structural bias through design choices. A job description requiring ten years of experience for a role where five years of demonstrated competence would suffice structurally excludes candidates with non-linear career paths or caregiving gaps. A resume screening process weighting educational prestige structurally disadvantages candidates from non-target institutions regardless of capability. Each of these design choices produces adverse impact on specific demographic groups, often without any individual decision-maker intending to discriminate.

Bias in Hiring vs. Related Concepts

Understanding where bias fits within the broader landscape of hiring quality and equity:

| Concept | Definition | Relationship to Bias in Hiring |
|---|---|---|
| Adverse Impact | Statistically significant difference in selection rates across demographic groups | The measurable outcome of bias in the hiring process |
| Discrimination | Unlawful differential treatment based on protected characteristics | Bias can produce discrimination; not all bias is legally actionable |
| Diversity | Representation of varied demographic groups in the workforce | Bias reduction is a primary mechanism for improving diversity |
| Structured Interviewing | Standardized questions, consistent sequencing, rubric-based scoring | A direct structural counter to multiple forms of interviewer bias |
| Pay Equity | Equivalent compensation for equivalent work across demographic groups | Bias in compensation decisions is a specific manifestation of hiring and promotion bias |
| Affirmative Action | Policy-based preference for underrepresented groups to correct historical imbalance | Distinct from bias reduction; addresses outcome rather than process |

The important distinction in this table is between adverse impact and discrimination. Adverse impact is a statistical observation about hiring outcomes. It does not automatically indicate illegal discrimination, but it does indicate a process that requires investigation to determine whether the cause is a legitimate, job-relevant screening criterion or a bias-contaminated evaluation standard.

What the Experts Say

Bias in hiring is not primarily a character problem. It is a design problem. The organizations that reduce it are not the ones who hire more virtuous people. They are the ones who build processes that do not require virtue to produce fair outcomes.

– Iris Bohnet, Professor of Public Policy at Harvard Kennedy School and author of “What Works: Gender Equality by Design”

How to Measure Bias in the Hiring Process?

Measuring bias requires tracking selection rates across demographic groups at each stage of the hiring funnel, not just at the offer stage. A bias signal that appears at the resume screening stage will be invisible in end-of-process diversity data if the affected groups are not reaching the interview stage in sufficient numbers to show up in later-stage analysis.

Funnel-Stage Adverse Impact Analysis

The adverse impact ratio should be calculated at each of the following transition points: application to resume screen pass, resume screen pass to interview invitation, interview invitation to interview completion, interview completion to shortlist, shortlist to offer, and offer to acceptance. A ratio below 0.80 at any stage triggers investigation of that stage’s screening criteria and process.
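The stage-by-stage calculation described above can be sketched as follows. The stage labels, group labels, and counts are hypothetical placeholders; a real analysis would pull these from ATS data and would also consider sample size before drawing conclusions.

```python
THRESHOLD = 0.80  # four-fifths rule of thumb

# Funnel stages matching the transition points listed above.
STAGES = ["application", "screen_pass", "interview_invite",
          "interview_complete", "shortlist", "offer", "acceptance"]

# Hypothetical counts of candidates remaining at each stage, per group.
funnel = {
    "group_a": [1000, 400, 300, 280, 100, 40, 32],
    "group_b": [800, 240, 180, 170, 60, 24, 19],
}


def transition_rates(counts):
    """Pass rate for each transition: count at stage i+1 / count at stage i."""
    return [nxt / cur if cur else 0.0 for cur, nxt in zip(counts, counts[1:])]


def funnel_air(funnel):
    """Per transition, each group's AIR relative to the highest-passing group."""
    rates = {group: transition_rates(counts) for group, counts in funnel.items()}
    report = []
    for i in range(len(STAGES) - 1):
        top = max(r[i] for r in rates.values())
        report.append({g: (r[i] / top if top else float("nan"))
                       for g, r in rates.items()})
    return report


report = funnel_air(funnel)
flagged = [i for i, airs in enumerate(report)
           if any(a < THRESHOLD for a in airs.values())]

for i, airs in enumerate(report):
    status = "INVESTIGATE" if i in flagged else "ok"
    print(f"{STAGES[i]} -> {STAGES[i + 1]}:",
          {g: round(a, 2) for g, a in airs.items()}, status)
```

In this invented data set only the application-to-screen transition falls below 0.80, and every later stage sits near parity. That is the situation the section warns about: an early-stage gap that end-of-process numbers alone would not reveal.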

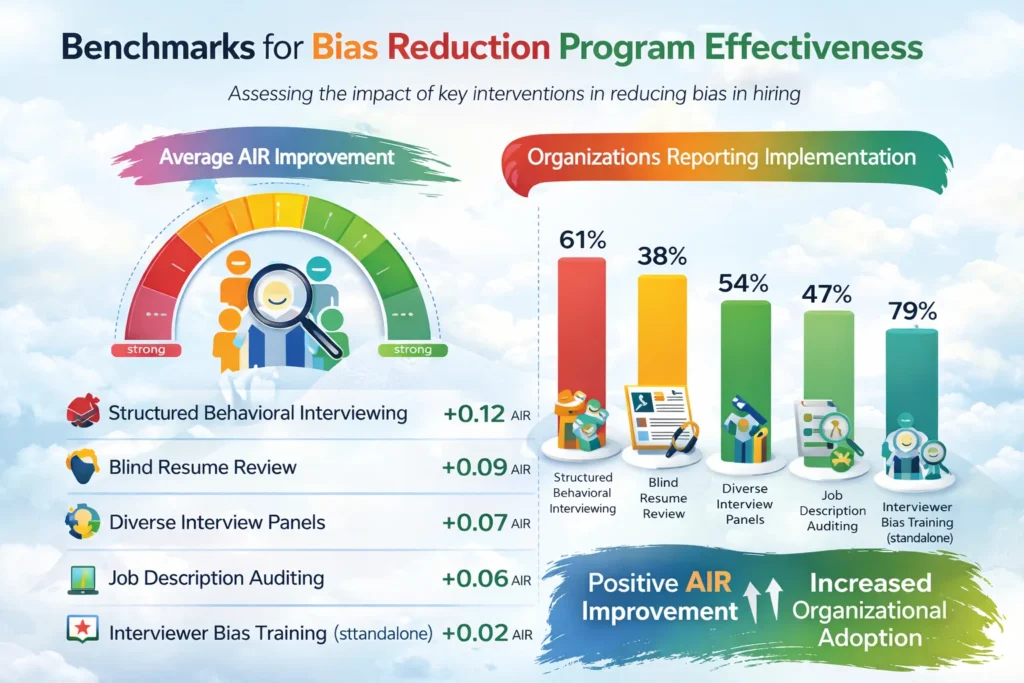

Benchmarks for Bias Reduction Program Effectiveness (2026)

| Intervention | Average AIR Improvement | Organizations Reporting Implementation |
|---|---|---|
| Structured Behavioral Interviewing | +0.12 AIR | 61% |
| Blind Resume Review | +0.09 AIR | 38% |
| Diverse Interview Panels | +0.07 AIR | 54% |
| Job Description Auditing | +0.06 AIR | 47% |
| Interviewer Bias Training (standalone) | +0.02 AIR | 79% |

The table is instructive on two levels. First, the structural interventions (structured interviewing, blind review, job description auditing) consistently outperform the awareness-only intervention (training) in producing measurable AIR improvement. Second, the most commonly implemented intervention (training, at 79%) is the one with the smallest measurable effect. Organizations are investing most heavily in the intervention that moves the metric least.

Key Strategies for Reducing Bias in the Hiring Process

How Do AI and Technology Interact with Bias in Hiring?

The relationship between AI and bias in hiring is the most important and most frequently misunderstood dimension of automated recruitment technology in 2026. AI does not inherently reduce bias. Depending on how it is designed, trained, and deployed, it can amplify and scale bias with efficiency and an appearance of objectivity that makes the resulting inequity harder to detect and harder to challenge.

When AI Amplifies Bias

AI hiring tools trained on historical hiring data from organizations with biased selection processes reproduce and scale those biases. If the historical data reflects a pattern of preferring candidates from certain universities, the model learns to prefer those universities. The appearance of algorithmic objectivity can make the resulting bias more difficult to challenge because candidates, regulators, and even the organization’s own employees may assume that an AI-generated score is inherently fairer than a human evaluation. It is not, unless the model has been specifically designed to prevent the reproduction of biased historical patterns.

When AI Reduces Bias

AI tools designed with explicit bias reduction goals can outperform human reviewers on specific equity dimensions, particularly in high-volume early-funnel processing where human fatigue and inconsistency are significant bias amplifiers. AI resume screening tools that evaluate candidates against defined, job-relevant competency signals rather than credential proxies can surface qualified candidates that keyword-based or human-reviewed processes would filter out. The critical design distinction is what the AI is trained to optimize: a model trained to predict who gets hired reproduces human bias; a model trained to predict who performs well in the role produces a meaningfully different and more equitable result.

Auditing AI Systems for Adverse Impact

Every AI-assisted hiring tool should be subject to regular adverse impact analysis: does the tool’s output produce an adverse impact ratio below the 0.80 threshold for any demographic group? This analysis should be conducted before deployment, repeated at regular intervals during deployment, and repeated after any significant model change. Organizations that have purchased AI hiring tools from third-party vendors should contractually require this audit data and conduct their own independent validation rather than relying solely on vendor-provided assurances.
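One way such an audit could be run on a tool's logged decisions is sketched below. The record format of (group label, pass/fail) and the function name `audit_tool_output` are assumptions for illustration, not any vendor's API, and the data is invented.

```python
from collections import Counter


def audit_tool_output(records, threshold=0.80):
    """records: iterable of (group_label, passed) pairs from the tool's decisions.
    Returns per-group selection rate, AIR against the highest-passing group,
    and whether the group falls below the threshold."""
    totals, passes = Counter(), Counter()
    for group, passed in records:
        totals[group] += 1
        if passed:
            passes[group] += 1
    if not totals:
        raise ValueError("no records to audit")

    rates = {group: passes[group] / totals[group] for group in totals}
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group has any passing candidates")

    return {
        group: {"rate": rate, "air": rate / top, "flagged": rate / top < threshold}
        for group, rate in rates.items()
    }


# Hypothetical decision log: 100 candidates per group (illustrative only).
records = (
    [("group_x", True)] * 45 + [("group_x", False)] * 55
    + [("group_y", True)] * 30 + [("group_y", False)] * 70
)

result = audit_tool_output(records)
for group, stats in result.items():
    print(group, f"rate={stats['rate']:.2f}", f"air={stats['air']:.2f}",
          "FLAGGED" if stats["flagged"] else "ok")
```

Running the same function on pre-deployment test data, on periodic production samples, and after each model change gives comparable numbers across the three audit points.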

Bias in Hiring and Legal Compliance

Bias in hiring intersects with employment law in ways that create genuine legal exposure for organizations that do not manage it actively.

Disparate Treatment and Disparate Impact

US employment discrimination law recognizes two distinct liability theories. Disparate treatment occurs when an employer intentionally treats candidates differently based on a protected characteristic. Disparate impact occurs when a facially neutral employment practice has a disproportionate adverse effect on a protected group and cannot be justified by business necessity. Bias in hiring can produce liability under either theory: explicit bias produces disparate treatment, and structural process bias that creates adverse impact can produce disparate impact liability even without discriminatory intent.

The Business Necessity Defense

When an employment practice produces adverse impact, the employer can defend it by demonstrating the practice is job-related and consistent with business necessity. This defense requires evidence that the screening criterion genuinely predicts job performance, not merely that the employer believes it does. Organizations that have validated their screening criteria through job analysis and criterion validity studies are in a substantially stronger legal position than those applying unvalidated criteria on the basis of intuition or tradition.

Documentation and Audit Trails

Organizations that maintain documented adverse impact analysis, record the legitimate basis for each screening criterion, and can demonstrate consistent application of those criteria across all candidates are significantly better positioned in regulatory investigations and litigation than those operating informally. Documentation is not primarily a legal defensive measure. It is the infrastructure of a well-designed hiring process. The legal protection is a byproduct of operational quality.

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| Hiring Managers Resisting Structured Processes | Frame structure as accuracy-enhancing rather than constraint-imposing; use post-hire data to demonstrate the quality improvement it produces |
| Identifying Bias When Outcomes Look Diverse | Run adverse impact analysis at every funnel stage, not just at the offer stage; diversity at the offer stage can mask significant bias at earlier stages |
| AI Tools Producing Biased Outputs | Require vendors to provide adverse impact data; conduct independent validation; audit AI outputs quarterly against demographic pass-rate data |
| Affinity Bias in “Culture Fit” Assessments | Replace “culture fit” with “culture add” criteria defined by specific, observable behavioral standards; require evidence-based justification for culture fit assessments |
| Structural Bias in Job Requirements | Audit job descriptions against minimum viable requirements; question every requirement that cannot be directly traced to a specific job performance need |

Real-World Case Studies

Case Study 1: The Professional Services Firm

A firm noticed strong entry-level diversity but significant attrition of underrepresented talent between years two and five. Investigation found that performance ratings for underrepresented employees more frequently used subjective language (“lacks executive presence”), while majority-group employees with equivalent outputs more often received specific accomplishment-based feedback. The firm redesigned its review template to require behavioral evidence for every rating, eliminated “executive presence” as a criterion, and implemented rating calibration sessions. Within two years, the attrition gap in years two through five narrowed by 41%.

Case Study 2: The Technology Company

A technology company ran an AI resume screening tool for two hiring cycles before conducting its first adverse impact analysis. Female candidates were passing the screen at an AIR of 0.64 for software engineering roles, well below the 0.80 threshold. The model had been trained on historical hiring data reflecting a pattern of primarily hiring male engineers and had learned to weight signals that correlated with gender. The company suspended the tool, audited the training data, and rebuilt the model against post-hire performance data rather than historical hiring decisions. After redeployment, the AIR improved to 0.91 within two hiring cycles.

Case Study 3: The Healthcare Provider

A healthcare provider struggled to hire diverse candidates into clinical leadership despite a diverse pipeline at the clinical staff level. Adverse impact analysis revealed an AIR of 0.58 for underrepresented candidates at the final interview stage, after those candidates had advanced through earlier stages at near-parity rates. The final-stage panel was demographically homogeneous and unstructured. The organization implemented structured behavioral interviews, required panel diversity with at least two members from underrepresented groups, and introduced independent scoring before group discussion. The final-stage AIR improved from 0.58 to 0.84 within three hiring cycles, with no measurable change in 12-month performance ratings of leaders hired through the redesigned process.

Building a Bias Monitoring Dashboard: What to Track?

A bias-reduction program without measurement infrastructure is a process masquerading as a system. Six metrics form the core of a useful bias monitoring dashboard:

Bias in Hiring Across the Recruitment Funnel

Job Description and Sourcing

Bias enters the funnel before a single application is reviewed, through the language and requirements of the job description and through the sourcing channels used to generate the pipeline. Job descriptions with excessive requirements, gendered language, or credential inflation restrict the diversity of the applying pool before the process has started. Sourcing strategies that rely exclusively on employee referrals or specific university relationships replicate the demographic profile of the current workforce rather than expanding it.

Resume Screening

The most extensively studied point of bias in hiring. Resume-based screening is vulnerable to name-based bias, institutional prestige bias, and career linearity bias that disadvantages candidates with gaps, career changes, or non-traditional trajectories. Structural interventions include blind review, structured screening criteria applied consistently, and AI-assisted screening tools trained on performance data rather than historical hire data.

Interviewing

The most psychologically complex bias environment in the hiring process. Affinity bias, confirmation bias, halo and horn effects, attribution bias, and recency bias operate simultaneously in the live interview setting. Structural interventions include standardized behavioral questions, anchored scoring rubrics, independent scoring before group discussion, and diverse panels whose varied implicit templates partially offset each other in the consensus process.

Offer and Negotiation

Bias at the offer stage most frequently manifests in three ways: differential offer levels for equivalent candidates, differential salary outcomes driven by differences in how often candidates from different demographic groups negotiate, and differential speed and quality of follow-up communication that influences offer acceptance rates for underrepresented candidates. Structured offer processes with documented, market-calibrated starting points reviewed for equity before extension are the primary structural counter.

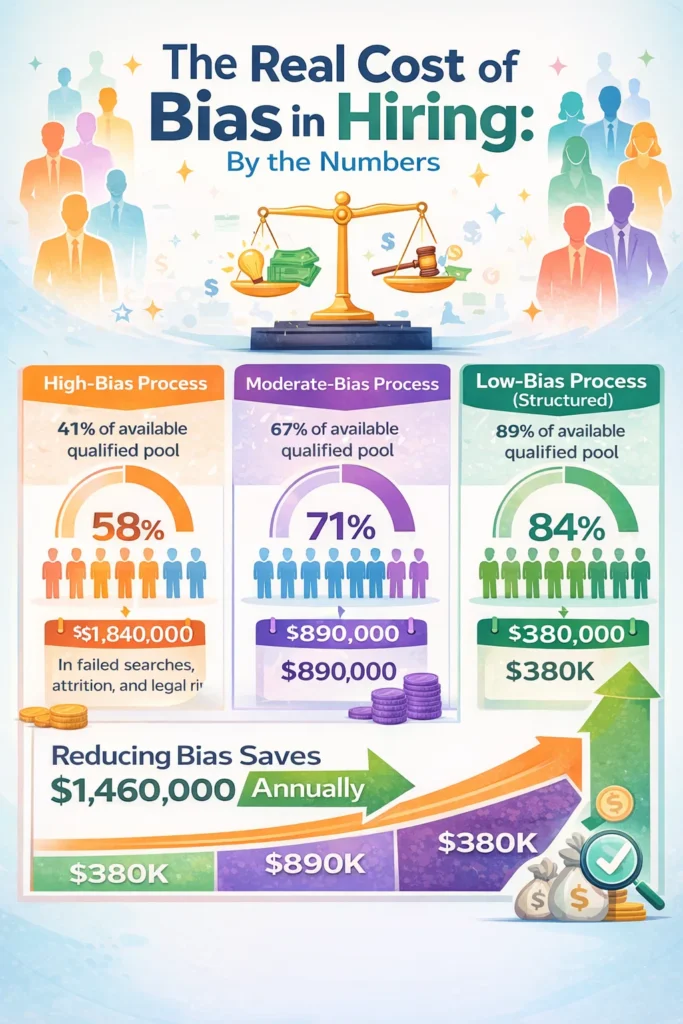

The Real Cost of Bias in Hiring: By the Numbers

| Scenario | Effective Qualified Pool Accessed | Offer Acceptance Rate | Estimated Annual Cost (100 hires) |
|---|---|---|---|
| High-Bias Process | 41% of available qualified pool | 58% | $1,840,000 in failed searches, attrition, and legal risk |
| Moderate-Bias Process | 67% of available qualified pool | 71% | $890,000 |
| Low-Bias Process (Structured) | 89% of available qualified pool | 84% | $380,000 |

The cost calculation includes failed search cost at $8,000 per failed offer process, first-year attrition from poor role fit at $30,000 per departure, and a pro-rated allocation of legal and reputational risk exposure from adverse impact patterns that are eventually investigated or litigated. The legal risk cost is the most variable and most difficult to estimate, but EEOC investigations and settlements in employment discrimination cases routinely reach six to seven figures for organizations without documented bias-reduction processes in place.

Related Terms

| Term | Definition |
|---|---|
| Adverse Impact | A statistically significant difference in selection rates between demographic groups at any stage of the hiring process |
| Affinity Bias | The tendency to favor candidates who share characteristics with the evaluator |
| Implicit Bias | Unconscious attitudes or stereotypes that influence decision-making without the evaluator’s awareness |
| Structured Interview | A standardized interview format using consistent questions, sequencing, and rubric-based scoring, designed to reduce evaluator bias |
| Diverse Slate | A policy requiring that the candidate pool presented for interview or offer consideration includes candidates from underrepresented groups |
| Disparate Impact | The legal theory under which a facially neutral employment practice that disproportionately affects a protected group can constitute unlawful discrimination |

Frequently Asked Questions

Can bias in hiring be eliminated entirely?

No. Bias is a feature of human cognition, not a defect that can be debugged out of a person. The goal is systematic mitigation: designing processes that reduce the influence of irrelevant factors on hiring decisions to the greatest degree possible, and measuring outcomes to track progress and identify remaining gaps. The difference between a high-bias and low-bias hiring process is not the presence or absence of cognitive shortcuts in individuals. It is the degree to which the process design prevents those shortcuts from determining the outcome.

Is unconscious bias training effective?

When used as the primary or only intervention, research consistently shows it produces minimal long-term change in hiring outcomes. Training without structural change is insufficient. The most effective bias-reduction programs use training to create shared conceptual language, then implement structural changes (standardized questions, rubric scoring, adverse impact tracking) that do not depend on individual virtue to produce equitable outcomes.

What is the four-fifths rule?

A heuristic referenced in EEOC guidance stating that a selection rate for any protected group that is less than 80% of the selection rate for the highest-passing group indicates potential adverse impact warranting investigation. It is not an absolute legal standard, and statistical significance of the difference also matters in formal analysis. An AIR below 0.80 should trigger a stage-level review, not necessarily a conclusion of illegal discrimination.
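To illustrate why statistical significance matters alongside the 0.80 heuristic, here is a rough sketch using a two-proportion z-test with a pooled standard error. This is one common choice of test, not a method prescribed by the guidance; the counts are hypothetical, and with very small samples an exact test would usually be preferred.

```python
import math


def two_proportion_z_test(sel_a, n_a, sel_b, n_b):
    """Two-sided z-test for a difference between two selection rates."""
    p_a, p_b = sel_a / n_a, sel_b / n_b
    pooled = (sel_a + sel_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0 or all 1")
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Same AIR (0.30 / 0.50 = 0.60) at two very different sample sizes.
z_small, p_small = two_proportion_z_test(3, 10, 5, 10)
z_large, p_large = two_proportion_z_test(300, 1000, 500, 1000)

print(f"small sample: z={z_small:.2f}, significant at 0.05: {p_small < 0.05}")
print(f"large sample: z={z_large:.2f}, significant at 0.05: {p_large < 0.05}")
```

Both hypothetical samples have an AIR of 0.60, but only the larger one is statistically distinguishable from chance at the 0.05 level, which is why a low AIR is a trigger for review rather than a conclusion.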

How should organizations handle the tension between diversity goals and merit-based hiring?

This tension is largely a false framing. Bias-contaminated hiring is not merit-based. It is familiar-face-based. When organizations reduce bias in their hiring processes, the definition of qualified candidates typically expands rather than contracts, because the process is now assessing merit rather than resemblance to existing employees. Redefining quality as demonstrated capacity to perform the role, and designing the assessment to measure that specifically, resolves the tension in most cases.

What should a candidate do if they believe they have experienced bias in a hiring process?

Candidates who believe they experienced discriminatory treatment have legal recourse in most jurisdictions. In the United States, complaints can be filed with the EEOC or the relevant state fair employment agency. Candidates should document relevant interactions contemporaneously, note specific statements or patterns suggesting discriminatory motivation, and consult with an employment attorney if the evidence is substantial.

Conclusion

Bias in hiring is not a problem at the edges of the talent acquisition function. It is a problem at its center. It determines who gets considered, who gets assessed fairly, who gets an offer, and at what price. It shapes the workforce that gets built, the perspectives that get included, and the performance that results. And it does all of this largely invisibly, under the cover of decisions that feel like judgment and look like meritocracy.

The organizations that take bias seriously enough to design against it are not doing so primarily because the law requires it, though in many jurisdictions it does. They are doing so because a hiring process that is contaminated by irrelevant factors is not a rigorous hiring process. It is a process that is wrong, consistently and systematically, in ways that cost the organization talent, money, and competitive position.

The path forward is not complicated. It requires honest measurement of where bias is operating in the funnel, structural process design that reduces the influence of irrelevant factors, regular auditing of outcomes across demographic groups, and the organizational will to act on what the data shows rather than explaining it away.

None of this requires perfect people. It requires better processes. The distinction is everything.