Interviews are notoriously subjective: two people can meet the same candidate and walk away with opposite opinions. Competency-based interviewing addresses this by shifting the focus from vague impressions to an evidence-based framework. While a Behavioral Interview provides the questioning technique, the competency framework defines exactly what is being measured.

This structure is the most effective way to combat Bias in Hiring, ensuring every member of your Applicant Pool is judged against consistent, role-relevant standards. By 2026, AI Hiring tools have made this discipline accessible at scale, automating competency mapping and rubric calibration across global panels. This disciplined approach doesn’t just improve hiring quality; it elevates the Candidate Experience by replacing “gut feel” with a fair, professional, and transparent evaluation process.

When every interviewer is measuring the same thing, the final decision becomes data-driven rather than opinion-based.

The primary metric validating competency-based interview quality is Inter-Rater Reliability (IRR), measured using Cohen’s kappa:

Cohen’s kappa = (Observed Agreement − Expected Agreement) ÷ (1 − Expected Agreement)

A kappa above 0.61 indicates substantial assessor agreement and suggests the competency framework and rubrics are specific enough for consistent application. A kappa below 0.40 signals that assessors are effectively measuring different things, which undermines the entire purpose of the structured approach.
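As a worked illustration with hypothetical numbers (not drawn from any dataset in this article), suppose two interviewers give the same rating on 80% of responses, and their rating distributions imply that chance alone would produce 50% agreement:

$$\kappa = \frac{0.80 - 0.50}{1 - 0.50} = \frac{0.30}{0.50} = 0.60$$

Raw agreement of 80% looks strong, yet the chance-corrected value lands just under the 0.61 threshold. That gap is why kappa, rather than simple percentage agreement, is the more informative check.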

What is a Competency-Based Interview?

A competency-based interview is a structured hiring conversation organized around a pre-defined competency framework for the role, in which each interview question is explicitly mapped to a specific competency, each response is evaluated against a standardized behavioral rubric, and assessment scores are aggregated across competencies to produce a comparable, defensible candidate evaluation.

Three elements separate a genuinely competency-based interview from an interview that merely uses behavioral questions:

The first is the competency framework itself: a defined, role-specific list of the capabilities and behaviors that predict success in the position. This framework is built before candidates are evaluated, not inferred from the strongest candidates after the fact.

The second is question mapping: every question in the interview guide traces explicitly to a specific competency. The interviewer is not improvising in the room. They are working from a structured guide where each question has a clear purpose.

The third is rubric-based scoring: evaluators do not walk away with an overall impression. They score each competency independently against anchored descriptors that define what a strong, adequate, and weak response looks like in observable behavioral terms.

Together, these three elements transform the interview from a conversation that produces impressions into one that produces data.

Is Your Interview Actually Measuring What Matters?

Most interviewers believe they are good judges of candidates. Most are not, or at least not as consistently as they think. The research on unstructured interview reliability is fairly discouraging: without structured questions and rubrics, two interviewers assessing the same candidate independently typically agree on their overall evaluation only about 50% of the time. That is barely better than chance, and it means that whether a candidate gets hired frequently depends more on which interviewer they happened to get than on whether they are actually qualified for the role.

The fix is not finding better interviewers. It is building a better process.

Competency-based interviewing addresses the reliability problem at its source by giving all interviewers the same questions to ask and the same framework to score against. When two interviewers disagree on a score, it is now a diagnosable problem: either the rubric needs more specific anchors, the interviewers need calibration, or the candidate truly produced ambiguous evidence. Any of those conclusions is more useful than “we just have different opinions.”

Organizations using rigorously implemented competency-based interview programs report 41% higher inter-rater reliability, 29% better prediction of 12-month performance ratings, and significantly lower first-year attrition compared to those using unstructured interview formats. These are not marginal improvements. They reflect the fundamental difference between a systematic evidence collection process and an unstructured conversation that produces whatever the interviewer happened to notice.

For talent acquisition leaders, the competency framework is also one of the most powerful tools for alignment between recruiters and hiring managers. When both parties explicitly agree on which competencies the interview will assess, what evidence would constitute a strong demonstration of each, and how decisions will be made from the resulting scores, the shortlist disagreements that derail hiring processes and extend time-to-fill are dramatically reduced. The competency framework does not just improve interview quality. It creates shared language for a conversation that is otherwise conducted with incompatible mental models.

Here is a scenario that plays out more often than most organizations would like to admit. A software company is filling a senior engineering lead role. Three finalists go through final-round interviews with a panel of five: a recruiter, two engineering managers, a VP of Product, and the CTO. After the interviews, the panel convenes. The two engineering managers favor Candidate B for their technical depth. The VP of Product favors Candidate C for their communication style with non-technical stakeholders. The CTO is drawn to Candidate A for the strategic thinking they displayed in one specific exchange. The recruiter, who does not have a technical opinion, defers to whoever speaks most confidently.

Nobody is wrong, exactly. But they were not evaluating the same things. There was no agreed competency framework, no shared question set, and no scoring rubric. The decision is going to be made on the basis of whoever argues most persuasively in the debrief, which is not the same as whoever is most qualified.

A competency-based process would have defined three to five critical competencies for this role before any candidate was interviewed, assigned each panel member to assess specific competencies in their designated interview, collected independent rubric scores before the debrief, and structured the debrief around comparing evidence rather than exchanging impressions. The outcome might have been the same. But the process would have been reproducible, defensible, and genuinely comparative.

Building a Competency Framework

The competency framework is the foundation of everything that follows in a competency-based interview process. It is worth investing time here, because a poorly constructed framework produces a well-executed assessment of the wrong things.

Defining Role-Relevant Competencies

Competencies are not job requirements. A job requirement is a credential or qualification: a degree, a certification, years of experience. A competency is an observable behavior pattern that predicts performance: problem-solving under ambiguity, stakeholder influence without authority, team performance improvement.

The best competency frameworks are built from two sources. The first is job analysis: a structured examination of the role’s actual demands, the situations the person will regularly face, and the behavioral patterns that research and organizational experience suggest produce success in those situations. The second is top-performer analysis: research into the specific behavioral patterns that distinguish the organization’s highest performers in the role from those who are adequate, producing a competency model grounded in internal evidence rather than generic best-practice frameworks.

A competency framework for a given role typically includes four to eight competencies. Fewer than four risks missing critical dimensions. More than eight creates an interview that is too long to execute well and produces assessor fatigue that degrades scoring quality.

Competency Levels and Behavioral Anchors

Not all competencies look the same at every level of the organization. Strategic thinking at an individual contributor level manifests as structured problem decomposition and logical prioritization. At a senior leadership level it manifests as systems-level awareness, cross-functional consequence evaluation, and long-horizon decision-making. The competency framework should specify not just what the competency is but what it looks like at the level being assessed.

Behavioral anchors translate this specification into observable, evaluatable terms. A behavioral anchor for “commercial awareness” at a sales manager level might look like: demonstrates ability to identify business impact of non-commercial decisions; consistently frames team activities in terms of revenue and customer lifetime value; proactively identifies risk-adjusted opportunities in their pipeline data. These anchors give the interviewer and the rubric specific behavioral indicators to look for, rather than requiring evaluators to infer what “commercial awareness” means under the pressure of an active interview.

Weighting Competencies

Not all competencies are equally important for every role. A sales role might weight client relationship management and commercial orientation most heavily, with analytical problem-solving as a secondary consideration. A data engineering role might invert that weighting entirely. Making these weighting decisions explicit before the interview process begins ensures that the aggregate candidate score reflects the organization’s actual priorities rather than the averaging of equally weighted assessments across unequally important dimensions.
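The effect of explicit weighting can be sketched in a few lines. This is a minimal illustration, assuming a 1 to 5 rubric and hypothetical weights for a sales role; the competency names, weights, and scores are invented for the example and are not prescribed by any particular framework.

```python
def weighted_score(scores, weights):
    """Weighted average of per-competency rubric scores."""
    if set(scores) != set(weights):
        raise ValueError("Scores and weights must cover the same competencies")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("Weights must sum to a positive number")
    return sum(scores[c] * weights[c] for c in scores) / total_weight


# Hypothetical weights for a sales role, agreed before any interview takes place.
sales_weights = {
    "client_relationship_management": 40,
    "commercial_orientation": 40,
    "analytical_problem_solving": 20,
}

# Hypothetical candidate: strong analyst, middling on the two priority competencies.
candidate = {
    "client_relationship_management": 3,
    "commercial_orientation": 3,
    "analytical_problem_solving": 5,
}

unweighted = sum(candidate.values()) / len(candidate)  # treats all three as equal
weighted = weighted_score(candidate, sales_weights)    # reflects stated priorities
```

With these numbers the equal-weight average is about 3.67 while the weighted score is 3.4, so a high score on a secondary competency no longer masks an average showing on the ones the role depends on.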

Competency-Based Interview vs. Related Interview Formats

| Format | Question Structure | What It Measures | Primary Differentiator |
|---|---|---|---|
| Competency-Based Interview | Mapped to framework; rubric-scored | Specific, role-relevant competencies | Framework organization and systematic scoring |
| Behavioral Interview | Past experience questions | General behavioral patterns | Questioning technique rather than framework structure |
| Situational Interview | Hypothetical scenario questions | Judgment in defined situations | Future-oriented rather than evidence-based |
| Structured Interview | Standardized questions for all candidates | Consistent, comparable candidate data | Consistency without necessarily being competency-mapped |
| Unstructured Interview | Conversational, interviewer-led | Whatever the interviewer happens to notice | No systematic measurement |
| Case Interview | Real-time problem solving | Analytical process and thinking | Performance task rather than behavioral evidence |

The most important distinction in this table is between a behavioral interview and a competency-based interview. A behavioral interview is a questioning technique: it asks candidates about past experiences using the STAR framework. A competency-based interview is an organizational architecture: it structures the entire interview process around a framework of specific competencies, uses behavioral questioning as a primary tool within that structure, and collects rubric-scored data for each competency independently. All competency-based interviews use behavioral questions. Not all behavioral interviews are competency-based.

What the Experts Say

A competency framework is not a checklist. It is an agreement about what matters. When the whole team agrees on what they are looking for before they meet the candidate, the debrief becomes a conversation about evidence rather than a contest of impressions.

– Tomas Chamorro-Premuzic, Professor of Business Psychology at University College London and Columbia University, and author of “Why Do So Many Incompetent Men Become Leaders?”

How to Design a Competency-Based Interview Guide

Step 1: Map Competencies to Interview Stages

In a multi-stage interview process, different competencies should be assessed at different stages rather than attempting to cover all competencies in every interview. This prevents redundant coverage (multiple interviewers assessing the same competency without coordination) and fatigue (candidates and interviewers both performing less well as sessions extend). A typical distribution might assign technical competencies to an early specialist interview, interpersonal and collaborative competencies to a peer interview, and strategic and leadership competencies to a senior leader interview.

Step 2: Write Competency-Mapped Questions

For each competency, develop two to three behavioral questions that ask candidates to provide specific evidence from their experience. Each question should be worded to elicit a STAR-structured response (Situation, Task, Action, Result) and should be sufficiently specific that it cannot be answered with a vague generality. “Tell me about a time you influenced a decision you did not have formal authority over” is a competency-mapped question. “Tell me about your leadership style” is not.

Include follow-up probe questions that the interviewer can use when a candidate’s initial response is thin, hypothetical, or missing the Action element that is analytically most important: “That’s helpful context. Can you tell me specifically what you did next?” or “What was your individual contribution to that outcome?”
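One way to keep the guide honest is to audit it mechanically before it reaches interviewers. The sketch below assumes a simple list-of-dicts layout for the guide; the field names, the second competency, and all question wording other than the two examples quoted above are hypothetical.

```python
from collections import Counter

# Hypothetical framework and draft guide for illustration.
framework = ["stakeholder_influence", "problem_solving_under_ambiguity"]

guide = [
    {"competency": "stakeholder_influence",
     "question": "Tell me about a time you influenced a decision you did not have formal authority over.",
     "probes": ["What was your individual contribution to that outcome?"]},
    {"competency": "stakeholder_influence",
     "question": "Describe a time a key stakeholder initially rejected your proposal.",
     "probes": ["Can you tell me specifically what you did next?"]},
    {"competency": "problem_solving_under_ambiguity",
     "question": "Tell me about a problem you had to solve without a clear brief.",
     "probes": []},
    {"competency": "leadership_style",  # not in the framework
     "question": "Tell me about your leadership style.",
     "probes": []},
]


def audit_guide(framework, guide, min_questions=2, max_questions=3):
    """Return a list of human-readable problems found in the interview guide."""
    problems = []
    for item in guide:
        if item["competency"] not in framework:
            problems.append(f"Unmapped question: {item['question']}")
    counts = Counter(item["competency"] for item in guide)
    for competency in framework:
        n = counts[competency]
        if not min_questions <= n <= max_questions:
            problems.append(
                f"{competency}: {n} question(s), expected {min_questions}-{max_questions}"
            )
    return problems


problems = audit_guide(framework, guide)
```

Here the audit reports two issues: the leadership-style question traces to no competency in the framework, and the ambiguity competency has only one question. A check like this catches structural gaps only; whether a question is specific enough to elicit real evidence still needs human review.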

Step 3: Build Behaviorally Anchored Rating Scales

For each competency, develop a rating scale with three to five levels and specific behavioral descriptors at each level. The descriptors should be written in terms of observable candidate behaviors during the interview, not in terms of the ideal candidate profile.

A strong response to a stakeholder influence question at Level 4 might be described as: “Candidate provides a specific example of changing a significant decision through influence rather than authority, describes their approach in terms of understanding the stakeholder’s priorities rather than just asserting their own, and articulates the outcome and what they would do differently.” This descriptor gives the scorer a concrete reference for what a strong response looks like, reducing the subjective interpretation that produces low inter-rater reliability.
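A rating scale of this kind can be represented as a small data structure that enforces the rules described here. This is a sketch under stated assumptions: the class names are invented, the Level 4 anchor condenses the descriptor above, and the other four anchors are hypothetical placeholders a real team would write for itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RatingScale:
    """A behaviorally anchored scale for one competency."""
    competency: str
    anchors: dict  # maps level (1 = weakest) to an observable-behavior descriptor

    def __post_init__(self):
        levels = sorted(self.anchors)
        if not 3 <= len(levels) <= 5:
            raise ValueError("Use three to five levels per competency")
        if levels != list(range(1, len(levels) + 1)):
            raise ValueError("Levels must run 1..N with no gaps")
        if any(not text.strip() for text in self.anchors.values()):
            raise ValueError("Every level needs a behavioral descriptor")


@dataclass(frozen=True)
class Score:
    """One interviewer's score, which must cite evidence from the response."""
    scale: RatingScale
    level: int
    evidence: str

    def __post_init__(self):
        if self.level not in self.scale.anchors:
            raise ValueError(f"Level {self.level} is not defined on this scale")
        if not self.evidence.strip():
            raise ValueError("A score must cite what the candidate actually said")


influence_scale = RatingScale(
    competency="stakeholder_influence",
    anchors={
        1: "Gives no example, or answers hypothetically.",                   # hypothetical
        2: "Gives an example but relied on authority or escalation.",        # hypothetical
        3: "Gives a specific example of influence; approach is generic.",    # hypothetical
        4: "Specific example of changing a significant decision through influence; "
           "approach built on the stakeholder's priorities; states the outcome "
           "and what they would do differently.",
        5: "Meets Level 4 and shows the approach repeated across contexts.",  # hypothetical
    },
)

score = Score(
    scale=influence_scale,
    level=4,
    evidence="Reframed the migration plan around the finance lead's audit deadline.",
)
```

Requiring an evidence string at scoring time is a design choice: it makes an unsupported high score impossible to record rather than merely discouraged.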

Step 4: Calibrate Interviewers Before the Process Begins

Before any candidate interviews occur, the interviewing panel should participate in a calibration session: reviewing the competency framework, practicing applying the rubric to sample responses (ideally video responses that all panel members can evaluate independently and then compare), and discussing the differences in their scores to develop shared understanding of where the rubric anchors apply.

Calibration sessions that identify and resolve rubric interpretation differences before the live process consistently produce higher inter-rater reliability scores than those that assume shared understanding without verification.

Formula: Inter-Rater Reliability

$$\kappa = \frac{P_o - P_e}{1 - P_e}$$

Where $P_o$ is the observed agreement (the proportion of occasions when two assessors agree) and $P_e$ is the expected agreement (the proportion of agreements expected by chance given the rating distribution). Aim for a kappa of 0.61 or above before beginning live candidate evaluation.
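For teams that want to compute this from calibration data, the formula translates directly into code. The following is a minimal sketch of unweighted Cohen's kappa for two raters; the function name and the sample scores are hypothetical, and the 0.61 gate simply applies the target stated above.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items where both raters gave the same score.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement: chance overlap implied by each rater's score distribution.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if p_e == 1.0:
        raise ValueError("Kappa is undefined when both raters use one identical score")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical calibration data: two interviewers score ten sample responses (1-5 rubric).
interviewer_1 = [4, 3, 5, 2, 4, 3, 3, 5, 2, 4]
interviewer_2 = [4, 3, 4, 2, 4, 3, 2, 5, 2, 4]

kappa = cohens_kappa(interviewer_1, interviewer_2)
ready_for_live_interviews = kappa >= 0.61
```

In this example the raters agree on 8 of 10 responses and chance agreement works out to 0.26, giving a kappa of roughly 0.73. Note that the unweighted form treats a one-point disagreement the same as a four-point one; a weighted variant may suit ordinal rubrics better, which is a choice outside what this article specifies.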

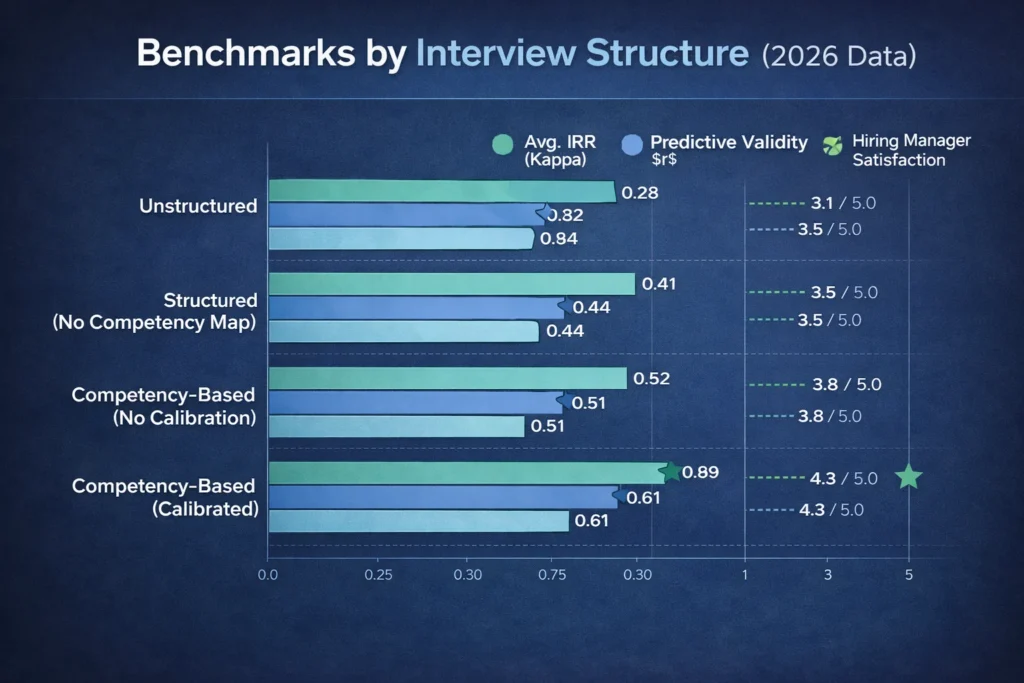

Benchmarks by Interview Structure (2026 Data)

| Interview Structure | Avg. IRR (Kappa) | Predictive Validity ($r$) | Hiring Manager Satisfaction |
|---|---|---|---|
| Unstructured | 0.28 | 0.34 | 3.1 / 5.0 |
| Structured (No Competency Map) | 0.41 | 0.44 | 3.5 / 5.0 |
| Competency-Based (No Calibration) | 0.52 | 0.51 | 3.8 / 5.0 |
| Competency-Based (Calibrated) | 0.69 | 0.61 | 4.3 / 5.0 |

The calibrated competency-based interview achieves IRR and predictive validity scores that approach the ceiling of what interview-based assessment can deliver. The jump from non-calibrated to calibrated is significant: calibration is not an optional refinement. It is what converts a competency framework into a consistently applied measurement instrument.

Key Strategies for Implementing Competency-Based Interviewing at Scale

How AI Is Transforming Competency-Based Interviewing

Automated Competency Framework Generation

Building a competency framework from scratch for every new role type is time-intensive work that most hiring teams do not have the capacity to do well. AI systems trained on job performance research, industry competency databases, and the organization’s own historical hire and performance data can generate validated competency framework drafts for new roles in minutes.

These drafts still require hiring manager review and customization, but they eliminate the blank-page problem and the generic framework copy-paste that produces misaligned assessments.

AI-Assisted Question Bank Development

Once the competency framework is defined, AI can generate a pool of behavioral questions for each competency, including follow-up probe options, phrased to elicit STAR-structured responses and calibrated to the appropriate difficulty level for the role and seniority being assessed. Recruiters and hiring managers review and select from the generated bank, which is faster and typically produces more varied, competency-precise questions than manual authorship alone.

Asynchronous Response Scoring

For competency-based interviews conducted in asynchronous video format, NLP-powered scoring tools can evaluate candidate responses against the competency rubric, identifying the presence and quality of STAR elements, the relevance of the behavioral example to the target competency, and the specificity of the evidence provided. This does not replace human judgment on qualitative dimensions but provides a structured first-pass that helps reviewers prioritize their attention on the most ambiguous or most promising responses.

Calibration Support Tools

AI tools that present all panel members with the same sample responses and collect their independent rubric scores, then automatically calculate inter-rater reliability and surface the specific scoring disagreements for discussion, make calibration sessions dramatically more efficient. Rather than requiring all panel members to be in the same room reviewing printed response transcripts, the calibration can occur asynchronously with AI-supported analysis of score variance, reducing the logistical barrier to running calibration sessions before each hiring cycle.
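The "surface the specific scoring disagreements" step does not require anything sophisticated. As a rough sketch, assuming calibration scores are stored per sample response and per interviewer (the data layout, names, and the two-point threshold are all hypothetical), the samples worth discussing can be ranked by score spread:

```python
# Hypothetical calibration results: calibration_scores[sample][interviewer] = rubric level.
calibration_scores = {
    "sample_1": {"ana": 4, "ben": 4, "chi": 4},
    "sample_2": {"ana": 2, "ben": 4, "chi": 5},
    "sample_3": {"ana": 3, "ben": 3, "chi": 4},
}


def rank_disagreements(scores, min_spread=2):
    """Return (sample, spread) pairs where panel scores differ by min_spread or more,
    largest spread first, so the calibration discussion starts with the worst cases."""
    flagged = []
    for sample, by_rater in scores.items():
        values = list(by_rater.values())
        spread = max(values) - min(values)
        if spread >= min_spread:
            flagged.append((sample, spread))
    return sorted(flagged, key=lambda row: row[1], reverse=True)


to_discuss = rank_disagreements(calibration_scores)
```

Only `sample_2` is flagged here, with a three-point spread. A one-point difference, as in `sample_3`, is left out by default on the assumption that adjacent-level disagreement is tolerable; a stricter panel could lower `min_spread` to 1.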

Competency-Based Interviewing and Diversity, Equity, and Inclusion

Competency-based interviewing is one of the most powerful structural DEI tools in the hiring process, because it directly addresses two of the most consequential sources of inequity in interview-based assessment.

Standardization Reduces Affinity Bias Leverage

Affinity bias operates most powerfully in unstructured settings where the evaluator has maximum discretion to notice and weight whatever they find most compelling. A hiring manager who is inclined toward candidates who remind them of themselves has full latitude in an unstructured interview to weight the familiarity signals they perceive as competence indicators.

In a competency-based interview with anchored rubrics, that latitude is reduced: the evaluator is required to provide behavioral evidence for their scores, and a high score that is not supported by specific candidate-provided evidence is visible as an unsupported assessment when rubric scores are reviewed.

This does not eliminate affinity bias. But it makes it harder to operationalize and easier to identify when it does occur.

Consistent Criteria Reduce “Culture Fit” as a Rejection Rationale

“Culture fit” is one of the most common and most equity-problematic reasons given for candidate rejection in interview-based hiring. It is typically described as a holistic impression that the candidate would or would not thrive in the organizational environment, and it is frequently a proxy for similarity to the existing team rather than a genuine assessment of value alignment.

Competency-based interviewing does not eliminate the culture assessment, but it forces it to be defined in behavioral terms rather than holistic impression. “Demonstrates collaborative problem-solving in cross-functional contexts” is a culture-relevant competency that can be assessed behaviorally. “Just did not seem like a good fit” is not.

Documented Evidence Creates Auditability

When a competency-based interview produces documented rubric scores with evidence summaries for each competency, the hiring decision has an audit trail. If a candidate from an underrepresented background challenges a rejection, the organization can demonstrate what competencies were assessed, what evidence each evaluator used, and how the scores compared across candidates. This auditability is both a legal protection and an equity mechanism: the knowledge that assessment decisions will be documented tends to improve the quality and fairness of those decisions.

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| Hiring Managers Not Following the Question Guide | Frame the structured guide as a tool that makes the debrief faster and the decision easier; demonstrate with data that unstructured interviews produce weaker decisions |
| Low Inter-Rater Reliability Despite Framework | Run calibration sessions before each cycle; add more specific behavioral anchors to the rubric at the levels where disagreement is highest |
| Competency Framework Outdated Relative to Role Evolution | Schedule annual framework review; update based on post-hire performance validation data |
| Candidates Giving Hypothetical Responses | Train interviewers in redirection technique; build explicit probes for past-experience specificity into the question guide |
| Panel Debrief Dominated by Senior Voice | Implement independent scoring before discussion as a non-negotiable protocol; present all scores simultaneously rather than sequentially |
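The last row of the table, independent scoring followed by simultaneous reveal, is a protocol that tooling can enforce rather than merely encourage. The class below is a hypothetical sketch of that idea: scores are locked on submission and nothing is visible until every panelist has submitted.

```python
class BlindScoreboard:
    """Holds panel scores privately until every expected panelist has submitted."""

    def __init__(self, panelists):
        self._expected = set(panelists)
        self._scores = {}

    def submit(self, panelist, scores):
        if panelist not in self._expected:
            raise ValueError(f"{panelist} is not on this panel")
        if panelist in self._scores:
            # Locking the first submission prevents revising toward a senior voice.
            raise ValueError(f"{panelist} has already submitted")
        self._scores[panelist] = dict(scores)

    def pending(self):
        return sorted(self._expected - set(self._scores))

    def reveal(self):
        if self.pending():
            raise RuntimeError(f"Still waiting on: {', '.join(self.pending())}")
        # Return copies so the debrief cannot mutate the recorded scores.
        return {p: dict(s) for p, s in self._scores.items()}


# Hypothetical three-person panel scoring one competency.
board = BlindScoreboard(["recruiter", "eng_manager", "cto"])
board.submit("recruiter", {"stakeholder_influence": 3})
board.submit("cto", {"stakeholder_influence": 5})
waiting_on = board.pending()
board.submit("eng_manager", {"stakeholder_influence": 4})
all_scores = board.reveal()
```

Calling `reveal()` before the engineering manager submits would raise an error, which is the point: no one sees the CTO's score while still deciding on their own.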

Real-World Case Studies

Case Study 1: The Financial Services Firm

A financial services firm had been experiencing a persistent 34% first-year attrition rate among relationship manager hires, despite a rigorous multi-round interview process. Post-exit analysis consistently found that the primary failure mode was poor client communication under pressure: relationship managers who presented well in interviews but struggled when client conversations became difficult or ambiguous.

A review of the interview process revealed that no structured competency covered client communication specifically. The interview relied on general behavioral questions about communication style and an unstructured conversation with the hiring manager, who typically spent time exploring the candidate’s industry knowledge.

A competency-based redesign added “managing difficult client conversations” as an explicit competency with behavioral anchors calibrated to the relationship manager level, two mapped behavioral questions requiring specific past-experience evidence, and a case component where candidates responded to a simulated difficult client situation.

In the first two hiring cycles following the redesign, first-year attrition in the relationship manager cohort dropped from 34% to 17%. Hiring manager satisfaction with new hire quality improved from 3.3 to 4.4 out of 5.0. The specific competency addition changed the composition of the hire without changing anything else in the sourcing or compensation approach.

Case Study 2: The Technology Company

A technology company conducting engineering hiring across four global locations was experiencing significant variance in hiring decisions: candidates who were rejected by one office were being hired by another for equivalent roles, and the performance data showed no pattern that would justify the disparity. Investigation revealed that each location’s engineering team had developed its own informal interview approach, with different questions, different criteria, and no shared framework.

They implemented a global competency-based interview framework for engineering roles, covering five competencies with calibrated behavioral anchors across three seniority levels, a standardized question bank per competency, and a calibration program run quarterly with all interviewers. Within two hiring cycles, inter-rater reliability across locations (measured using kappa) improved from an average of 0.31 to 0.66. The hire quality variance across locations reduced significantly, and the quarterly calibration sessions became a mechanism for sharing interview practice learnings that improved individual interviewer capability over time.

Case Study 3: The Healthcare Provider

A healthcare provider building a clinical leadership pipeline implemented a competency-based interview program after discovering that its leadership promotion decisions were producing poor outcomes: promoted leaders were consistently rated highly on clinical competencies but struggling with the strategic thinking, stakeholder management, and team development competencies that their leadership roles demanded. The promotion interview had focused almost entirely on clinical capability, which was necessary but not sufficient for the expanded scope.

The competency framework for clinical leadership roles was rebuilt to include both clinical excellence and the behavioral competencies most predictive of leadership effectiveness in the organization’s specific context. Three-level behavioral anchors were developed by interviewing the organization’s highest-rated clinical leaders about the specific situations and behaviors that had most shaped their leadership effectiveness.

In the 18 months following implementation, the proportion of newly promoted clinical leaders rated as “performing at or above expectations” at their 12-month review improved from 54% to 79%. The pipeline of individuals identified through the competency-based process as high-potential clinical leaders also diversified: the more objective, structured assessment reduced the degree to which informal sponsorship and social visibility were determining who was nominated for leadership development conversations.

Building a Competency-Based Interview Dashboard: What to Track?

Competency-Based Interviewing Across the Hiring Process

Role Design and Framework Development

The competency framework should be developed as part of role design, alongside the job description and the scoring rubric, before sourcing begins. This sequencing ensures that the sourcing strategy, the candidate persona, and the assessment framework are all aligned around the same definition of what success looks like.

Structured Interview Execution

During live interviews, the competency-based structure gives interviewers clarity about what they are there to assess and how. The question guide prevents the natural tendency to ask whatever comes to mind, and the rubric prevents the natural tendency to score on holistic impression. Both constraints make interviewers more effective without requiring them to be exceptional.

Panel Debrief and Decision Making

The panel debrief in a competency-based process is a structured evidence review rather than an open-ended impression exchange. Independent scores are shared first, agreements are noted, disagreements are discussed with reference to specific evidence from candidate responses, and the decision framework works from competency-weighted aggregate scores. The conversation is still qualitative and human, but it is grounded in a shared framework rather than competing mental models.

Onboarding and Development Planning

The competency assessment data generated during the interview process is a resource that should not end at the hire decision. Sharing aggregated competency assessment insights with the onboarding manager, specifically which competencies the new hire demonstrated at strong levels and which were assessed as development areas, enables a more targeted and more effective early-tenure development plan.

The Real Cost of Unstructured Interviewing: By the Numbers

| Interview Approach | IRR (Kappa) | First-Year Attrition | Hiring Manager Satisfaction | Est. Annual Cost (100 hires) |
|---|---|---|---|---|
| Unstructured | 0.28 | 27% | 3.1 / 5.0 | $810,000 |
| Structured (No Competency Map) | 0.41 | 21% | 3.5 / 5.0 | $630,000 |
| Competency-Based (Calibrated) | 0.69 | 13% | 4.3 / 5.0 | $390,000 |

The cost calculation includes first-year attrition replacement at $30,000 per departure, rehiring cost at $8,000 per failed hire replaced, and the estimated productivity cost of underperforming hires in the 12-month window. At 100 hires per year, the difference between unstructured interviewing and calibrated competency-based interviewing represents $420,000 in annual recoverable cost, from a process change that requires framework development and interviewer training but no additional headcount or technology.
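The arithmetic behind the table can be checked with a short script. One observation worth stating plainly: the table's cost column is reproduced exactly by the attrition-replacement component alone (departures × $30,000). How the $8,000 rehiring cost and the productivity cost enter the totals is not itemized in the figures above, so this sketch leaves them out rather than guess.

```python
HIRES_PER_YEAR = 100
REPLACEMENT_COST_PER_DEPARTURE = 30_000  # from the cost description above

# First-year attrition rates from the table.
attrition_rates = {
    "Unstructured": 0.27,
    "Structured (No Competency Map)": 0.21,
    "Competency-Based (Calibrated)": 0.13,
}


def attrition_cost(rate, hires=HIRES_PER_YEAR, cost=REPLACEMENT_COST_PER_DEPARTURE):
    """Annual replacement cost: expected departures times cost per departure."""
    departures = round(rate * hires)
    return departures * cost


annual_cost = {name: attrition_cost(rate) for name, rate in attrition_rates.items()}
recoverable = annual_cost["Unstructured"] - annual_cost["Competency-Based (Calibrated)"]
```

This yields $810,000, $630,000, and $390,000 for the three approaches and a difference of $420,000, matching the table. To adapt it, substitute your own hire volume and per-departure cost.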

Related Terms

| Term | Definition |
|---|---|
| Competency Framework | The defined set of skills, behaviors, and attributes that predict success in a given role; the foundation of competency-based interviewing |
| Behavioral Interview | A questioning technique that asks candidates about past experiences; the primary tool used within a competency-based interview structure |
| Behaviorally Anchored Rating Scale (BARS) | A scoring rubric that defines what strong, average, and weak responses look like at each competency level with specific behavioral descriptors |
| Inter-Rater Reliability (IRR) | The degree of agreement between independent assessors evaluating the same candidate; the primary quality metric for competency-based interview programs |
| STAR Framework | Situation, Task, Action, Result: the structural model for organizing behavioral interview responses and evaluating their completeness |
| Structured Interview | Any interview format that uses standardized questions and defined scoring; competency-based interviews are the most rigorous form of structured interviewing |

Frequently Asked Questions

How many competencies should a role have in its interview framework?

Four to eight competencies is the range that consistently works well in practice. Below four, the framework may miss critical performance dimensions. Above eight, the interview becomes too long, assessors experience fatigue that degrades scoring quality, and candidates find the process exhausting. Most well-designed competency frameworks for professional roles settle on five or six competencies, with one or two assessed per interview stage in a multi-stage process.

How is a competency different from a skill?

A skill is a specific technical capability: proficiency in Python, the ability to build a financial model, knowledge of regulatory frameworks. A competency is a broader behavioral pattern that predicts performance: analytical thinking, stakeholder influence, adaptive communication. Skills can be listed, taught, and tested directly. Competencies must be assessed through behavioral evidence of how a person actually approaches situations that require them. The competency framework for a role typically includes both skill-adjacent competencies (like technical problem-solving) and interpersonal behavioral competencies (like collaborative leadership).

Can competency-based interviews be used for entry-level or graduate hiring?

Yes, with adaptation. Entry-level candidates may lack extensive professional experience to draw from, so behavioral questions should explicitly allow responses from academic, volunteer, extracurricular, or part-time work contexts. The competency framework itself should reflect the behaviors that predict development potential and learning agility at the entry level rather than the fully developed competencies expected at senior levels. Many organizations use a simplified competency framework with two to three core competencies for entry-level assessment, expanding to five or more for more senior roles.

How should the competency framework handle roles that evolve rapidly?

Build framework review into the hiring cycle as a standing process step: before each new search, the hiring manager reviews the competency framework and confirms it still reflects the role’s current demands. Any competencies that have become less central to role success should be removed or deprioritized. Any new competency demands that have emerged should be added. The bi-annual performance validation cycle that compares interview scores against performance ratings will also surface competencies that are no longer predicting outcomes, signaling framework drift before it significantly affects hiring quality.
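The validation comparison described here can be sketched as a per-competency correlation between interview scores and later performance ratings. Everything in the example is hypothetical: the data, the competency names, and especially the 0.3 cut-off, which is an arbitrary illustration rather than an established standard. Real validation would also need far more than six hires to be meaningful.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length numeric lists."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("Need two equal-length lists with at least two values")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("Correlation is undefined when one list is constant")
    return cov / (spread_x * spread_y)


# Hypothetical data: interview scores for six hires, and their 12-month ratings.
interview_scores = {
    "stakeholder_influence": [2, 3, 3, 4, 5, 5],
    "change_management": [4, 2, 5, 3, 4, 2],
}
performance_ratings = [2, 3, 4, 4, 5, 5]


def drifting_competencies(scores, ratings, min_r=0.3):
    """Competencies whose interview scores no longer track performance ratings."""
    return [name for name, xs in scores.items() if pearson_r(xs, ratings) < min_r]


needs_review = drifting_competencies(interview_scores, performance_ratings)
```

In this toy dataset, stakeholder influence tracks performance closely while change management shows a slightly negative relationship, so only the latter is flagged for review. A flag is a prompt for the hiring manager to look at the competency, not proof that it should be removed.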

Should candidates be told in advance which competencies they will be assessed on?

Yes, and sharing this information consistently produces better quality responses and higher candidate satisfaction scores without inflating the scores in a way that reduces their predictive validity. Candidates who know they will be assessed on stakeholder communication, data-driven decision-making, and change management can prepare more specific, more detailed behavioral examples than those who receive generic interview preparation guidance. The interview is designed to assess their actual behavioral history, not their ability to recall it spontaneously under pressure. Transparency about what will be assessed produces richer evidence.

Conclusion

The competency-based interview is not a test with right answers. It is a structured process for gathering the specific behavioral evidence that allows a meaningful comparison between candidates and a defensible hiring decision.

What it replaces is not the human judgment in the room. It is the chaos in the room: the misaligned criteria, the unspoken disagreements, the impressions that cannot be compared because they were collected in different ways by different people looking for different things. When those problems are eliminated, the human judgment that remains is not just more consistent. It is more accurate, because it is applied to a shared framework against shared evidence rather than competing against other people’s impressions.

The organizations that have built genuine competency-based interview programs have not just improved their IRR scores. They have built interviewing cultures where the debrief is a conversation about evidence rather than a contest of opinions, where the hiring decision is something everyone can explain rather than something they have to defend, and where the candidates who get hired are consistently the ones who were most capable of doing the job, not just the most compelling in the room.

That is what the framework is for. And it works.