The interview has always been a conversation; what’s changed is the “where” and “when.” In 2026, an interview might happen in a boardroom or as an asynchronous recording made by a candidate at 9 PM. This isn’t a “lesser” version of the traditional sit-down; it’s a deliberate evolution of the candidate journey.

Digital interviews come in two flavors: synchronous (live video) and asynchronous (pre-recorded). While some see them as mere conveniences, savvy organizations use them to replace or supplement Automated Screening. By decoupling the assessment from a shared scheduling window, companies can evaluate communication and judgment more flexibly.

However, the technology only works if the design is intentional. Integrating a behavioral interview framework into these digital touchpoints ensures you’re measuring actual competency rather than just “on-camera presence.” When done right, this tech-forward approach respects the Candidate Experience by offering flexibility while providing recruiters with deeper insights than a resume ever could. The goal in 2026 isn’t just to use digital tools because they’re available, but to use them to find the human fit behind the digital screen.

The primary metric for digital interview effectiveness is the Digital Interview Conversion Rate (DICR): the proportion of digital interview completions that result in advancement to the next hiring stage.

DICR (%) = (Candidates Advancing from Digital Interview ÷ Candidates Completing Digital Interview) × 100

Tracked alongside completion rate (the proportion of candidates invited who complete the interview) and compared against the advancement rate from equivalent in-person screening stages, DICR reveals whether the digital interview format is performing as an effective screening tool or whether it is producing either too much or too little differentiation between candidates.
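The two rates above can be computed from three stage counts. The following is a minimal sketch, not a prescribed implementation: the function names and the example counts (200 invited, 150 completed, 45 advanced) are hypothetical and chosen only to illustrate the arithmetic.

```python
def rate_percent(numerator: int, denominator: int) -> float:
    """Return numerator / denominator as a percentage (0.0 when the denominator is 0)."""
    if denominator == 0:
        return 0.0
    return 100.0 * numerator / denominator


def funnel_metrics(invited: int, completed: int, advanced: int) -> dict[str, float]:
    """Compute completion rate and DICR for one digital interview stage."""
    if not (0 <= advanced <= completed <= invited):
        raise ValueError("expected 0 <= advanced <= completed <= invited")
    return {
        # Share of invited candidates who completed the interview.
        "completion_rate": rate_percent(completed, invited),
        # DICR: share of completers who advanced to the next stage.
        "dicr": rate_percent(advanced, completed),
    }


# Hypothetical counts for a single hiring cycle.
metrics = funnel_metrics(invited=200, completed=150, advanced=45)
print(metrics)
```

Keeping the denominators separate (invited for completion rate, completed for DICR) matters: dividing advancements by invitations would blend the two signals into one number.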

What is a Digital Interview?

A digital interview is a technology-mediated candidate assessment in which interview content is exchanged over a video platform, in either a live (synchronous) or pre-recorded (asynchronous) format. It enables remote evaluation that is qualitatively comparable to an in-person interview while expanding geographic reach and improving scheduling flexibility. The asynchronous format additionally enables standardized question delivery and time-shifted review.

The two primary formats differ in fundamental ways that affect how they should be used:

Synchronous Digital Interview (Live Video)

A real-time conversation conducted via a video conferencing platform. The dynamic is conversationally equivalent to an in-person interview: candidates and interviewers interact live, questions can be followed up, candidates can ask questions, and the full bandwidth of real-time conversation is available. The primary advantages over in-person are geographic flexibility and the removal of travel from scheduling. The primary limitation is that both parties still need a shared time slot, so it retains the calendar-coordination complexity of the in-person format, and it is subject to the same unstructured assessment risks when not combined with a standardized question framework.

Asynchronous Digital Interview (Pre-Recorded)

A format in which candidates record video responses to pre-set questions within a defined time window, without a live interviewer present. Candidates receive the questions, record their responses (typically with a limited number of takes and a time limit per response), and submit the recording for asynchronous review.

The primary advantages are scheduling independence (candidates complete the interview when convenient, reviewers watch when convenient), standardized question delivery (all candidates receive identical questions in identical format), and the ability to evaluate a larger candidate volume without proportional recruiter time investment. The primary limitation is the absence of real-time conversational exchange, which constrains the depth and follow-up available.

Is Your Digital Interview Measuring Candidates or Just Filtering Them?

Digital interviews, particularly the asynchronous format, are one of the most commonly misused tools in the modern recruiting toolkit. They are adopted for efficiency reasons, deployed with minimal design investment, and evaluated primarily on completion rates and time savings rather than on whether they are producing better hiring decisions.

The misuse pattern is recognizable: a company adds an asynchronous video interview to their process because they want to reduce the volume of phone screens the recruiting team is conducting. They set up five generic questions that could apply to any professional role. They ask candidates to record 90-second responses. They review the recordings looking for confident presentation and professional appearance. They advance candidates who “seemed strong.” They have no rubric, no competency mapping, and no validation that any of this correlates with performance in the role.

This process is not a screening improvement. It is phone screening with video recording. The efficiency gain is real. The assessment quality improvement is not.

A well-designed asynchronous digital interview is something fundamentally different: it asks competency-mapped behavioral questions, evaluates responses against a structured rubric, produces scores that can be compared across candidates on specific dimensions, and has been validated (or is being built toward validation) against post-hire performance data. This version of the tool is not just more efficient than a phone screen. It is more predictive, more consistent, and more equitable, because standardized question delivery eliminates the variance introduced by different interviewers asking different questions in different ways.

Research comparing well-designed asynchronous video interviews with unstructured phone screens finds that the asynchronous format produces 34% higher inter-rater reliability and 28% better prediction of first-round in-person interview performance, when combined with structured rubrics and assessor calibration. The efficiency gain is real and substantial. But it is secondary to the assessment quality gain, which is what actually improves hiring decisions.

The scenario that illustrates the design gap: two companies in the same industry both implement asynchronous video interviewing for their customer success hiring. Company A uses a vendor platform with five generic questions, no rubric, and no calibration. Advancement decisions are made based on reviewer impression. Company B maps three competency-based questions to the specific capabilities most predictive of customer success performance in their context, develops behavioral anchors for each competency at three rating levels, runs a calibration session with all reviewers before the first hiring cycle, and tracks the correlation between video interview scores and 90-day performance ratings.

Three hiring cycles later, Company A has reduced phone screen volume by 40% and has seen no measurable change in hire quality. Company B has reduced screening time by 38%, improved hire quality scores by 23%, and has begun using the validated competency data from video reviews to refine their onboarding program. Same technology. Completely different outcomes.

The Digital Interview Format Decision: A Framework

When to Use Synchronous Digital (Live Video)

Live video interviews are most appropriate when the role requires direct interpersonal assessment that benefits from real-time conversational exchange: senior leadership roles where relationship dynamics and strategic thinking depth are being assessed; client-facing roles where the interview itself is a demonstration of the communication style the role requires; and final-round assessments where the depth of two-way conversation has more value than the consistency of standardized delivery.

Live video is also appropriate when the candidate population expects it and when the absence of a live format would create a negative candidate experience signal. Senior candidates for specialized roles who are asked to record responses to five questions rather than speak with a senior interviewer may perceive the asynchronous format as a lack of organizational investment in the candidate experience.

When to Use Asynchronous Digital (Pre-Recorded)

Asynchronous video interviews are most appropriate for high-volume early-stage screening where standardized assessment of a consistent question set adds more value than live conversational follow-up; for roles where communication quality, professional presence, and specific verbal competencies are being assessed at an early stage; and for organizations with geographically distributed candidate pools where scheduling live interviews across time zones creates significant friction.

They are also particularly effective for roles where the candidate response can be meaningfully evaluated against a rubric without follow-up: structured behavioral questions that ask for a complete STAR-format response, situational judgment questions that assess reasoning against a defined framework, and technical explanation questions where the candidate’s ability to explain a concept clearly can be assessed from a recording.

The format is less appropriate for senior roles where candidates will perceive it as impersonal, for highly specialized technical assessments where interactive follow-up is essential to assess depth, and for organizations without the design investment to build structured rubrics and assessor calibration processes.

Digital Interview vs. Related Assessment Formats

| Format | Timing | Interviewer Presence | Standardization Level | Best Use Case |
|---|---|---|---|---|
| Asynchronous Video Interview | Candidate-scheduled, reviewer-time-shifted | None during recording | High (identical questions for all) | High-volume early screening; competency assessment at scale |
| Synchronous Video Interview | Scheduled live session | Real-time | Moderate (structured guide recommended) | Mid-to-final round; relationship and depth assessment |
| In-Person Interview | Scheduled on-site | Present | Variable | Final rounds; culture immersion; senior roles |
| Phone Screen | Scheduled live call | Voice only | Low typically | Initial qualification check |
| AI-Scored Video Assessment | Candidate-scheduled | None | Very high (automated scoring) | Large-scale, early-stage filtering with structured rubric |
| Panel Interview (Video) | Scheduled live session | Multiple interviewers, video | Moderate | Structured multi-perspective assessment; competency coverage |

What the Experts Say

The companies getting the most from asynchronous video interviewing are not using it to avoid talking to candidates. They are using it to talk to candidates more fairly, because every candidate gets the same questions in the same format with the same time to respond. That consistency is what produces better data. The efficiency is a bonus.

– Madeline Laurano, founder of Aptitude Research and talent acquisition technology analyst

Designing a High-Quality Digital Interview

Step 1: Map Questions to Competencies

Before selecting or writing any interview questions, define the specific competencies being assessed in the digital interview stage. Each question should map explicitly to one competency, and the full question set should cover the three to five competencies most critical to evaluate at this stage of the process. Generic questions (“Tell me about yourself,” “What is your greatest strength?”) do not map to competencies and should not be in a structured digital interview.

For asynchronous interviews, the mapping should be visible in the review interface: the reviewer should know which competency each question is assessing before they score the response.

Step 2: Design Questions for Asynchronous Response

Behavioral questions designed for live interviews often do not translate directly to asynchronous format. In a live interview, a follow-up probe can extract the Action element from a candidate whose initial response was situation-heavy. In an asynchronous format, there is no follow-up. Questions should be written to elicit complete STAR-structured responses without prompting: “Tell me about a specific time when you had to adapt your communication style for a difficult audience. Please describe the situation, what you did specifically, and what the outcome was.” The specificity of the question design carries more weight in asynchronous format than in live conversation.

Step 3: Build Behavioral Rubrics Before Reviewing

Every competency assessed in the digital interview should have a behavioral rubric with three to five rating levels and specific behavioral anchors describing what a strong, average, and weak response looks like. Building rubrics before reviewing reduces the anchoring effect of early responses on later scoring and produces significantly higher inter-rater reliability than impression-based review.
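One way to make a rubric concrete before any review begins is to store it as data, so a reviewer cannot record a score outside the defined levels or without noting the evidence behind it. This is an illustrative sketch only: the `Rubric` class, the `record_score` helper, and the anchor wording are all hypothetical, and a real rubric would be written with the hiring team.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rubric:
    """One competency with a behavioral anchor per rating level."""
    competency: str
    anchors: dict[int, str]  # rating level -> what a response at that level looks like

    def __post_init__(self) -> None:
        # The text recommends three to five rating levels per competency.
        if not 3 <= len(self.anchors) <= 5:
            raise ValueError("a rubric needs three to five rating levels")


def record_score(rubric: Rubric, level: int, evidence: str) -> dict[str, object]:
    """Return a score record; reject undefined levels and scores without evidence."""
    if level not in rubric.anchors:
        raise ValueError(f"level {level} is not defined for {rubric.competency}")
    if not evidence.strip():
        raise ValueError("each score needs a note on the evidence behind it")
    return {
        "competency": rubric.competency,
        "level": level,
        "anchor": rubric.anchors[level],
        "evidence": evidence.strip(),
    }


# Hypothetical rubric; the anchor wording is illustrative only.
adaptive_communication = Rubric(
    competency="Adaptive communication",
    anchors={
        1: "Describes the situation only; no specific action or outcome.",
        2: "Describes an action, but the adaptation or outcome is vague.",
        3: "Describes a specific adaptation and a concrete outcome.",
    },
)

record = record_score(
    adaptive_communication,
    level=3,
    evidence="Rewrote the briefing for a non-technical client; renewal signed.",
)
print(record["competency"], record["level"])
```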

Step 4: Calibrate Reviewers Before the First Cycle

Before any live candidate reviews, all reviewers should evaluate the same set of sample responses independently, compare their scores, and discuss divergences. The calibration session identifies rubric interpretation differences before they affect real candidate assessments and is the single highest-impact reliability improvement available for asynchronous video interview programs.
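A calibration session can be supported by a simple agreement check on the shared sample responses. The sketch below uses Cohen's kappa for two reviewers, which is one common agreement statistic; it is an assumption here, since the text does not say which statistic its IRR figures are based on. The scores are hypothetical.

```python
from collections import Counter


def cohens_kappa(scores_a: list[int], scores_b: list[int]) -> float:
    """Cohen's kappa: agreement between two raters, corrected for chance agreement."""
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("both raters must score the same non-empty set of responses")
    n = len(scores_a)
    # Observed agreement: share of responses given the same rating by both raters.
    observed = sum(a == b for a, b in zip(scores_a, scores_b)) / n
    # Expected agreement if each rater assigned ratings independently
    # at their own observed frequencies.
    counts_a, counts_b = Counter(scores_a), Counter(scores_b)
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical rating throughout
    return (observed - expected) / (1.0 - expected)


def divergent_items(scores_a: list[int], scores_b: list[int]) -> list[int]:
    """Indices of sample responses the two raters scored differently."""
    return [i for i, (a, b) in enumerate(zip(scores_a, scores_b)) if a != b]


# Hypothetical calibration scores on six sample responses (3-level rubric).
reviewer_a = [3, 2, 1, 3, 2, 1]
reviewer_b = [3, 2, 2, 3, 1, 1]

kappa = cohens_kappa(reviewer_a, reviewer_b)
to_discuss = divergent_items(reviewer_a, reviewer_b)
print(round(kappa, 2), to_discuss)
```

The list of divergent items is arguably the more useful output for the session itself: those are the responses where reviewers should compare how they read the rubric.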

Step 5: Set Candidate-Friendly Technical Parameters

Response time limits should reflect what a thoughtful candidate genuinely needs to give a complete response to a competency-based behavioral question: 90 seconds is typically too short for a full STAR-structured response to a complex question; 3 minutes is typically sufficient. The number of takes allowed per question affects response quality and candidate experience: one practice question with unlimited takes followed by one take per assessment question is a common and candidate-positive design. Advance notice of question topics at the competency level (not the specific questions) is both equitable and produces richer responses.
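These parameters can be captured in a small configuration object with a sanity check. This is a sketch under stated assumptions: the class and field names are hypothetical and do not correspond to any vendor platform's settings; the defaults and warnings simply mirror the guidance in this step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AsyncInterviewConfig:
    """Hypothetical candidate-facing settings for an asynchronous interview."""
    response_limit_seconds: int = 180        # 3 minutes per response
    practice_questions: int = 1
    practice_takes_unlimited: bool = True
    takes_per_assessment_question: int = 1
    share_competency_topics_in_advance: bool = True


def design_warnings(cfg: AsyncInterviewConfig) -> list[str]:
    """Return warnings where the settings depart from the guidance in this step."""
    warnings: list[str] = []
    if cfg.response_limit_seconds <= 90:
        warnings.append("response limit may be too short for a full STAR response")
    if cfg.practice_questions < 1 or not cfg.practice_takes_unlimited:
        warnings.append("no practice question with unlimited takes")
    if not cfg.share_competency_topics_in_advance:
        warnings.append("competency topics are not shared in advance")
    return warnings


default_warnings = design_warnings(AsyncInterviewConfig())
short_warnings = design_warnings(AsyncInterviewConfig(response_limit_seconds=90))
print(default_warnings, short_warnings)
```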

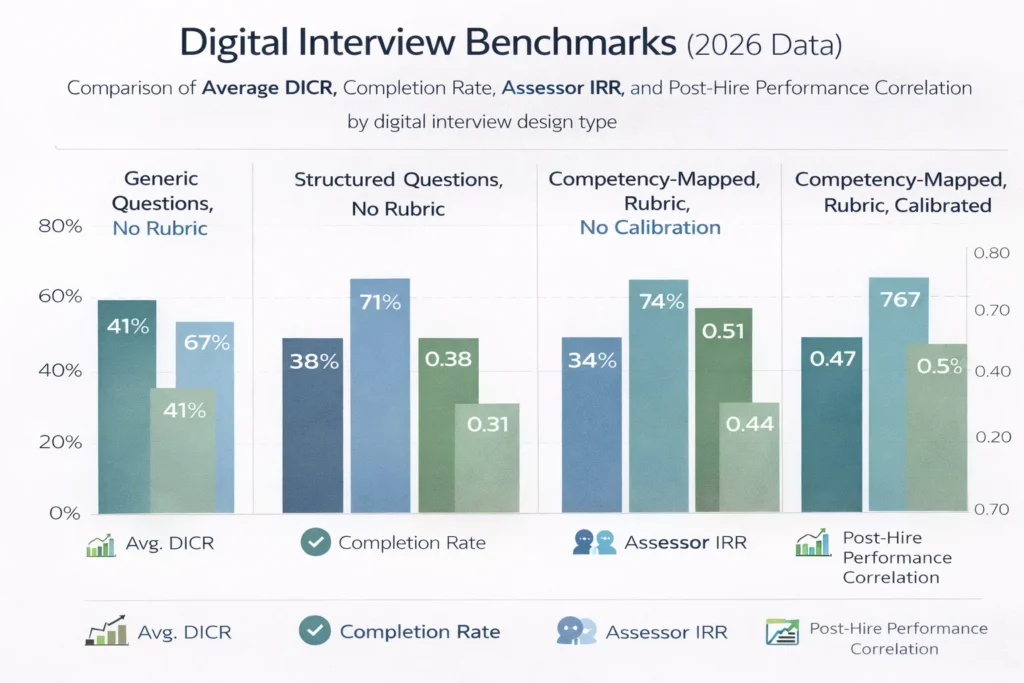

Digital Interview Benchmarks (2026 Data)

| Digital Interview Design Type | Avg. DICR | Completion Rate | Assessor IRR (Inter-Rater Reliability) | Post-Hire Performance Correlation |
|---|---|---|---|---|
| Generic Questions, No Rubric | 41% | 67% | 0.29 | 0.21 |
| Structured Questions, No Rubric | 38% | 71% | 0.38 | 0.31 |
| Competency-Mapped, Rubric, No Calibration | 34% | 74% | 0.51 | 0.44 |
| Competency-Mapped, Rubric, Calibrated | 31% | 76% | 0.67 | 0.58 |

Several patterns in this table are worth examining. First, the DICR actually decreases as design quality improves: a well-designed, competency-mapped digital interview with calibration is more selective (advancing fewer candidates) because it is making finer distinctions among candidates. A high DICR on a generic-question interview is not evidence of good screening. It is evidence of insufficient differentiation.

Second, the post-hire performance correlation for a calibrated, competency-mapped digital interview (0.58) approaches the validity ceiling for most behavioral assessment formats. This figure exceeds the performance correlation for most unstructured phone screens and many in-person interviews conducted without structured rubrics.

Third, completion rate increases as design quality improves, which may seem counterintuitive. The explanation is likely that a well-designed interview with clear competency-relevant questions feels purposeful to candidates rather than arbitrary, and candidates are more willing to invest time in a process that appears to be genuinely evaluating them fairly.

Key Strategies for Maximizing Digital Interview Effectiveness

How AI Is Transforming Digital Interviewing

Automated Question Generation

AI systems can generate competency-mapped behavioral interview questions calibrated to role level and competency framework from a role description and competency input, significantly reducing the question design burden for hiring teams implementing asynchronous video interviews across many role types.

NLP-Assisted Response Analysis

Natural language processing tools can analyze the structural completeness of behavioral responses (presence of all STAR elements), the relevance of the example provided to the target competency, and the specificity of the evidence offered. These analyses can serve as a scoring aid for human reviewers, flagging incomplete responses or highlighting specific elements of a response that are most relevant to the competency rubric.

Bias Detection in Scoring

AI tools that monitor reviewer scoring patterns across demographic groups can identify potential bias signals in human review, for example if a specific reviewer is systematically scoring candidates from one demographic group lower than their response content warrants relative to the calibrated rubric. These alerts are not definitive bias identification but are early warning signals that warrant investigation.

Predictive Validity Improvement

Platforms that connect digital interview scores to post-hire performance data over time can identify which specific question types, response elements, and competency scores are most predictive of performance for specific role families, enabling continuous improvement of the question and rubric design based on organizational performance evidence rather than general best practices.
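At its simplest, connecting interview scores to post-hire performance means correlating the two for the candidates who were hired. The sketch below computes a Pearson correlation by hand; the choice of Pearson is an assumption (the text does not name the statistic behind its correlation figures), and the paired values are hypothetical.

```python
import math


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of cross-products and sums of squares of deviations from the means.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when one sequence is constant")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical data: rubric scores at interview and 90-day performance ratings
# for the same five hires, in the same order.
interview_scores = [1, 2, 3, 4, 5]
performance_ratings = [2, 1, 4, 3, 5]

r = pearson_r(interview_scores, performance_ratings)
print(round(r, 2))
```

One caveat on interpretation: only hired candidates have performance ratings, so the score range is restricted, and a correlation computed this way will generally understate the relationship across all applicants.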

Digital Interviewing and Diversity, Equity, and Inclusion

Digital interviewing has a complex relationship with DEI outcomes that involves both significant equity opportunities and specific risks.

The Equity Opportunity: Standardization

Asynchronous video interviewing, when properly designed, is one of the most structurally equitable assessment formats available because it delivers identical questions in identical format to every candidate. In a traditional phone or in-person screening environment, different interviewers ask different questions in different ways, creating variance that research consistently shows is amplified by demographic bias. Asynchronous video interviews eliminate this source of variance by design.

The equity advantage is fully realized only when combined with structured rubrics and assessor calibration. Unstructured review of asynchronous video responses can introduce as much bias as unstructured in-person assessment because the format removes question variance without addressing evaluator variance.

The Equity Risk: Technology Access and Comfort

Asynchronous video interviewing assumes that all candidates have reliable internet access, a functional camera and microphone, a private space for recording, and sufficient familiarity with video recording technology to produce a response that reflects their actual capabilities rather than their technology access. These assumptions are not universally valid, and candidates from lower socioeconomic backgrounds, older candidates, candidates in geographies with unreliable internet infrastructure, and candidates with certain disabilities may face disproportionate barriers to performing well in asynchronous video formats that have nothing to do with their job-relevant capabilities.

Mitigation strategies include: offering a phone-based alternative for candidates who cannot complete the video format; providing a sufficient response window (72 hours minimum) to reduce the impact of scheduling constraints; offering practice questions with unlimited takes; and not penalizing candidates for environmental factors such as home office quality or lighting.

AI Scoring and Demographic Bias

AI scoring tools applied to video interview responses have shown documented demographic bias in multiple independent audits: differences in scoring by race, gender, and age that correlate with demographic characteristics rather than response content. Organizations using AI-assisted scoring in digital interviews should require vendors to provide demographic bias audit data, conduct their own adverse impact analysis of scoring outputs, and ensure that human review remains the determinative assessment layer rather than AI scoring alone.
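A basic adverse impact analysis compares advancement rates across groups. The sketch below applies the four-fifths rule of thumb from the US EEOC Uniform Guidelines on Employee Selection Procedures, which flags a group whose selection rate falls below 80% of the highest group's rate. That threshold is not taken from this article, it is a screening heuristic rather than a legal conclusion, and the group labels and counts are hypothetical.

```python
def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to its selection rate divided by the highest group's rate.

    outcomes maps a group label to (advanced, completed) counts.
    """
    rates = {
        group: advanced / completed
        for group, (advanced, completed) in outcomes.items()
        if completed > 0
    }
    if not rates:
        raise ValueError("no group has any completed interviews")
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no candidates advanced in any group")
    return {group: rate / top_rate for group, rate in rates.items()}


def flag_groups(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the threshold (four-fifths by default)."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical counts: (advanced, completed) per group.
outcomes = {"group_a": (30, 100), "group_b": (21, 100)}

ratios = impact_ratios(outcomes)
flagged = flag_groups(ratios)
print(ratios, flagged)
```

A flagged group is a prompt for investigation, in the same spirit as the reviewer-level alerts described earlier. With small group sizes the ratio is noisy, so it is usually read alongside a significance test.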

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| Low Candidate Completion Rates | Add a personalized video introduction from the hiring team; reduce response time requirements; provide clear instructions and a practice question |
| Reviewer Impression-Based Scoring | Build behavioral rubrics before the first review cycle; conduct calibration sessions; require evidence documentation for each score |
| Generic Questions Not Differentiating Candidates | Map questions to specific role competencies; design for complete STAR-format responses; remove questions that all candidates answer similarly |
| Candidate Experience Complaints About Impersonality | Add a human video introduction; shorten the question set; provide specific timeline communication after submission |
| AI Scoring Producing Demographic Variance | Require vendor audit data; conduct independent adverse impact analysis; ensure human review is the determinative layer |

Real-World Case Studies

Case Study 1: The Financial Services Company

A financial services company with a high-volume graduate hiring program was conducting phone screens with 400 to 600 candidates per hiring cycle, requiring 12 to 15 recruiter hours per cycle just for initial screening. They implemented an asynchronous video interview platform with five generic questions and a two-take recording limit per question.

In the first cycle, completion rate was 58%. The recruiting team reviewed recordings looking for confident presentation and clear communication. Time-to-screen improved significantly. Hire quality, measured by 90-day manager satisfaction, showed no improvement.

An assessment design review identified the root issue: the five generic questions were not differentiating candidates on the competencies most relevant to the graduate roles. A redesign produced three competency-mapped behavioral questions aligned to the graduate program’s competency framework, a structured rubric with behavioral anchors, and a 60-minute assessor calibration session.

In the second cycle after redesign, completion rate improved to 74%. DICR dropped from 44% to 29% (more selective screening). Assessor IRR improved from 0.31 to 0.63. At the 90-day review, the redesigned cohort’s manager satisfaction scores were 31% higher than the prior year’s cohort. Time-to-hire for the program improved by 11 days because more selective screening meant fewer candidates advanced to in-person stages that were not going to result in offers.

Case Study 2: The Technology Startup

A 90-person technology startup was using live video interviews for all early-stage screening, creating a significant scheduling burden for two recruiters managing 15 to 20 open roles simultaneously. Each early-stage screen required 30 minutes of recruiter time plus scheduling overhead averaging 45 minutes per session.

They transitioned early-stage screening to asynchronous video for roles below the senior manager level, implementing a three-question competency-based format with a structured review rubric. The transition reduced early-stage screening time per candidate from 75 minutes to 22 minutes (including review). Recruiters reallocated the saved time to deeper engagement with mid-stage candidates and hiring manager relationship development.

Candidate completion rate was initially 63%, below the 75% target. Adding a 90-second personalized video introduction from the relevant hiring manager for each role improved completion rate to 79% within two hiring cycles. Candidate feedback on the asynchronous format was positive: 71% rated it as fair, and 67% said it gave them a better chance to present themselves than a phone screen would have.

Case Study 3: The Healthcare Network

A regional healthcare network was filling nursing and allied health roles across 12 facilities, with candidates often located 60 to 90 minutes from the nearest facility. In-person initial interviews were producing significant candidate dropout: 34% of candidates who were scheduled for in-person screens were not attending, citing travel burden as the primary reason.

They implemented synchronous video interviews for all initial clinical screening rounds, with an option for asynchronous video for candidates who could not attend a scheduled live session. The asynchronous option used a five-question format developed with clinical team input and reviewed by the facility’s nurse managers.

Candidate no-show rate for initial screens dropped from 34% to 9%. Geographic reach expanded to include qualified candidates who would not previously have been able to participate in in-person screening. The time between initial contact and completed initial screen dropped from 11 days to 4 days. First-year retention for the new hire cohort was comparable to the prior in-person screening cohort, confirming that the format change had not compromised assessment quality.

Building a Digital Interview Dashboard: What to Track?

Digital Interviewing Across the Hiring Lifecycle

Early-Stage Screening

The asynchronous video interview is most commonly and most appropriately used at the early-stage screening phase: after initial application review and before live recruiter or hiring manager involvement. At this stage, its ability to deliver standardized questions to a high candidate volume and produce comparable, rubric-scored data is most valuable.

Mid-Stage Assessment

Synchronous video interviews function equivalently to in-person interviews at mid-stage and can be used for competency-specific assessments by hiring manager panels, technical assessments, and culture-oriented conversations. The live format is more appropriate than asynchronous at this stage because the depth of two-way conversation adds value that standardized recording cannot replicate.

Final Round and Offer

For senior roles, in-person final rounds remain the gold standard where feasible, because the full-bandwidth in-person experience produces assessment data and relationship development that video cannot fully replicate. For geographically distributed hiring or candidates who cannot travel, synchronous video final rounds are an effective alternative. Asynchronous format is generally inappropriate for final-round assessment.

Related Terms

| Term | Definition |
|---|---|
| Asynchronous Video Interview | A digital interview format in which candidates record responses to pre-set questions without a live interviewer present, for time-shifted review by the hiring team |
| Synchronous Video Interview | A live video call interview conducted through a video conferencing platform, replicating the real-time conversational dynamic of in-person interviewing |
| Structured Interview | Any interview format using standardized questions and rubric-based scoring; the methodology most compatible with and most needed in digital interview design |
| One-Way Interview | An alternative term for asynchronous video interview, referring to the one-directional recording format |
| AI-Assisted Scoring | The use of machine learning and NLP tools to analyze digital interview responses and generate competency scores or ranking signals; requires human review and regular bias auditing |
| Candidate Experience | The overall quality of a candidate’s experience of the hiring process; digital interview design and communication quality are significant components at the screening stage |

Frequently Asked Questions

Is a video interview as effective as an in-person interview?

Asynchronous video is superior for early screening due to its standardization and reliability. However, in-person interviews remain essential for final rounds, where relationship depth and cultural signals are critical. The most effective strategy uses digital tools for consistency and in-person meetings for conversational depth.

What is the difference between a digital interview and an AI interview?

A digital interview uses video technology, while an AI interview involves algorithms in delivery or scoring. Fully automated AI reviews are controversial and face heavy regulation due to bias risks. For best results, use AI as an analytical tool to assist human reviewers rather than replacing them entirely.

How long should an asynchronous digital interview be?

Aim for 3–5 questions (2–3 minutes each) to balance assessment depth with completion rates. Exceeding 15 total minutes leads to significant candidate drop-off. For senior roles, a 12-minute limit (4 questions at 3 minutes each) is the ideal standard for maintaining a positive candidate experience.

Should candidates be warned about digital interview questions in advance?

Providing advance notice of competency areas, not specific questions, results in richer, more equitable responses. Since behavioral interviews assess experience and judgment rather than “correct” answers, this transparency doesn’t compromise predictive validity. Instead, it prevents “surprises” that typically disadvantage candidates with less access to professional interview coaching.

How do you handle candidates who struggle with the technology?

Provide a phone-based alternative for candidates who cannot complete the video format due to technology access barriers. Document the technical accommodation clearly in the process. Evaluate phone responses against the same rubric as video responses where possible. Do not score candidates on the quality of their video production, lighting, or audio quality unless these are genuinely relevant to the role. The assessment should be of the candidate’s capabilities, not their home office setup.

Conclusion

The digital interview did not replace the interview. It changed the conditions under which interviews happen, expanded the pool of candidates who can participate, and created a new set of design decisions about how to get the most from a format that differs meaningfully from the in-person conversation it often supplements or replaces.

The organizations using digital interviews well have recognized something important: the format is not the assessment. A video camera recording a candidate answering generic questions is not inherently more predictive than a phone call asking the same questions. What makes a digital interview more effective than an alternative is the quality of the question design, the rigor of the rubric, the calibration of the reviewers, and the validation of the tool against post-hire outcomes.

When all of those elements are present, the digital interview is not a compromise. It is a structural improvement over the unstructured, high-variance, geography-limited, scheduling-intensive alternative it replaces. It gives more candidates a fair chance to demonstrate their capabilities, gives more reviewers a consistent framework for evaluating those demonstrations, and gives the organization more comparable, more defensible, and ultimately more predictive data on which to build hiring decisions.

That is what an interview is for. The camera is just the room.