Here’s a scenario that should feel familiar to anyone who’s ever run a high-volume hiring campaign. You have 200 applicants for a role. You need to get to a shortlist of 15. You’ve got two recruiters, a hiring manager who’s available Tuesday afternoons and alternating Fridays, and candidates scattered across four time zones. The math on live screening interviews doesn’t work, not without burning out your team, losing half the candidate pool to scheduling friction, and spending three weeks on a process that should take five days.

This is the problem the asynchronous interview was built to solve.

An asynchronous interview is a pre-recorded, candidate-led evaluation format in which applicants respond to a structured set of questions (on video, audio, or in writing) on their own schedule, without a live interviewer present. The responses are then reviewed by recruiters or evaluated by AI systems at a time that suits the hiring team. No calendar coordination. No “does Tuesday at 3pm work for you?” email chains. No candidate dropping out because your only available slot clashed with their current employer’s team meeting.

In 2026, asynchronous interviews aren’t a compromise on quality; they’re a deliberate upgrade to the early-funnel screening process. AI-powered hiring platforms have made it possible to not just collect these responses, but to analyze them: scoring verbal communication, evaluating response structure, flagging behavioral signals, and producing ranked candidate shortlists that would previously have required hours of recruiter time. The result is a screening layer that scales with the pipeline, not against it.

The core metric governing this format is the Screening Efficiency Ratio (SER): the number of qualified candidates advancing per hour of recruiter time invested. In live phone-screen models, the average SER hovers around 1.8. In well-executed async interview programs, it consistently reaches 6.0 or above. That’s not a marginal improvement. That’s a structural rethink of where human attention belongs in the hiring funnel.

What is an Asynchronous Interview?

An asynchronous interview is a structured, self-paced evaluation in which candidates record responses to predetermined questions (typically via video) without a live interviewer, and submit them for review at the recruiter’s convenience.

The format eliminates the single biggest constraint in early-stage screening: the need for both parties to be available simultaneously. Unlike a live phone screen or video interview, no scheduling is required. Unlike a written application, it captures verbal communication, presence, and the kind of behavioral signals that text can’t convey.

In practical terms, candidates receive a link, review the interview brief, and record responses within a defined window, typically 24 to 72 hours. Each question usually comes with a preparation time (30 seconds to two minutes) and a response time limit (one to three minutes). The finished recording is submitted and enters a review queue that the hiring team works through on their own timeline.

In 2026, the majority of asynchronous interview platforms offer AI-powered response analysis as a core feature: sentiment scoring, communication clarity ratings, keyword alignment with role competencies, and flagging of high-potential responses for human review. The human recruiter remains in the loop, but for the nuanced, high-judgment work, not the administrative volume.

Is the Live Phone Screen Dead, or Just Overdue for Retirement?

Let’s be specific about what the traditional phone screen actually costs. A 30-minute screening call, when you account for the scheduling email thread, recruiter preparation, the call itself, and the debrief note, consumes 60 to 90 minutes of total recruiter time per candidate. For a pipeline of 200 applicants, that’s up to 300 hours before a single qualified candidate has been identified. At a fully-loaded recruiter cost of $55/hour, you’ve spent $16,500 on a process that a well-configured async interview program can replicate in 12 hours of review time.
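The arithmetic behind those figures can be checked in a few lines. This is a minimal sketch that uses only the numbers quoted above (200 applicants, the 90-minute upper bound, $55/hour, 12 hours of async review); the function and variable names are illustrative and not taken from any platform.

```python
def screening_cost(candidates: int, minutes_per_candidate: float, hourly_rate: float):
    """Return (total recruiter hours, total cost) for a screening stage."""
    hours = candidates * minutes_per_candidate / 60
    return hours, hours * hourly_rate


# Upper bound from the text: 200 applicants at 90 minutes each, $55/hour fully loaded.
phone_hours, phone_cost = screening_cost(200, 90, 55)

# Async comparison from the text: 12 hours of review time at the same hourly rate.
async_hours = 12
async_cost = async_hours * 55

print(f"Phone screen: {phone_hours:.0f} h, ${phone_cost:,.0f}")
print(f"Async review: {async_hours} h, ${async_cost:,.0f}")
```

Swapping in the 60-minute lower bound gives 200 hours instead of 300, which is why the text says "up to".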

That’s the operational case. But the more interesting argument isn’t about cost; it’s about signal quality.

The phone screen is, structurally, a flawed assessment instrument. It’s conducted under time pressure on both sides. The recruiter is typically working from a loose framework rather than a standardized rubric. The candidate is responding in real time, without preparation, to questions they’ve never seen. The result is a conversation that tells you a lot about how someone performs in a rushed, unprepared call, which is a narrow and not particularly predictive proxy for job performance.

The async interview introduces structured standardization to a stage of the funnel that has historically operated on improvisation. Every candidate answers the same questions in the same sequence, reviewed against the same criteria. The playing field is leveled, not by making the process easier, but by making it consistent. Companies replacing first-round phone screens with async programs report an average reduction in time-to-shortlist of 68%, while simultaneously improving hiring manager satisfaction with the quality of candidates presented.

Consider the scenario this prevents. A candidate for a content strategy role submits an application that looks unremarkable on paper: a mid-tier university, a mismatched job title, two years at a company most people haven’t heard of. In a phone-screen-first process, they’re screened out in the resume review because the recruiter has 200 applications and limited bandwidth for borderline cases. In an async process, they get three minutes to answer: “Walk me through a piece of work you’re proud of and why.” They do it with clarity, specificity, and genuine enthusiasm. They make the shortlist. They become the hire. The phone screen model never gave them the chance.

The ROI math is real. If an organization hires 100 people per year and reduces time-to-hire by 12 days through async adoption, with an average revenue impact of $1,200 per unfilled role per day, the annual value recovered is $1.44 million, before accounting for recruiter overhead reduction or the offer acceptance improvements that come from a faster, more respectful process.
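That figure is a straight multiplication of the three inputs in the paragraph. A short sketch, with a hypothetical function name, makes it easy to substitute an organization's own numbers:

```python
def vacancy_value_recovered(hires_per_year: int, days_saved_per_hire: int,
                            revenue_impact_per_day: int) -> int:
    """Annual value recovered by filling each role sooner."""
    return hires_per_year * days_saved_per_hire * revenue_impact_per_day


# Inputs from the text: 100 hires, 12 days saved, $1,200 per unfilled role per day.
annual_value = vacancy_value_recovered(100, 12, 1_200)
print(f"${annual_value:,}")  # $1,440,000
```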

The Psychology Behind the Asynchronous Interview

The async interview is a fundamentally different psychological environment from the live interview, and understanding what that means for the data you collect is essential to designing one that works.

Performance Anxiety and the Camera Effect

Recording yourself answering interview questions alone, with the timer counting down and the camera light blinking, is genuinely uncomfortable. The absence of a live interviewer removes some anxiety sources: no status differential, no one reacting in real time, no social dynamic to navigate. But it replaces them with a different pressure: the permanence of the recording and the awareness that your response will be reviewed, possibly multiple times, by people you can’t respond to.

Research consistently shows that preparation and platform familiarity, not underlying communication skill, are the strongest predictors of async interview performance. Candidates who have practiced the format and understand the time constraints perform measurably better than those encountering it cold. This has direct implications for design: providing a genuine practice question (not a “test your mic” placeholder, but a real sample question in the real format) is the single highest-leverage improvement available. It doesn’t inflate scores; it removes the format penalty that would otherwise suppress them.

The Absence of Social Calibration

In a live interview, both parties constantly adjust: the interviewer softens a follow-up when a candidate looks confused; the candidate elaborates when the interviewer leans forward. These micro-calibrations are invisible but consequential. The async interview removes all of that feedback, which means the quality of the question design matters more than in any other format. A vague question in a live interview can be rescued by conversation. In async, it just produces vague answers. The burden shifts from interviewer skill to question architecture, which is more accountable and more scalable but requires upfront investment that organizations frequently underestimate.

Cognitive Load and Time Pressure

Preparation time and response time limits are the most consequential (and most frequently miscalibrated) variables in async interview design. Too short a preparation window and you’re measuring spontaneous recall rather than structured thinking. Too long a response window and candidates waffle. The research consensus in 2026 points to 90 seconds of preparation and 2 to 3 minutes of response time as optimal for behavioral and situational questions: long enough to organize a meaningful answer, short enough to prevent over-rehearsal that makes responses feel scripted rather than genuine.
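One way to keep these timings explicit is to hold them in a small configuration object and compute how long the questions can take in total. The sketch below is hypothetical: the class and function names are invented for illustration, and the 150-second response default is an assumed midpoint of the 2 to 3 minute range rather than a figure from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QuestionTiming:
    prep_seconds: int = 90        # preparation time cited in the text
    response_seconds: int = 150   # assumed midpoint of the 2-3 minute range

    def __post_init__(self):
        if self.prep_seconds <= 0 or self.response_seconds <= 0:
            raise ValueError("timings must be positive")


def max_question_minutes(questions) -> float:
    """Upper bound on time spent on questions if every timer runs out."""
    total_seconds = sum(q.prep_seconds + q.response_seconds for q in questions)
    return total_seconds / 60


four_question_interview = [QuestionTiming()] * 4
print(max_question_minutes(four_question_interview))  # 16.0
```

The result covers question time only; setup and any practice question add to what the candidate actually spends.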

Asynchronous Interview vs. Other Early-Funnel Screening Methods

The async interview doesn’t replace every other screening tool; it occupies a specific and complementary position in the funnel:

| Method | What It Captures | Key Difference from Async Interview |
|---|---|---|
| Resume Screen | Work history and credentials | Static; no behavioral or communication signal |
| Phone Screen | Basic communication and fit | Live; unstructured; low consistency across candidates |
| Aptitude Test | Cognitive ability and reasoning | Measures thinking capacity; not communication or motivation |
| Structured Interview | Deep behavioral competency | High-fidelity but high-cost; appropriate post-shortlist |
| Video Cover Letter | Candidate-led self-presentation | Unstructured; no standardized evaluation criteria |
| Async Interview | Communication, motivation, and role-specific thinking | Standardized, scalable, reviewable, and AI-analyzable |

The async interview is most valuable at the transition point between resume screening and shortlisting: the stage where you’ve filtered for minimum qualifications but haven’t yet committed the time and resources of a structured interview. It’s not a replacement for the live interview; it’s a precision instrument for determining who deserves one.

The insight that reshapes how most TA teams think about this: the async interview doesn’t just add a data point. It changes the nature of the data available at the early funnel stage. For the first time, recruiters reviewing a shortlist can watch candidates answer questions and form evidence-based impressions, rather than inferring everything from a document that was optimized to get through an ATS.

What the Experts Say

The asynchronous interview is the most underrated equalizer in modern recruitment. It gives every candidate the same stage, the same questions, and the same time, and the best communicators rise to the top regardless of their postcode or their pedigree.

– Hung Lee, Curator of Recruiting Brainfood

How to Measure and Improve Asynchronous Interview Performance

Running an async interview program without measuring it is approximately as useful as running a phone screen program without taking notes, which, in fairness, some organizations are still doing.

Formula

Screening Efficiency Ratio (SER) = Qualified Candidates Advanced / Recruiter Screening Hours

A SER below 2.0 suggests either that the pre-screening filter isn’t doing its job (too many unqualified candidates are reaching the async stage) or that the async interview questions aren’t discriminating effectively between strong and weak responses. Both are fixable, but you need the metric to know which problem you’re solving.
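The formula and the 2.0 warning level translate directly into code. This is a minimal sketch with hypothetical function names; the sample inputs are made up to reproduce the 1.8 and 6.0 figures quoted earlier and are not real program data.

```python
def screening_efficiency_ratio(qualified_advanced: int, recruiter_hours: float) -> float:
    """SER = qualified candidates advanced / recruiter screening hours."""
    if recruiter_hours <= 0:
        raise ValueError("recruiter_hours must be positive")
    return qualified_advanced / recruiter_hours


def needs_attention(ser: float, threshold: float = 2.0) -> bool:
    """True when SER falls below the warning level described in the text."""
    return ser < threshold


# Made-up inputs chosen to match the ratios quoted in the article.
phone_ser = screening_efficiency_ratio(qualified_advanced=9, recruiter_hours=5)
async_ser = screening_efficiency_ratio(qualified_advanced=36, recruiter_hours=6)

print(phone_ser, needs_attention(phone_ser))
print(async_ser, needs_attention(async_ser))
```

The ratio alone does not say which of the two problems is present; it only signals that the pre-screen filter and the question set both deserve a look.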

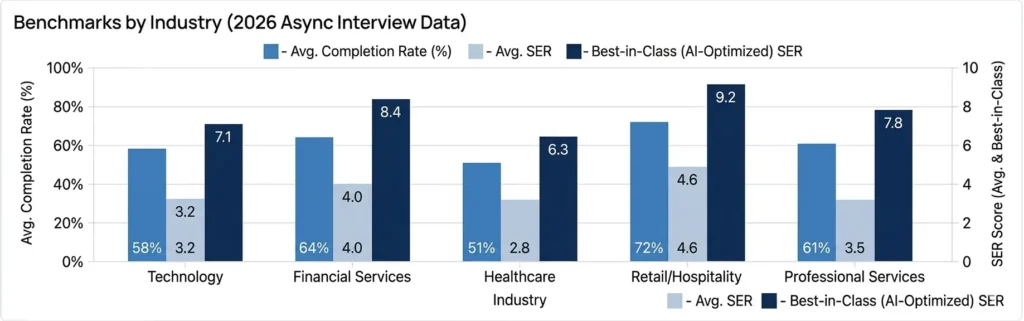

Benchmarks by Industry (2026 Async Interview Data)

| Industry | Avg. Completion Rate | Avg. SER | Best-in-Class (AI-Optimized) SER |
|---|---|---|---|
| Technology | 58% | 3.2 | 7.1 |
| Financial Services | 64% | 4.0 | 8.4 |
| Healthcare | 51% | 2.8 | 6.3 |
| Retail/Hospitality | 72% | 4.6 | 9.2 |
| Professional Services | 61% | 3.5 | 7.8 |

The completion rate gap between average and best-in-class maps reliably to three variables: how the invitation is framed (transactional versus engaging), whether a genuine practice question is provided, and how mobile-optimized the recording interface is. None of these are technology problems; they’re communication and design problems, which means they’re fully within a TA team’s control to fix.

Key Strategies for Improving Asynchronous Interview ROI

The gap between an async interview program that candidates resent and one they appreciate is almost entirely in the design and framing decisions made before a single question is recorded:

How AI and Automation Elevate the Asynchronous Interview

The async interview format generates structured, comparable, reviewable data at scale, which makes it one of the most AI-compatible stages of the entire hiring funnel. The tools available in 2026 have made it genuinely transformative:

NLP-Powered Response Analysis

NLP can analyze async video and audio responses across multiple dimensions simultaneously: vocabulary range, sentence structure, logical sequencing, specificity of examples, and alignment with the role’s required competencies. This isn’t about filtering for “right answers”; it’s about identifying structural qualities of strong responses (concreteness, causal reasoning, relevance) and flagging them for human review. The AI doesn’t make the hiring decision; it compresses the review queue from 200 to the 30 most worth a recruiter’s time.

Sentiment and Tone Analysis

Beyond the words, AI can analyze paralinguistic qualities of async video responses: vocal confidence, pacing consistency, emotional range, and the presence of hedging language that correlates with low conviction. Used appropriately (as a signal to investigate rather than a verdict), this adds a dimension that text-based screening cannot provide. Used clumsily, it introduces bias. The design principle matters as much as the technology.

Automated Competency Tagging

AI systems trained on the organization’s own competency framework can tag each response against the specific behaviors the role requires, flagging where examples demonstrate the target competency clearly, partially, or not at all. This produces a competency heat map for each candidate that’s immediately readable by a hiring manager, without requiring the recruiter to synthesize and interpret raw recordings first.

Smart Scheduling Triggers

Once a candidate completes their async interview and receives a strong AI-generated rating, automation can immediately trigger the next step (a structured interview invitation, a technical assessment, or a hiring manager notification) without waiting for manual review. In practice, this compresses the average time between async completion and next-stage invitation from 5 days to under 12 hours. For high-demand candidates, that speed is a tangible competitive advantage.
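The trigger logic can be as simple as a threshold check. The sketch below is hypothetical throughout: the 0.0 to 1.0 score scale, the 0.8 threshold, and every name are assumptions for illustration, not any vendor's API. It sends everything below the threshold to a human queue instead of rejecting automatically, in keeping with the article's point that AI scoring is a signal and not a verdict.

```python
from enum import Enum


class NextStep(Enum):
    STRUCTURED_INTERVIEW_INVITE = "structured_interview_invite"
    HUMAN_REVIEW_QUEUE = "human_review_queue"


def route_completed_interview(ai_score: float,
                              auto_advance_threshold: float = 0.8) -> NextStep:
    """Pick the next step for a completed async interview.

    ai_score is assumed to be normalized to the 0.0-1.0 range.
    """
    if not 0.0 <= ai_score <= 1.0:
        raise ValueError("ai_score must be between 0.0 and 1.0")
    if ai_score >= auto_advance_threshold:
        # Strong rating: invite immediately, without waiting for manual review.
        return NextStep.STRUCTURED_INTERVIEW_INVITE
    # Below the threshold nothing is auto-rejected; a recruiter reviews it.
    return NextStep.HUMAN_REVIEW_QUEUE


print(route_completed_interview(0.92))
print(route_completed_interview(0.55))
```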

Asynchronous Interviews and Diversity & Inclusion

The async interview, more than almost any other hiring tool, has genuine structural potential to reduce inequity in early-funnel screening, but that potential is not automatic. It requires intentional design decisions at every stage.

Removing the Scheduling Penalty

Live interview scheduling disproportionately disadvantages candidates in inflexible-hours roles (retail, hospitality, healthcare, manufacturing) and those with caregiving responsibilities that make “can you do 2pm Thursday?” a genuinely difficult question. The async interview removes the scheduling variable entirely. A candidate with a 7am warehouse shift and two children under five can complete their interview at 10pm if that’s when their life permits. The talent pool expands when availability stops being a qualification.

Standardization as an Equalizer

When every candidate answers the same questions in the same format with the same time parameters, the advantages that accrue to practiced interviewees (typically those with elite educational backgrounds and professional coaching) are partially neutralized. The candidate who has never had a mock interview gets the same question architecture, the same preparation window, and the same evaluation rubric. That’s not a complete solution to structural inequality in hiring, but it’s a meaningful reduction in one of its operating mechanisms.

Accessibility by Design

Async interview platforms should support closed captions, screen reader compatibility, adjustable playback speeds, and alternative response formats for candidates who cannot use video due to disability or bandwidth constraints. These aren’t edge-case features; they’re the baseline of a professionally run program. Platforms that don’t offer them are not accessible; they’re merely digital.

Mitigating AI Evaluation Bias

AI-powered async analysis has documented failure modes: accent bias in speech analysis, vocabulary scoring that correlates with educational privilege rather than job-relevant communication, and confidence proxies that misread cultural norms as low conviction. The solution isn’t to avoid AI; it’s to audit outputs for adverse impact before deploying at scale, and to treat AI scoring as a signal to investigate rather than a verdict to enforce.
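A basic adverse impact audit compares how often each candidate group is advanced by the AI score. The sketch below assumes the widely used four-fifths rule of thumb (a group is flagged when its selection rate is below 80% of the highest group's rate); the article does not name a specific test, so treat that threshold, the function names, and the sample data as illustrative assumptions.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group_label, advanced) pairs; returns {group: rate}."""
    totals = defaultdict(int)
    advanced_counts = defaultdict(int)
    for group, advanced in outcomes:
        totals[group] += 1
        if advanced:
            advanced_counts[group] += 1
    return {group: advanced_counts[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(outcomes, threshold=0.8):
    """Groups whose impact ratio falls below the threshold, sorted by label."""
    ratios = impact_ratios(selection_rates(outcomes))
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Made-up audit sample: 50 of 100 advanced in group A, 30 of 100 in group B.
sample = ([("A", True)] * 50 + [("A", False)] * 50
          + [("B", True)] * 30 + [("B", False)] * 70)
print(flagged_groups(sample))  # ['B']
```

A flag here is a prompt to investigate the scoring model and the question set, not proof of bias on its own; small samples in particular can produce misleading ratios.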

Common Challenges & Solutions

| Challenge | Solution |
|---|---|
| Low Completion Rates | Reframe the invitation, provide a practice question, and ensure mobile optimization |
| Candidate Perception (“Impersonal”) | Add a short personalized video from the hiring manager introducing the role before the questions begin |
| AI Scoring Bias | Conduct quarterly adverse impact audits; calibrate scoring models against diverse performance data |
| Question Design Weakness | Build questions to a STAR-framework brief; pilot with internal staff before deploying to live pipeline |
| Technical Drop-off | Build a fallback audio-only or written response option for connectivity or device issues |

Real-World Case Studies

Case Study 1: The Global E-Commerce Platform

A rapidly scaling e-commerce company hiring 400 customer experience reps per quarter across six countries had a phone-screen process consuming 28 recruiter hours per week and producing inconsistent shortlists, because different recruiters used different evaluation frameworks. They replaced first-round phone screens with a four-question async interview program, with AI-powered competency tagging against five role-specific behaviors. Recruiter screen time dropped by 74%. Shortlist-to-offer conversion improved by 31% because hiring managers received structured, comparable candidate profiles instead of recruiter summaries. Time-to-hire compressed from 22 days to 11.

Case Study 2: The Professional Services Firm

A mid-size consulting firm was struggling with a specific failure mode: candidates who performed brilliantly in live interviews but underdelivered in the role. Post-mortem analysis revealed that live interviews were rewarding polished interview performance (the practiced poise of candidates who’d been coached) rather than substantive thinking. They redesigned their async interview to include a “thinking out loud” question: a short, ambiguous business scenario where candidates explained their reasoning process on video. Removing the live audience produced dramatically more authentic responses. Hiring manager satisfaction with shortlisted candidates rose 40% within two hiring cycles.

Case Study 3: The Healthcare System

A regional hospital network needed to fill 200 nursing roles across multiple facilities in a 90-day window, a volume requiring eight recruiters at full capacity under the old model. Instead, they deployed a three-question async interview with a 48-hour completion window for every applicant passing the credential screen. AI scoring flagged the top 25% of respondents for human review within two hours of submission. They met the 90-day target with a team of three recruiters. Post-hire candidate satisfaction scores were 19% higher than the previous cycle, with candidates consistently citing “flexibility” and “time to think” as the reasons.

Building an Asynchronous Interview Dashboard: What to Track

A well-instrumented async program generates more useful data than almost any other early-funnel stage. The metrics worth tracking:

Asynchronous Interview Across the Candidate Lifecycle

The async interview is typically positioned at a single point in the funnel (early-stage screening), but its applications extend further than most TA teams currently use it:

Pre-Application Async Prompt

A “tell us why this role interests you” video prompt embedded in the job posting itself functions as both a self-selection tool and an early engagement signal. Candidates who complete it unprompted are demonstrating genuine interest; the responses give sourcing teams early visibility into candidate quality before the formal application is submitted. Conversion-to-shortlist rates for candidates who complete pre-application prompts are typically 2.5x higher than for standard form submissions alone.

Screening Async Interview

The primary deployment: the structured, multi-question format used to replace or supplement the first-round live screen. This is where the SER metric is most directly applicable and where AI-assisted review delivers the greatest efficiency gain. Three to five questions. A defined completion window. A shortlist, faster and with more supporting evidence than any phone-screen process can reliably produce.

Panel-Prep Async Interview

A targeted async question sent between the structured interview and final panel stage, focused on a specific competency or scenario the earlier stage left ambiguous. Rather than prolonging the panel to revisit an unclear answer, the async prompt gathers the clarifying evidence ahead of time, making the final conversation more focused and more productive.

Internal Mobility Async Interview

For internal candidates applying to different teams, async interviews provide structure without the social complexity of being evaluated by colleagues. Internal candidates often underperform in live panels for internal roles because familiarity disrupts the professional register. An async format restores structure without the stiffness of a formal panel, and separates the evaluation cleanly from the relationship.

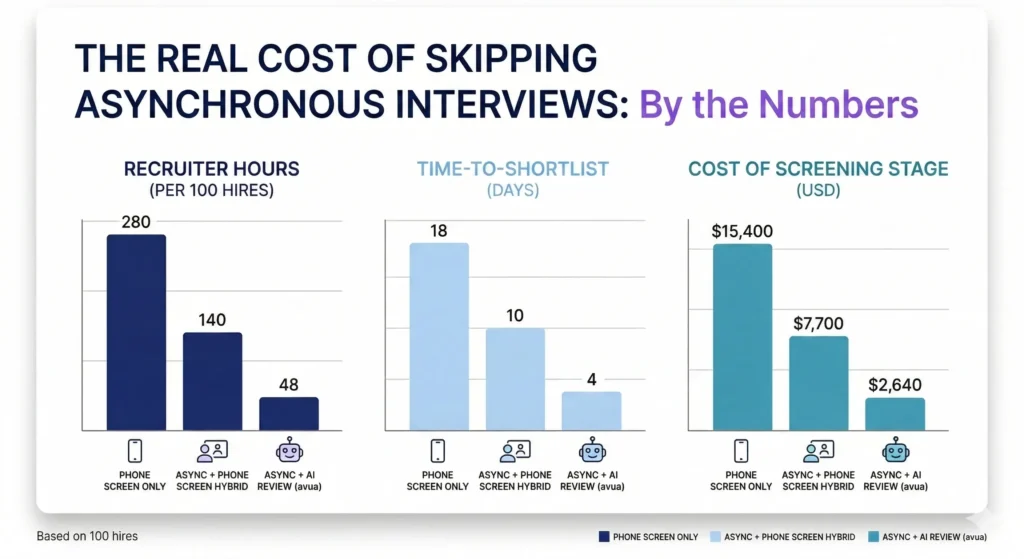

The Real Cost of Skipping Asynchronous Interviews

| Scenario | Recruiter Hours (per 100 hires) | Time-to-Shortlist | Cost of Screening Stage |
|---|---|---|---|
| Phone Screen Only | 280 hours | 18 days | $15,400 |
| Async + Phone Screen Hybrid | 140 hours | 10 days | $7,700 |
| Async + AI Review (avua) | 48 hours | 4 days | $2,640 |

The “hidden cost” in the phone screen column isn’t just recruiter time; it’s the candidate attrition that happens in the 18 days between application and shortlist. Every day a strong candidate spends waiting for a callback is a day they might accept an offer from an organization with a faster process. In a market where top performers are off the market within 10 days of starting a search, an 18-day shortlisting process isn’t just inefficient, it’s structurally self-defeating.

Related Terms

| Term | Definition |
|---|---|
| One-Way Video Interview | Another name for the async video interview; responses recorded without a live interviewer |
| Synchronous Interview | A live, real-time interview format; the direct opposite of asynchronous |
| Structured Interview | An interview using standardized, pre-determined questions applied consistently to all candidates |
| Screening Efficiency Ratio | The number of qualified candidates advanced per hour of recruiter screening time |
| AI Competency Tagging | Automated identification and scoring of role-relevant behaviors within candidate responses |
| Time-to-Shortlist | The number of days between application receipt and delivery of a qualified shortlist to the hiring manager |

Frequently Asked Questions

How long should an asynchronous interview take for the candidate?

The optimal total candidate time investment (including setup, practice question, and all response recording) is between 15 and 25 minutes. Below 15 minutes and you’re not gathering enough signal to justify the ask. Above 30 minutes and completion rates begin to drop meaningfully, particularly for candidates who are currently employed and completing the interview outside of work hours.

Can candidates retake their responses in an async interview?

It depends on the platform and the program design. Allowing unlimited retakes can produce highly polished but over-rehearsed responses that reduce authenticity. Allowing one retake per question (common best practice in 2026) balances candidate comfort with response naturalness. No retakes at all introduces significant format-anxiety noise that suppresses performance below its true level.

Does async interview performance predict live interview performance?

Moderately, but not perfectly, and that’s by design. The formats assess overlapping but non-identical competencies. Async interviews measure structured communication, preparation quality, and response clarity. Live interviews additionally measure adaptability, active listening, and conversational dynamism. Both data points are useful; neither is sufficient alone.

How does AI scoring handle non-native English speakers fairly?

This is one of the most actively developed areas in async interview technology. The current best practice involves training AI scoring models on linguistically diverse candidate populations, calibrating against human expert ratings across accent and language backgrounds, and using semantic analysis (meaning and structure) rather than phonetic or vocabulary-frequency scoring that correlates with native speaker status. Organizations using off-the-shelf AI scoring should explicitly verify their vendor’s approach to this before deploying at scale.

Is an async interview appropriate for senior-level roles?

Yes, with adjusted design. Senior candidates benefit from longer preparation windows, fewer but deeper questions, and framing that positions the interview as a mutual exploration rather than a screening gate. A well-designed async interview for a VP-level role feels qualitatively different from an entry-level screening format, and should. The methodology scales across levels; the calibration needs to reflect the seniority of the audience.

Conclusion

The asynchronous interview is what happens when a hiring process finally stops pretending that the interview is a conversation and acknowledges that, at the early funnel stage, it’s actually an evaluation. One side is assessing; the other side is being assessed. The live phone screen blurs that reality with small talk, scheduling friction, and the social performance of mutual politeness. The async interview is more honest and, perhaps counterintuitively, more respectful of everyone’s time as a result.

For candidates, it means a genuine opportunity to be heard at their best, on a schedule that fits their life, answering questions they’ve had time to think about. For TA teams, it means a scalable, structured, evidence-rich alternative to a process that was consuming hundreds of hours and producing inconsistent results. For organizations, it means a faster path to the people they’re actually looking for, which, in 2026’s talent market, is the only competitive advantage that compounds.

The future of early-funnel screening isn’t a better phone call. It’s the elimination of the phone call as a screening instrument, replaced by a format that generates better data in less time with less friction on both sides. The organizations that have made that transition aren’t going back. The ones that haven’t yet are leaving quality and speed on the table with every hiring cycle.