Picture a Monday morning in a mid-size technology company. A product manager role went live on Friday. By the time the recruiter opens their laptop, there are 340 applications in the queue. The hiring manager needs a shortlist of eight by Wednesday. There is one recruiter on this role. The math does not work unless something in the process does the first pass automatically.

That something is automated screening.

Automated screening is the use of technology to evaluate, filter, and rank job applicants against predefined criteria without requiring a human to manually review every application. In its earliest form, it was a keyword-matching exercise in an Applicant Tracking System. In 2026, it is a multi-layered AI process that parses resumes, scores responses to pre-screening questions, evaluates asynchronous interview recordings, flags behavioral signals, and delivers a ranked shortlist to a recruiter who never had to read a single unqualified application to get there.

For organizations operating at volume, automated screening is not a nice-to-have. It is the only mechanism that makes high-quality, consistent early-funnel evaluation possible at scale. AI-powered hiring platforms have transformed what was once a blunt instrument into a precise one: capable of identifying candidates who would have been filtered out by a keyword mismatch in a legacy ATS, while simultaneously catching misrepresentation and misalignment that a hurried human reviewer would have missed.

The headline metric governing this function is the Screening Precision Rate (SPR): the proportion of candidates who pass automated screening and are subsequently confirmed as genuinely qualified by a human recruiter. In organizations using first-generation keyword-based ATS screening, SPR averages around 42%. In organizations using AI-powered multi-signal screening, it consistently exceeds 78%. That gap represents hundreds of hours of recruiter time recovered, and a meaningfully better candidate experience for the applicants on the right side of the filter.

What is Automated Screening?

Automated screening is a technology-driven process that assesses job applicants against role-specific criteria at the earliest stages of the hiring funnel, reducing the candidate pool from the full applicant volume to a qualified shortlist without manual review of every individual application.

The process typically operates across three layers. The first is structural matching: does the candidate’s stated experience, location, qualifications, and availability align with the minimum requirements of the role? The second is behavioral and competency signal evaluation: do their responses to pre-screening questions, assessment scores, or asynchronous interview recordings indicate the potential the role demands? The third is ranking and prioritization: of the candidates who pass the first two layers, which ones are most likely to be strong fits based on the full combination of available signals?

In 2026, the most effective automated screening systems do not operate as binary pass-fail gates. They operate as continuous scoring engines that maintain a ranked, updatable shortlist as new applications arrive, giving recruiters real-time visibility into where the pipeline stands without waiting for a manual review batch to complete.
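The three layers and the continuous-scoring idea can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the `Candidate` fields, the signal names, and the weights in `WEIGHTS` are all hypothetical, and a real system would calibrate weights against role performance data.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    name: str
    meets_minimums: bool  # layer 1: outcome of structural matching
    signal_scores: dict = field(default_factory=dict)  # layer 2: 0.0-1.0 per signal


# Hypothetical weights, chosen only for illustration.
WEIGHTS = {"pre_screen": 0.4, "assessment": 0.35, "async_interview": 0.25}


def composite_score(candidate: Candidate) -> float:
    """Layer 2: weighted average over whichever signals are available so far."""
    available = {k: w for k, w in WEIGHTS.items() if k in candidate.signal_scores}
    if not available:
        return 0.0
    total_weight = sum(available.values())
    weighted = sum(candidate.signal_scores[k] * w for k, w in available.items())
    return weighted / total_weight


def ranked_shortlist(candidates: list[Candidate], size: int) -> list[tuple[str, float]]:
    """Layer 3: rank everyone who cleared layer 1 and return the top `size`."""
    eligible = [c for c in candidates if c.meets_minimums]
    scored = [(c.name, round(composite_score(c), 3)) for c in eligible]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:size]
```

Calling `ranked_shortlist` again whenever a new application or a new signal arrives gives the updatable ranking described above; no batch has to finish before the recruiter sees the current order.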

Is Automated Screening a Productivity Tool or a Bias Machine?

This is the question that has followed automated screening since it graduated from keyword matching to machine learning, and it deserves a direct answer.

Automated screening built on the wrong foundations is absolutely a bias machine. The most documented failure mode is training a model on historical hire data from a workforce that was already demographically skewed. The model learns that certain universities, certain job title sequences, and certain language patterns correlate with “good hires,” then screens accordingly. The problem is that historical “good hires” reflect the decisions of managers who may have been operating with their own biases intact. The model does not remove human bias from screening; it automates and scales it.

This is not a theoretical risk. High-profile examples from the 2018 to 2022 period involved automated screening systems that systematically disadvantaged women, candidates from non-Western universities, and applicants whose resumes contained employment gaps that correlated with caregiving. In each case, the model was doing exactly what it was trained to do. The problem was what it was trained on.

The version of automated screening that actually delivers on its promise is built differently. It is trained on role performance data, not hire data. It evaluates candidates against what predicts success in the role, not what predicts similarity to previous hires. It is audited regularly for adverse impact. And it is designed as a ranking and prioritization tool rather than an automatic rejection mechanism, keeping human judgment in the loop for every consequential decision.

Companies using AI-driven automated screening built on these principles report an average reduction in time-to-shortlist of 71%, alongside a measurable improvement in shortlist diversity compared to manual review processes. The manual review process, it turns out, introduces its own bias through inconsistent criteria application, reviewer fatigue, and the tendency to favor applicants whose backgrounds feel personally familiar to the reviewer.

For TA leaders, the strategic frame is this: automated screening done well is a bias reduction tool, not a bias amplification tool. A recruiter who has been reading applications for four hours on a Tuesday afternoon is not producing unbiased results. They are producing tired results. Consistent, criteria-driven automated evaluation, properly designed and regularly audited, outperforms inconsistent human review on both accuracy and fairness metrics.

Consider the scenario that makes this tangible. A logistics company posts a warehouse operations supervisor role and receives 280 applications in 72 hours. Analysis of past hiring cycles showed the recruiter’s shortlists overrepresented candidates from a specific geographic area and underrepresented candidates with non-linear career histories, not through deliberate intent but through cognitive shortcuts applied under time pressure.

An AI-powered automated screening system, configured against actual performance competencies, produced a shortlist of twelve in four hours. Seven of those twelve were candidates the manual process would not have surfaced. Three received offers. All three are still with the company two years later.

The ROI is concrete. If a company hires 200 people per year and automated screening reduces recruiter screening time per hire from six hours to one and a half hours, the recovered capacity at $60 per recruiter hour is $54,000 annually, before accounting for quality improvements and faster time-to-hire.
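The arithmetic behind that figure is simple enough to reproduce for your own numbers. The function name `recovered_capacity` is hypothetical; the inputs are the ones quoted in the paragraph above.

```python
def recovered_capacity(hires_per_year: int, hours_before: float,
                       hours_after: float, hourly_cost: float) -> tuple[float, float]:
    """Return (recruiter hours recovered per year, dollar value of those hours)."""
    hours_saved = hires_per_year * (hours_before - hours_after)
    return hours_saved, hours_saved * hourly_cost


hours, dollars = recovered_capacity(
    hires_per_year=200, hours_before=6.0, hours_after=1.5, hourly_cost=60
)
print(f"{hours:.0f} hours recovered, worth ${dollars:,.0f} per year")
```

With the article's inputs this works out to 900 recruiter hours, or $54,000, per year.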

The Technology Behind Automated Screening

Automated screening in 2026 is not a single technology. It is a stack of complementary tools, each handling a different signal type in the early-funnel evaluation process.

Resume Parsing and Semantic Matching

The foundation layer of any automated screening system is resume parsing: the extraction of structured data from the unstructured text of a resume or CV. In legacy ATS systems, this was achieved through keyword matching, which produced two well-documented failure modes. False negatives occurred when qualified candidates used different terminology from the job description (writing “people management” where the JD said “team leadership”). False positives occurred when candidates who had learned to keyword-stuff their resumes passed filters they should not have.

Modern semantic matching uses NLP to evaluate meaning rather than literal term presence. It recognizes that “led a team of six engineers” and “line management of a six-person technical team” are substantively equivalent, and that “proficiency in Python” and “Python developer since 2019 with production deployment experience” represent meaningfully different levels of depth. This is a significant improvement, but well-designed job requirements on the input side remain essential. Garbage in, garbage out is still the operative principle regardless of how sophisticated the matching engine is.
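A toy comparison makes the false-negative problem concrete. The sketch below is not real semantic matching: it stands in for an NLP model with a hand-written table of equivalent phrases (`EQUIVALENT_PHRASES`, a hypothetical name), purely to show why literal matching misses a qualified candidate.

```python
import re

# Hypothetical equivalence table. A production system would use an NLP model
# to judge meaning rather than a fixed list like this.
EQUIVALENT_PHRASES = {
    "team leadership": {"team leadership", "people management", "line management"},
}


def keyword_match(resume: str, required_phrase: str) -> bool:
    """Legacy-style check: the literal phrase must appear in the resume text."""
    return required_phrase.lower() in resume.lower()


def normalized_match(resume: str, required_phrase: str) -> bool:
    """Toy stand-in for semantic matching: any equivalent phrase counts."""
    key = required_phrase.lower()
    variants = EQUIVALENT_PHRASES.get(key, {key})
    text = re.sub(r"\s+", " ", resume.lower())  # collapse line breaks and extra spaces
    return any(variant in text for variant in variants)


resume = "Five years of people management across two engineering teams."
print(keyword_match(resume, "team leadership"))     # literal check
print(normalized_match(resume, "team leadership"))  # equivalence-aware check
```

The literal check rejects this resume while the equivalence-aware check accepts it, which is the "people management" versus "team leadership" gap described above.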

Structured Pre-Screening Questions

The second layer of automated screening is a structured question set that candidates complete alongside their application. These questions may be disqualifying (right to work, required certification) or differentiating (experience with a relevant technology, response to a situational prompt). AI systems evaluate differentiating responses for content quality, relevance, specificity, and the presence of indicators associated with strong role performance.

The design quality of these questions is the most frequently underestimated variable in screening program performance. A disqualifying question that inadvertently screens out protected groups is a legal and reputational liability. A differentiating question that is too vague produces responses that cannot be meaningfully evaluated. And a question set that is too long reduces completion rates, biasing the remaining pool toward candidates with more available time rather than more relevant experience.

AI-Powered Assessment Integration

The most significant development in automated screening over the past three years has been the integration of aptitude tests, situational judgment tests, and short-form asynchronous video responses directly into the screening workflow. Rather than treating assessment as a separate stage triggered after initial resume review, AI-powered platforms now fire the appropriate assessment automatically the moment an application meets baseline structural criteria, incorporating the results into the overall screening score in real time. This compresses what was previously a multi-week, multi-step process into a single candidate-side experience taking 20 to 35 minutes.

Behavioral Signal Analysis

The leading edge of automated screening is behavioral signal analysis: evaluating how candidates engage with the screening process itself, not just what they submit through it. Response time patterns, question abandonment rates, revision behavior in written responses, and engagement consistency across a multi-question application all carry secondary signal about candidate motivation and attention to detail. These signals adjust ranking scores within the pool of candidates who have already met the primary content criteria, rather than serving as standalone filters.

Automated Screening vs. Other Early-Funnel Hiring Tools

Automated screening is most powerful when it is understood as one component within a broader early-funnel architecture:

| Tool | Primary Function | Relationship to Automated Screening |
|---|---|---|
| ATS (Applicant Tracking System) | Application collection and workflow management | The infrastructure layer; automated screening runs within or alongside it |
| Resume Screening | Structural qualification filter | The most basic form of automated screening; modern systems go significantly further |
| Pre-Screening Questions | Disqualification and differentiation | A key input signal into the automated screening score |
| Aptitude Testing | Cognitive ability measurement | Integrated into advanced screening workflows as a scored signal |
| Asynchronous Interview | Communication and motivation evaluation | The richest signal available in automated early-funnel screening |
| Structured Interview | Deep competency evaluation | Post-shortlist; automated screening determines who reaches this stage |

The practical implication: organizations that treat automated screening as synonymous with ATS keyword filtering are using approximately 20% of the available capability. The remainder is being left on the table in the form of inconsistent shortlists, longer time-to-hire, and qualified candidates who fall through keyword-matching gaps.

What the Experts Say

The best automated screening systems do not try to predict who will get hired. They try to predict who will perform. That is a fundamentally different objective, and the distinction determines whether the system helps or harms your talent strategy.

– Liz Ryan, Founder of Human Workplace and former Fortune 500 HR executive

How to Measure Automated Screening Effectiveness

Measuring the performance of an automated screening program requires tracking signals at multiple points in the subsequent funnel, not just at the moment of shortlist delivery.

Formula

Screening Precision Rate (SPR) = (Candidates confirmed qualified after human review ÷ Candidates passed by automated screening) × 100

A Screening Precision Rate below 60% indicates that the automated filter is too permissive: too many candidates are passing who should not be advancing, and recruiter review time is being consumed on unqualified shortlists. A rate above 85% suggests the filter may be too restrictive and is likely excluding qualified candidates who would have been worth reviewing. The target range for most roles sits between 70% and 82%.
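The formula and the thresholds translate directly into a small check. This is a sketch with hypothetical function names. The article does not label the bands between 60% and 70% or between 82% and 85%, so the code reports those as outside the target range without calling them a breach.

```python
def screening_precision_rate(passed_by_screen: int, qualified_after_review: int) -> float:
    """SPR as a percentage: confirmed-qualified candidates over screen passes."""
    if passed_by_screen <= 0:
        raise ValueError("passed_by_screen must be positive")
    if not 0 <= qualified_after_review <= passed_by_screen:
        raise ValueError("qualified_after_review must be between 0 and passed_by_screen")
    return 100 * qualified_after_review / passed_by_screen


def interpret_spr(spr: float) -> str:
    """Map an SPR value onto the bands described in the text."""
    if spr < 60:
        return "too permissive"
    if spr > 85:
        return "possibly too restrictive"
    if 70 <= spr <= 82:
        return "within target range"
    # Assumption: the source does not name these in-between bands.
    return "outside target range, no threshold breached"


spr = screening_precision_rate(passed_by_screen=50, qualified_after_review=39)
print(spr, interpret_spr(spr))
```

In this example, 39 confirmed-qualified candidates out of 50 screen passes gives an SPR of 78%, inside the target range.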

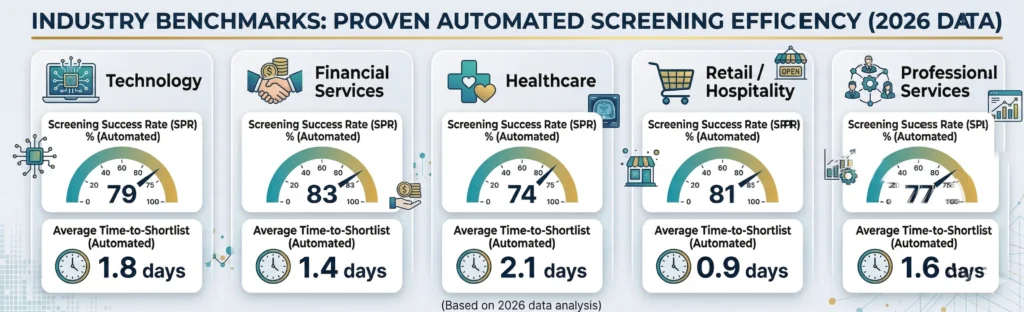

Benchmarks by Industry (2026 Data)

| Industry | Average SPR (Legacy ATS) | Average SPR (AI-Optimized) | Avg. Time-to-Shortlist |
|---|---|---|---|
| Technology | 41% | 79% | 1.8 days |
| Financial Services | 48% | 83% | 1.4 days |
| Healthcare | 38% | 74% | 2.1 days |
| Retail / Hospitality | 52% | 81% | 0.9 days |
| Professional Services | 44% | 77% | 1.6 days |

The technology sector’s relatively lower AI-optimized SPR compared to financial services reflects the broader variance in how technology roles are described and the inconsistency in how technical experience is documented across candidate pools. This is a job description quality problem as much as a screening technology problem.

Key Strategies for Improving Automated Screening ROI

Improving automated screening ROI is not a single initiative. It is a system of interventions operating across the full screening workflow. The organizations that move their results meaningfully do so by addressing multiple leverage points simultaneously.

How AI Advances Automated Screening Beyond the ATS

The gap between what a legacy ATS delivers and what an AI-powered screening platform delivers is not incremental. It is categorical.

Contextual Resume Evaluation

Where ATS keyword matching asks “does this resume contain the word Python,” AI contextual evaluation asks “does this person’s Python experience, in the context of their overall technical background and the types of projects they describe, suggest they can handle the Python requirements of this specific role?” The distinction matters most at the margins of a candidate pool, which is precisely where the highest-quality candidates who do not fit the conventional pedigree profile tend to live.

Dynamic Criteria Calibration

AI-powered screening systems can recalibrate their scoring criteria in real time as the applicant pool takes shape. If a role specification assumes a level of experience that the available candidate market does not support, a dynamic calibration system flags this early, allowing the hiring team to adjust criteria before weeks have passed and the pipeline has stalled. This eliminates one of the most common and expensive failure modes in high-volume recruiting.
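One simple way to picture the early-warning part of this is a per-criterion pass-rate check over the applications received so far. The sketch below is illustrative only: the criterion names, the `min_sample` of 30 applications, and the 10% `min_pass_rate` are hypothetical values, not figures from any platform.

```python
def criteria_pass_rates(applications: list[dict[str, bool]]) -> dict[str, float]:
    """Share of applicants so far who meet each criterion."""
    if not applications:
        return {}
    criteria = applications[0].keys()
    return {c: sum(app[c] for app in applications) / len(applications) for c in criteria}


def criteria_to_review(applications: list[dict[str, bool]],
                       min_sample: int = 30,
                       min_pass_rate: float = 0.10) -> list[str]:
    """Flag criteria that almost nobody in the current pool satisfies.

    Returns nothing until `min_sample` applications exist, so a handful of
    early applicants cannot trigger a recalibration conversation.
    """
    if len(applications) < min_sample:
        return []
    rates = criteria_pass_rates(applications)
    return sorted(c for c, rate in rates.items() if rate < min_pass_rate)


# 40 applications; only the first two meet the experience requirement.
pool = [{"five_years_experience": i < 2, "right_to_work": True} for i in range(40)]
print(criteria_to_review(pool))
```

A flagged criterion is a prompt for the hiring team to revisit the requirement, not an instruction to loosen it automatically.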

Cross-Role Pattern Recognition

Organizations that have been using AI-powered screening for multiple hiring cycles accumulate a valuable asset: a dataset connecting early-funnel screening signals to post-hire performance outcomes across multiple roles and cohorts. AI systems identify cross-role patterns in this data, recognizing that a particular combination of pre-screening response characteristics predicts strong performance not just in the calibrated role, but in adjacent roles with similar underlying competency demands. The screening program becomes a continuous learning system rather than a static filter.

Real-Time Pipeline Health Monitoring

Advanced automated screening platforms provide TA leaders with a live view of pipeline quality: how many applications have been received, what percentage are meeting the screening threshold, where candidates are dropping out, and how the current pipeline compares to historical benchmarks. This real-time visibility allows sourcing strategy adjustments within days rather than weeks, preventing the scenario where a hiring team discovers at week four that their pipeline has been thin since week one.

Automated Screening and Diversity and Inclusion

Automated screening’s relationship with diversity and inclusion is the most scrutinized dimension of the technology, and rightfully so. Poorly designed automated screening can entrench inequity at scale with a speed and consistency that no human reviewer could match. Well-designed automated screening can surface talent that biased human review consistently misses.

The Algorithmic Bias Problem

The risk is worth naming clearly. Automated screening models trained on historical hiring data from organizations with homogeneous workforces will reproduce the screening patterns that produced those workforces. If the organization historically hired primarily from five universities, the model learns that pedigree is a positive signal.

If it historically passed over candidates with employment gaps, the model learns gaps are negative. Neither pattern necessarily correlates with job performance, but both will be applied consistently and at scale until someone audits the outputs and intervenes. The solution is to train models on performance outcomes rather than hiring decisions, conduct regular adverse impact analysis, and build human review checkpoints for candidates who were screened out.
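An adverse impact analysis starts from pass rates by group. The sketch below computes each group's selection rate relative to the highest-rate group and flags ratios under 0.8. That threshold is the commonly used "four-fifths" rule of thumb, which the article itself does not specify, so treat it as an assumption; the group labels and counts are invented for illustration.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """`outcomes` maps group -> (passed_screen, applied)."""
    return {g: passed / applied for g, (passed, applied) in outcomes.items() if applied > 0}


def adverse_impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    top = max(rates.values(), default=0.0)
    if top == 0:
        return {g: 1.0 for g in rates}  # nobody passed, so no disparity to measure
    return {g: rate / top for g, rate in rates.items()}


def groups_below_threshold(outcomes: dict[str, tuple[int, int]],
                           threshold: float = 0.8) -> list[str]:
    """Groups whose ratio falls under the threshold (assumed: four-fifths rule)."""
    ratios = adverse_impact_ratios(outcomes)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Invented counts for illustration only.
audit = {"group_a": (60, 200), "group_b": (20, 100)}
print(adverse_impact_ratios(audit))
print(groups_below_threshold(audit))
```

A flagged group is a signal to investigate the criteria and the screened-out candidates, which is where the human review checkpoint comes in.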

Removing Affinity Bias from Early Screening

Human reviewers demonstrate well-documented affinity bias: the tendency to rate candidates with similar backgrounds more favorably than equally qualified candidates with different profiles. Automated screening, when calibrated against role performance criteria rather than biographical similarity, removes this mechanism from the evaluation. A recruiter who graduated from a particular university does not bring that affiliation to an AI scoring system. The field is leveled, at least to the degree that the scoring criteria themselves are genuinely role-relevant.

Designing for Accessibility

Automated screening interfaces must be accessible to candidates with disabilities, limited bandwidth, or non-standard devices. Pre-screening question formats should accommodate candidates who cannot type at speed or use video. Assessment tools integrated into the screening workflow must offer alternative response modes where needed. These are baseline design requirements for a program that does not systematically exclude protected groups before they have had the opportunity to demonstrate their qualifications.

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| High False Negative Rate | Shift from keyword matching to semantic NLP evaluation; audit rejected candidates periodically to identify systematic gaps |
| Candidate Drop-off in Screening Flow | Reduce question count, improve mobile optimization, and provide a clear time estimate at the start of the process |
| Adverse Impact on Underrepresented Groups | Conduct quarterly demographic pass-rate audits; recalibrate scoring criteria against performance rather than historical hire profiles |
| Outdated Screening Criteria | Schedule bi-annual reviews of screening criteria with hiring managers; treat the job requirement as a living document |
| Over-reliance on Automation | Build human review checkpoints for borderline candidates; never allow automated screening to serve as the sole decision-maker for rejections |

Real-World Case Studies

Case Study 1: The Insurance Group

A national insurance group was processing 1,800 applications per month across 40 branch locations for entry-level customer service roles. Their manual screening process required a team of four recruiters spending 60% of their working hours on initial resume review, producing shortlists that branch managers consistently rated as “inconsistent in quality.” They implemented an AI-powered screening platform that evaluated applications across seven pre-screening questions, a ten-minute cognitive aptitude assessment, and a two-question asynchronous video response.

Recruiter time spent on initial screening dropped by 67%. Branch manager satisfaction with shortlist quality rose from 52% to 81% within three hiring cycles. First-year attrition among screened hires fell by 19%, because the screening process was now evaluating role-fit signals rather than keyword density.

Case Study 2: The Global Technology Firm

A technology company with engineering teams across nine countries was struggling with two simultaneous problems: a pipeline that was taking an average of 31 days to reach shortlist stage, and a diversity target that had been stagnant for three years. Analysis of their existing ATS screening data revealed that 74% of candidates screened out at the first stage were from non-Western universities, despite no formal exclusion of those institutions in the screening criteria.

The model had learned the pattern from historical hiring data. They rebuilt their screening logic from scratch, trained on a dataset of current employee performance ratings rather than hiring decisions, and introduced a blind first-stage screen that removed university name from the scoring algorithm. Time-to-shortlist dropped from 31 days to 9 days. The proportion of underrepresented candidates reaching the structured interview stage increased by 34% within six months.

Case Study 3: The Healthcare Network

A regional healthcare network needed to fill 150 nursing and allied health positions within a 60-day window following a rapid expansion of its outpatient services. Their existing manual screening process had a documented throughput capacity of approximately 40 positions per 60-day period, which meant the expansion timeline was not achievable without either hiring additional recruiters or changing the screening model. They implemented avua’s automated screening platform with role-specific criteria sets for each of the four position types being recruited.

The platform processed 2,400 applications, delivered ranked shortlists for all 150 positions, and flagged 340 candidates for priority recruiter review within 11 days. All 150 positions were filled within the 60-day target. Recruiter overtime during the campaign was zero, compared to an average of 140 overtime hours in previous high-volume campaigns.

Building an Automated Screening Dashboard: What to Track?

An automated screening program without measurement infrastructure is a black box. The metrics that make it transparent and improvable:

Automated Screening Across the Candidate Lifecycle

Automated screening is most commonly associated with the application stage, but its capabilities extend further across the talent journey.

Sourcing-Stage Screening

AI-powered sourcing tools now apply automated screening logic to passive candidate databases and social profiles before a formal application exists. By scoring potential candidates against role criteria and ranking outreach targets by predicted fit, these tools allow recruiters to focus their sourcing effort on candidates most likely to convert, rather than treating the entire reachable database as equally worth pursuing.

Application-Stage Screening

The primary deployment: evaluating submitted applications against role criteria to produce a ranked, qualified shortlist. This is where the efficiency and consistency benefits of automated screening are most directly measured and most directly felt by the hiring team.

Redeployment and Internal Mobility Screening

Organizations with mature automated screening programs apply the same logic to internal mobility: when a new role opens, the system automatically evaluates the existing employee database against the role criteria and surfaces internal candidates who meet the threshold. This reduces external hiring for roles that could be filled internally, improves retention by creating visible career progression pathways, and typically produces faster time-to-fill than external sourcing.

Post-Offer Verification Screening

A final application: using automated tools to verify the accuracy of information provided during the application and screening process. This is distinct from background checking and focuses on confirming that qualifications, experience, and certifications claimed during screening are accurately represented. Automated verification tools flag discrepancies for human investigation before onboarding begins, reducing the risk of post-offer rescissions and the disruption they create for both parties.

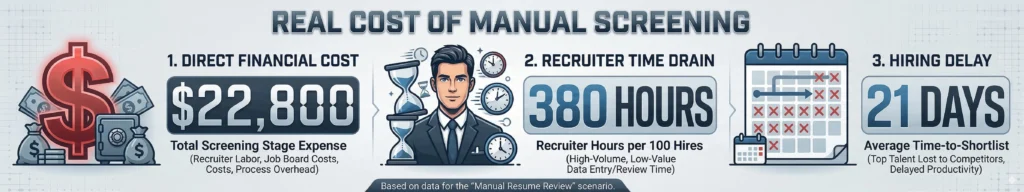

The Real Cost of Manual Screening: By the Numbers

| Scenario | Recruiter Hours per 100 Hires | Time-to-Shortlist | Screening Stage Cost |
|---|---|---|---|
| Manual Resume Review | 380 hours | 21 days | $22,800 |
| ATS Keyword Screening | 190 hours | 12 days | $11,400 |
| AI Automated Screening (avua) | 55 hours | 3 days | $3,300 |

The $19,500 gap between manual review and AI-powered automated screening at 100 hires per year represents recoverable recruiter capacity. At scale, that capacity can be redirected toward the high-judgment activities that actually require human expertise: building relationships with finalists, providing candidate feedback, partnering with hiring managers on role design, and running structured interviews that produce better hiring decisions. Manual screening is not just expensive in dollar terms. It is expensive in the opportunity cost of what your recruiting team could be doing instead.
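The cost column follows from the hours column at a flat hourly rate. The table does not state the rate, but every row is consistent with $60 per recruiter hour, the same figure used in the earlier ROI example, so the sketch below assumes that rate.

```python
HOURLY_COST = 60  # assumed rate in dollars; inferred from the table's hours and costs

# Recruiter hours per 100 hires, taken from the table above.
HOURS_PER_100_HIRES = {
    "Manual Resume Review": 380,
    "ATS Keyword Screening": 190,
    "AI Automated Screening": 55,
}


def screening_cost(hours: float, hourly_cost: float = HOURLY_COST) -> float:
    """Screening-stage cost for a given number of recruiter hours."""
    return hours * hourly_cost


costs = {scenario: screening_cost(hours) for scenario, hours in HOURS_PER_100_HIRES.items()}
gap = costs["Manual Resume Review"] - costs["AI Automated Screening"]

for scenario, cost in costs.items():
    print(f"{scenario}: ${cost:,.0f}")
print(f"Manual vs. AI gap: ${gap:,.0f}")
```

Swapping in your own hourly cost and hours per 100 hires gives a comparable estimate for your organization.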

Related Terms

| Term | Definition |
|---|---|
| Applicant Tracking System (ATS) | The software platform that collects, stores, and manages applications; the infrastructure within which automated screening often operates |
| Screening Precision Rate (SPR) | The proportion of automated screen-pass candidates confirmed as genuinely qualified by subsequent human review |
| Adverse Impact Ratio | The ratio of selection rates across demographic groups, used to identify potential discriminatory effects in screening criteria |
| Semantic Matching | NLP-based resume evaluation that assesses meaning and context rather than literal keyword presence |
| Boolean Search | A structured search technique using logical operators to identify candidates meeting specific criteria within talent databases |
| Pre-Screening Questions | Structured questions completed by candidates as part of the application, used as scored inputs into the automated screening evaluation |

Frequently Asked Questions

Does automated screening eliminate the need for recruiters?

No. Automated screening eliminates the need for recruiters to manually read every application, which is a rote task that adds limited judgment value. It does not eliminate the need for recruiters to evaluate shortlisted candidates, manage the interview process, handle offer negotiations, or make the judgment calls that determine hiring outcomes. The recruiter’s role shifts from volume processing to quality evaluation.

How does automated screening handle non-traditional candidates?

This depends entirely on how the screening criteria are configured. Systems built around keyword matching and pedigree proxies will consistently disadvantage non-traditional candidates. Systems designed around role performance predictors, with semantic NLP evaluation rather than literal matching, can identify non-traditional candidates who meet the substantive criteria of a role even when their background does not follow the conventional path.

What is the typical completion rate for automated screening processes?

Well-designed flows with a total candidate time investment of 20 to 30 minutes and clear progress indicators achieve completion rates of 68 to 74%. Poorly designed flows with no time estimate and excessive question volume see completion rates below 40%, which introduces a systematic bias toward candidates who are not currently employed and have more available time.

Can candidates game automated screening systems?

Keyword stuffing is a known issue. Modern AI-powered systems counter this through semantic evaluation that values specificity and context over keyword frequency. Incorporating timed assessments and asynchronous interview recordings into the screening workflow adds signals that are significantly harder to game than text-based application forms.

How often should automated screening criteria be updated?

At minimum, screening criteria should be reviewed at the start of each new hiring campaign for a given role, and comprehensively audited bi-annually across all active role types. A screening program running on static criteria not reviewed in 18 months is not evaluating the current role. It is evaluating a historical memory of it.

Conclusion

Automated screening is not the enemy of thoughtful hiring. It is the enabler of it. When the rote, volume-dependent work of initial candidate filtering is handled consistently, quickly, and with measurable precision by an AI system, the humans in the hiring process are freed to do the work that actually requires human judgment: evaluating potential, reading cultural fit, making sense of non-linear careers, and building the candidate relationships that turn good offers into accepted ones.

The organizations that treat automated screening as a replacement for quality in the early funnel will produce exactly what you would expect from a system that was asked to do the wrong job. The organizations that treat it as the infrastructure that makes quality possible at scale are the ones whose recruiters arrive on Monday morning, see 340 applications in the queue, and know with confidence that by Wednesday they will have a shortlist worth a hiring manager’s time.