There’s a version of hiring that goes something like this: read a resume, conduct a 30-minute interview, trust your gut, make an offer. It’s fast, it’s familiar, and it’s wrong about 46% of the time. The Assessment Center (AC) exists precisely because that process, charming as it is in its simplicity, produces expensive mistakes at scale.

An Assessment Center is a comprehensive, multi-method evaluation process in which candidates perform a variety of standardized exercises (simulations, group tasks, structured interviews, case studies, and written exercises), each observed simultaneously by multiple trained assessors, to determine their suitability for a role. The defining features are breadth and deliberateness: no single exercise decides a candidate’s fate, and no single assessor’s impression goes unchecked.

In 2026, the methodology has migrated decisively from boardroom to browser. AI-powered hiring platforms have transformed what was once a two-day, hotel-ballroom exercise into a scalable, data-rich digital experience that companies of any size can deploy. The result is a hiring signal that goes far deeper than a resume and far wider than a structured interview, capturing the “soft skill” data points that single-layer tests systematically miss and producing a holistic view of candidate potential that holds up under scrutiny.

The core metric that validates this method is the Assessment Center Validity Coefficient ($V_c$): the statistical correlation between AC performance and long-term job success. Consistently among the highest validity coefficients in the recruitment toolkit, it’s the reason organizations serious about quality-of-hire keep returning to this methodology regardless of how much the format evolves.
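A validity coefficient like $V_c$ is typically computed as a Pearson correlation between candidates’ AC scores and a later measure of job performance for the same people. The minimal sketch below assumes you already have those paired scores; the function name and the sample values are hypothetical and only illustrate the calculation.

```python
from math import sqrt


def pearson_r(ac_scores: list[float], performance: list[float]) -> float:
    """Pearson correlation between AC scores and later job-performance ratings."""
    if len(ac_scores) != len(performance) or len(ac_scores) < 2:
        raise ValueError("need two equal-length lists with at least two pairs")
    n = len(ac_scores)
    mean_x = sum(ac_scores) / n
    mean_y = sum(performance) / n
    # Covariance numerator and the two sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(ac_scores, performance))
    var_x = sum((x - mean_x) ** 2 for x in ac_scores)
    var_y = sum((y - mean_y) ** 2 for y in performance)
    if var_x == 0 or var_y == 0:
        raise ValueError("scores must vary to compute a correlation")
    return cov / sqrt(var_x * var_y)


# Hypothetical data: AC scores (0-100) and 12-month performance ratings (1-5).
ac = [62, 75, 81, 58, 90, 70]
perf = [3.1, 3.6, 4.2, 2.9, 4.5, 3.3]
print(round(pearson_r(ac, perf), 2))
```

A coefficient computed on a handful of hires is noisy; in practice you would wait for a larger cohort before treating it as a stable estimate.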

What is an Assessment Center?

An Assessment Center is a holistic recruitment methodology that uses a battery of performance-based exercises to evaluate a candidate’s competencies and behavioral traits in simulated work environments.

The key distinction from a conventional interview or aptitude test is behavioral consistency across contexts. A structured interview tells you how a candidate talks about handling pressure. An Assessment Center puts them in a simulated high-pressure scenario and observes what they actually do. It captures performance across verbal, social, and analytical dimensions (the full range of competencies the role demands) rather than relying on any single data point.

Are Traditional Assessment Centers Dying, or Just Being Reborn in the Cloud?

Let’s be honest about what the “Old World” Assessment Center actually involved. You’d book a conference facility, brief six senior managers to spend two days as assessors, fly in candidates from multiple cities, print scoring sheets, and then spend the following week collating handwritten notes into a coherent hiring decision. The process was expensive, exhausting, slow, and, despite its validity, accessible only to organizations with the budget to absorb all of the above.

The signal was excellent. The delivery mechanism was a logistical nightmare.

What’s happened since isn’t really the death of the Assessment Center. It’s a format correction: the core methodology has survived intact while everything scaffolding it is rebuilt for the modern operating environment. AI-enabled ACs use VR simulations, real-time sentiment analysis, automated behavioral tagging, and instant multi-dimensional scoring.

A process that previously required three weeks of coordination now runs in 90 minutes on a laptop. Companies transitioning to AI-led Assessment Centers have seen a 65% reduction in cost-per-hire while simultaneously increasing predictive accuracy, a combination that’s essentially unheard of in recruitment technology.

For Talent Acquisition leaders, the critical reframe is this: the Assessment Center is the “Gold Standard” signal in hiring because it’s the only method that proves a candidate can do the job, not just narrate that they’ve done something vaguely similar in the past. Every other tool in the recruitment toolkit (aptitude tests, structured interviews, work samples) produces an inference. The AC produces an observation.

Consider a scenario that plays out in hiring pipelines every day. A candidate applies for a senior operations role. Her resume is non-linear: a career change in her late 20s, a stint at a company that went under, a gap year she used to build a side business. She fails the initial AI resume screen because the algorithm weights tenure and pedigree, not trajectory.

But in a virtual AC simulation (managing a cascading supplier crisis with live data inputs and a ticking clock), she’s the only candidate who isolates the root cause, communicates proactively with stakeholders, and resolves the scenario without waiting to be prompted. The resume said “risky hire.” The AC said “exactly who you need.”

The business case for doing this well, and the cost of continuing to do it wrong, is not subtle. If a firm hires 50 executives annually and an AI-powered AC reduces senior-level turnover by just 15%, the saved replacement costs (typically estimated at 200% of annual salary for executive roles) can exceed $1.5 million annually. That’s the math that moves Assessment Centers from an HR conversation to a CFO conversation.
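The arithmetic behind that estimate can be made explicit. The sketch below reads “reduces turnover by 15%” as 15% of the annual executive hiring cohort no longer leaving (one possible interpretation), uses the 200%-of-salary replacement estimate, and assumes a hypothetical average executive salary of $100,000. The function name and inputs are illustrative; swap in your own figures.

```python
def replacement_savings(
    hires_per_year: int,
    turnover_reduction: float,
    avg_salary: float,
    replacement_cost_multiple: float = 2.0,
) -> float:
    """Annual replacement cost avoided when fewer hires turn over.

    Assumes turnover_reduction is the share of the annual hiring cohort
    that no longer leaves, and that each departure costs
    replacement_cost_multiple * avg_salary to replace.
    """
    avoided_departures = hires_per_year * turnover_reduction
    return avoided_departures * replacement_cost_multiple * avg_salary


# 50 executive hires, 15% fewer departures, hypothetical $100,000 average salary.
savings = replacement_savings(50, 0.15, 100_000)
print(f"${savings:,.0f}")  # 7.5 avoided departures * $200,000 each = $1,500,000
```

At a $100,000 average salary the savings land at exactly $1.5 million, so any higher average salary pushes them past that figure.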

The Psychology Behind the Assessment Center

What makes Assessment Centers both powerful and tricky to design is that they’re not just measurement instruments; they’re social environments. The moment you put a candidate in an observed situation, psychology starts operating on the data in ways that require deliberate countermeasures.

The Observer Effect (Hawthorne Effect)

Candidates behave differently when they know they’re being evaluated. That’s not a character flaw; it’s a basic feature of conscious social performance. AI-moderated scoring mitigates this in a specific way: candidates assessed primarily by AI systems report significantly lower performance anxiety than those facing panels of senior humans whose body language they’re unconsciously reading throughout. Pre-AC briefings, practice scenarios, and transparent rubrics also measurably improve data quality, not because they help candidates cheat, but because they reduce anxiety-driven noise and surface actual competency signal.

Anchoring Bias and Assessor Fatigue

Human assessors are susceptible to first-impression anchoring: forming a dominant opinion within the first five minutes and selectively confirming it thereafter. Add a full day of back-to-back observations and you’ve introduced assessor fatigue on top of that.

AI scoring provides a structural counterweight: consistent criteria regardless of sequence, no fatigue degradation across a long evaluation day, and a behavioral audit trail that calibrators can review when a human score appears anomalous. The goal isn’t to remove humans; it’s to reserve their interpretive capacity for the contextual judgment they’re uniquely good at.

Social Proof in Group Exercises

Group exercises are the AC’s most psychologically complex component, and often the most revealing. The tension between standing out individually and building collaborative momentum creates a natural experiment in competing motivations. The subtler signal that assessors often miss: who is creating the conditions for others to perform well?

Psychological safety within the group exercise is partly a design variable: how the facilitator frames the task, how time pressure is calibrated, and whether the brief rewards dominance or collective problem-solving. Structured participation mechanisms, rather than open free-for-alls, significantly improve data quality for introverted candidates and those from underrepresented groups.

Assessment Center vs. Other Recruitment Funnel Metrics

Understanding where the AC fits in the broader hiring toolkit requires clarity about what each tool is actually measuring:

| Metric | What It Measures | Key Difference from Assessment Center |
|---|---|---|
| Aptitude Test | Raw cognitive ability | AC measures behavioral application, not just intelligence |
| Structured Interview | Verbalized experience | AC observes actual performance in simulated tasks |
| Quality of Hire | Post-hire performance | AC is a predictor of this; Quality of Hire is the result |
| Candidate Satisfaction | Experience sentiment | AC is often seen as more “fair” but more demanding |
| Time to Productivity | Onboarding speed | High AC scores correlate with a 30% faster ramp-up |

The critical insight here is the distinction between threshold indicators and leading indicators. An aptitude test tells you whether a candidate crosses a minimum cognitive threshold. A structured interview tells you whether they can articulate relevant experience.

Neither tells you how they’ll actually behave when the job gets complicated. The Assessment Center is a leading indicator of cultural fit, leadership potential, and role-specific effectiveness: it predicts the shape of a person’s performance, not just the probability that they’re minimally qualified.

What the Experts Say

“The Assessment Center remains the single most predictive tool in the recruitment arsenal, but only if we strip away the human bias that has historically plagued its scoring.”

– Madeline Laurano, Founder, Aptitude Research

How to Measure and Improve Assessment Center Efficiency

Measuring AC performance as a program, not just evaluating individual candidates, is how TA teams identify whether their investment is generating a proportionate return.

Formula

AC Efficiency (%) = (Number of Qualified Hires from AC / Total AC Participants) * 100

The benchmark target varies by role complexity and selectivity, but an efficiency rate below 10% is a signal that either the candidate pipeline arriving at the AC stage is insufficiently pre-screened, or the AC itself is too blunt to differentiate within a capable cohort.
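The efficiency formula and the 10% warning line translate directly into code. This is a minimal sketch; the function names and the sample cohort (9 qualified hires from 120 participants) are hypothetical.

```python
from typing import Optional

LOW_EFFICIENCY_THRESHOLD = 10.0  # the 10% warning line described above


def ac_efficiency(qualified_hires: int, participants: int) -> float:
    """AC Efficiency (%) = qualified hires from the AC / total AC participants * 100."""
    if participants <= 0:
        raise ValueError("participants must be positive")
    if not 0 <= qualified_hires <= participants:
        raise ValueError("qualified_hires must be between 0 and participants")
    return qualified_hires / participants * 100


def diagnose(efficiency_pct: float) -> Optional[str]:
    """Return a warning when efficiency falls below the threshold, else None."""
    if efficiency_pct < LOW_EFFICIENCY_THRESHOLD:
        return ("Below 10%: check pre-AC screening, or whether the exercises "
                "can differentiate within a capable cohort.")
    return None


eff = ac_efficiency(9, 120)  # hypothetical cohort
print(f"{eff:.1f}%", diagnose(eff))
```

Because the right threshold varies by role complexity and selectivity, keeping it as a named constant makes it easy to tune per role family.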

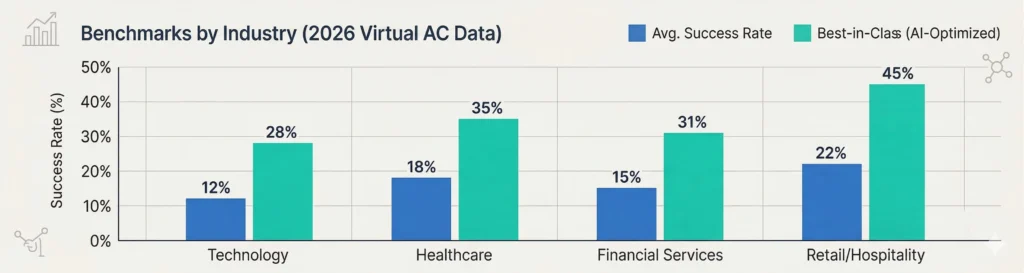

Benchmarks by Industry (2026 Virtual AC Data)

| Industry | Avg. Success Rate | Best-in-Class (AI-Optimized) |
|---|---|---|
| Technology | 12% | 28% |
| Healthcare | 18% | 35% |
| Financial Services | 15% | 31% |
| Retail/Hospitality | 22% | 45% |

The gap between average and best-in-class maps consistently to two variables: the quality of the pre-AC screening funnel, and the sophistication of the behavioral scoring framework. Organizations in the best-in-class bracket aren’t running harder assessments; they’re running smarter ones, built on competency models derived from actual top-performer data rather than generic “leadership competency” frameworks lifted from a textbook.

Key Strategies for Improving Assessment Center ROI

The levers for improving AC return on investment are well-documented. Execution is the differentiator:

How AI and Automation Solve Assessment Center Friction

The four biggest historical limitations of Assessment Centers (cost, scale, consistency, and geographic reach) are all addressable with the AI tooling available in 2026. Here’s where the leverage is:

Automated Behavioral Tagging

Natural language processing (NLP) allows AI systems to analyze candidate speech and written responses during group tasks and case presentations, identifying leadership traits, communication clarity, collaborative language patterns, and decision-making confidence in real time. Rather than requiring an assessor to manually code behaviors while simultaneously observing a group exercise, the AI maintains a continuous behavioral log that assessors can validate and interrogate. Their role shifts from data collector to data interpreter, a significantly better use of senior talent.
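Production systems use trained language models for this, but the shape of the output (a time-stamped behavioral log that assessors can validate) can be sketched with a deliberately simple keyword matcher. Keyword matching here is a stand-in for real NLP, and the tag names, cue phrases, and transcript are hypothetical placeholders, not a validated competency model.

```python
from dataclasses import dataclass, field

# Hypothetical cue phrases per behavior tag; a real system would use a trained model.
BEHAVIOR_CUES = {
    "collaboration": ("what do you think", "building on", "let's combine"),
    "decision_making": ("i recommend", "we should", "my decision is"),
    "stakeholder_communication": ("i'll update", "let me inform", "keep them posted"),
}


@dataclass
class LogEntry:
    timestamp_s: float
    speaker: str
    text: str
    tags: list[str] = field(default_factory=list)
    validated: bool = False  # set to True by an assessor after review


def tag_transcript(utterances: list[tuple[float, str, str]]) -> list[LogEntry]:
    """Build a behavioral log from (timestamp, speaker, text) tuples."""
    log = []
    for timestamp, speaker, text in utterances:
        lowered = text.lower()
        # Attach every tag whose cue phrases appear in the utterance.
        tags = [tag for tag, cues in BEHAVIOR_CUES.items()
                if any(cue in lowered for cue in cues)]
        log.append(LogEntry(timestamp, speaker, text, tags))
    return log


transcript = [
    (12.0, "cand_A", "Building on that, what do you think about rerouting stock?"),
    (30.5, "cand_B", "I recommend we switch suppliers today."),
    (41.2, "cand_C", "Sounds fine."),
]
for entry in tag_transcript(transcript):
    print(entry.timestamp_s, entry.speaker, entry.tags)
```

The `validated` flag reflects the division of labor described above: the system proposes tags, and a human assessor confirms or rejects them.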

VR/AR Immersion

VR has moved from novelty to practical AC infrastructure for roles where the simulated environment itself is a critical variable. Emergency response, high-frequency trading floors, surgical environments, and plant management are all contexts that were historically almost impossible to simulate in a standard AC format. A candidate for an operations role can now be placed in a simulated equipment failure scenario without leaving home. The behavioral data is richer, the assessment is more role-specific, and the cost is a fraction of any physical equivalent.

Predictive Scoring Algorithms

The next frontier beyond consistent scoring is personalized scoring: comparing a candidate’s real-time behavioral profile against “Success Profiles” built from your own organization’s top performers. Rather than benchmarking against a generic competency model, the algorithm asks: does this person resemble the people who’ve already thrived here? Organizations that have built this capability report meaningful improvements in both Quality of Hire and 12-month retention.
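One simple way to express “does this person resemble the people who’ve already thrived here?” is cosine similarity between a candidate’s competency scores and an averaged Success Profile. This is an illustrative technique, not necessarily what any given platform does; the competency names and scores are hypothetical, and a real model would need validation against outcomes and checks for adverse impact.

```python
from math import sqrt

# Hypothetical competency dimensions, scored 1-5.
COMPETENCIES = ("problem_solving", "communication", "collaboration", "resilience")


def success_profile(top_performers: list[dict[str, float]]) -> list[float]:
    """Average each competency across top performers to form a Success Profile."""
    if not top_performers:
        raise ValueError("need at least one top performer")
    n = len(top_performers)
    return [sum(p[c] for p in top_performers) / n for c in COMPETENCIES]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """1.0 means identical direction (same relative strengths); 0.0 means orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


top = [
    {"problem_solving": 4.6, "communication": 4.1, "collaboration": 4.4, "resilience": 3.9},
    {"problem_solving": 4.2, "communication": 4.5, "collaboration": 4.0, "resilience": 4.3},
]
profile = success_profile(top)
candidate = {"problem_solving": 4.4, "communication": 3.8, "collaboration": 4.2, "resilience": 4.0}
vector = [candidate[c] for c in COMPETENCIES]
print(round(cosine_similarity(vector, profile), 3))
```

Cosine similarity compares the shape of a profile, not its level: a candidate scoring uniformly low with the same relative strengths would still look similar. Pairing it with absolute score thresholds avoids that blind spot.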

Asynchronous Group Tasks

AI-facilitated asynchronous group exercises allow candidates in different time zones to participate in a structured collaborative task moderated by an AI that maintains context, tracks contributions, and ensures equitable participation. The result is a genuinely global talent pool, accessible through a methodology that previously required everyone in the same room at the same time.
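“Ensures equitable participation” implies the moderator is tracking who is contributing. A minimal sketch, assuming contributions are counted per participant (messages or substantive posts) and using a hypothetical rule that anyone below half of an equal share gets a prompt to contribute:

```python
def participation_shares(contributions: dict[str, int]) -> dict[str, float]:
    """Each participant's fraction of all contributions in the group task."""
    total = sum(contributions.values())
    if total == 0:
        return {name: 0.0 for name in contributions}
    return {name: count / total for name, count in contributions.items()}


def under_participants(contributions: dict[str, int], min_ratio: float = 0.5) -> list[str]:
    """Participants whose share is below min_ratio times an equal share (hypothetical rule)."""
    if not contributions:
        return []
    shares = participation_shares(contributions)
    equal_share = 1 / len(contributions)
    return sorted(name for name, share in shares.items() if share < min_ratio * equal_share)


# Hypothetical contribution counts from four time zones.
counts = {"tokyo": 14, "lagos": 3, "berlin": 11, "lima": 12}
print(under_participants(counts))  # lagos: 3/40 = 0.075, below 0.5 * 0.25
```

Flagging triggers a moderator prompt rather than a penalty, since low volume is not the same as low quality.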

Assessment Centers and Diversity & Inclusion

The Assessment Center, when designed thoughtfully, is one of hiring’s most powerful equalizers. When designed carelessly, it can replicate the biases it was meant to replace in a more sophisticated wrapper. The distinction matters.

The Digital Divide

Moving to virtual Assessment Centers expands access dramatically (no travel costs, no geographic barriers), but it introduces a new access variable: hardware and connectivity. A candidate completing a VR-based simulation on a high-spec desktop in a quiet office is having a fundamentally different experience than one on a shared tablet with an unstable connection.

AC design for 2026 requires explicit minimum-spec testing, low-bandwidth alternatives for data-intensive exercises, and technical support that treats a connectivity failure as a platform problem, not a candidate problem.

Language and Accessibility

Real-time translation tools and WCAG-compliant interfaces are baseline requirements, not aspirational features, for any organization serious about accessing the full global talent pool.

For neurodivergent candidates, this extends to alternative response formats, extended time accommodations, and task designs that don’t conflate communication style with communication effectiveness. An autistic engineer who delivers exceptional technical analysis in structured written form is demonstrating exactly the competency being evaluated. Penalizing the format penalizes the wrong thing.

Bias in Behavioral Norms

“Leadership behaviors” as typically framed in Western competency frameworks (direct assertion, visible confidence, proactive agenda-setting) reflect a specific cultural script, not a universal standard of excellence.

Candidates from cultures where leadership is expressed through consensus-building, deference to collective wisdom, or quiet demonstration of competence will systematically under-score on rubrics calibrated to a different norm. AI scoring systems trained on diverse top-performer data, rather than on historical “successes” from a demographically narrow applicant pool, are the most structurally sound solution currently available.

Common Challenges & Solutions

| Challenge | Solution |
|---|---|
| Assessor Inconsistency | Use AI-moderated scoring to flag and investigate outliers in human grading |
| High Candidate Drop-off | Shorten AC duration and provide a mobile-optimized, low-friction entry point |
| Simulation Realism | Integrate live-data feeds into business case exercises for “current world” relevance |
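The first row of the table above can be made concrete with a simple deviation check: compare each human score with an AI-moderated reference score for the same candidate and exercise, and flag gaps that are unusually large relative to the rest of the panel. The 1-5 scale, the z-score cut-off of 2.0, and the row layout are assumptions for illustration; flagged scores are prompts for calibration review, not automatic overrides.

```python
from statistics import mean, pstdev


def flag_outliers(
    scores: list[tuple[str, str, float, float]], z_cutoff: float = 2.0
) -> list[tuple[str, str, float]]:
    """Flag human scores that diverge unusually far from the AI reference.

    Each row is (assessor, candidate, human_score, ai_score). Returns
    (assessor, candidate, gap) for rows whose gap z-score exceeds z_cutoff.
    """
    gaps = [human - ai for _, _, human, ai in scores]
    mu, sigma = mean(gaps), pstdev(gaps)
    if sigma == 0:
        return []  # every assessor deviates identically; nothing stands out
    return [
        (assessor, candidate, gap)
        for (assessor, candidate, _, _), gap in zip(scores, gaps)
        if abs(gap - mu) / sigma > z_cutoff
    ]


# Hypothetical panel: (assessor, candidate, human score, AI reference score).
rows = [
    ("A1", "c1", 3.6, 3.5), ("A2", "c1", 3.3, 3.5),
    ("A1", "c2", 4.0, 4.0), ("A2", "c2", 4.2, 4.0),
    ("A1", "c3", 2.9, 3.0), ("A3", "c3", 3.1, 3.0),
    ("A2", "c4", 3.8, 3.8), ("A3", "c4", 4.8, 2.8),
]
print(flag_outliers(rows))
```

With very small panels a single outlier cannot reach a high z-score, so the cut-off may need lowering (or a fixed point gap used instead) when only a few scores are available.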

Real-World Case Studies

Case Study 1: The Logistics Giant

A global shipping firm with operations across 40 countries had a chronic Time to Hire problem at the regional manager level. Physical, day-long ACs required candidate travel, assessors pulled from operations, and a three-week post-AC deliberation process. They replaced everything with a 90-minute AI-virtualized experience: three scenario-based exercises, automated behavioral tagging, and an AI-generated candidate comparison report within 24 hours.

Time to Hire dropped by 14 days. Assessor time investment dropped 70%. And candidate satisfaction scores, measured by post-AC NPS, actually went up, because the shorter, clearer process felt more respectful of candidates’ time.

Case Study 2: The Retail Bank

A retail bank with persistently high attrition in its customer service function recognized the problem wasn’t skills; it was disposition. They redesigned their AC to include a “high empathy” simulation: escalating customer scenarios scored on emotional regulation, active listening, and de-escalation, using NLP-based sentiment analysis to quantify behaviors that human assessors struggled to rate consistently. Within two hiring cohorts, the correlation between AC empathy scores and 90-day CSAT ratings reached $r = 0.62$. Overall CSAT scores rose 20% within 12 months.

Case Study 3: The Mobile-First Start-up

A Series B tech company needed to scale from 35 to 120 engineers across 12 countries in 18 months, with no physical HQ and a leadership team that believed cultural alignment was their primary performance driver. They built a VR-based AC centered on three role-specific simulations: a codebase triage exercise, a sprint planning task, and a stakeholder communication scenario.

Candidates across all 12 countries experienced identical conditions. At 6-month reviews, hiring managers reported 100% cultural alignment ratings, an outcome they attributed directly to the AC’s ability to surface values-in-action rather than values-in-interview.

Building an Assessment Center Dashboard: What to Track

An AC without measurement infrastructure is a process masquerading as a system. Six metrics form the core of a useful performance dashboard:

Assessment Center Across the Candidate Lifecycle

The Assessment Center methodology isn’t only a hiring tool. Organizations increasingly recognize that the same infrastructure (behavioral simulation, multi-assessor observation, competency-based scoring) is valuable at multiple stages of the employee journey.

Pre-Application Simulation

“Day in the life” mini-tasks embedded in job postings or early application flows serve a self-selection function: they allow candidates to experience the reality of a role before committing to a full application. This isn’t just good candidate experience; it’s pipeline efficiency. Candidates who self-select out after a pre-application simulation were unlikely to be strong fits, and the ones who lean in are already demonstrating genuine engagement with the work.

Selection Assessment Center

The primary high-fidelity stage: typically positioned after initial screening and before final interviews, this is where the AC earns its validity coefficient. The full battery of exercises (group task, business case, structured interview, role simulation) produces the comprehensive behavioral profile that makes an AC genuinely predictive rather than merely impressionistic.

Internal Promotion AC

Succession planning through informal “who does the senior leader like?” processes is one of the most reliably flawed mechanisms in organizational life. Internal Assessment Centers apply the same rigor to promotion decisions that external ACs apply to hiring, evaluating leaders against the competency demands of the role they’re moving into, not the one they’ve already mastered. Organizations with formal internal AC programs consistently report higher perceived fairness in promotion processes and stronger performance from newly promoted managers.

Developmental Assessment Center

Perhaps the most underutilized application: running existing employees through AC exercises not to evaluate them for roles, but to generate accurate, behaviorally grounded maps of their strengths and development areas. Unlike self-assessment or manager feedback (both carry significant bias risk), a developmental AC produces data the employee and their manager can use to build a targeted growth plan. In a tight talent market, investing in the people you already have is both the right thing to do and the smart one.

The Real Cost of an Assessment Center: By the Numbers

| Scenario | Cost Per Candidate | Throughput Time | Wasted Spend (per 100 hires) |
|---|---|---|---|
| Physical AC | $2,500 | 3 Weeks | $250,000 |
| Hybrid AC | $900 | 1 Week | $90,000 |
| Virtual AI AC (avua) | $150 | 48 Hours | $15,000 |

The headline numbers are striking enough, but the more revealing figure is the “hidden cost” calculation that never appears on a procurement invoice: senior manager time allocated to assessor roles, travel and accommodation for candidates who don’t advance, and the revenue impact of roles sitting open for an additional three weeks while the physical AC logistics play out. When those costs are fully loaded, the case for virtual AC isn’t just compelling; it’s difficult to argue against.
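A fully loaded comparison adds those hidden costs to the direct per-candidate fee. The sketch below is a hypothetical cost model for one AC cohort: every field name and every input except the $2,500 and $150 per-candidate fees from the table above is an assumption to replace with your own figures (12 candidates, 96 assessor hours at $150/hour, $800 travel per candidate, 21 extra vacancy days at $1,000/day).

```python
from dataclasses import dataclass


@dataclass
class AcCostInputs:
    candidates: int
    direct_cost_per_candidate: float  # platform or venue fee, as in the table
    assessor_hours: float             # total senior-manager hours spent assessing
    assessor_hourly_cost: float       # loaded hourly cost of those managers
    travel_per_candidate: float       # travel and accommodation; 0 for virtual ACs
    extra_vacancy_days: float         # extra days the role stays open due to AC logistics
    vacancy_cost_per_day: float       # estimated revenue impact of an open role per day


def fully_loaded_cost(i: AcCostInputs) -> float:
    """Direct fees plus assessor time, candidate travel, and vacancy cost."""
    direct = i.candidates * i.direct_cost_per_candidate
    assessor_time = i.assessor_hours * i.assessor_hourly_cost
    travel = i.candidates * i.travel_per_candidate
    vacancy = i.extra_vacancy_days * i.vacancy_cost_per_day
    return direct + assessor_time + travel + vacancy


physical = AcCostInputs(12, 2_500, 96, 150, 800, 21, 1_000)
virtual = AcCostInputs(12, 150, 8, 150, 0, 0, 1_000)
print(f"physical: ${fully_loaded_cost(physical):,.0f}")  # 30,000 + 14,400 + 9,600 + 21,000
print(f"virtual:  ${fully_loaded_cost(virtual):,.0f}")   # 1,800 + 1,200
```

Under these made-up inputs the direct fee is well under half of the physical AC’s fully loaded cost, which is the point the paragraph makes: the invoice understates the real difference.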

Related Terms

| Term | Definition |
|---|---|
| In-Tray Exercise | A simulation where candidates manage a prioritized inbox of tasks and decisions |
| Virtual Assessment | An AC conducted entirely online via video and digital tools |
| Behaviorally Anchored Rating Scales (BARS) | A scoring system that links specific observed behaviors to numeric grades |
| Situational Judgment Test (SJT) | A test, often used ahead of an AC, that asks how a candidate would handle a given scenario |
| Assessor Calibration | The process of ensuring all observers grade to a consistent, shared standard |
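A BARS scale can be represented as a lookup from numeric grades to written behavioral anchors, which is also what makes assessor calibration tractable: every assessor grades against the same anchors, and the spread of their grades for one performance shows how well aligned they are. The competency, anchor wording, and helper names below are hypothetical examples, not a validated scale.

```python
# Hypothetical BARS scale for one competency: grade -> observable behavior.
STAKEHOLDER_COMMUNICATION = {
    1: "Does not inform affected stakeholders, even when prompted.",
    2: "Informs stakeholders only after being asked by the facilitator.",
    3: "Informs key stakeholders unprompted, but late or incompletely.",
    4: "Proactively updates all affected stakeholders with clear next steps.",
    5: "Anticipates stakeholder concerns and updates them before issues escalate.",
}


def grade_from_anchor(scale: dict[int, str], observed_anchor: str) -> int:
    """Return the numeric grade whose anchor text the assessor selected."""
    for grade, anchor in scale.items():
        if anchor == observed_anchor:
            return grade
    raise ValueError("observed behavior does not match any anchor on this scale")


def calibration_spread(grades: list[int]) -> int:
    """Range of grades that different assessors gave the same performance."""
    return max(grades) - min(grades)


grade = grade_from_anchor(STAKEHOLDER_COMMUNICATION, STAKEHOLDER_COMMUNICATION[4])
print(grade, calibration_spread([3, 4, 3]))
```

What spread should trigger a calibration discussion is a program-level decision; the code only surfaces the number.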

Frequently Asked Questions

How long should a modern Assessment Center take?

In 2026, well-designed virtual Assessment Centers should run between 90 and 120 minutes. Beyond that threshold, candidate fatigue starts introducing measurement noise: what you’re scoring is endurance, not competency. If your AC requires more time than that to generate the signal you need, the problem is usually exercise redundancy rather than insufficient depth.

Does a high Assessment Center score guarantee job success?

No method in recruitment science offers a guarantee, but the AC is among the closest. With a validity coefficient of $r = 0.51$, it matches structured interviews ($r = 0.51$, though these are often measured inconsistently) and aptitude tests ($r = 0.51$), with only work samples ($r = 0.54$) scoring higher. In combination with cognitive ability testing, Assessment Centers approach the ceiling of what predictive recruitment measurement can currently achieve.

Can AI really judge “soft skills” in an Assessment Center?

With important nuance: yes. Multi-modal AI analysis, combining tone, pacing, word choice, sentence structure, and logical sequencing, can quantify interpersonal effectiveness with reliability that outperforms untrained human observation. What AI cannot do is interpret the meaning of behavioral patterns without organizational context. That interpretive layer still requires human judgment. The best AC designs use AI for consistency and volume, and humans for contextual depth.

Are Assessment Centers fair for introverts?

Poorly designed ones aren’t. Well-designed Assessment Centers explicitly include individual “deep-work” components (written case analyses, solo decision-making exercises, asynchronous task responses) that allow candidates whose cognitive style is less suited to high-energy group dynamics to demonstrate their full capability. An AC that skews entirely toward group verbal performance is measuring extraversion, not competency.

Do candidates find Assessment Centers too stressful?

The evidence is more nuanced than the assumption. Candidates who experience transparent, gamified, well-briefed ACs consistently report higher procedural justice (the feeling that the process was fair and merit-based) than those who went through resume-and-interview processes alone. Stress in an AC is a function of ambiguity and perceived arbitrariness. Remove those, and candidates typically find the experience genuinely engaging.

Conclusion

Here’s the reframe the Assessment Center deserves: it is not a hurdle candidates must clear. It is a mutual discovery: an environment where candidates demonstrate who they are at their best, and organizations learn whether that person is the one they’ve been trying to find.

The virtualization of this methodology is the most significant development in assessment science since the format was standardized. For the first time, the AC’s extraordinary predictive power is accessible to organizations that don’t have the budget for two-day hotel events with six senior assessors in blazers. AI has democratized the gold standard.

Looking toward 2027, the trajectory is clear. Organizations that invest now in building behavioral data infrastructure (AI-scored ACs, top-performer success profiles, closed-loop predictive validation) will compound that advantage with every hire. The talent war isn’t won by the companies with the biggest hiring budgets. It’s won by the ones with the most accurate picture of what excellence actually looks like, and the systems to reliably find it.