Every employee who walks out the door voluntarily knows something their organization genuinely needs to hear. They know which manager behavior drove them out, which development opportunity never materialized, and which competitor offer they simply couldn’t turn down. They know the exact moment they decided that looking was worth the effort, and they know the gap between what the recruiter promised and what the job actually delivered.

Here’s the uncomfortable part: most organizations never find out any of it.

For a departing employee's candor to produce real value, two things need to happen. The exit conversation needs to be designed to surface honest disclosure, not rehearsed politeness. And the data it produces needs to reach people who can actually act on it, not disappear into an HR file nobody opens again. If your candidate experience starts strong but your employee retention numbers tell a different story, exit interviews are often where the explanation lives.

Most exit interview programs fail on both counts.

An exit interview is a structured conversation or survey conducted with departing employees before or shortly after their last day, designed to collect candid feedback on why they left, what their experience looked like across the employee lifecycle, and what the organization could have done differently. In principle, it’s one of the most valuable tools in people operations. In practice, it’s one of the most consistently wasted ones.

The gap between the quality of data a well-run exit interview can produce and the improvements organizations actually make from it is persistent, avoidable, and expensive. In 2026, passive signals like Glassdoor reviews and LinkedIn activity monitoring supplement some of what exit conversations used to do alone.

But a direct conversation with a departing employee, conducted well, still produces the kind of contextual, causal reasoning that no algorithm quietly scraping public data can replicate. The exit interview remains relevant. The question is whether your organization is treating it like it is, or filing it away like it isn’t. Your employee engagement strategy is only as strong as your willingness to hear what departing employees actually think.

The primary metric for exit interview program effectiveness is Exit Insight Utilization Rate (EIUR):

$$\text{EIUR (\%)} = \frac{\text{Exit Interview Themes That Produced Organizational Action}}{\text{Total Material Themes Identified}} \times 100$$

An EIUR below 20% indicates the program is collecting data without using it. Above 50% indicates a program where exit insights are genuinely driving organizational improvement. Most organizations, per SHRM research, operate below 30%.
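To make the formula and its interpretation bands concrete, here is a minimal Python sketch. The function names are hypothetical. How the exact boundary values (20% and 50%) are treated is also an assumption, because the text only describes "below 20%" and "above 50%".

```python
def eiur(themes_with_action: int, material_themes: int) -> float:
    """Exit Insight Utilization Rate, as a percentage."""
    if material_themes <= 0:
        raise ValueError("material_themes must be positive")
    if not 0 <= themes_with_action <= material_themes:
        raise ValueError("themes_with_action must be between 0 and material_themes")
    return themes_with_action / material_themes * 100


def interpret_eiur(rate: float) -> str:
    """Map an EIUR value onto the bands described in the text (boundary handling assumed)."""
    if rate < 20:
        return "collecting data without using it"
    if rate > 50:
        return "exit insights driving improvement"
    return "partial utilization"


# Example: 4 of 14 material themes produced a specific organizational action.
rate = eiur(4, 14)
print(f"{rate:.1f}% -> {interpret_eiur(rate)}")
```

The denominator counts only *material* themes. Deciding which themes qualify is a judgment call the program has to make consistently, or the rate will drift from quarter to quarter for reasons unrelated to action.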

What Is an Exit Interview?

An exit interview is a structured feedback collection mechanism employed at or near the point of an employee’s voluntary departure, designed to capture the specific reasons for departure, the quality of the employee’s experience across key dimensions of their employment, the organizational factors most influential in their decision to leave, and insights about what would have changed their decision or what the organization could improve for remaining employees.

The exit interview is typically conducted by an HR professional rather than the departing employee’s direct manager, because the most valuable data often concerns the manager’s own behavior, and the power dynamic of that relationship inhibits honest disclosure even post-departure. At senior levels, a third-party facilitator may produce more candid data than any internal interviewer.

Are Your Exit Interviews Producing Data or Just Documentation?

The most common exit interview failure mode is not that the conversation does not happen. It is that the conversation produces data that is documented, filed, and never systematically analyzed or acted on. The departing employee leaves feeling that the exercise was a bureaucratic formality. The feedback they provided joins a database that no one with decision authority regularly reviews. Nothing changes.

The organizations that extract genuine value from exit interviews share one characteristic that distinguishes them from those that do not: they have a defined process for converting exit data into organizational decisions. The data is analyzed for themes, the themes are reported to relevant decision-makers, the decision-makers are asked explicitly what they will do with specific findings, and the outcomes are tracked.

SHRM research finds that 96% of organizations conduct exit interviews, but only 32% systematically analyze the data and fewer than 20% can demonstrate a specific organizational change that exit interview data produced. The practice is nearly universal. The impact is rare. The gap is almost entirely in the analysis and utilization infrastructure, not in the conversation itself.

The scenario that makes this gap concrete: a healthcare organization conducts exit interviews with all voluntarily departing nurses. The interviews consistently identify two themes: inadequate nurse-to-patient ratios and a specific nursing manager whose behavior is described as inconsistent and sometimes demeaning. Both themes appear in 60 to 70% of exit interview records over an 18-month period.

The interviews are filed. The HR team reviews them individually as they arrive but has no systematic theme-aggregation process. The nursing manager receives no feedback. The staffing ratio issue is never escalated to operations leadership. Twenty-three nurses leave over the 18-month period. The organization spends approximately $690,000 in replacement costs. The exit interview data that could have prompted earlier intervention existed throughout.
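The missing piece in this scenario is a theme-aggregation step that looks across records rather than at each one in isolation. The sketch below shows one way to do it. It assumes each exit record has already been coded into a set of theme labels. The labels, the 50% flag threshold, and the function name are hypothetical.

```python
from collections import Counter


def recurring_themes(records: list[set[str]], threshold: float = 0.5) -> dict[str, float]:
    """Return themes cited in at least `threshold` share of exit records.

    Each record is the set of theme labels coded from one exit interview,
    so a theme is counted at most once per departure.
    """
    if not records:
        return {}
    counts = Counter(theme for record in records for theme in record)
    total = len(records)
    return {
        theme: count / total
        for theme, count in counts.most_common()
        if count / total >= threshold
    }


# Hypothetical coded records for four departing nurses.
exits = [
    {"staffing_ratio", "manager_behavior"},
    {"staffing_ratio", "pay"},
    {"manager_behavior", "staffing_ratio"},
    {"schedule"},
]
print(recurring_themes(exits))
```

Even a check this simple, run quarterly, would have flagged both nursing themes long before the 18-month mark.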


The Exit Interview Design Framework

Structural Components

Format: Structured conversation is generally preferable to written survey for most departing employees, because conversational probing produces more specific and more causally complete data than survey responses. Written surveys are appropriate as a supplement (particularly for themes where the anonymity of written response produces more candor) or as the primary mechanism when scheduling a conversation is not feasible.

Timing: Exit interviews conducted during the final week of employment sometimes elicit less candid responses because the employee is still formally employed and may have unresolved concerns about references or final settlements. Interviews conducted one to three weeks post-departure, when the employment relationship is fully concluded, often produce more candid data. Some organizations use both: a brief in-employment conversation and a follow-up survey 30 days post-departure.
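Teams that use both touchpoints can compute the dates from the last working day. This is a sketch under stated assumptions. The one-to-three-week interview window and the 30-day survey follow the text. Treating the final seven days (last day inclusive) as the in-employment slot is an illustrative choice, and so are the `ExitSchedule` and `plan_exit_touchpoints` names.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class ExitSchedule:
    conversation_window: tuple[date, date]  # brief in-employment conversation (final week)
    interview_window: tuple[date, date]     # post-departure interview, 1 to 3 weeks after last day
    survey_date: date                       # follow-up survey, 30 days after last day


def plan_exit_touchpoints(last_day: date) -> ExitSchedule:
    """Compute exit touchpoint dates relative to the employee's last working day."""
    return ExitSchedule(
        conversation_window=(last_day - timedelta(days=6), last_day),
        interview_window=(last_day + timedelta(weeks=1), last_day + timedelta(weeks=3)),
        survey_date=last_day + timedelta(days=30),
    )


schedule = plan_exit_touchpoints(date(2026, 3, 13))
print(schedule.interview_window)
print(schedule.survey_date)
```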

Interviewer: HR professionals trained in exit interviewing conduct more effective sessions than untrained interviewers. The most critical skill is probing: the ability to follow up a surface-level response (“I wanted a new challenge”) with specific questions that reveal the organizational factors behind it (“What specifically made you feel you had reached the limits of challenge in this role? Were there opportunities you felt weren’t available to you here?”).

Documentation: Exit interview data should be documented in a consistent format that enables systematic analysis across departures. Structured templates that separate factual departure information (role, tenure, destination, voluntary vs. involuntary) from thematic content (departure reasons by category, specific incidents or factors cited, recommendations offered) produce analyzable data. Narrative-only documentation rarely enables the pattern detection that produces organizational insight.
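A structured template of this kind maps naturally onto a record type. The sketch below, using Python dataclasses, is one possible shape rather than a standard schema. The field names and the reason-category list are hypothetical, chosen to mirror the split between factual and thematic content described above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Separation(Enum):
    VOLUNTARY = "voluntary"
    INVOLUNTARY = "involuntary"


# Hypothetical departure-reason categories; adapt to your own coding scheme.
REASON_CATEGORIES = {"manager", "growth", "compensation", "workload", "culture", "recruiting_gap"}


@dataclass
class DepartureFacts:
    """Factual departure information, captured the same way for every exit."""
    role: str
    tenure_months: int
    destination: str
    separation: Separation


@dataclass
class ExitThemes:
    """Thematic content coded from the conversation."""
    reasons: set[str] = field(default_factory=set)
    incidents: list[str] = field(default_factory=list)
    recommendations: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject free-form reasons so every record stays comparable across departures.
        unknown = self.reasons - REASON_CATEGORIES
        if unknown:
            raise ValueError(f"uncoded reason categories: {sorted(unknown)}")


@dataclass
class ExitRecord:
    facts: DepartureFacts
    themes: ExitThemes


record = ExitRecord(
    facts=DepartureFacts("Software Engineer", 24, "competitor", Separation.VOLUNTARY),
    themes=ExitThemes(reasons={"growth"}, recommendations=["Support internal mobility"]),
)
```

Validating reasons against a fixed category set is what makes later cross-interview counting possible. Narrative detail still has a home in `incidents`.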

Question Categories

Common Misconceptions About Exit Interviews

| Misconception | Reality |
|---|---|
| Exit interviews produce honest data | Exit interview data is affected by social desirability bias, residual loyalty, reference concerns, and departure circumstances. Designing for candor (post-employment timing, trained interviewers, anonymized reporting) improves data quality but does not eliminate these effects. |
| The stated reason for departure is the real reason | “Better opportunity” and “personal reasons” are frequently surface responses that conceal organizational factors the departing employee prefers not to raise explicitly. Probing questions are required to reach the organizational variables beneath the stated reason. |
| Exit interviews are sufficient as a retention intelligence source | Exit interviews are retrospective; the opportunity to retain has already passed. Stay interviews with current employees provide the same intelligence prospectively, when action is still possible. Exit interviews should supplement, not substitute for, stay interview programs. |
| Filing exit interviews constitutes using them | Systematic theme analysis, reporting to relevant decision-makers, and specific organizational action are required for exit interview data to produce value. Filing without analysis is documentation without insight. |
| Senior employees give the most useful exit data | Data from mid-level and frontline employees often provides the most actionable organizational intelligence because their departure decisions are most directly influenced by the day-to-day management and culture factors that exit interviews are designed to surface. |
| Online exit surveys produce equivalent data to conversations | Surveys produce higher completion rates but lower data richness. They are most effective for quantitative benchmarking (satisfaction ratings) and least effective for causal diagnosis (why the rating is what it is). |

Exit Interview vs. Related Talent Intelligence Mechanisms

| Mechanism | Timing | Data Type | Actionability | Trust Level |
|---|---|---|---|---|
| Exit Interview | Post-departure announcement | Retrospective; causal | Moderate (opportunity has passed) | High (candor enhanced by impending departure) |
| Stay Interview | Current employment | Prospective; preventive | High (opportunity still present) | Moderate (power dynamic active) |
| Pulse Survey | Ongoing | Point-in-time; satisfaction | High (ongoing feedback loop) | Moderate (social desirability present) |
| Glassdoor Review | Post-employment | Public; retrospective | Indirect (brand impact) | High (anonymity enhances candor) |
| 360 Feedback | Current employment | Multi-perspective; behavioral | High | Moderate |
| Skip-Level Interview | Current employment | Candid; management-focused | High | Moderate to high |

What the Experts Say

The exit interview is not a retention tool. The retention window has closed. It is an organizational learning tool. The question is not ‘what would have kept this person?’ The question is ‘what does this person’s departure tell us about what we need to change for the 50 people who are still here?’ That reframe changes everything about how you design the program and what you do with the data.

– Beverly Kaye, Co-author of “Love ‘Em or Lose ‘Em: Getting Good People to Stay”

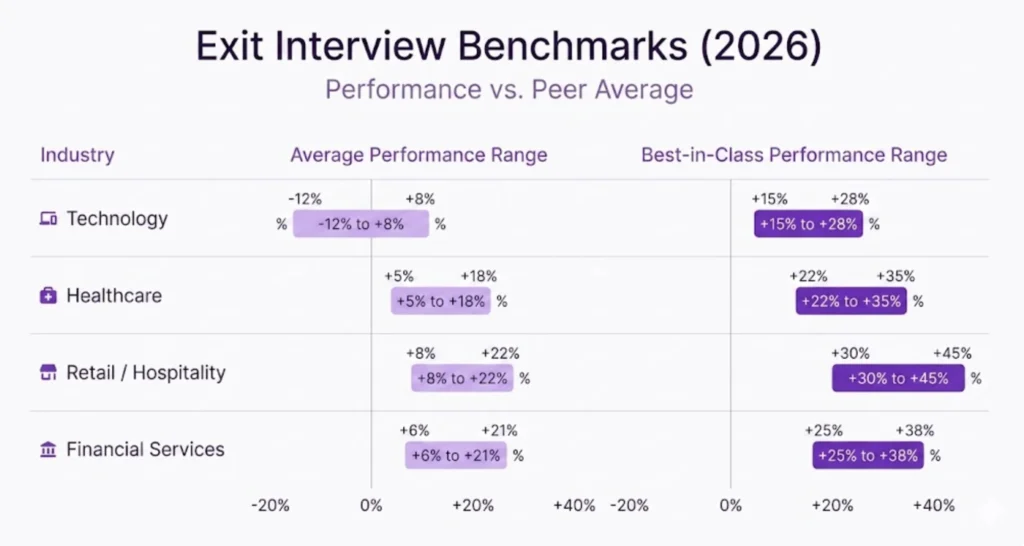

Exit Interview Benchmarks (2026)

| Program Quality Level | EIUR | Theme Identification Rate | Action Response Time | Voluntary Attrition Impact |
|---|---|---|---|---|
| No Program | 0% | N/A | N/A | Baseline |
| Basic (Filed, Not Analyzed) | 8% | Low | 6+ months | Minimal |
| Structured (Analyzed Quarterly) | 31% | Moderate | 90 days | -3 to -5% attrition |
| Advanced (Real-Time Analysis + Action Tracking) | 54% | High | 30 days | -8 to -12% attrition |

The voluntary attrition impact of advanced exit interview programs reflects not the interview itself but the organizational changes produced by systematic use of exit data. Organizations that identify and act on departure drivers within 30 days of theme identification consistently show lower subsequent voluntary attrition than those with slower or absent action processes.
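The 30-day action window is easy to monitor if each theme records the date it was identified and, once one exists, the date of the resulting action. The sketch below is minimal and rests on assumptions. `ThemeStatus` is a hypothetical record, and the 30-day target is taken from the Advanced row of the benchmark table.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class ThemeStatus:
    theme: str
    identified: date
    actioned: Optional[date] = None  # None until an owner commits to a specific action


def response_days(t: ThemeStatus) -> Optional[int]:
    """Days from identification to action, or None if no action yet."""
    return (t.actioned - t.identified).days if t.actioned is not None else None


def overdue(themes: list[ThemeStatus], today: date, target_days: int = 30) -> list[str]:
    """Themes actioned later than `target_days`, or still open past it as of `today`."""
    late = []
    for t in themes:
        days = response_days(t)
        elapsed = days if days is not None else (today - t.identified).days
        if elapsed > target_days:
            late.append(t.theme)
    return late


themes = [
    ThemeStatus("feedback quality", date(2026, 1, 5), date(2026, 1, 28)),
    ThemeStatus("promotion criteria", date(2026, 1, 5), date(2026, 3, 2)),
    ThemeStatus("workload", date(2026, 2, 10)),
]
actioned = [d for t in themes if (d := response_days(t)) is not None]
print("median response time:", median(actioned), "days")
print("over target:", overdue(themes, today=date(2026, 3, 31)))
```

Counting open themes against the target, and not only completed ones, keeps stalled findings visible instead of letting them silently drop out of the average.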


Key Strategies for Maximizing Exit Interview Value

How AI Is Enhancing Exit Interview Programs

Quick-Reference Cheat Sheet

EIUR (%) = (Exit Themes Producing Organizational Action / Total Material Themes Identified) × 100

The Exit Interview Question Framework:

- Primary departure reason (with probing)

- Work experience quality (manager, peers, workload)

- Development and growth opportunity

- Organizational culture factors

- Recruiting vs. reality gap

- What would have changed the decision

- Recommendations for remaining employees

Exit Interview Design Best Practices:

- Conduct 1 to 3 weeks post-departure when feasible (candor increases)

- Use trained HR interviewers, not direct managers

- Document in consistent format enabling cross-interview analysis

- Supplement conversation with 30-day post-departure survey

- Aggregate themes quarterly; report to accountability owners monthly for critical findings

Dos:

Don’ts:

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| Low Exit Interview Completion Rate | Schedule during notice period; use brief post-departure survey as backup; emphasize organizational improvement framing |
| Departing Employees Not Being Candid | Use post-departure timing; trained interviewers; anonymized reporting; specific probing questions that surface organizational factors |
| Data Filed but Not Analyzed | Implement quarterly theme-aggregation process; assign EIUR tracking to HR analytics function |
| Findings Not Reaching Decision-Makers | Build structured reporting route from HR to accountability owners; require explicit acknowledgment of exit findings by relevant leaders |
| No Visible Organizational Change from Exit Data | Track EIUR explicitly; hold accountability owners to response commitments; publish anonymized summaries of exit themes and actions to employees |

Real-World Case Studies

Case Study 1: The Professional Services Firm

A 1,400-person professional services firm had conducted exit interviews for six years. The data had been filed in HR, reviewed by the HR director, and occasionally mentioned in annual people reports. EIUR was estimated at 11%.

A people analytics initiative conducted a retrospective analysis of five years of exit interview data and identified three themes appearing in over 50% of departure records: inconsistent feedback from senior managers, limited visibility into partnership track criteria, and a perceived culture of presenteeism that conflicted with flexible work expectations. None of these themes had triggered organizational action.

The findings were presented to the managing partner with specific business unit attribution and voluntary attrition cost estimates. The managing partner initiated three programs in response: a manager feedback quality training program, a formal partnership criteria transparency initiative, and a flexible work pilot in the business units with the highest attrition rates.

Within 18 months, voluntary attrition in the three pilot business units declined from 19% to 13%, generating an estimated $1.1 million in annual replacement cost savings. The exit data that produced these savings had existed for years; the analysis and accountability infrastructure was what had been missing.

Case Study 2: The Technology Company

A technology company was experiencing significant attrition among software engineers at the 18-to-30-month mark. Exit interviews cited “better opportunity” in 68% of departures. When the company deepened the probing in its exit conversations, it found that “better opportunity” almost invariably meant “the other company offered a clearer technical growth path and a more interesting technical challenge than I was experiencing here.”

The specific finding was that engineers in two business units were working on maintenance and legacy code with limited exposure to the new product development work happening in two other units. Internal mobility between units was not well-supported. Engineers who wanted different technical challenges were finding them externally rather than internally.

The company implemented a structured internal technical rotation program and an internal mobility communication initiative. Within two hiring cycles, attrition among engineers at the 18-to-30-month mark declined from 26% to 17%. The exit data had been pointing to the problem; the probing had been insufficient to surface its specific nature until the interviewing approach was redesigned.

Related Terms

| Term | Definition |
|---|---|
| Stay Interview | A structured conversation with a currently employed high performer about what would make them more or less likely to remain; the prospective complement to the exit interview |
| Regrettable Attrition | Voluntary departures of high performers or critical role incumbents; the primary target population for exit interview utilization |
| Exit Survey | A written supplement or alternative to exit interview conversation; produces quantitative benchmarking data with potentially higher candor than in-person conversations |
| Voluntary Attrition | Employment separations initiated by the employee; the outcome that exit interview programs are designed to reduce through organizational learning |
| EIUR (Exit Insight Utilization Rate) | The proportion of material exit interview themes that produce specific organizational action; the primary program effectiveness metric |

Frequently Asked Questions

Who should conduct exit interviews?

HR professionals trained in exit interviewing produce the most useful data for most roles. Direct managers should not conduct exit interviews because the most valuable data often concerns their own behavior, and the power dynamic of that relationship inhibits honest disclosure. For C-suite departures, a board member, external consultant, or senior HR leader outside the direct reporting chain may produce more candid data than any internal HR interviewer.

Is it worth conducting exit interviews with employees who are involuntarily separated?

Exit interviews are primarily designed for voluntary departures. Involuntary separations, including performance-based terminations and reductions in force (RIFs), produce different data and require different conversation design. For involuntary separations, the more valuable conversation is often a process review (was the performance management process followed appropriately?) rather than a departure-driver investigation. Some organizations conduct “exit surveys” for all separations but design them differently by separation type.

How long should an exit interview take?

A structured exit interview that covers the key question categories takes 30 to 45 minutes for most departing employees. Conversations that are substantially shorter than 30 minutes are likely not probing sufficiently to reach the organizational factors behind surface-level responses. Conversations longer than 60 minutes are typically less efficient in producing additional insight. Some organizations supplement the conversation with a brief written survey administered separately to capture additional structured data without extending the conversation timeline.

Should exit interview data be reported to the departing employee’s manager?

With appropriate aggregation and anonymization, yes. Individual exit interview comments should not be attributed to specific departing employees without their explicit consent. Aggregated themes from multiple exits within a manager’s team should be shared with that manager as performance feedback, because management quality is among the most commonly cited departure drivers and managers cannot improve behavior they do not receive feedback about. The reporting should be framed as development data rather than disciplinary evidence.
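One way to satisfy both conditions is to share a manager's themes only after enough separate departures have accumulated that no single comment can be traced to one person. Together, those conditions mean aggregating before sharing and never attributing comments without consent. The sketch below is minimal. The three-departure minimum and the record shape are assumptions for illustration, not an established standard.

```python
from collections import Counter, defaultdict

MIN_DEPARTURES = 3  # assumed minimum group size before anything is shared


def manager_theme_report(exits: list[dict], min_departures: int = MIN_DEPARTURES) -> dict[str, dict[str, int]]:
    """Aggregate coded themes per manager, dropping employee identity entirely.

    Each exit is a dict with 'manager' and 'themes' keys; any other fields
    (names, free-text quotes) are ignored. Managers with fewer than
    `min_departures` exits are withheld so themes cannot be attributed.
    """
    by_manager: dict[str, list[set[str]]] = defaultdict(list)
    for e in exits:
        by_manager[e["manager"]].append(set(e["themes"]))

    report = {}
    for manager, theme_sets in by_manager.items():
        if len(theme_sets) < min_departures:
            continue  # too few exits to anonymize; hold until more data accumulates
        counts = Counter(t for s in theme_sets for t in s)
        report[manager] = dict(counts)
    return report


exits = [
    {"employee": "A", "manager": "M1", "themes": ["feedback", "workload"]},
    {"employee": "B", "manager": "M1", "themes": ["feedback"]},
    {"employee": "C", "manager": "M1", "themes": ["growth"]},
    {"employee": "D", "manager": "M2", "themes": ["feedback"]},
]
print(manager_theme_report(exits))
```

Only theme counts leave the function. Individual quotes would still need the departing employee's explicit consent before they were shared.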

Conclusion

The exit interview is the organization’s last conversation with an employee whose departure has already happened. Its value isn’t in reversing that decision. It’s in making sure the insights that left with that person are used to prevent the next one.

Organizations that design exit interviews for candor, analyze the data systematically, and actually route findings to people with the authority to act on them are doing something most don’t: converting one of the most expensive events in employee retention into one of the most valuable feedback loops in their people operations toolkit. That’s not a small thing. Replacing a single mid-level employee can cost anywhere from 50% to 200% of their annual salary, and most of those departures had warning signs that a well-run exit process would have surfaced earlier.

The departing employee knows something their organization needs to hear. They’ve already lived through the gaps in your employee experience, the management blind spots, the culture inconsistencies, and the moments where a different decision might have kept them. The only question is whether your organization is willing to listen carefully enough, and act specifically enough, to make the conversation worth having for everyone who comes after them.