Hiring decisions made on gut feel alone have a habit of going wrong.

An interview scorecard is a structured evaluation tool that gives interviewers a consistent framework to assess candidates against predefined criteria, turning subjective impressions into something you can actually compare and act on.

For teams running competency-based interviews, scorecards are a natural fit. They bring the same structured thinking to the evaluation stage that competency frameworks bring to the questions themselves. But even outside formal interview formats, scorecards help reduce bias in hiring by making sure every candidate is measured against the same standard.

When integrated into a wider data-driven recruiting strategy, scorecards also generate useful hiring intelligence over time. Pair that with cleaner candidate management and you have a process that is both fairer and sharper.

The core metric governing scorecard effectiveness is Scorecard Predictive Validity: the correlation between scorecard ratings and post-hire performance outcomes, measured at 6 and 12 months.

Scorecard Predictive Validity = Correlation Coefficient (Scorecard Rating, 12-Month Performance Score)

High-quality scorecard programs achieve predictive validity coefficients of 0.45–0.62, compared to 0.18–0.28 for unstructured interviews. The difference is not marginal: it separates a process that predicts job performance meaningfully from one that predicts it at barely better than chance.

What is an Interview Scorecard?

An interview scorecard is a structured assessment document that each interviewer completes immediately after a candidate conversation. It comprises predefined evaluation dimensions (competencies, skills, or behavioral indicators), a rating scale applied consistently across candidates, space for evidence-based written justification of each rating, and an overall recommendation. Its purpose is to standardize evaluation inputs, reduce bias, enable cross-interviewer calibration, and improve the quality of hiring decisions.

The defining characteristic is standardization: every interviewer evaluating candidates for the same role uses the same scorecard. This is what makes the scorecard a calibration tool. Without standardization across evaluators, post-interview debriefs compare different assessments of different things rather than different perspectives on the same criteria.
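The components listed in the definition can be pictured as a small data structure. The following is a minimal sketch, not a prescribed schema: the class names, the 1–4 scale, and the `same_criteria` helper are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical 1-4 rating scale; the article does not prescribe one here.
RATING_MIN, RATING_MAX = 1, 4


@dataclass
class DimensionRating:
    dimension: str  # competency, skill, or behavioral indicator
    rating: int     # value on the shared rating scale
    evidence: str   # written justification for the rating

    def __post_init__(self) -> None:
        if not RATING_MIN <= self.rating <= RATING_MAX:
            raise ValueError(
                f"rating must be between {RATING_MIN} and {RATING_MAX}"
            )
        if not self.evidence.strip():
            raise ValueError(f"evidence is required for '{self.dimension}'")


@dataclass
class Scorecard:
    candidate: str
    interviewer: str
    role: str
    recommendation: str  # overall recommendation; labels are illustrative
    ratings: List[DimensionRating] = field(default_factory=list)

    def dimensions(self) -> List[str]:
        return [r.dimension for r in self.ratings]


def same_criteria(cards: List[Scorecard]) -> bool:
    """Return True when every scorecard covers the same dimensions."""
    if not cards:
        return True
    expected = sorted(cards[0].dimensions())
    return all(sorted(card.dimensions()) == expected for card in cards)
```

Rejecting a rating that has no evidence text mirrors the requirement that each rating carry a written justification, and `same_criteria` is one simple way to check that a panel really evaluated the same dimensions.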

Is Your Interview Process Measuring Candidates, or Measuring Interviewers?

The uncomfortable truth about most interview processes is that they measure interviewer impressions more reliably than they measure candidate capability. Without a scorecard (that is, without predefined criteria, consistent questions, and documented evidence), interview evaluations primarily reflect the assessment habits, cognitive biases, and social-chemistry reactions of the evaluators. Two candidates with identical skills can receive materially different evaluations from the same panel if their personal styles, communication approaches, or backgrounds trigger different affinity responses in different interviewers.

This is not conjecture. A widely cited meta-analysis of interviewing research found that unstructured interview ratings have inter-rater reliability of approximately 0.27. That figure is a reliability coefficient, not a percentage of agreement: it indicates that two interviewers’ ratings of the same candidate are only weakly related. Structured interviews using scorecards achieve inter-rater reliability of 0.56–0.73, a more than two-fold improvement in evaluation consistency. More importantly, structured evaluations predict job performance at coefficients of 0.40–0.56, versus 0.20–0.29 for unstructured ones, meaning scores from a structured scorecard process correlate roughly twice as strongly with who will perform well in the role.

The cost of poor interview validity is directly calculable. For an organization making 50 hires per year with an average bad hire cost of $25,000 and a bad hire rate of 20%, annual bad hire costs total $250,000 (10 bad hires at $25,000 each). Research on the performance improvement attributable to moving from unstructured to structured scorecard-based interviews suggests a bad hire rate reduction of 25–35%. Applied to those 10 bad hires, that is 2.5–3.5 avoided bad hires per year, or roughly $62,500–$87,500 in avoided cost, from a scorecard program that costs essentially nothing to implement beyond design time.
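The arithmetic in this paragraph can be checked with a few lines of code. This is a minimal sketch that assumes the 25–35% reduction is relative to the existing bad hire rate, an interpretation the text does not state explicitly. The function names are illustrative. Unrounded, the result is 2.5 to 3.5 avoided bad hires ($62,500 to $87,500); rounding up to whole hires gives 3 to 4 ($75,000 to $100,000).

```python
def bad_hire_cost(hires_per_year: int, bad_hire_rate: float,
                  cost_per_bad_hire: float) -> float:
    """Expected annual cost of bad hires."""
    return hires_per_year * bad_hire_rate * cost_per_bad_hire


def avoided_cost(hires_per_year: int, bad_hire_rate: float,
                 cost_per_bad_hire: float, relative_reduction: float) -> float:
    """Cost avoided if the bad hire rate falls by a relative fraction."""
    baseline = bad_hire_cost(hires_per_year, bad_hire_rate, cost_per_bad_hire)
    return baseline * relative_reduction


# Figures taken from the paragraph above.
baseline = bad_hire_cost(50, 0.20, 25_000)
low = avoided_cost(50, 0.20, 25_000, 0.25)
high = avoided_cost(50, 0.20, 25_000, 0.35)
print(f"baseline ${baseline:,.0f}, avoided ${low:,.0f} to ${high:,.0f}")
```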

The scenario that makes this concrete: a growing financial services firm conducts a post-hire audit and finds that its highest-performing new hires over a two-year period share one consistent pattern: they were interviewed by a single senior director who asked a consistent set of behavioral questions and documented their evaluations thoroughly.

The lowest-performing hires were concentrated in processes managed by four different hiring managers, each of whom used different questions, different evaluation criteria, and different formats for recording their assessments, ranging from detailed notes to a single word (“great” or “maybe”). The firm implements a standardized scorecard across all its hiring processes. In the subsequent year, its bad hire rate falls from 22% to 13%.

The data is unambiguous: scorecards improve hiring outcomes. The organizations not using them are not making a defensible choice; they are making a default one.


The Psychology Behind Interview Scorecard Design

The Halo Effect and Dimension Isolation

One of the most consistent biases in interview evaluation is the halo effect: the tendency for a strong initial impression on one dimension to positively color all subsequent evaluations. A candidate who delivers a compelling opening answer gets the benefit of the doubt on every subsequent question, regardless of the quality of those answers. A scorecard that requires independent evaluation of each competency dimension, with separate evidence documentation for each, mitigates the halo effect by forcing evaluators to assess each dimension on its own merits rather than through the lens of an overall first impression.

Social Desirability and Debrief Dynamics

When interviewers share their assessments verbally before documenting them, the first assessor’s opinion exerts disproportionate influence on all subsequent ones, a groupthink dynamic that is particularly pronounced when the first speaker is senior. Organizations that require written scorecard completion before any verbal debrief conversation reliably produce more independent evaluations than those that start with a verbal discussion. The structural rule is simple: no talking before writing.

Anchoring Bias in Sequential Evaluation

When interviewers evaluate multiple candidates in sequence, their assessments of later candidates are systematically anchored to the assessments of earlier ones. A candidate who follows an exceptional performer may be scored lower than their absolute quality would warrant; one who follows a weak performer may be scored higher. Scorecards that provide absolute rating scale anchors, with specific behavioral descriptions for each rating level, reduce anchoring bias by giving evaluators a fixed reference point that is independent of the comparison pool.

Interview Scorecard vs. Related Evaluation Tools

| Tool | Purpose | Timing | Standardization | Key Difference from Scorecard |
|---|---|---|---|---|
| Interview Scorecard | Structured per-interview candidate evaluation | During / immediately post-interview | High (predefined criteria + scale) | Core evaluation tool; per interview |
| Evaluation Matrix | Cross-candidate comparison on defined criteria | Post-process | Medium | Comparison-focused; used after multiple evaluations |
| Interview Guide | Structured question guide for interviewer | During interview | High | Defines questions; doesn’t capture ratings |
| Reference Check Form | Structured third-party evaluation | Post-interview | Medium | External input; different assessor |
| Assessment Report | Formal test/assessment output | Pre or post-interview | Very high | Automated; not human judgment |

What the Experts Say

The scorecard is the most undervalued tool in recruiting. It is not a form, it is the organizational discipline of defining what good looks like before you see a candidate, so you aren’t defining it retroactively to justify whoever you already liked.

– Laszlo Bock, Former SVP People Operations, Google

How to Measure Scorecard Effectiveness?

Formula

Scorecard Predictive Validity = Pearson Correlation (Scorecard Total, 12-Month Performance Rating)

Inter-Rater Reliability = Intraclass Correlation Coefficient (ICC) across evaluators for same candidate

Scorecard Completion Rate (%) = (Completed Scorecards ÷ Total Interviews Conducted) × 100
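The three formulas above can be sketched in plain Python. Two assumptions are worth flagging: the text does not say which form of the intraclass correlation it means, so this sketch uses the one-way random-effects form, ICC(1,1); and the function names are illustrative rather than taken from any HR analytics tool.

```python
from math import sqrt
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation between scorecard totals and performance ratings."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if sd_x == 0 or sd_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (sd_x * sd_y)


def icc_one_way(ratings: Sequence[Sequence[float]]) -> float:
    """ICC(1,1). Rows are candidates, columns are evaluators (assumed form)."""
    n, k = len(ratings), len(ratings[0])
    if n < 2 or k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need >= 2 candidates, each rated by the same k >= 2")
    grand_mean = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    # Mean square between candidates and mean square within candidates.
    ms_between = k * sum((m - grand_mean) ** 2 for m in row_means) / (n - 1)
    ms_within = sum(
        (value - mean) ** 2
        for row, mean in zip(ratings, row_means)
        for value in row
    ) / (n * (k - 1))
    denominator = ms_between + (k - 1) * ms_within
    if denominator == 0:
        raise ValueError("ICC is undefined when all ratings are identical")
    return (ms_between - ms_within) / denominator


def completion_rate(completed: int, interviews: int) -> float:
    """Scorecard completion rate as a percentage."""
    if interviews <= 0:
        raise ValueError("interviews must be positive")
    return completed / interviews * 100
```

With real data, `pearson` would take one scorecard total and one 12-month performance rating per hire, and `icc_one_way` would take one row per candidate with one rating per evaluator. Small samples make both coefficients unstable, so any figure computed on a handful of hires should be read with caution.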

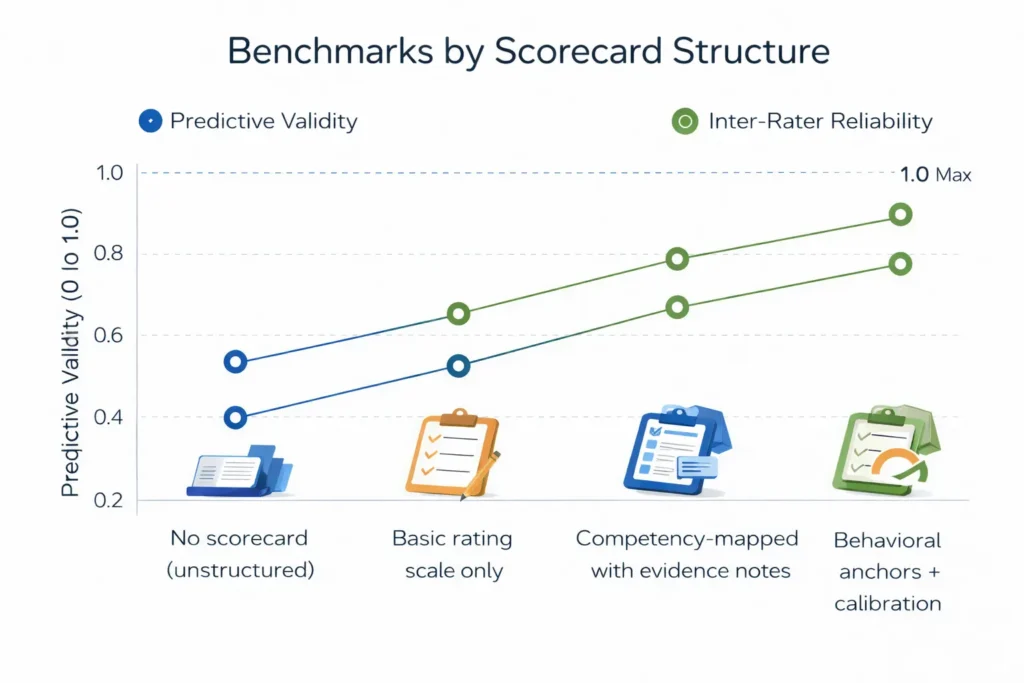

Benchmarks by Scorecard Structure

| Scorecard Type | Avg. Predictive Validity | Avg. Inter-Rater Reliability |
|---|---|---|
| No scorecard (unstructured) | 0.20–0.28 | 0.22–0.30 |
| Basic rating scale only | 0.28–0.35 | 0.35–0.42 |
| Competency-mapped with evidence notes | 0.40–0.51 | 0.54–0.65 |
| Behavioral anchors + calibration | 0.50–0.62 | 0.62–0.74 |

Key Strategies for Effective Scorecard Design and Use

How Can AI and Automation Improve Scorecard Quality?

AI-Generated Role-Specific Scorecards

Natural language AI tools can generate role-specific, competency-mapped scorecards from a brief description of the role and its success requirements, saving recruiters the time of building from scratch and ensuring that every search begins with a tailored, fit-for-purpose evaluation framework rather than a generic template.

Automated Completion Prompts

AI-powered hiring workflow tools can send completion reminders to interviewers immediately after each interview ends, with smart timing that prompts scorecard completion while the interview is fresh, rather than relying on interviewers to remember to complete forms hours later when recall accuracy has degraded.

Bias Detection in Scorecard Language

AI tools can analyze the language in scorecard justification fields for patterns consistent with specific biases, identifying evaluator comments that use demographic-proxy language, that are substantively lighter for specific candidate types, or that show significant divergence from other evaluators’ assessments without behavioral evidence basis. This analytical layer supports calibration conversations with specific, documented evidence rather than general awareness prompts.

Predictive Validity Tracking

AI analytics platforms can calculate and track the predictive validity of scorecard ratings at the role type and evaluator level, connecting interview scorecard scores to 12-month performance outcomes to identify which competencies, which evaluators, and which evaluation approaches are most predictive of success. This closes the feedback loop between evaluation quality and hiring outcome quality.


Interview Scorecards and Diversity & Inclusion

Scorecards as the Primary Bias Reduction Tool at the Interview Stage

The interview stage is where the majority of demographic attrition in hiring pipelines occurs, and the interview scorecard is the primary tool available to reduce that attrition through structural equity. By requiring all candidates to be evaluated against the same criteria, using the same evidence standard, scorecards remove the evaluative discretion that is the primary channel through which bias enters hiring decisions. Research on scorecard implementation in organizations with prior evidence of demographic disparity in interview advancement consistently shows reduction in advancement rate gaps between majority and underrepresented candidates.

Avoiding Criteria Bias in Scorecard Design

Scorecards can perpetuate bias if the criteria they measure are themselves biased. Criteria that require specific communication styles, educational backgrounds, or cultural reference frameworks that are more accessible to candidates from specific backgrounds than others are encoding bias into the evaluation structure rather than eliminating it. Scorecard criteria should be audited against the question: “Is this criterion genuinely predictive of performance in this role, or is it a proxy for demographic characteristics that are correlated with prior organizational norms?”

Transparent Feedback to All Candidates

Organizations that use structured scorecards are better positioned to provide specific, substantive feedback to candidates who are not selected, because the evaluation basis is documented and defensible. Providing meaningful feedback to internal and external candidates who are not selected, particularly those from underrepresented groups, builds employer brand and reduces the perception of opaque or arbitrary decision-making that disproportionately affects trust in hiring processes among historically excluded populations.

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| Interviewers completing scorecards from memory hours after the interview | Build completion reminder automation triggered by interview end time; require completion within 60 minutes |
| Panel members converging on the same rating regardless of individual assessment | Require written submission before verbal debrief; treat post-submission debrief as calibration, not consensus-building |
| Scorecard criteria applied inconsistently across interviewers | Run a 30-minute calibration session before the first candidate; review sample evidence descriptions for each rating level |

Real-World Case Studies

Case Study 1: The Consulting Firm

A management consulting firm with 12 hiring managers and no standardized evaluation process implemented competency-mapped scorecards across all consulting role hiring. The scorecards defined five competencies per role family, with four-level behavioral anchor descriptions for each. Inter-rater reliability measured at the first quarter post-implementation was 0.61, compared to a pre-implementation baseline estimate of approximately 0.24 (derived from retrospective analysis of divergent panel assessments in the prior year). Bad hire rate at 12 months fell from 19% to 11% over two annual hiring cohorts.

Case Study 2: The Technology Company

A technology company experiencing a demographic disparity in its engineering interview advancement rates (female candidates advancing from technical interview to final round at 0.61 times the rate of equivalent-scoring male candidates) implemented a restructured scorecard that separated technical capability evaluation from communication style evaluation, with explicit behavioral anchors for each. The communication style criteria were redesigned to evaluate collaborative problem-solving approach (behaviorally defined and role-relevant) rather than assertiveness (which had been the previous implicit standard). The female-to-male advancement rate ratio improved to 0.93 over three hiring cycles.
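The figures 0.61 and 0.93 are ratios of advancement rates between two groups. A minimal sketch of that calculation follows; the function names are illustrative, and the counts in the usage lines are invented purely to show the mechanics, not taken from the case study. This simple ratio also does not control for interview score, which the case study's "equivalent-scoring" comparison would require.

```python
from typing import Tuple


def advancement_rate(advanced: int, interviewed: int) -> float:
    """Share of interviewed candidates who advanced to the next round."""
    if interviewed <= 0:
        raise ValueError("interviewed must be positive")
    return advanced / interviewed


def advancement_ratio(group: Tuple[int, int],
                      reference: Tuple[int, int]) -> float:
    """Ratio of one group's advancement rate to a reference group's rate.

    Each argument is an (advanced, interviewed) pair.
    """
    reference_rate = advancement_rate(*reference)
    if reference_rate == 0:
        raise ValueError("reference group has a zero advancement rate")
    return advancement_rate(*group) / reference_rate


# Invented counts for illustration only: 22 of 100 vs. 36 of 100.
ratio = advancement_ratio((22, 100), (36, 100))
print(f"advancement ratio: {ratio:.2f}")
```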

Case Study 3: The Healthcare Network

A regional healthcare network redesigned its scorecard for nursing roles to be completable on mobile devices, recognizing that nurse managers conducting interviews were almost never at a desktop during their work day. The mobile scorecard, completable in under five minutes, increased scorecard completion rates from 44% (desktop form, completed later) to 91% (mobile form, completed immediately post-interview). Decision quality in debrief conversations improved measurably because evaluators were discussing documented evidence rather than reconstructed impressions.

Building a Scorecard Quality Dashboard: What to Track?

Scorecards Across the Hiring Process

Pre-Search: Criteria Definition

The scorecard process begins before any candidate is evaluated, at the role definition stage, where hiring stakeholders agree on the competencies to be assessed, the weighting of each, and the behavioral anchors that define each rating level. This upfront investment in criteria clarity is where the scorecard earns most of its predictive validity improvement.
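Agreeing on a weighting for each competency implies some way of combining ratings into a total. One common choice, assumed here rather than taken from the text, is a weighted average. The function name and the example competencies in the usage note are hypothetical.

```python
from typing import Dict


def weighted_total(ratings: Dict[str, float],
                   weights: Dict[str, float]) -> float:
    """Weighted average of competency ratings.

    Both dicts must cover exactly the same competencies, so a missing
    rating or an unweighted competency is caught instead of ignored.
    """
    if set(ratings) != set(weights):
        raise ValueError("ratings and weights must cover the same competencies")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(ratings[c] * weights[c] for c in ratings) / total_weight
```

For example, with ratings of 4 for "analysis" and 2 for "communication" and weights of 3 and 1, the weighted total is (4 × 3 + 2 × 1) / 4 = 3.5. Dividing by the sum of the weights keeps the total on the same scale as the individual ratings.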

Interview: Real-Time Evaluation

During the interview, the scorecard guides the interviewer’s question focus and provides a note-taking structure that captures evidence against specific criteria rather than general impressions. Interviewers who complete the scorecard while conducting the interview produce better evaluations than those who conduct the interview and document afterward, because the real-time structure keeps evaluation criteria front of mind.

Post-Interview: Documentation Before Debrief

The most critical scorecard timing rule: documentation before debrief. Interviewers who complete written scorecards independently before any group discussion preserve the evaluative independence that makes multi-interviewer processes valuable. The debrief then serves as a calibration conversation, comparing documented assessments and resolving divergences, rather than a consensus-building conversation that produces groupthink.
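In a hiring tool, the documentation-before-debrief rule can be enforced as a simple gate: the debrief cannot open until every panel member has submitted. The sketch below is a hypothetical illustration of that check, and the function names are not from any specific applicant tracking system.

```python
from typing import Iterable, Set


def missing_scorecards(panel: Iterable[str],
                       submitted: Iterable[str]) -> Set[str]:
    """Panel members who have not yet submitted a written scorecard."""
    return set(panel) - set(submitted)


def can_start_debrief(panel: Iterable[str], submitted: Iterable[str]) -> bool:
    """Allow the debrief only once every panel member has submitted."""
    return not missing_scorecards(panel, submitted)
```

Returning the set of missing names, rather than only a yes or no, makes it easy to send a reminder to exactly the interviewers who are holding up the debrief.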

Post-Hire: Validity Analysis and Iteration

The scorecard’s value is fully realized only when its ratings are connected to post-hire performance outcomes. Organizations that track this connection, identifying which competency ratings most strongly predict performance, which evaluators’ scores are most and least predictive, and which scoring patterns correlate with early attrition, continuously improve their scorecard design based on outcome data.

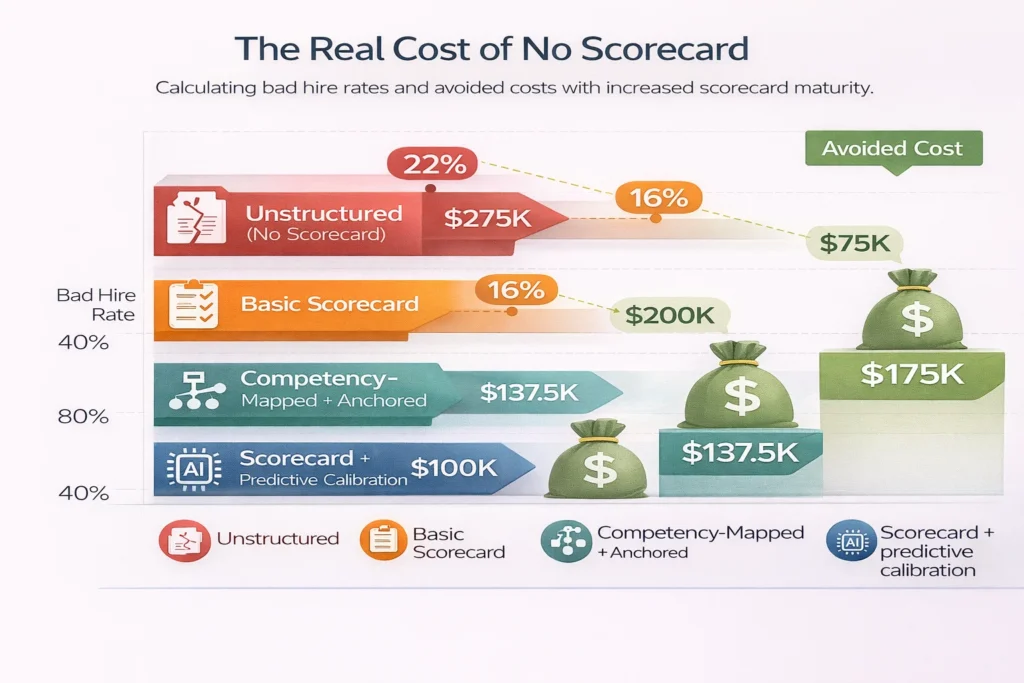

The Real Cost of No Scorecard

| Scenario | Bad Hire Rate | Annual Bad Hire Cost (50 hires) | Est. Annual Avoided Cost with Scorecard |
|---|---|---|---|
| Unstructured (no scorecard) | 22% | $275,000 | n/a |
| Basic scorecard | 16% | $200,000 | $75,000 |
| Competency-mapped + anchored | 11% | $137,500 | $137,500 |
| Scorecard + predictive calibration | 8% | $100,000 | $175,000 |

Bad hire cost assumed at $25,000 per instance.
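The table's cost columns follow from the bad hire rates, 50 hires per year, and the $25,000 assumption. As a sketch, the rows can be regenerated so the same comparison can be rerun with an organization's own figures. The constant and function names are illustrative.

```python
from typing import List, Tuple

HIRES_PER_YEAR = 50
COST_PER_BAD_HIRE = 25_000

# (scenario, bad hire rate in percent), as listed in the table.
SCENARIOS: List[Tuple[str, int]] = [
    ("Unstructured (no scorecard)", 22),
    ("Basic scorecard", 16),
    ("Competency-mapped + anchored", 11),
    ("Scorecard + predictive calibration", 8),
]


def annual_bad_hire_cost(rate_pct: int) -> float:
    """Annual bad hire cost for a given bad hire rate in percent."""
    return HIRES_PER_YEAR * rate_pct / 100 * COST_PER_BAD_HIRE


def cost_table(scenarios: List[Tuple[str, int]]) -> List[Tuple[str, float, float]]:
    """Return (scenario, annual cost, cost avoided vs. the first scenario)."""
    baseline = annual_bad_hire_cost(scenarios[0][1])
    return [
        (name, annual_bad_hire_cost(pct), baseline - annual_bad_hire_cost(pct))
        for name, pct in scenarios
    ]


for name, cost, avoided in cost_table(SCENARIOS):
    print(f"{name}: ${cost:,.0f} per year, ${avoided:,.0f} avoided")
```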

Related Terms

| Term | Definition |
|---|---|
| Structured Interview | A standardized interview format using predefined questions evaluated against explicit criteria |
| Competency Framework | A defined set of skills, behaviors, and attributes that are assessed and developed within an organization |
| Inter-Rater Reliability | The degree of agreement between independent evaluators assessing the same subject using the same criteria |
| Behavioral Anchors | Specific behavioral descriptions associated with each rating level on an evaluation scale |
| Predictive Validity | The degree to which an assessment or evaluation predicts future performance outcomes |

Frequently Asked Questions

How many competencies should an interview scorecard include?

Research on scorecard design and evaluator cognitive load suggests three to six competencies per interview is optimal. Below three, the evaluation lacks sufficient breadth; above six, evaluators struggle to maintain focus and evidence quality degrades across dimensions. For roles requiring assessment of many competencies, the evaluation is better distributed across a multi-stage panel where each interviewer covers a focused subset.

Should scorecards be the same for all roles?

Core structure (rating scale, evidence documentation requirements, completion timing) should be consistent across roles to enable organizational-level quality tracking. Competency criteria should be role-specific, tailored to the skills and behaviors that are genuinely predictive of success in each particular role rather than using a generic universal rubric.

How do you handle significant score divergence between panel members?

Significant divergence (defined as two or more full rating levels between panel members on the same criterion) should be treated as a calibration discussion in debrief rather than averaged away. The goal is not to converge all scores to a consensus but to understand what each evaluator observed that led to their assessment. Sometimes divergence reflects genuinely different candidate behavior in different conversations; sometimes it reflects evaluator calibration differences worth addressing.
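The two-level threshold is easy to check mechanically before a debrief. The following sketch flags criteria where the gap between the highest and lowest panel rating is at least two levels. The function name and input shape (interviewer mapped to their per-criterion ratings) are assumptions for illustration.

```python
from typing import Dict, List

# "Two or more full rating levels", as defined in the answer above.
DIVERGENCE_THRESHOLD = 2


def divergent_criteria(panel_ratings: Dict[str, Dict[str, int]]) -> List[str]:
    """Criteria whose highest and lowest panel ratings differ by >= threshold.

    panel_ratings maps interviewer name -> {criterion: rating}.
    """
    by_criterion: Dict[str, List[int]] = {}
    for ratings in panel_ratings.values():
        for criterion, rating in ratings.items():
            by_criterion.setdefault(criterion, []).append(rating)
    return sorted(
        criterion
        for criterion, values in by_criterion.items()
        if max(values) - min(values) >= DIVERGENCE_THRESHOLD
    )
```

The output is a list of criteria to discuss, not an adjusted score, which keeps the check in line with the advice to explore divergence rather than average it away.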

Can scorecards be used for internal candidates?

Yes, and they should be. Applying identical scorecard evaluation standards to internal and external candidates for the same role is a structural equity requirement for fair internal hiring processes. Internal candidates who are assessed against different (typically lower or more informal) standards than external candidates for equivalent roles face an inequitable process that both undermines DEI goals and produces lower-quality internal hire outcomes.

Do candidates prefer structured scorecard-based processes?

Candidate experience research suggests that candidates who understand why a structured process is being used respond positively to scorecards: they perceive the process as fairer, more professional, and more respectful of their time than unstructured conversations. Candidates who experience structure without explanation sometimes perceive it as impersonal. The solution is transparency: explaining briefly at the start of the interview that the process uses structured evaluation to ensure consistency and fairness.

Conclusion

The interview scorecard is not a bureaucratic imposition on a process that was working fine without it.

It is the structural fix for a process that was producing inconsistent, bias-inflected evaluations and calling the results “judgment.” Organizations that have implemented well-designed scorecards, with specific criteria, behavioral anchors, independent completion protocols, and post-hire validity tracking, have measurably better hiring outcomes than those relying on debrief conversations to synthesize impressions that were formed without a shared framework.

The scorecard does not remove human judgment from hiring. It makes human judgment worth more by giving it something rigorous to work with.