Language is messy, contextual, and deeply human.

Teaching a machine to understand it is one of the most ambitious challenges in artificial intelligence, and Natural Language Processing (NLP) is the field that takes that challenge on. NLP is the branch of AI that enables computers to read, interpret, and generate human language in a way that is actually meaningful rather than purely mechanical.

In recruitment, NLP is already embedded in tools most hiring teams use every day. It powers AI resume screening that goes beyond keyword matching to understand context and intent. It drives chatbot recruiting tools that can hold genuine candidate conversations at scale. It sits behind automated screening systems that parse job descriptions and match them against candidate profiles with increasing accuracy.

Understanding NLP is no longer just for data scientists. For anyone involved in data-driven recruiting, it is quickly becoming essential context for every tool you buy and every process you build.

The core metric governing NLP system quality in recruitment is the Screening Precision Rate: the proportion of candidates flagged as qualified by the NLP system who are confirmed as genuinely qualified after human review.

Screening Precision Rate (%) = (Candidates Confirmed Qualified by Human Review / Total Candidates Forwarded by NLP System) × 100

Best-in-class NLP recruitment systems achieve Screening Precision Rates above 88%, while the industry average across ATS deployments sits closer to 69%. The gap is driven almost entirely by model quality, training data diversity, and the frequency of model calibration, not by the sophistication of the underlying technology stack.

What is Natural Language Processing (NLP)?

Natural Language Processing is a subfield of artificial intelligence concerned with enabling computers to process and understand human language in all its ambiguity, context-dependence, and variation. In recruitment, NLP powers the systems that read a resume and identify the candidate’s most relevant experience, analyze a job description and extract its key requirements, match one against the other, and generate a relevance score that determines whether that candidate enters the human review pipeline.

Modern recruitment NLP operates primarily through transformer-based language models, architectures that represent the current state of the art in understanding contextual language meaning. These models understand that “directed a team” and “managed direct reports” describe the same capability, that “P&L responsibility” implies financial leadership even without the word “finance,” and that the absence of certain terminology does not mean the absence of the underlying skill. This semantic understanding is what distinguishes NLP-based screening from the keyword matching that preceded it, and what makes the quality of the NLP model a genuinely consequential business decision rather than a procurement detail.

Why Natural Language Processing Deserves More Strategic Attention Than It Gets

Most talent acquisition leaders can name their ATS vendor and their sourcing platforms. Fewer can name the NLP model their ATS uses, describe how it was trained, or articulate the last time its outputs were audited against human judgment. This is the strategic gap that defines NLP in most organizations: it is simultaneously one of the highest-leverage and least-examined pieces of recruiting infrastructure operating in the business.

The scale of the oversight is significant. In an organization making 300 hires per year, the NLP layer in the ATS makes the first pass on every one of those processes, before any human sees a resume. If the model’s Screening Precision Rate is 69% (the industry average), approximately 31% of the candidates being forwarded to recruiters are not genuinely qualified.

More critically, if the model’s False Negative Rate, the proportion of genuinely qualified candidates who are filtered out before human review, sits anywhere near the 18-22% range that internal audits at large enterprises have repeatedly identified, the organization is systematically losing one in five qualified candidates before a recruiter ever sees their name.

The ROI math around NLP quality is direct. Consider a technology company filling 200 engineering roles per year at an average time-to-fill of 47 days and a vacancy cost of $350 per role per day. If a better-calibrated NLP model reduces the False Negative Rate from 20% to 8%, meaning 24 additional genuinely qualified candidates enter the pipeline per year, and each of those candidates reduces average time-to-fill by 9 days on the roles they fill, the annual productivity gain is approximately $75,600. Against a model audit and recalibration cost of $12,000, the return exceeds 6:1.
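The arithmetic behind that estimate can be checked with a short sketch. The function and parameter names are illustrative, and the code assumes the 24 additional candidates come from applying the 12-point drop in False Negative Rate to a base of 200, one qualified candidate per open role; the article does not state that base explicitly.

```python
# Worked version of the ROI example. Input values come from the text;
# the function and parameter names are illustrative only.

def nlp_recalibration_roi(additional_qualified_candidates,
                          days_saved_per_candidate,
                          vacancy_cost_per_day,
                          audit_cost):
    """Return (annual_gain, gain_to_cost_ratio)."""
    annual_gain = (additional_qualified_candidates
                   * days_saved_per_candidate
                   * vacancy_cost_per_day)
    return annual_gain, annual_gain / audit_cost


# Assumption: the drop from 20% to 8% is applied to a base of 200
# qualified candidates (one per role), which yields the 24 in the text.
extra_candidates = round((0.20 - 0.08) * 200)

gain, ratio = nlp_recalibration_roi(
    additional_qualified_candidates=extra_candidates,
    days_saved_per_candidate=9,
    vacancy_cost_per_day=350,
    audit_cost=12_000,
)

print(f"Additional qualified candidates: {extra_candidates}")
print(f"Annual gain: ${gain:,.0f}")
print(f"Return on audit cost: {ratio:.1f}:1")
```

Changing any single input, such as the days saved per candidate, shows how sensitive the return is to that one assumption.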

The organizations that have conducted these analyses almost always discover that their NLP default settings were not designed for their specific talent market or hiring context.

The more subtle strategic risk is not false negatives alone but correlated false negatives. NLP models trained on historical hiring data tend to learn the language patterns of historically successful hires, which means they can systematically under-score resumes written in non-dominant English register, resumes from candidates who attended less-prestigious institutions, and resumes from career-changers whose relevant skills are described in the language of their origin field rather than their destination field.

These are not random errors; they are systematic patterns that compound over thousands of screenings into significant demographic effects. Research published in peer-reviewed journals and covered by Harvard Business Review found that leading commercial resume screening models showed statistically significant differential accuracy across demographic groups, with error rates measurably higher for candidates from underrepresented backgrounds in technical roles.

For TA leaders, the practical implication is to treat NLP model selection and auditing as a recruiting infrastructure decision, not a vendor procurement detail. The organizations that have built NLP auditing into their quarterly recruiting review, testing model outputs against human review on a random sample of screened-out candidates, consistently find actionable calibration opportunities and avoid the systematic talent loss that unchecked default settings produce. As data-driven recruiting practices mature across the industry, NLP auditing is becoming a standard component of recruiting operations, not an advanced capability reserved for teams with dedicated analytics resources.

The Psychology Behind NLP in Recruitment

Language Pattern Matching and Who Gets Seen

NLP models learn from the language patterns in their training data, which means they perform best at recognizing language that resembles the language they were trained on. In recruitment contexts, this creates a subtle but consequential dynamic: candidates who write in the dominant professional register of their target field, the specific vocabulary, phrasing conventions, and structural choices overrepresented in historically successful resumes, receive higher relevance scores. Candidates who describe equivalent experience in different language receive lower scores.

This is not a flaw in the technology; it is an accurate reflection of its training distribution. The strategic implication is that NLP screening rewards candidates fluent in the language game of the field, not just the field itself.

The Objectivity Halo Effect

One of the most consistent findings in research on bias in hiring is that automated tools are perceived as more objective than human judges, even when their error patterns are demonstrably systematic. Recruiters working from an NLP-generated shortlist are significantly less likely to go back and manually review the candidates the system filtered out than recruiters who built the shortlist themselves, because the system’s decision carries an implicit legitimacy that a human decision does not. This objectivity halo effect means NLP errors propagate further through the hiring process than equivalent human errors would, because the feedback mechanism for correcting them has been suppressed by the perception of machine neutrality.

Candidate Adaptation and the Arms Race Effect

As NLP-based screening has become widespread, a countervailing behavior has emerged: candidates optimizing their resumes for NLP systems rather than for human readers. The ATS optimization industry, including tools that score resume keyword density against job descriptions, has grown substantially because candidates have correctly identified that Natural Language Processing screening is a distinct evaluation context with its own success criteria.

From a recruiting quality standpoint, this creates a signal contamination problem: resumes optimized for NLP systems score higher on relevance without necessarily representing higher candidate quality. Understanding this dynamic is important for calibrating the weight given to NLP screen scores relative to other evaluation inputs in the process.

NLP vs. Related Recruitment Technologies

| Approach | Mechanism | Accuracy | Bias Risk | Speed |
|---|---|---|---|---|
| NLP-Based Screening | Semantic language analysis | High (when audited) | Moderate (training-data dependent) | Very Fast |
| Keyword Matching | Exact term presence | Low (misses synonyms) | Low | Very Fast |
| Boolean Search | Logical operator queries | Medium | Low | Fast |
| Manual Resume Review | Human judgment | Variable | High (individual bias) | Slow |
| AI-Powered Assessment | Multi-signal behavioral and language | Highest | Lowest (with auditing) | Fast |

What the Experts Say

NLP in hiring is not a filter, it is a lens. The question is not whether the lens is fast, it is. The question is whether the lens was ground from data that reflects the talent you actually want, or the talent you have always had. Those are not the same population, and they do not speak the same resume language.

– Tomas Chamorro-Premuzic

How to Measure NLP Effectiveness in Recruitment

Formulas

Screening Precision Rate (%) = (Candidates Confirmed Qualified by Human Review / Total Candidates Forwarded by NLP System) × 100

False Negative Rate (%) = (Qualified Candidates Filtered Out by NLP System / Total Qualified Candidates in Applicant Pool) × 100
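As a minimal sketch, both metrics can be computed directly from audit counts. The function names and the example counts below are hypothetical and chosen only to illustrate the two formulas.

```python
def screening_precision_rate(confirmed_qualified, total_forwarded):
    """Share of NLP-forwarded candidates confirmed qualified by human review (%)."""
    if total_forwarded <= 0:
        raise ValueError("total_forwarded must be positive")
    return 100.0 * confirmed_qualified / total_forwarded


def false_negative_rate(qualified_filtered_out, total_qualified_in_pool):
    """Share of qualified applicants the NLP system filtered out (%)."""
    if total_qualified_in_pool <= 0:
        raise ValueError("total_qualified_in_pool must be positive")
    return 100.0 * qualified_filtered_out / total_qualified_in_pool


# Hypothetical audit counts, for illustration only.
precision = screening_precision_rate(confirmed_qualified=345, total_forwarded=500)
fnr = false_negative_rate(qualified_filtered_out=40, total_qualified_in_pool=200)

print(f"Screening Precision Rate: {precision:.1f}%")
print(f"False Negative Rate: {fnr:.1f}%")
```

The two metrics use different denominators: precision is measured over the candidates the system forwarded, while the False Negative Rate is measured over every qualified applicant in the pool, including those the system rejected.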

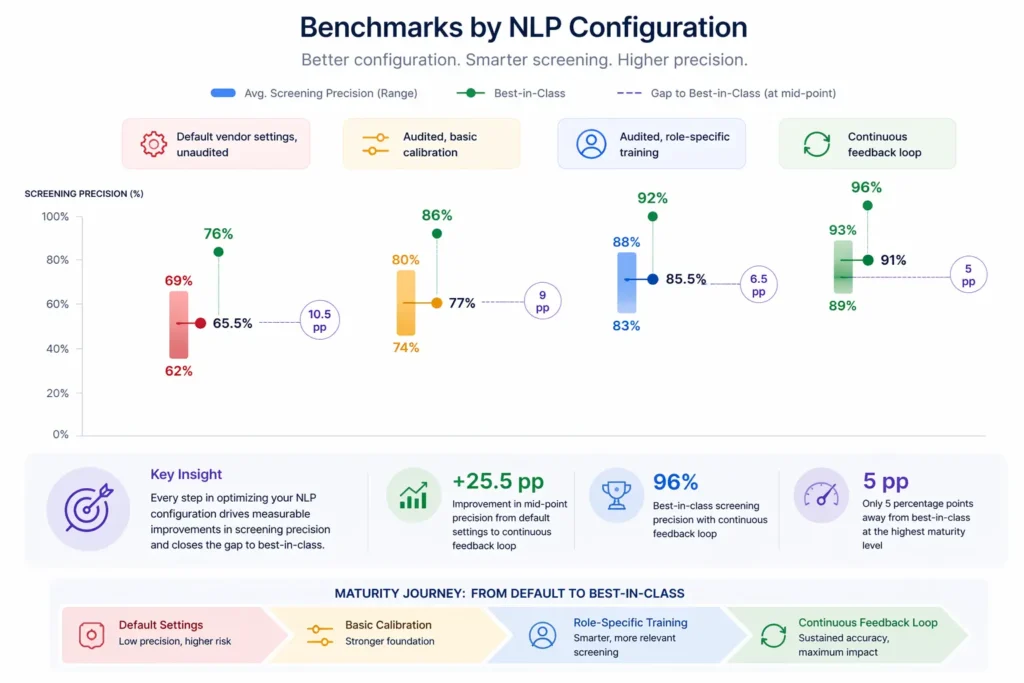

Benchmarks by NLP Configuration

| Configuration | Avg. Screening Precision | Best-in-Class |
|---|---|---|
| Default vendor settings, unaudited | 62-69% | 76% |
| Audited, basic calibration | 74-80% | 86% |
| Audited, role-specific training | 83-88% | 92% |
| Continuous feedback loop | 89-93% | 96% |

Key Strategies for Effective NLP Use in Recruitment

How Can AI and Automation Support NLP in Recruitment?

Semantic Resume Parsing and Structured Data Extraction

AI-powered semantic parsing tools can extract structured information from unstructured resume text, identifying not just that a candidate has “eight years of experience” but that those eight years involved progressive leadership roles in a specific sector with specific technical skill exposure at each stage. This structured data extraction, when integrated with a candidate management system, enables the kind of precise filtering and comparison that keyword-based systems could not support.

Real-Time Bias Detection and Mitigation

Advanced AI systems can monitor Natural Language Processing screening outputs in real time for demographic disparity signals, identifying whether candidates from specific demographic groups are being filtered at systematically higher rates, and flag these patterns for human review before they propagate through the pipeline. This real-time audit layer converts bias detection from a retrospective exercise into a proactive quality control process that prevents systematic filtering from accumulating into significant representation gaps.
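One simple form such a disparity check could take is comparing each group's forwarding rate with the highest group's rate and flagging any ratio below a threshold. The sketch below is an illustration, not a description of any vendor's monitoring layer: the group labels and counts are hypothetical, and the 0.8 default threshold is an assumption borrowed from the four-fifths rule of thumb used in adverse impact analysis.

```python
from collections import Counter


def forwarding_rates(decisions):
    """decisions: iterable of (group_label, was_forwarded) pairs."""
    totals, forwarded = Counter(), Counter()
    for group, was_forwarded in decisions:
        totals[group] += 1
        if was_forwarded:
            forwarded[group] += 1
    return {group: forwarded[group] / totals[group] for group in totals}


def flag_disparities(rates, threshold=0.8):
    """Return groups whose rate, relative to the highest rate, is below threshold."""
    highest = max(rates.values())
    if highest == 0:
        return {}
    return {
        group: rate / highest
        for group, rate in rates.items()
        if rate / highest < threshold
    }


# Hypothetical batch of screening decisions.
batch = (
    [("group_a", True)] * 50 + [("group_a", False)] * 50
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

rates = forwarding_rates(batch)
flags = flag_disparities(rates)
print(rates)
print(flags)
```

Run on each new batch of screening decisions, a check like this surfaces a gap for human review; it does not by itself establish whether the gap reflects bias or a genuine difference in the applicant pool.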

Job Description Optimization and Language Analysis

NLP tools can analyze job descriptions for unnecessarily exclusive language, complexity scores that deter non-native English speakers, and gender-coded terminology that research has shown to reduce application rates from specific demographic groups. These tools compare job description language against inclusive posting corpora and flag specific phrases for revision, producing more accessible and effective postings without requiring specialized expertise from the TA team.

Conversational AI and Natural Language Candidate Engagement

NLP-powered chatbots can conduct preliminary candidate screening conversations in natural language, asking role-specific questions, evaluating response quality, and producing structured outputs for recruiter review, at a scale and consistency that human screeners cannot match. These systems are increasingly sophisticated in their ability to distinguish between candidates who are genuinely qualified and those pattern-matching to expected answers, making them a meaningful component of the modern automated screening toolkit.

Natural Language Processing and Equitable Hiring Practices

Training Data Bias and Systematic Demographic Filtering

The most significant equitable hiring risk associated with NLP is training data homogeneity. NLP models trained primarily on resumes from historically successful hires will learn to recognize the language patterns of those candidates as signals of quality and will systematically under-score candidates whose experience is equivalent but whose language reflects different educational backgrounds, geographic origins, or career paths.

The corrective intervention is auditing: measuring model output accuracy separately across demographic groups, identifying disparity patterns, and retraining models on more diverse evaluation datasets. Blind hiring approaches can serve as a useful parallel process to assess whether NLP outputs correlate with demographic signals that should have no bearing on qualification.
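Measuring accuracy separately across groups can be sketched as a per-group False Negative Rate over human-reviewed audit records. The record layout, group labels, and counts below are hypothetical and serve only to show the calculation.

```python
from collections import defaultdict


def false_negative_rate_by_group(records):
    """records: iterable of (group, human_says_qualified, nlp_forwarded).

    Returns the False Negative Rate (%) for each group, computed only
    over candidates the human reviewer judged qualified.
    """
    qualified = defaultdict(int)
    missed = defaultdict(int)
    for group, is_qualified, was_forwarded in records:
        if not is_qualified:
            continue  # unqualified candidates do not enter this metric
        qualified[group] += 1
        if not was_forwarded:
            missed[group] += 1
    return {g: 100.0 * missed[g] / qualified[g] for g in qualified}


# Hypothetical audit sample.
audit_records = (
    [("group_a", True, True)] * 45 + [("group_a", True, False)] * 5
    + [("group_b", True, True)] * 35 + [("group_b", True, False)] * 15
    + [("group_a", False, False)] * 50 + [("group_b", False, False)] * 50
)

fnr_by_group = false_negative_rate_by_group(audit_records)
for group, rate in sorted(fnr_by_group.items()):
    print(f"{group}: {rate:.1f}% of qualified candidates filtered out")
```

A large gap between groups in this output is the kind of disparity pattern that would justify retraining or recalibration.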

Language Accessibility and Non-Native Speaker Disadvantage

Candidates who are not native English speakers, or whose professional socialization occurred in a context where different language conventions apply, are systematically disadvantaged by NLP models trained on native-speaker resume corpora. This disadvantage does not reflect the candidate’s actual job capability, only their language representation of it. Organizations targeting international talent pools or underrepresented communities should explicitly test their NLP models for non-native speaker accuracy gaps and apply appropriate score adjustments or secondary review protocols for candidates flagged in this category.

Representation in Job Description Language and Application Rates

Natural Language Processing operates bidirectionally in the application process: it influences how applications are scored, and through job description optimization, it shapes who applies in the first place. Research consistently shows that job descriptions containing unnecessarily gendered language, excessive credential requirements, or cultural insider terminology produce demographic imbalances in application pools before any screening occurs. Using NLP to audit and optimize job description language is therefore as important an equitable hiring intervention as auditing the screening model itself, and it operates at a stage where correction costs nothing compared to rebuilding a biased shortlist.

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| NLP model producing a high volume of false negatives for a specific role type | Conduct a role-specific calibration exercise using examples of strong and weak candidates in that role family; adjust semantic weighting for domain-specific terminology |
| Candidates gaming the NLP system through keyword stuffing | Add a secondary human review for candidates with unusually high relevance scores relative to their overall profile quality; use behavioral assessments as a complementary signal |
| Job description language producing a demographically narrow applicant pool | Apply NLP job description auditing tools to identify and replace exclusive language before posting; benchmark applicant pool demographics against market availability data |

Real-World Case Studies

Case Study 1: The Logistics Enterprise

A 15,000-employee logistics company implemented a new NLP-powered ATS and saw time-to-shortlist drop from 18 days to 4 days. Twelve months later, a quality audit revealed that the False Negative Rate for warehouse operations manager candidates was 24%, meaning nearly one in four genuinely qualified candidates had been filtered out. The root cause was a training dataset drawn primarily from white-collar professional services resumes that did not include the operational language used by strong candidates from field operations backgrounds. A role-specific retraining exercise reduced the False Negative Rate to 9%, and the post-retraining shortlists consistently ranked higher in hiring manager satisfaction scores by an average of 21 percentage points.

Case Study 2: The Technology Scale-Up

A 500-person technology company applied NLP job description analysis to all 40 of its active postings and found that 28 contained terminology rated as gender-coded by language analysis benchmarks. They revised the language in those 28 postings and re-published without other changes to the sourcing strategy. Female applicant rates across the revised postings increased by 31% in the following 60 days, with no change in overall application quality scores. The analysis cost less than $3,000 in tool licensing and recruiter time, while the pipeline impact translated to six additional qualified female candidates in final-stage interviews within the quarter.

Case Study 3: The Healthcare System

A regional healthcare system deployed an NLP-powered chatbot for initial registered nurse screening, automating the collection and evaluation of responses to twelve standardized clinical competency questions. The chatbot processed 340 applications in a three-week high-volume hiring period, producing structured evaluation outputs for each. Recruiters reviewed chatbot summaries rather than full applications, reducing initial screening time by 72%. In a blind comparison, hiring managers rated shortlist quality as equivalent to that of manually screened shortlists, which supports the NLP system’s ability to match human initial screening quality at a fraction of the time and resource cost.

Measuring Natural Language Processing Success: Key Performance Indicators

NLP Across the Recruitment Lifecycle

Job Description Development Stage

NLP’s influence on a recruitment process begins before the first application arrives. Job description language directly determines the NLP matching parameters for all candidate scoring downstream, and Natural Language Processing tools applied to job descriptions at the drafting stage can identify unnecessarily exclusive language, calibrate terminology to what candidates actually use, and optimize descriptions for search engine visibility. This upstream application of NLP is one of the highest-leverage and least-utilized capabilities in the standard recruiting toolkit.

Resume Screening and Shortlisting Stage

This is where NLP delivers its most visible efficiency impact, processing thousands of applications against a defined relevance model and producing a ranked shortlist in minutes. The quality of this output is entirely dependent on model quality, training data calibration, and the appropriateness of the matching parameters for the specific role. Organizations that treat the Natural Language Processing shortlist as a starting point for human judgment, rather than a final verdict, consistently achieve better outcomes than those using it as a hard filter with no human review of edge cases.

Interview and Candidate Engagement Stage

Natural Language Processing capabilities extend into the interview process through transcript analysis tools that extract key themes from recorded interviews, chatbot systems that conduct structured preliminary conversations, and sentiment analysis tools that evaluate candidate communication quality at scale. These applications reduce the administrative burden of interview documentation while providing structured data outputs that support more consistent evaluation across a panel of interviewers with different evaluation habits.

Post-Hire Feedback and Model Improvement Stage

The most underutilized phase of NLP application in recruitment is the feedback loop between hire outcomes and model calibration. Organizations that systematically feed performance data (twelve-month ratings, retention outcomes, and manager evaluations) back into their NLP calibration process build models that improve continuously rather than degrading against a static training baseline. This feedback loop transforms the NLP model from a fixed infrastructure component into a learning system that becomes more accurate as the organization accumulates hiring experience.

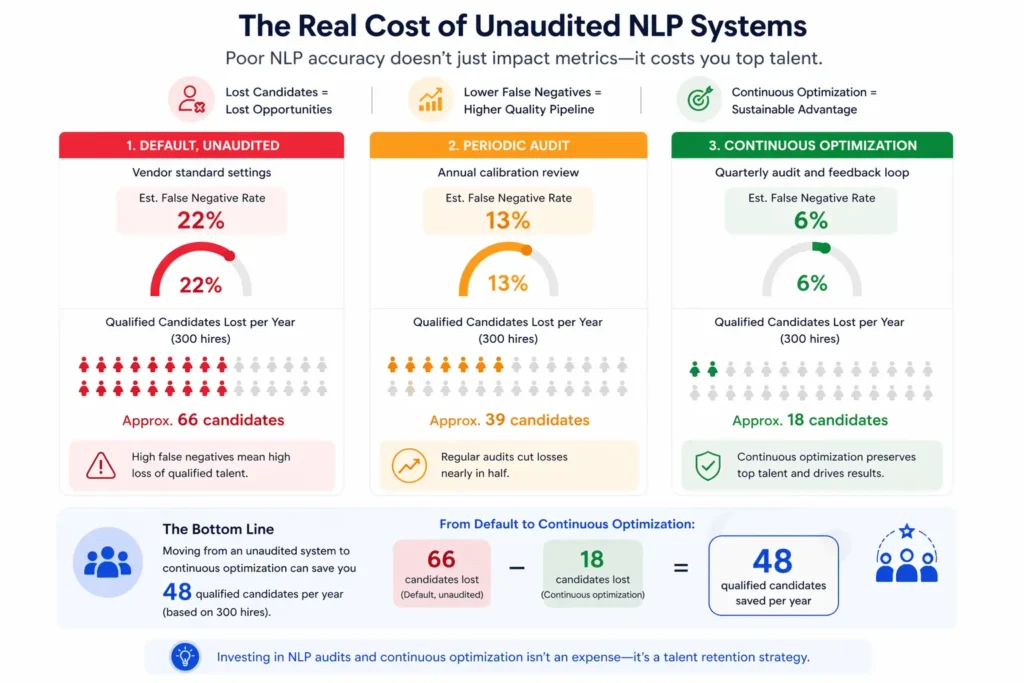

The Real Cost of Unaudited NLP Systems

| Scenario | NLP Configuration | Est. False Negative Rate | Qualified Candidates Lost per Year (300 hires) |
|---|---|---|---|
| Default, unaudited | Vendor standard settings | 22% | Approx. 66 candidates |
| Periodic audit | Annual calibration review | 13% | Approx. 39 candidates |
| Continuous optimization | Quarterly audit and feedback loop | 6% | Approx. 18 candidates |

Assumes an applicant pool where 35% of applicants are genuinely qualified. Candidates “lost” represent those filtered before human review who would have been competitive shortlist candidates.
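The table's figures can be reproduced by multiplying each False Negative Rate by 300. The sketch below makes that reading explicit; treating the 300 annual hires as the base of qualified candidates is an assumption inferred from the arithmetic, and the 35% qualified share is not needed to arrive at these numbers.

```python
# Assumption: the base is 300 qualified candidates per year (one per hire),
# inferred from the table's arithmetic rather than stated in the article.
BASE_QUALIFIED_CANDIDATES = 300

# False Negative Rates taken from the table.
scenarios = {
    "Default, unaudited": 0.22,
    "Periodic audit": 0.13,
    "Continuous optimization": 0.06,
}


def candidates_lost(false_negative_rate, base=BASE_QUALIFIED_CANDIDATES):
    """Qualified candidates filtered out per year at a given False Negative Rate."""
    return round(false_negative_rate * base)


lost = {name: candidates_lost(rate) for name, rate in scenarios.items()}
for name, count in lost.items():
    print(f"{name}: approx. {count} candidates")
```

Substituting a different base, such as an organization's own estimate of qualified applicants per year, scales every row proportionally.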

Related Terms

| Term | Definition |
|---|---|
| AI Resume Screening | The use of artificial intelligence to evaluate and score resumes against role requirements before human review |
| Boolean Search | An advanced search technique using logical operators (AND, OR, NOT) to filter candidate databases and professional networks |
| Automated Screening | The process of using technology to evaluate candidates against defined criteria without individual human review of each application |
| Semantic Search | A search methodology that understands the meaning and context of a query rather than matching exact keyword strings |
| Applicant Tracking System (ATS) | Software that manages the end-to-end recruitment process from job posting through offer, typically incorporating NLP for resume parsing |

Frequently Asked Questions

What is Natural Language Processing in recruitment?

Natural Language Processing in recruitment is the application of AI language technology to tasks across the hiring process, including resume parsing, job description analysis, candidate-role matching, chatbot screening, and interview transcript analysis. NLP enables recruiting software to understand the meaning of language rather than matching exact keywords, which substantially improves candidate-role matching accuracy for roles with varied candidate backgrounds.

Is NLP biased in hiring?

NLP systems can reflect and amplify biases present in their training data. Models trained primarily on resumes from historically successful hires tend to score candidates who write in similar language more highly, which can produce systematic demographic disparities. Regular auditing against demographic outcomes is the standard approach to identifying and correcting these patterns before they compound into significant representation gaps.

How does NLP differ from keyword-based screening?

Keyword matching requires an exact term to be present in a document to register a match. NLP understands semantic meaning, so a resume describing “managed cross-functional teams” can match a job description requiring “led cross-departmental projects” without containing the specified words. This semantic understanding is both NLP’s primary advantage and, when miscalibrated, a source of systematic errors that are harder to diagnose than simple keyword mismatches.

Can candidates game NLP screening systems?

Candidates who mirror the specific language of a job description in their resume will typically score higher in NLP screening than candidates who describe equivalent experience in different language. The ATS optimization industry has grown specifically because candidates have identified this dynamic. Organizations address this by combining NLP scoring with assessment tools that evaluate capability independently of language pattern matching, reducing the advantage of optimization behavior.

How do organizations audit their NLP models?

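The most practical NLP audit approach is a False Negative audit: take a random sample of candidates filtered out by the NLP system before human review, have a recruiter evaluate them manually, and calculate the proportion who were genuinely qualified. That proportion is the share of rejections that were mistaken; scaling it to the full set of filtered-out candidates and comparing the result with the number of qualified candidates who were forwarded gives an estimate of the False Negative Rate as defined above. Organizations with access to performance data can also run retrospective analyses comparing NLP scores to twelve-month performance ratings for hired candidates, building a feedback signal that directly improves model calibration over time.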
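A minimal sketch of how an audit sample might be turned into a False Negative Rate estimate follows. The function name, parameter names, and counts are hypothetical, and the estimate assumes the sample of filtered-out candidates was drawn at random.

```python
def estimate_false_negative_rate(sample_size,
                                 sample_found_qualified,
                                 total_filtered_out,
                                 qualified_forwarded):
    """Estimate the False Negative Rate (%) from a random audit sample.

    sample_size: filtered-out candidates manually reviewed
    sample_found_qualified: how many of those were judged qualified
    total_filtered_out: all candidates the NLP system filtered out
    qualified_forwarded: forwarded candidates confirmed qualified by humans
    """
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    # Share of reviewed rejections that turned out to be qualified.
    mistaken_rejection_share = sample_found_qualified / sample_size
    # Scale the sample finding up to every filtered-out candidate.
    estimated_missed = mistaken_rejection_share * total_filtered_out
    estimated_total_qualified = estimated_missed + qualified_forwarded
    if estimated_total_qualified == 0:
        raise ValueError("no qualified candidates found in either group")
    return 100.0 * estimated_missed / estimated_total_qualified


# Hypothetical audit: 10 of 200 sampled rejections were qualified.
fnr_estimate = estimate_false_negative_rate(
    sample_size=200,
    sample_found_qualified=10,
    total_filtered_out=4000,
    qualified_forwarded=800,
)
print(f"Estimated False Negative Rate: {fnr_estimate:.1f}%")
```

In this hypothetical, only 5% of sampled rejections were mistaken, yet the estimated False Negative Rate is far higher because the rejected group is much larger than the forwarded one. Small samples give noisy estimates, so the sample size is worth choosing with that in mind.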

Conclusion

Natural Language Processing is not a feature in a software package.

It is the decision engine running at the front of your hiring process, making consequential quality judgments on thousands of candidates before any human is involved.

The organizations that treat it accordingly, auditing its outputs, calibrating it against their specific talent markets, and monitoring its demographic effects, consistently achieve higher shortlist quality, lower time-to-fill, and more equitable candidate pipelines than those running unexamined vendor defaults. NLP will only become more central to recruitment infrastructure as AI capabilities advance.

The question is not whether to use it, but whether you are using it with enough scrutiny to justify the trust you are placing in it.