Scheduling a thirty-minute phone screen with ten candidates sounds manageable until you are actually doing it. The back-and-forth emails, the no-shows, the time zones, the calendar conflicts. It adds up fast, and most of that friction happens before you have learned a single useful thing about any of the candidates.

One-way video interviews were built to fix exactly that.

A one-way video interview, also called an asynchronous video interview, is a format where candidates record their responses to pre-set questions on their own time, without a live interviewer on the other end. For hiring teams managing high applicant volumes, it is one of the most practical tools available for early-stage screening without sacrificing candidate experience.

The format connects naturally with broader asynchronous interview practices that are becoming standard across remote and hybrid hiring processes. It reduces scheduling overhead, gives candidates flexibility, and feeds into a more structured, data-driven recruiting approach where every early-stage evaluation follows the same format.

This guide covers how one-way video interviews work, when to use them, and how to make the experience work for both sides of the hiring process.

The core metric governing one-way video interview programs is Screening Conversion Rate: the proportion of video submissions that advance to the next evaluation stage.

Screening Conversion Rate (%) = (Candidates Advanced / Total Video Submissions Reviewed) x 100

Top-performing screening programs using one-way video maintain Screening Conversion Rates of 18-25%, indicating well-targeted sourcing and calibrated evaluation criteria. Rates below 10% typically signal over-broad sourcing or poorly defined criteria. Rates above 35% suggest insufficient screening rigor for the stage.
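As a rough illustration, the formula and the bands above can be expressed in a few lines of Python. This is a minimal sketch: the function names and the example counts are assumptions made for illustration, and rates that fall between the named bands are simply reported as carrying no specific signal, since the text does not classify them.

```python
def screening_conversion_rate(advanced: int, reviewed: int) -> float:
    """Return the Screening Conversion Rate as a percentage."""
    if reviewed <= 0:
        raise ValueError("reviewed must be positive")
    return advanced / reviewed * 100


def interpret_rate(rate: float) -> str:
    """Map a rate onto the bands quoted in the text (hypothetical helper)."""
    if rate < 10:
        return "below 10%: possible over-broad sourcing or poorly defined criteria"
    if 18 <= rate <= 25:
        return "18-25%: top-performing range"
    if rate > 35:
        return "above 35%: possible insufficient screening rigor"
    return "between named bands: no specific signal given"


# Illustrative counts only, not taken from a real program.
rate = screening_conversion_rate(advanced=68, reviewed=340)
print(f"{rate:.1f}% -> {interpret_rate(rate)}")
```

Treating the in-between ranges as unclassified is a deliberate choice here; a team would normally set its own thresholds per role and stage.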

What is a One-Way Video Interview?

A one-way video interview is a structured, asynchronous screening tool in which a candidate records video responses to a fixed set of interviewer questions, using a dedicated platform, and submits those recordings for reviewer evaluation without real-time interaction. The candidate controls when and where they record their responses, within the timeframe set by the hiring organization, and evaluators review responses at a separate time using a shared evaluation rubric.

The format is distinct from both the traditional phone screen, which is synchronous and audio-only, and the live video interview, which is synchronous and requires mutual scheduling. The one-way format decouples the candidate’s performance from the evaluator’s availability entirely, which is simultaneously its greatest operational advantage and its most significant candidate experience risk. Candidates who are unfamiliar with the format or who receive insufficient instructions may experience the absence of a human on the other side as cold or impersonal, regardless of the quality of the opportunity being presented.

What makes one-way video interviews genuinely more than a convenience tool is the evaluator consistency they create. When all candidates in a screening cohort respond to identical questions in the same format, evaluators are comparing equivalent inputs rather than impressions shaped by conversational dynamics, question sequencing variance, or individual interviewer rapport. This consistency is a meaningful structural improvement over phone screen quality, which varies significantly based on how skilled the individual recruiter is at extracting comparable information across different conversations.

Why the One-Way Video Interview Is a Competitive Differentiator in Modern Hiring

The business case for one-way video interviewing is stronger than most organizations have realized, and the gap between organizations using it effectively and those applying it haphazardly is measurable in both efficiency and quality outcomes.

On the efficiency side, the data is unambiguous. A phone screen for a single candidate, including scheduling, the call itself, and documentation, consumes an average of 45-60 minutes of recruiter time. A one-way video interview, once the question set is built, requires approximately 12-15 minutes of reviewer time per candidate for a standard three-question submission. For an organization screening 500 candidates per quarter across open roles, that saving of 30 to 48 minutes per candidate adds up to roughly 250 to 400 hours of recruiter capacity per quarter, capacity that can be redirected toward the candidate engagement and relationship-building work that AI cannot replicate.
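The capacity estimate can be checked with simple arithmetic. The sketch below uses only the per-candidate time ranges and the 500-candidate volume quoted above; the function name is hypothetical, and the one-time cost of building the question set is assumed to be excluded.

```python
CANDIDATES_PER_QUARTER = 500
PHONE_SCREEN_MIN = (45, 60)  # minutes per candidate (low, high), from the text
VIDEO_REVIEW_MIN = (12, 15)  # minutes per candidate (low, high), from the text


def hours_saved(candidates: int, phone_minutes: int, video_minutes: int) -> float:
    """Recruiter hours saved when video review replaces a phone screen."""
    return candidates * (phone_minutes - video_minutes) / 60


# Conservative case: shortest phone screen versus longest video review.
low = hours_saved(CANDIDATES_PER_QUARTER, PHONE_SCREEN_MIN[0], VIDEO_REVIEW_MIN[1])
# Optimistic case: longest phone screen versus shortest video review.
high = hours_saved(CANDIDATES_PER_QUARTER, PHONE_SCREEN_MIN[1], VIDEO_REVIEW_MIN[0])

print(f"Estimated saving: {low:.0f} to {high:.0f} hours per quarter")
```

Under these assumptions the saving lands between 250 and 400 hours per quarter.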

The quality case is less widely cited but equally compelling. Research from Harvard Business Review on structured interviews consistently shows that standardized question sets evaluated against explicit criteria produce significantly higher predictive validity for job performance than unstructured conversations. The one-way video format enforces question standardization by design: every candidate answers the same questions, in the same order, with the same response time limit. The result is a comparison dataset that is structurally more consistent than any phone screen process in which individual recruiters drive the conversation.

Candidate experience quality is the most frequently underestimated variable in one-way video implementation. The format’s efficiency only delivers its full ROI if candidates actually complete their submissions. According to research published in the Journal of Applied Psychology, completion rates for one-way video interviews fall sharply when technical instructions are unclear, response time per question exceeds three minutes, or the number of questions exceeds five.

Organizations that optimize for completion through clear technical guidance, concise question design, and mobile-friendly submission interfaces achieve completion rates of 70-80%. Organizations that skip this work routinely see completion rates below 50%, which can erase the efficiency gain, since the time saved on screening is offset by the time required to rebuild the pipeline for roles where candidates dropped out.

For TA leaders, the practical conclusion is that one-way video is not a drop-in replacement for the phone screen. It is a format that requires deliberate design, candidate communication investment, and evaluation training to deliver its quality and efficiency promise.

The Psychology Behind One-Way Video Interviews

Performance Anxiety and the Missing Audience

The absence of a live human interlocutor produces a paradoxical effect: for candidates who rely on social feedback cues to calibrate their responses, having no one to respond to increases rather than decreases performance anxiety. Without nodding, verbal acknowledgment, or conversational flow, candidates in one-way video interviews report feeling uncertain whether their responses are landing, which creates a form of social anxiety that is distinct from traditional interview nerves but just as inhibiting. Organizations that address this directly in their candidate-facing communications, explicitly acknowledging that the format feels unfamiliar and providing specific guidance on how to approach it, see meaningfully higher completion rates and self-reported candidate satisfaction.

Preparation Asymmetry and Authenticity Trade-offs

The one-way video format allows candidates unlimited preparation time before recording, which is a structural feature that simultaneously enables stronger, more articulate responses and introduces concerns about response authenticity. Evaluators must calibrate their assessment accordingly, recognizing that a candidate’s one-way video performance reflects their best-prepared self rather than their unrehearsed communication style. This is not a defect in the format; it is a design feature, since the hiring organization similarly presents its best-prepared self in the job description and employer brand. The calibration challenge is ensuring evaluators assess content and reasoning quality rather than production value alone.

Evaluator Cognitive Load and Consistency Risk

Video fatigue in evaluators reviewing large volumes of one-way submissions introduces a consistency risk that the format is designed to mitigate but does not eliminate. Reviewers watching their twentieth submission in a session evaluate candidates with measurably less care than those reviewing their third or fourth, producing sequential position bias equivalent to decision fatigue in face-to-face interview panels. Organizations that implement review limits, require completion of evaluation rubrics immediately after each submission, and distribute review across multiple evaluators for key roles dramatically reduce this consistency risk. The interview scorecard is the evaluator-side tool that transforms a viewing session into a comparable evaluation dataset.

One-Way Video Interview vs. Related Screening Methods

| Method | Scheduling Required | Candidate Flexibility | Evaluator Time | Scalability | Evaluation Consistency |
|---|---|---|---|---|---|
| One-Way Video Interview | No | High | Low (12-15 min per candidate) | Very high | High |
| Live Phone Screen | Yes | Medium | High (45-60 min per candidate) | Low | Moderate |
| Live Video Interview | Yes | Medium | High (60-90 min per candidate) | Low | Moderate |
| Written Assessment | No | High | Low (10-20 min per candidate) | High | High |
| In-Person Interview | Yes | Low | Very high (90-120 min per candidate) | Very low | Moderate |

What the Experts Say

The best one-way video interview process I have seen treats the candidate as an audience of one. The question design, the instructions, and the submission experience are all built with the specific person in mind, not the process. That is the difference between an efficient screening tool and an efficient candidate exit ramp.

– Matt Alder, Host, The Recruiting Future Podcast; Author of Exceptional Talent

How to Measure One-Way Video Interview Effectiveness

Formulas

Screening Conversion Rate (%) = (Candidates Advanced / Total Submissions Reviewed) x 100

Submission Completion Rate (%) = (Completed Submissions / Invitations Sent) x 100

Review-to-Decision Time (hours) = Time of Evaluation Decision - Time of Submission
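The completion and timing formulas translate directly into code. The following is a minimal sketch; the function names, the invitation counts, and the timestamps are illustrative assumptions rather than figures from any particular platform.

```python
from datetime import datetime


def submission_completion_rate(completed: int, invitations: int) -> float:
    """Completed submissions as a percentage of invitations sent."""
    if invitations <= 0:
        raise ValueError("invitations must be positive")
    return completed / invitations * 100


def review_to_decision_hours(submitted_at: datetime, decided_at: datetime) -> float:
    """Hours elapsed between a submission and its evaluation decision."""
    return (decided_at - submitted_at).total_seconds() / 3600


# Hypothetical example values.
completion = submission_completion_rate(completed=380, invitations=500)
lag = review_to_decision_hours(
    submitted_at=datetime(2024, 3, 4, 9, 0),
    decided_at=datetime(2024, 3, 5, 15, 30),
)

print(f"Completion rate: {completion:.0f}%")
print(f"Review-to-decision time: {lag:.1f} hours")
```

In practice both timestamps should be recorded in the same time zone (or as UTC) so that the subtraction is meaningful.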

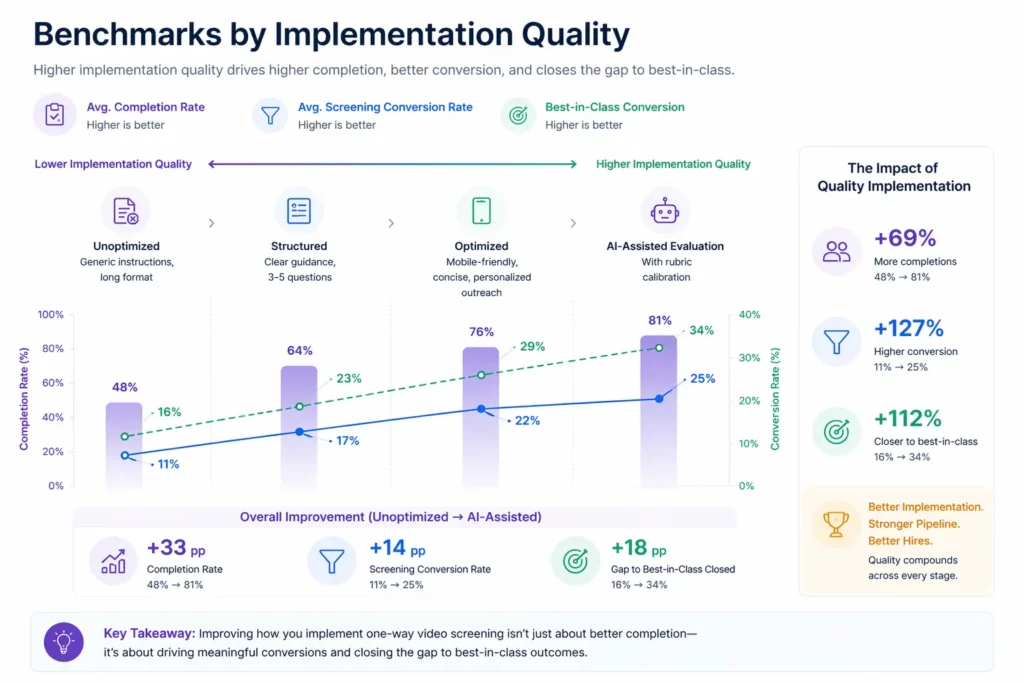

Benchmarks by Implementation Quality

| Implementation Quality | Avg. Completion Rate | Avg. Screening Conversion Rate | Best-in-Class Conversion |
|---|---|---|---|
| Unoptimized (generic instructions, long format) | 48% | 11% | 16% |
| Structured (clear guidance, 3-5 questions) | 64% | 17% | 23% |
| Optimized (mobile-friendly, concise, personalized outreach) | 76% | 22% | 29% |
| AI-assisted evaluation with rubric calibration | 81% | 25% | 34% |

Key Strategies for Effective One-Way Video Interviews

How Can AI and Automation Support One-Way Video Interviews?

AI-Powered Response Analysis

Natural language processing tools can analyze the verbal content of candidate video submissions, identifying key competency evidence, response structure quality, and communication clarity signals that provide evaluators with structured pre-reading before their own review. This analytical layer does not replace human judgment; it reduces the cognitive load on evaluators by organizing submission content and flagging specific moments in recordings for closer attention, compressing effective review time without reducing evaluation quality.

Automated Scheduling and Invitation Management

Workflow automation platforms can trigger one-way video invitations at the appropriate pipeline stage, send reminder communications to candidates who have not yet submitted, and notify reviewers when new submissions are ready for evaluation, all without manual recruiter intervention. This automation is particularly valuable in high-volume screening scenarios where manual coordination would consume recruiter time that should be concentrated on higher-value interactions.

Evaluation Rubric Enforcement and Consistency Tracking

AI-powered evaluation platforms can track inter-rater consistency across reviewers evaluating the same submission cohort, flagging significant divergences in assessor scores for calibration follow-up. Over time, this consistency tracking builds a dataset that identifies which evaluators are systematically over- or under-scoring specific competency dimensions, providing development intelligence that improves evaluation quality across hiring cycles.
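A simple version of this consistency check does not require a dedicated platform. The sketch below assumes a 1-5 rubric scale, made-up scores, and an arbitrary divergence threshold of two points; it flags submissions where reviewers disagree widely and estimates whether each reviewer tends to score above or below the group average.

```python
from statistics import mean

# Hypothetical rubric scores (1-5 scale), keyed by submission, then reviewer.
scores = {
    "submission_a": {"reviewer_1": 4, "reviewer_2": 4, "reviewer_3": 5},
    "submission_b": {"reviewer_1": 2, "reviewer_2": 5, "reviewer_3": 3},
    "submission_c": {"reviewer_1": 3, "reviewer_2": 3, "reviewer_3": 2},
}
MAX_SPREAD = 2  # assumed threshold: a gap this large triggers calibration


def flag_divergent(scores: dict, max_spread: int) -> list:
    """Return submissions whose highest and lowest scores differ too much."""
    return sorted(
        submission
        for submission, by_reviewer in scores.items()
        if max(by_reviewer.values()) - min(by_reviewer.values()) >= max_spread
    )


def reviewer_offsets(scores: dict) -> dict:
    """Average gap between each reviewer's score and the submission mean."""
    deltas = {}
    for by_reviewer in scores.values():
        average = mean(by_reviewer.values())
        for reviewer, score in by_reviewer.items():
            deltas.setdefault(reviewer, []).append(score - average)
    return {reviewer: round(mean(d), 2) for reviewer, d in deltas.items()}


print("Needs calibration:", flag_divergent(scores, MAX_SPREAD))
print("Reviewer offsets:", reviewer_offsets(scores))
```

A positive offset suggests a reviewer who scores above their peers on average, a negative one the opposite. With only a handful of submissions these offsets are noisy, so they are better read as prompts for a calibration conversation than as verdicts.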

Bias Identification in Evaluation Patterns

AI analysis of evaluation outcomes across submission cohorts can identify patterns of demographic differential scoring in one-way video reviews, flagging potential evaluator bias before it becomes a systemic hiring outcome. For example, if evaluators consistently rate submissions from candidates with international accents lower on “communication clarity” despite equivalent verbal content quality, this pattern can be surfaced for calibration discussion and evaluator training. This connects directly to the broader challenge of bias in hiring processes.

One-Way Video Interview and Inclusive Talent Practices

Geographic and Scheduling Accessibility

The asynchronous nature of one-way video interviewing removes the scheduling barriers that disproportionately affect candidates with caregiving responsibilities, non-traditional working hours, or geographic time zone differences from the hiring organization. A parent of young children who cannot take phone calls during business hours, or a candidate in a significantly different time zone, can complete a one-way video submission at a time that is genuinely convenient. This scheduling flexibility is a structural inclusion mechanism that open-to-all application processes often claim to provide but rarely deliver in practice.

Standardization as a Demographic Equity Tool

Research on live phone screening consistently demonstrates that evaluator rapport signals, which correlate with shared background, communication style, and cultural reference points rather than role-relevant competence, significantly influence screening outcomes. The one-way video format, when evaluated against an explicit rubric applied consistently across all submissions, reduces the role of rapport-based screening and concentrates evaluator attention on demonstrated competence. Organizations tracking demographic representation at each pipeline stage consistently find that one-way video screening with rubric evaluation produces more demographically diverse shortlists than phone screen processes of equivalent volume. Explore more on this topic in the diversity hiring guide.

Evaluator Training for Appearance-Based Bias

The video format introduces a class of visual bias risks that audio-only screening does not: appearance-based judgments about professionalism, setting quality, and self-presentation that correlate with socioeconomic background rather than role competence. Organizations deploying one-way video screening should include explicit appearance-related bias training in their evaluator preparation, establishing norms that evaluate response content rather than home office quality, background aesthetics, or presentation polish as a proxy for organizational fit.

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| Submission completion rates below 55% | Audit the candidate-facing instructions and technical experience; add a practice question option; ensure full mobile compatibility; shorten question set length |
| Evaluators disagreeing significantly on the same submissions | Run a calibration session before the review cohort begins; require written rubric scores before group discussion; limit individual reviewer caseloads per session to avoid fatigue |
| Candidates raising concerns about the format feeling impersonal | Add a brief personal video message from the hiring manager at the start of the submission prompt; personalize the invitation email; acknowledge the format’s unfamiliarity directly |

Real-World Case Studies

Case Study 1: The Financial Services Firm

A financial services firm screening 800 applicants per quarter for analyst roles replaced its phone screen process with one-way video interviews using a three-question, six-minute maximum format. Recruiter screening time per candidate fell from 52 minutes to 14 minutes. Screening Conversion Rate to first-round interview improved from 14% to 21%, reflecting better evaluation consistency with the structured rubric. Time-to-shortlist fell from 19 days to 8 days. Candidate satisfaction with the screening process, measured by post-screen survey, was equivalent to the phone screen baseline at 6.8/10, dispelling the concern that the asynchronous format would produce significantly lower candidate experience scores.

Case Study 2: The Retail Group

A national retail group implementing one-way video screening for store manager roles across 200 locations found that 71% of candidate submissions were completed on mobile devices. Their initial platform required desktop for recording, producing a completion rate of 38%. After migrating to a mobile-first video platform with a maximum question response time of 90 seconds and a five-day submission window, completion rates rose to 74%. The group also reported a more geographically diverse shortlist, attributing the improvement to the removal of scheduled phone screen barriers for candidates in rural locations.

Case Study 3: The Technology Scale-Up

A Series C technology company used one-way video screening to evaluate 340 engineering candidates in a six-week sourcing sprint that their team of three recruiters could not have managed through phone screens within the same timeframe. AI-assisted response analysis flagged the top-quartile submissions by communication clarity and response structure, allowing reviewers to prioritize their evaluation time. From 340 submissions, the team advanced 68 to technical assessment within three weeks, a shortlisting pace that would have required an additional two recruiters under a traditional screening model.

Performance Metrics Every TA Leader Should Monitor

One-Way Video Interview Across the Hiring Lifecycle

Awareness and Application Stage: Setting Format Expectations

The quality of a one-way video screening process is partially determined before a single submission is recorded. Organizations that communicate the format clearly in the job posting, describe what the one-way video experience will involve, and provide preparation guidance in the invitation email produce significantly higher completion rates than those for whom the format is a surprise. Setting format expectations as part of the candidate experience design is not a courtesy; it is a pipeline protection measure.

Screening Stage: Invitation, Completion, and Review

The active screening phase has three distinct quality leverage points: the invitation communication, the submission platform experience, and the evaluator review process. Each requires separate design attention. The invitation determines whether the candidate engages. The platform experience determines whether they complete. The evaluation rubric determines whether the completion produces a reliable screening outcome. Organizations that optimize all three consistently outperform those optimizing for only one.

Shortlist Stage: Connecting Submission Quality to Interview Design

One-way video submissions contain valuable information for designing subsequent interview stages. A candidate’s communication style, areas of apparent strength, and moments of hesitation in their submission provide hiring manager interviewers with a pre-read that makes live interviews more targeted and productive. Organizations that share submission recordings with hiring managers before interviews, with rubric scores and specific probe question suggestions, consistently report more productive first-round interview conversations than those for whom the live interview is the evaluator’s first exposure to the candidate.

Offer Stage: Candidate Relationship Continuity

Candidates who completed a one-way video submission but were not advanced are a sourcing asset for future roles. The organization has their recorded responses, their competency evidence, and their contact information. Automated candidate relationship management that keeps non-advanced video submitters warm for future relevant roles converts the screening investment into a talent pipeline asset rather than a one-time transaction.

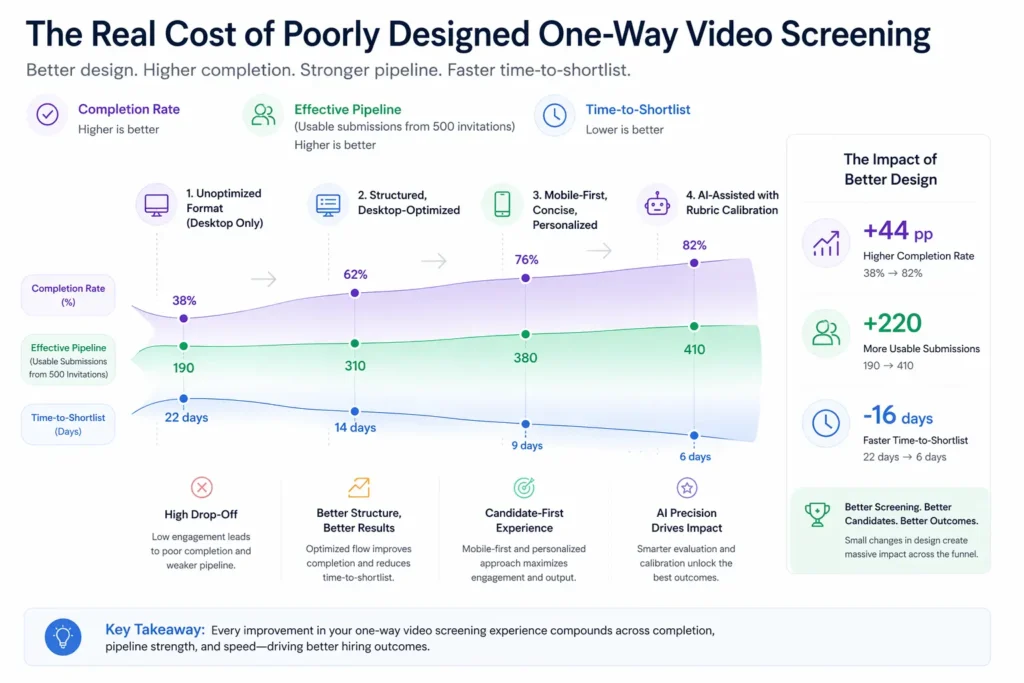

The Real Cost of Poorly Designed One-Way Video Screening

| Scenario | Completion Rate | Effective Pipeline (from 500 invitations) | Time-to-Shortlist |
|---|---|---|---|
| Unoptimized format, desktop only | 38% | 190 usable submissions | 22 days |
| Structured, desktop-optimized | 62% | 310 usable submissions | 14 days |
| Mobile-first, concise, personalized | 76% | 380 usable submissions | 9 days |
| AI-assisted with rubric calibration | 82% | 410 usable submissions | 6 days |

Effective pipeline is the number of completed, usable submissions available for evaluation from a standard 500-invitation cohort, calculated as the completion rate multiplied by 500. Time-to-shortlist includes evaluation and decision cycle time.
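The effective pipeline column follows directly from the completion rates. A short sketch, using the table's own percentages and a hypothetical helper name, reproduces the figures:

```python
INVITATIONS = 500  # standard cohort size used in the table

# Completion rates (in percent) for each scenario, taken from the table.
completion_pct = {
    "Unoptimized format, desktop only": 38,
    "Structured, desktop-optimized": 62,
    "Mobile-first, concise, personalized": 76,
    "AI-assisted with rubric calibration": 82,
}


def effective_pipeline(invitations: int, pct: int) -> int:
    """Usable submissions expected from a cohort at a given completion rate."""
    return invitations * pct // 100


for scenario, pct in completion_pct.items():
    usable = effective_pipeline(INVITATIONS, pct)
    print(f"{scenario}: {usable} usable submissions")
```

Integer percentages are used here to avoid floating-point rounding; swapping in a different cohort size shows how quickly a low completion rate shrinks the pool available for review.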

Related Terms

| Term | Definition |
|---|---|
| Asynchronous Interview | An interview format conducted without real-time interaction, allowing candidates and evaluators to participate at separate times |
| Screening Conversion Rate | The proportion of candidates at a screening stage who are advanced to the next stage of the hiring process |
| Interview Scorecard | A structured evaluation tool using predefined competency criteria and scoring rubrics applied consistently across candidates |
| Digital Interview | Any interview format conducted using digital technology, encompassing both synchronous video and asynchronous recording formats |
| Automated Screening | The use of technology to filter and evaluate candidates at scale without requiring proportional increases in human reviewer time |

Frequently Asked Questions

What is a one-way video interview?

A one-way video interview is an asynchronous screening format in which candidates record video responses to pre-set questions on their own schedule, without a live interviewer present, and those recordings are reviewed by recruiters or hiring managers at a separate time. The format decouples candidate response from evaluator availability, removing scheduling as a bottleneck in early-stage screening.

Are one-way video interviews fair to candidates?

When implemented with structured evaluation rubrics, clear instructions, and mobile-accessible platforms, one-way video interviews are generally fairer than unstructured phone screens because they standardize the evaluation inputs across all candidates. The fairness risk is in the evaluation stage, not the submission stage, and is mitigated by rubric calibration and evaluator bias training.

What is a good completion rate for one-way video invitations?

A completion rate above 65% is generally considered acceptable, with best-in-class programs achieving 75-80%. Rates below 50% indicate a problem with the invitation communication, the technical experience, or the perceived relevance of the format to the opportunity, and warrant an audit of all three elements before continuing the program.

How long should a one-way video interview take?

Most candidates complete a well-designed one-way video submission in 15-25 minutes. Best practice is to limit question sets to three to five questions with individual response times of 90 seconds to two minutes, producing a total submission time that candidates can reasonably complete in a single sitting on their lunch break or after work.

Can candidates retake their responses?

Platform settings vary, but best practice for most roles is to allow one practice question with unlimited retakes before the scored questions begin, and either one or two retakes for each scored question. Unlimited retakes for scored questions reduce the authenticity of responses; no retakes at all produce higher anxiety and lower completion rates.

Conclusion

The one-way video interview is one of the most efficient and, when properly implemented, most equitable tools in the modern recruiter’s screening kit.

Its value is not in the technology itself but in what the technology enables: consistent evaluation inputs, evaluator flexibility, geographic accessibility, and scale without proportional resource cost. The organizations that treat it as a candidate experience event, not just a process efficiency tool, are the ones achieving both high completion rates and high-quality shortlists from the same investment.

Design it as if the candidate’s first impression of your organization is the submission experience. Because for most of them, it is.