Walking into a room and finding four people staring back at you instead of one is a particular kind of interview experience.

For candidates, a panel interview can feel like an interrogation. For hiring teams, it is one of the most effective formats available for making faster, fairer, and more consistent hiring decisions when it is structured properly.

A panel interview is a format where multiple interviewers assess a candidate simultaneously, each bringing a different perspective, whether that is technical, cultural, or managerial. It reduces the risk of a single interviewer’s bias shaping the outcome and connects naturally with competency-based interview frameworks where different panellists evaluate different criteria.

When paired with structured tools like an interview scorecard, panel interviews feed directly into data-driven recruiting by generating comparable, defensible evaluation data across every candidate. They also play a meaningful role in reducing bias in hiring, since collective assessment is significantly harder to skew than a one-on-one conversation where personal rapport can quietly override objective criteria.

For teams focused on candidate experience, the panel format does carry some risk. This guide covers how to structure one that is rigorous for the hiring team and genuinely respectful for the candidate.

The core metric governing panel interview effectiveness is the Panel Consensus Rate: the proportion of panel evaluations where all interviewers reach aligned hiring recommendations without requiring re-interview or escalation.

Panel Consensus Rate (%) = (Panel Evaluations with Full Evaluator Alignment / Total Panel Evaluations Conducted) x 100

High-performing talent acquisition teams maintain Panel Consensus Rates above 78%. The industry average sits closer to 54%. The gap is explained almost entirely by pre-interview calibration quality – how well panel members align on evaluation criteria, role requirements, and candidate assessment standards before the candidate enters the room.

What is a Panel Interview?

A panel interview is an interview format in which a candidate is evaluated simultaneously by two or more interviewers, each bringing a distinct functional or organizational perspective to the assessment, with the collective output informing a shared hiring recommendation rather than a sequence of separate individual decisions.

What distinguishes a panel interview from other multi-stage formats is the simultaneous structure. All evaluators observe the same candidate responses under the same conditions at the same moment. This shared observation eliminates the candidate variability that accumulates across separate interviews – the subtle differences in energy, framing, and performance that make sequential comparison inherently imprecise.

A panel does not remove subjectivity from evaluation; it creates a richer, more defensible base of evidence from which to exercise judgment collectively, and it surfaces evaluator disagreements before those disagreements become post-hire surprises.

The Strategic Case for Panel Interviews in Modern Talent Acquisition

The case for panel interviews is most often made in terms of quality. More evaluators, more perspectives, better decision. That case is correct – but it understates the real strategic argument. The deeper case for panel interviews is not that they improve individual decisions. It is that they make organizational hiring more consistent, more defensible, and more resistant to the individual biases that dominate unstructured, one-on-one evaluation.

Research published by the Society for Human Resource Management consistently finds that multi-evaluator interview formats produce hiring decisions with 35-40% higher predictive validity for long-term job performance than single-evaluator assessments, when controlling for interview structure. The mechanism is statistical: more independent observations of the same candidate produce a more reliable composite signal than any single observation, provided those observations are genuinely independent and not dominated by one evaluator’s framing.

The organizational case is equally compelling. Senior hiring decisions made through panel processes have measurably lower post-hire regret rates than those made through sequential individual interviews. A 2024 analysis of 1,200 executive placements across financial services, technology, and professional services found that roles filled through documented panel processes had 28% lower early-tenure attrition than equivalent roles filled through sequential interviewing – and the quality gap was not explained by role type, compensation, or employer brand. It was explained by the evaluation process.

The hidden cost argument is the one most organizations have not yet internalized. A bad hire at the senior level in a 500-person organization costs, conservatively, 1.5 to 2x the role’s annual compensation in replacement, productivity loss, and team disruption. For a VP-level role at a $250,000 base salary, that is a $375,000 to $500,000 failure cost. If a well-constructed panel process reduces bad hire rate by even 15 percentage points – a conservative estimate based on the predictive validity literature – the insurance value of the panel structure is substantial and largely invisible until the hire goes wrong.
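The arithmetic in this paragraph can be reproduced in a few lines. The following is a minimal sketch, not a costing model: the function names are hypothetical, and because the text does not state a baseline bad-hire rate, the example only computes the per-hire failure cost range and the expected cost avoided per hire for a 15-point reduction.

```python
def failure_cost_range(base_salary, low_multiple=1.5, high_multiple=2.0):
    """Return the (low, high) cost of one bad hire as a multiple of salary."""
    return base_salary * low_multiple, base_salary * high_multiple


def expected_saving_per_hire(failure_cost, rate_reduction_points):
    """Expected cost avoided per hire when the bad-hire rate drops by the
    given number of percentage points."""
    return failure_cost * rate_reduction_points / 100


low, high = failure_cost_range(250_000)
print(low, high)                           # 375000.0 500000.0
print(expected_saving_per_hire(low, 15))   # 56250.0
print(expected_saving_per_hire(high, 15))  # 75000.0
```

Under these assumptions, a 15-point reduction is worth roughly $56,000 to $75,000 in expected cost per VP-level hire.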

For talent acquisition leaders, the practical implication is clear. Panel interviews should not be reserved for C-suite decisions alone. For any role where cultural fit, cross-functional collaboration, or leadership judgment is a significant component of the brief (typically any role at or above the manager level), the panel format produces systematically better outcomes than equivalent individual interviews conducted in sequence. The investment in panel coordination pays for itself before the first performance review.

The most underappreciated dimension of the panel case is what happens to evaluator accountability. When a hiring manager makes a unilateral decision and the hire underperforms, accountability is hard to trace, because the reasoning behind the decision is rarely written down. When a panel makes a collective recommendation, accountability is shared and visible. Each panelist’s evaluation record is on file. The decision is documented. This visibility changes evaluator behavior: it raises the standard of evidence each panelist applies to their judgment, because they know that judgment is recorded and reviewable.

The Psychology Behind Panel Interviews

Understanding why panel interviews produce both better and worse outcomes than sequential interviews requires understanding how evaluators behave differently when they are not the sole assessor in the room.

Evaluation Diffusion and Shared Accountability

When multiple evaluators share responsibility for an assessment, individual commitment to rigorous evaluation can paradoxically decline – a hiring analog of the bystander effect documented extensively in social psychology. Panelists who assume other evaluators are forming the primary judgment may engage less critically with the candidate, producing evaluation data that looks collective but reflects passive participation from one or more panel members. The structural antidote is role clarity: each panelist should be assigned specific competencies to evaluate, with the explicit expectation that they will own and defend those evaluations in the debrief. Shared accountability without divided responsibility produces groupthink, not group intelligence.
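The role-clarity check described above can be sketched as a small validation step run before the interview. This is an illustrative sketch only: the function name, the panelist labels, and the competency names are hypothetical, and it assumes each competency should have exactly one owner.

```python
def check_competency_ownership(assignments, required):
    """Return (unowned, shared) competencies.

    assignments maps panelist name -> list of competencies they own.
    required is the list of competencies the role calls for.
    """
    owners = {}
    for panelist, competencies in assignments.items():
        for competency in competencies:
            owners.setdefault(competency, []).append(panelist)
    # Competencies nobody has been asked to evaluate.
    unowned = [c for c in required if c not in owners]
    # Competencies with more than one owner, where responsibility can blur.
    shared = {c: p for c, p in owners.items() if len(p) > 1}
    return unowned, shared


assignments = {
    "hiring_manager": ["leadership judgment"],
    "engineer": ["technical depth"],
    "product_manager": ["cross-functional collaboration", "technical depth"],
}
required = [
    "leadership judgment",
    "technical depth",
    "cross-functional collaboration",
    "stakeholder communication",
]

unowned, shared = check_competency_ownership(assignments, required)
print(unowned)  # ['stakeholder communication']
print(shared)   # {'technical depth': ['engineer', 'product_manager']}
```

An empty `unowned` list and an empty `shared` mapping would indicate that every required competency has a single named owner going into the debrief.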

Social Conformity and Panel Debrief Dynamics

Post-interview panel debriefs are vulnerable to social conformity effects that systematically overweight the opinions of senior or more assertive evaluators. When a hiring manager who outranks other panel members expresses a strong view first, subsequent evaluators are more likely to adjust their stated assessments toward that view, not because they have been persuaded by evidence, but because social dynamics in group settings create pressure toward consensus. Research from the Harvard Business Review on bias in hiring demonstrates that structured written evaluation before debrief discussion substantially reduces conformity effects and produces more accurate collective assessments.

The Observer Effect in Multi-Evaluator Settings

Candidates perform differently when they know they are being evaluated by multiple people simultaneously. For some candidates – particularly those with high social confidence or experience in board-level presentations – the panel format activates a performance mode that enhances their displayed capability. For others – particularly those with less experience in high-stakes group settings or introverted communication styles – the panel format may suppress authentic expression of capability that would emerge clearly in a one-on-one conversation. Interview designers building panel processes for roles requiring diverse candidate pools should supplement panel evaluation with structured one-on-one conversations for candidates where panel performance may be an imperfect signal.

Panel Interview vs. Related Interview Formats

The panel format is one of several multi-stage or multi-evaluator evaluation approaches. Understanding where it fits in the evaluation toolkit requires comparing it against the alternatives on the dimensions that matter most in practice.

| Format | Structure | Evaluators | Duration | Best For |
|---|---|---|---|---|
| Panel Interview | Simultaneous multi-evaluator | 2-5 | 45-90 min | Senior and cross-functional roles |
| Sequential One-on-One | Individual, consecutive rounds | 1 per round | 30-60 min per round | Standard mid-level evaluation |
| Structured Interview | Predefined questions, single evaluator | 1 | 30-45 min | Volume and entry-level roles |
| Assessment Center | Multi-exercise, multi-evaluator | 3-8 | Half to full day | Graduate and leadership programs |
| Video Panel Interview | Simultaneous, remote format | 2-4 | 45-60 min | Remote and distributed teams |

The critical distinction between a panel interview and an assessment center is depth versus breadth. An assessment center evaluates candidates across multiple structured exercises over an extended period. A panel interview evaluates candidate performance in a single, concentrated interaction. For roles where direct conversation is the primary evaluation medium and interviewer time is limited, the panel format delivers more information per hour invested than the assessment center alternative.

What the Experts Say

The panel interview is not primarily a sourcing tool – it is a decision architecture. Its value lies not in what it reveals about the candidate, but in what it forces evaluators to do: agree in advance on what good looks like, observe together, and defend their assessments to peers. That discipline is what makes panel decisions better than individual ones.

– Liz Ryan, Founder, Human Workplace; Former HR Executive, United Airlines

How to Measure Panel Interview Effectiveness

Formula

Panel Consensus Rate (%) = (Evaluations with Full Panel Alignment / Total Panel Evaluations) x 100

Panel Preparation Score = % of panelists who completed a structured pre-brief and received role-specific evaluation criteria

Interview-to-Offer Conversion Rate (%) = (Offers Extended / Candidates Who Completed Panel) x 100

Post-Hire Performance Alignment Rate (%) = (Hires Rated Above Average at 12 Months / Total Panel Placements) x 100
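The four formulas above translate directly into code. The sketch below is one possible reading, under stated assumptions: "full alignment" is modelled as every panelist giving the same recommendation, an empty denominator returns 0.0, and the `PanelEvaluation` record and all function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PanelEvaluation:
    recommendations: list          # one recommendation string per panelist
    offer_extended: bool = False   # whether an offer followed this panel


def pct(numerator, denominator):
    """Percentage helper; returns 0.0 when there is nothing to count."""
    return 100 * numerator / denominator if denominator else 0.0


def panel_consensus_rate(evaluations):
    # A panel is aligned when all recommendations are identical.
    aligned = sum(1 for e in evaluations if len(set(e.recommendations)) == 1)
    return pct(aligned, len(evaluations))


def panel_preparation_score(prebrief_completed):
    # prebrief_completed: one boolean per panelist.
    return pct(sum(prebrief_completed), len(prebrief_completed))


def interview_to_offer_rate(evaluations):
    offers = sum(1 for e in evaluations if e.offer_extended)
    return pct(offers, len(evaluations))


def post_hire_alignment_rate(above_average_at_12_months, total_placements):
    return pct(above_average_at_12_months, total_placements)


evaluations = [
    PanelEvaluation(["hire", "hire", "hire"], offer_extended=True),
    PanelEvaluation(["hire", "no hire", "hire"]),
    PanelEvaluation(["no hire", "no hire"]),
    PanelEvaluation(["hire", "hire"], offer_extended=True),
]
print(panel_consensus_rate(evaluations))                     # 75.0
print(panel_preparation_score([True, True, False, True]))    # 75.0
print(interview_to_offer_rate(evaluations))                  # 50.0
print(post_hire_alignment_rate(4, 5))                        # 80.0
```

Teams that count a unanimous "no hire" differently from a unanimous "hire" would need to adjust the alignment test accordingly.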

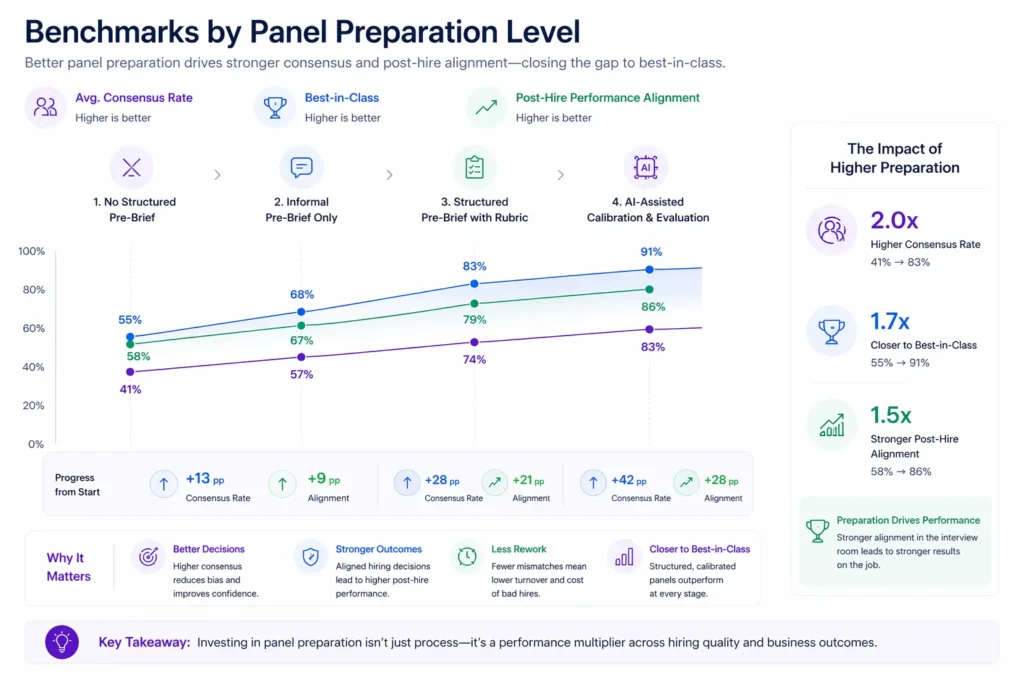

Benchmarks by Panel Preparation Level

| Panel Preparation Level | Avg. Consensus Rate | Best-in-Class | Post-Hire Performance Alignment |
|---|---|---|---|
| No structured pre-brief | 41% | 55% | 58% |
| Informal pre-brief only | 57% | 68% | 67% |
| Structured pre-brief with rubric | 74% | 83% | 79% |
| AI-assisted calibration and evaluation | 83% | 91% | 86% |

Key Strategies for Effective Panel Interviews

How Can AI and Automation Support Panel Interviews?

AI-Generated Panel Interview Guides

Natural language AI tools can generate role-specific, competency-mapped question sets for each panel member based on a brief description of the role requirements and the panelist’s functional focus. This removes the preparation burden that most panelists – who are subject-matter experts, not trained interviewers – find most challenging, and ensures that evaluation coverage is comprehensive rather than dependent on each panelist’s ad hoc question selection.

Automated Panel Scheduling and Coordination

Coordinating the schedules of three to five senior evaluators is one of the primary sources of panel interview delay. AI-powered scheduling tools eliminate the back-and-forth coordination that can add five to ten days to the interview timeline, automatically identifying shared availability windows and sending confirmations to all parties. This scheduling efficiency is among the clearest ROI cases for AI automation in the interview process.
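The core of "identifying shared availability windows" is an interval intersection, which needs no AI at all. The sketch below illustrates that step only and is not a description of any particular scheduling product. It assumes each panelist's free time arrives as a sorted, non-overlapping list of `(start, end)` datetimes and that there is at least one panelist; the function name is hypothetical.

```python
from datetime import datetime, timedelta


def shared_windows(calendars, min_length):
    """Intersect every panelist's free intervals and keep the windows
    that are at least min_length long."""
    common = calendars[0]
    for free in calendars[1:]:
        merged = []
        i = j = 0
        while i < len(common) and j < len(free):
            start = max(common[i][0], free[j][0])
            end = min(common[i][1], free[j][1])
            if start < end:
                merged.append((start, end))
            # Advance whichever interval finishes first.
            if common[i][1] < free[j][1]:
                i += 1
            else:
                j += 1
        common = merged
    return [(s, e) for s, e in common if e - s >= min_length]


day = datetime(2025, 1, 6)


def at(hour, minute=0):
    return day.replace(hour=hour, minute=minute)


calendars = [
    [(at(9), at(12)), (at(14), at(17))],      # panelist 1
    [(at(10), at(11, 30)), (at(15), at(18))],  # panelist 2
    [(at(9, 30), at(16, 30))],                 # panelist 3
]
for start, end in shared_windows(calendars, timedelta(minutes=90)):
    print(start.strftime("%H:%M"), end.strftime("%H:%M"))
# 10:00 11:30
# 15:00 16:30
```

A real tool would also handle time zones, buffers between meetings, and the candidate's own availability, none of which is modelled here.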

Post-Panel Feedback Analysis and Bias Detection

AI systems can analyze panelist evaluation forms and debrief notes for patterns indicative of bias in hiring – affinity bias, conformity effects, demographic differential scoring – and surface these patterns for calibration conversations. This analytical layer is a development tool, not a policing mechanism, and it gives both individual panelists and hiring teams visibility into the systematic patterns in their collective evaluation behavior.
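One of the patterns named above, conformity effects, can be approximated with simple arithmetic when both pre-debrief and post-debrief scores are recorded. The sketch below is a plain calculation rather than an AI system or a validated bias measure: it assumes numeric scores on a shared scale, and the function name and the choice of an "anchor" evaluator are hypothetical.

```python
def conformity_shift(pre, post, anchor):
    """For each panelist other than `anchor`, return how much closer their
    score moved to the anchor's pre-debrief score after discussion.
    Positive values mean movement toward the anchor."""
    target = pre[anchor]
    return {
        name: abs(pre[name] - target) - abs(post[name] - target)
        for name in pre
        if name != anchor
    }


pre = {"hiring_manager": 5, "engineer": 2, "designer": 4}
post = {"hiring_manager": 5, "engineer": 4, "designer": 4}
print(conformity_shift(pre, post, "hiring_manager"))
# {'engineer': 2, 'designer': 0}
```

A consistently positive shift toward the same senior evaluator across many panels would be a prompt for a calibration conversation, not proof of bias on its own.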

Panel Performance Benchmarking Over Time

AI-powered analytics platforms can track panel evaluation quality over time – consensus rates, prediction accuracy, time-to-decision – at the panel composition level, identifying which panelist combinations produce the most reliable and efficient evaluation outcomes. This institutional intelligence about panel performance is a genuinely novel capability that most organizations have not yet operationalized, and one that converts scattered panel data into a continuous improvement system.
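Tracking consensus at the panel composition level amounts to grouping past panels by who sat on them. A minimal sketch, assuming each record is a hypothetical `(panelists, reached_consensus)` pair and that seating order does not matter:

```python
from collections import defaultdict


def consensus_by_composition(records):
    """Return {frozenset(panelists): consensus rate in percent}."""
    totals = defaultdict(lambda: [0, 0])  # composition -> [aligned, total]
    for panelists, reached_consensus in records:
        key = frozenset(panelists)  # order-independent panel identity
        totals[key][0] += int(reached_consensus)
        totals[key][1] += 1
    return {key: 100 * aligned / total for key, (aligned, total) in totals.items()}


records = [
    (["ana", "ben", "chi"], True),
    (["ben", "ana", "chi"], True),
    (["ana", "ben", "dee"], False),
    (["ana", "ben", "dee"], True),
]
for panel, rate in consensus_by_composition(records).items():
    print(sorted(panel), rate)
# ['ana', 'ben', 'chi'] 100.0
# ['ana', 'ben', 'dee'] 50.0
```

With only a handful of panels per composition, these rates are noisy, so any comparison between compositions would need a reasonable sample before drawing conclusions.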

Panel Interviews Through an Equity and Inclusion Lens

Structural Advantages for Equitable Evaluation

The panel format offers structural advantages for equitable candidate assessment that are absent from single-evaluator processes. When evaluation responsibility is shared across multiple observers from different backgrounds and functions, the demographic assumptions and cultural preferences of any single evaluator have less influence on the overall hiring recommendation. A diverse panel – meaning one that includes evaluators with varied demographics, functional backgrounds, and organizational tenures – produces more equitable assessments than a homogeneous panel of any composition.

Panel Composition and Representation Risk

The equity benefits of panel interviewing are contingent on panel composition. A homogeneous panel of senior leaders from the same demographic background and organizational cohort may produce consistent evaluation, but it will reproduce the same hiring biases at scale. Organizations that use panel interviews without attention to panel composition risk institutionalizing their existing demographic concentration rather than counteracting it. DEI requirements should be specified for panel composition, not just for candidate longlists.

Inclusive Evaluation Criteria Design

The most overlooked equity lever in panel interview design is the content of the evaluation criteria themselves. Competency frameworks that overweight presentation style, verbal fluency, or cultural familiarity with specific professional environments systematically disadvantage candidates who have had less practice with those signals but are no less capable. Evaluation criteria for inclusive hiring should be defined at the level of role-relevant outcomes (what the person needs to accomplish, not how they need to sound while describing it) and reviewed for implicit cultural assumptions before the panel process begins.

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| Panelists arriving unprepared and asking ad hoc questions | Require completion of a structured pre-brief and distribute role-specific question guides at least 24 hours before the interview |
| One evaluator dominating the debrief and pulling consensus | Implement a silent written evaluation protocol before the debrief; collect individual scores before any group discussion begins |
| Panel size creeping upward as stakeholders request inclusion | Define a maximum panel size of four and establish a formal stakeholder input mechanism for those outside the core panel |
| Inconsistent evaluation criteria across panelists | Anchor every panel on a shared interview scorecard reviewed and agreed upon in the pre-brief |

Real-World Case Studies

Case Study 1: The Professional Services Firm

A 900-person professional services firm conducting lateral hires at the director and partner level found that its sequential interview process – four one-on-one conversations before a hiring committee discussion – was producing inconsistent evaluation data and high candidate drop-off rates. Candidates were spending four to six hours in the process and receiving contradictory signals about the role requirements from different interviewers. The firm redesigned to a two-stage model: a structured one-on-one competency-based interview followed by a calibrated three-person panel. Total candidate time fell from 5.5 hours to 2.5 hours. Panel Consensus Rate rose from 46% to 79%. Early-tenure attrition in the first twelve months fell by 31%.

Case Study 2: The Technology Scale-Up

A 350-person Series C technology company identified that its engineering hiring panels were producing strong technical evaluations but weak cultural and cross-functional assessments, leading to hires who performed well technically but struggled in the company’s highly collaborative product development environment. The TA team restructured panel composition to always include one engineer, one product manager, and one design lead – representing the three primary cross-functional partners of every engineering hire. Post-hire twelve-month performance scores for collaborative work style improved by 24 percentage points over two hiring cycles. The structural change cost the organization approximately 15 additional hours of cross-functional interviewer time per quarter.

Case Study 3: The Financial Services Organization

A regional financial services firm redesigned its panel interview process to be fully remote and asynchronous-to-synchronous – meaning candidates completed a structured async component via digital interview before the live panel, giving panelists standardized pre-review material before the synchronous conversation. Panel preparation scores rose from 41% to 88% as panelists arrived at the live panel having already reviewed the candidate’s responses to structured screening questions. Consensus rates improved from 53% to 81%, and average panel-to-decision time fell from 9 days to 3 days.

Performance Metrics That Matter: Panel Interview Tracking Framework

Panel Interviews Across the Hiring Lifecycle

Pre-Interview: Panel Composition and Calibration

Effective panel evaluation begins before the candidate enters the room. The pre-interview phase involves three decisions: who should be on the panel, what each panelist will evaluate, and what “good” looks like against each criterion. Organizations that treat panel composition as an afterthought – inviting whoever is available rather than whoever brings the most relevant evaluative perspective – produce panels that generate noise rather than signal. The hiring manager should define panel composition as part of the initial role intake process, not as a scheduling exercise the week before interviews begin.

During the Interview: Structured Evaluation in Practice

The quality of the panel interview itself is a function of panelist preparation, not panelist seniority. A well-briefed junior evaluator with a clear competency assignment produces more useful evaluation data than an unprepared senior leader improvising questions from memory. Structured question delivery – where each panelist follows a pre-agreed sequence of questions mapped to their assigned competencies – reduces evaluation variance across candidates and produces evaluation data that is genuinely comparable across the candidate pool.

Post-Interview: Debrief and Decision

The panel debrief is the highest-risk moment in the panel process from an equity and quality standpoint. Social dynamics, hierarchy effects, and conformity pressures are at their most powerful when evaluators gather to make a collective recommendation under time pressure. Written evaluations submitted before the debrief begins, combined with a structured debrief agenda that surfaces disagreements rather than smoothing them over, produce more accurate and equitable collective decisions than unstructured group discussion.
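A debrief agenda that surfaces disagreements can be built from the written scores themselves. The sketch below is illustrative only: it assumes a 1-5 scoring scale, at least one written score per competency, and a hypothetical threshold of two points; the function name is also an assumption.

```python
def disagreements(written_scores, threshold=2):
    """written_scores: {competency: {panelist: score}}, collected before
    the debrief. Returns (competency, spread) pairs whose spread
    (max - min) meets the threshold, widest spread first."""
    spreads = {
        competency: max(scores.values()) - min(scores.values())
        for competency, scores in written_scores.items()
    }
    flagged = [(c, s) for c, s in spreads.items() if s >= threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


written_scores = {
    "technical depth": {"ana": 4, "ben": 4, "chi": 5},
    "collaboration": {"ana": 2, "ben": 5, "chi": 4},
    "leadership": {"ana": 3, "ben": 5, "chi": 3},
}
print(disagreements(written_scores))
# [('collaboration', 3), ('leadership', 2)]
```

Starting the debrief with the flagged competencies puts the widest disagreements on the table before any senior voice has framed the discussion.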

Offer Stage: Translating Panel Consensus into Action

When panel consensus is strong, the offer stage benefits directly: the hiring team moves with confidence, the panel’s shared conviction can be communicated to the candidate, and the offer is grounded in a documented, multi-perspective assessment. When panel consensus is weak or forced, the offer stage often reveals the underlying ambivalence – through slow approvals or hedged messaging to the candidate. Offer acceptance rate is one of the most diagnostic trailing indicators of panel quality in the preceding evaluation stage.

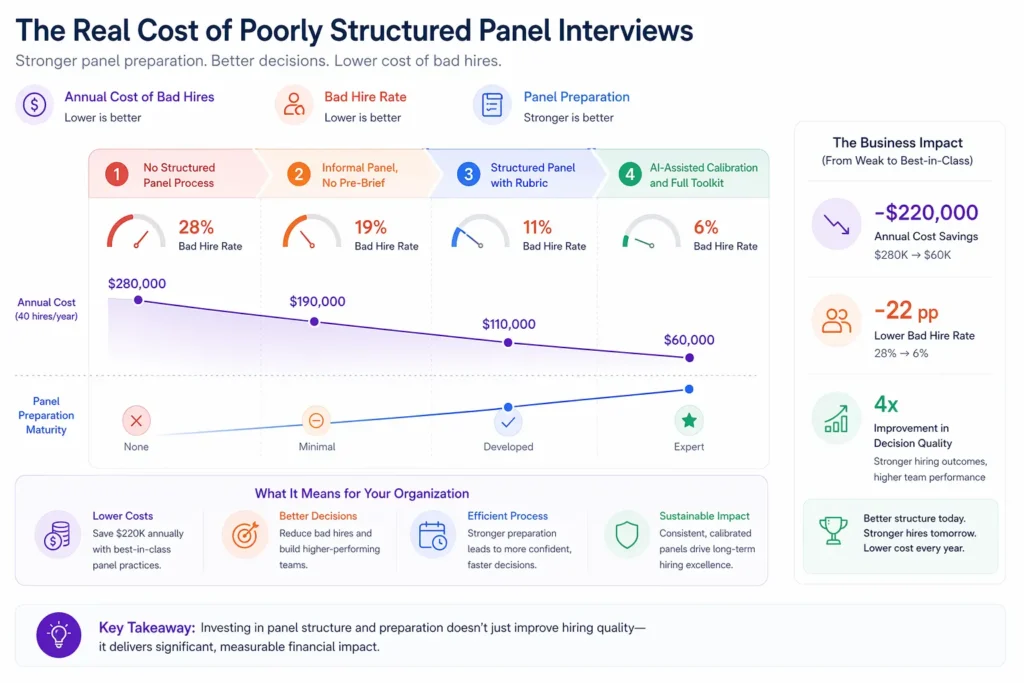

The Real Cost of Poorly Structured Panel Interviews

| Scenario | Panel Preparation | Bad Hire Rate | Annual Cost (40 hires/year) |
|---|---|---|---|
| No structured panel process | None | 28% | $280,000 |
| Informal panel, no pre-brief | Minimal | 19% | $190,000 |
| Structured panel with rubric | Developed | 11% | $110,000 |
| AI-assisted calibration and full toolkit | Expert | 6% | $60,000 |

Bad hire cost assumed at $25,000 per instance (replacement cost plus productivity loss). Panel processes concentrated at manager level and above.
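The annual cost column follows from hires per year × bad hire rate × cost per bad hire. A short sketch that reproduces the table from the stated assumptions (the function name is hypothetical):

```python
def annual_bad_hire_cost(hires_per_year, bad_hire_rate_pct, cost_per_bad_hire=25_000):
    """Expected yearly cost of bad hires for a given bad-hire rate."""
    return hires_per_year * bad_hire_rate_pct * cost_per_bad_hire / 100


scenarios = [
    ("No structured panel process", 28),
    ("Informal panel, no pre-brief", 19),
    ("Structured panel with rubric", 11),
    ("AI-assisted calibration and full toolkit", 6),
]
for name, rate in scenarios:
    print(f"{name}: ${annual_bad_hire_cost(40, rate):,.0f}")
# No structured panel process: $280,000
# Informal panel, no pre-brief: $190,000
# Structured panel with rubric: $110,000
# AI-assisted calibration and full toolkit: $60,000
```

Changing `cost_per_bad_hire` or the hiring volume shows how sensitive the totals are to those two inputs.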

Related Terms

| Term | Definition |
|---|---|
| Structured Interview | An interview format using predefined, consistently applied questions evaluated against explicit criteria |
| Interview Scorecard | A standardized evaluation form used to score candidate responses against defined competency criteria |
| Competency-Based Interview | An interview approach focused on behavioral evidence of specific competencies relevant to the role |
| Hiring Committee | A panel of evaluators who share evaluation and decision responsibility for a hiring outcome |
| Affinity Bias | The tendency to evaluate more favorably candidates who share characteristics with the evaluator |
| Behavioral Interview | An interview format using past behavior as the primary predictor of future performance |

| Affinity Bias | The tendency to evaluate more favorably candidates who share characteristics with the evaluator |

| Behavioral Interview | An interview format using past behavior as the primary predictor of future performance |

Frequently Asked Questions

How many people should be on a panel interview?

Research on group decision quality consistently recommends three to four panelists as the optimal range. Panels of two may not generate sufficient evaluative diversity. Panels of five or more introduce coordination overhead, conformity risk, and a poorer candidate experience without a commensurate improvement in evaluation accuracy.

What is the difference between a panel interview and a group interview?

A panel interview involves multiple interviewers evaluating a single candidate simultaneously. A group interview involves multiple candidates being evaluated by one or more interviewers at the same time. The formats serve different purposes: panel interviews improve evaluation quality for individual candidates; group interviews assess relative candidate performance and group dynamics.

Should all panelists ask questions, or should one person lead?

Best practice is shared questioning, with each panelist responsible for their assigned competency area. A single-questioner panel with silent observers wastes the primary structural benefit of the format – multiple independent evaluation lenses on the same candidate interaction. Each panelist should ask at least two to three questions in their domain and complete a written evaluation immediately after.

How do you prevent one panelist from dominating the evaluation?

The most effective structural intervention is requiring written evaluations before the debrief discussion begins. When every panelist has committed to a written score before the group conversation, outlier assessments are visible and can be explored rather than suppressed. Debrief facilitation should explicitly invite disagreement before seeking consensus.

Do panel interviews improve diversity hiring outcomes?

Yes, when designed with diverse panel composition and equitable evaluation criteria. Research consistently finds that structured, multi-evaluator formats reduce demographic disparity in hiring outcomes compared to unstructured individual interviews – but only when the panel itself is compositionally diverse and the evaluation criteria are reviewed for cultural assumptions. Panel format alone does not guarantee equitable outcomes.

Conclusion

The panel interview is not a procedural formality.

It is a decision architecture that, when designed correctly, produces consistently better hiring outcomes than any single-evaluator alternative. The organizations that treat panel interviewing as a structured practice – with defined panel composition, mandatory pre-briefs, competency-assigned evaluation roles, and written evaluations before debrief – produce hiring decisions that are more accurate, more equitable, and more defensible than those improvising their way through consecutive one-on-one conversations.

The investment is in preparation, not in additional interviewing time. A 15-minute calibration session before a 60-minute panel is the highest-return interview process investment most organizations are not yet making consistently.

Treat the panel as a system, not an event, and the quality difference will show up in your twelve-month performance data before it shows up anywhere else.