Once a year, a calendar invite appears that nobody is particularly excited about. The performance review (formal, structured, and often nerve-wracking for both sides of the table) has a reputation for being more of a bureaucratic exercise than a genuine conversation. But when it is done well, it is one of the most powerful tools an organization has for retaining talent, developing people, and building a workforce that actually improves over time.

A performance review is a structured evaluation of an employee’s work, contributions, and development over a defined period. It sits at the heart of the employee lifecycle, connecting day-to-day output to longer-term organizational goals. For HR teams, it is also a primary input into decisions around promotions, compensation, and workforce planning.

The connection between performance reviews and employee engagement is well established. Employees who receive regular, meaningful feedback are significantly more likely to stay, perform, and grow within the organization. For HR analytics teams, review data is also a goldmine, surfacing patterns in performance, identifying flight risks, and informing employee retention strategy before attrition becomes a problem.

This guide covers what an effective performance review looks like, how to structure one, and how to turn the process into something people actually find useful.

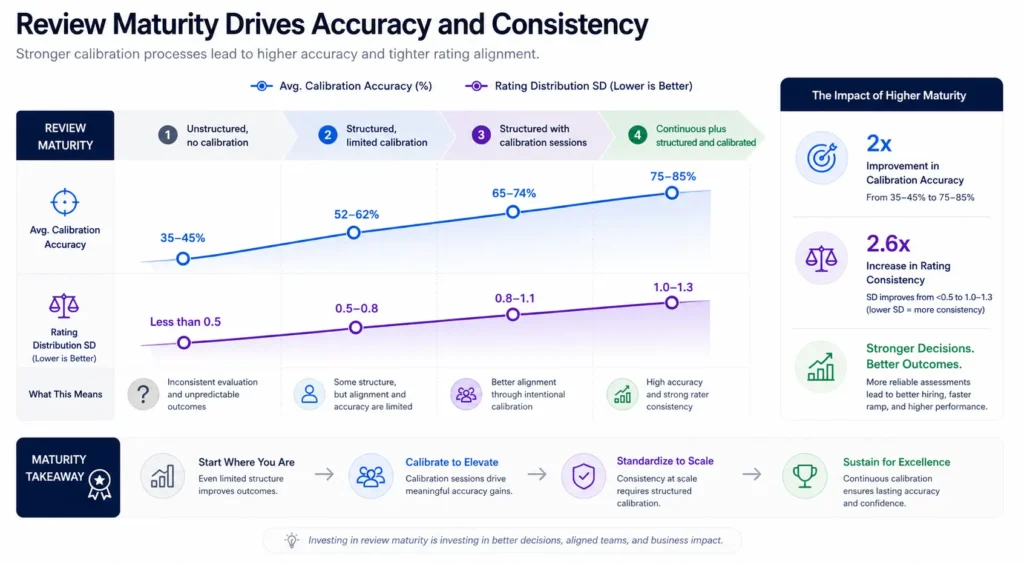

The core metric governing performance review effectiveness is Review Calibration Accuracy: the correlation between formal performance ratings and subsequent independently assessed performance outcomes.

Review Calibration Accuracy (%) = Pearson Correlation (Formal Rating, 12-Month Outcome) × 100

High-performing organizations achieve Review Calibration Accuracy above 72%, indicating that formal ratings are predictive of future performance. The industry average sits around 41%, meaning that in most organizations formal ratings are only weakly predictive of actual performance capacity. That gap reflects the degree to which review processes are shaped by recency bias, affinity, and manager inconsistency rather than genuine performance assessment.

What is a Performance Review?

A performance review (also called a performance appraisal, performance evaluation, or performance assessment) is a structured, periodic process through which a manager formally evaluates an employee’s performance against pre-defined expectations, goals, and competencies, documents the assessment, provides feedback, and uses the findings to inform decisions about compensation, promotion, development, and in some cases continued employment.

The performance review serves multiple organizational functions that are sometimes in tension with one another. It is simultaneously a development tool designed to improve future performance, a compensation mechanism designed to differentiate pay based on contribution, a talent identification instrument designed to identify high performers, and a performance management tool designed to address underperformance formally. Organizations that attempt to serve all four functions in a single annual conversation often serve none of them well.

Why Performance Review Quality Determines Organizational Growth and Retention

The performance review is not an HR process. It is the primary mechanism through which organizations communicate what they value, who they value, and what kind of performance they are willing to tolerate. A performance review system that rates everyone as “meets expectations” regardless of actual contribution communicates that differentiation is not real. A system that rates based on manager relationship rather than documented performance communicates that relationship management is the actual performance standard. Employees are sophisticated readers of these signals, and they respond accordingly.

Gallup’s State of the Global Workplace research found that only 21% of employees globally report that their performance review motivates them to do outstanding work. A majority report that reviews are conducted as a compliance exercise rather than a genuine development conversation. The consequences are predictable: in organizations where performance reviews are perceived as formulaic, high-performing employees who have external options are significantly more likely to leave than those in organizations where reviews are perceived as meaningful and fair.

The retention math is direct. An organization of 1,000 employees with 18% annual voluntary attrition and an average replacement cost of $35,000 spends $6.3 million per year on replacement hiring. McKinsey research on performance management effectiveness found that organizations with high-quality performance review processes, defined as reviews that are consistent, calibrated, and development-oriented, report voluntary attrition rates 9 to 14 percentage points lower than organizations with poor-quality review processes. Applying a 9-point attrition reduction to that organization produces savings of approximately $3.15 million per year from improved performance review quality alone.
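The arithmetic above can be reproduced in a few lines. The following is a minimal Python sketch using only the figures quoted in this section; the function name `annual_replacement_cost` is illustrative and not taken from any particular HR system.

```python
def annual_replacement_cost(headcount: int, attrition_pct: int, cost_per_departure: int) -> float:
    """Annual spend on replacing voluntary leavers.

    attrition_pct is given in whole percentage points (18 means 18%).
    """
    departures = headcount * attrition_pct / 100
    return departures * cost_per_departure


HEADCOUNT = 1_000            # employees, from the example above
COST_PER_DEPARTURE = 35_000  # average replacement cost in dollars

# Baseline: 18% voluntary attrition.
baseline = annual_replacement_cost(HEADCOUNT, 18, COST_PER_DEPARTURE)

# Improved: a 9-point reduction, the low end of the 9 to 14 point range.
improved = annual_replacement_cost(HEADCOUNT, 18 - 9, COST_PER_DEPARTURE)

savings = baseline - improved

print(f"Baseline replacement cost: ${baseline:,.0f}")
print(f"Improved replacement cost: ${improved:,.0f}")
print(f"Annual savings:            ${savings:,.0f}")
```

Swapping in an organization's own headcount, attrition rate, and replacement cost gives a first-order estimate; it ignores indirect costs such as lost productivity during a vacancy.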

The compounding effect on talent quality is equally significant. Organizations where performance reviews effectively identify, develop, and differentiate high performers create internal talent pipelines that reduce dependence on external hiring for senior roles. Those where reviews fail to differentiate find their high performers leaving for organizations where their contribution is recognized, while their underperformers remain, because they have less external optionality.

For HR and talent development leaders, the strategic opportunity is to treat performance review quality as a talent acquisition and retention variable, not just an HR compliance metric. Performance review quality is one of the highest-leverage inputs to voluntary attrition, internal talent pipeline health, and long-term organizational capability, yet investment in it falls well short of what those downstream outcomes would justify.

The Psychology Behind Performance Reviews

Recency Bias and the 12-Month Memory Problem

Recency bias is the most pervasive and well-documented cognitive distortion in performance review contexts. Managers evaluating annual performance disproportionately weight events from the most recent three months, creating systematic evaluation errors for employees whose performance trajectory over the full review period does not match their recent performance. An employee who performed exceptionally in Q1 through Q3 and struggled in Q4 will typically receive a rating that reflects Q4 more than the full year warrants. Continuous documentation practices, meaning performance notes maintained throughout the review cycle rather than compiled retrospectively, are the primary structural intervention for recency bias.

The Halo and Horn Effect in Rating Consistency

The halo effect (where one strongly positive characteristic inflates ratings across unrelated dimensions) and the horn effect (where one negative characteristic deflates ratings across unrelated dimensions) are consistently documented in performance review contexts. A manager who finds a direct report exceptionally technically skilled will tend to rate their collaboration and communication more favorably than the evidence warrants. Competency-based rating frameworks with separate scores for distinct performance dimensions, rather than composite “overall performance” ratings, reduce though do not eliminate these effects by requiring evaluators to assess each dimension independently.

Leniency and Central Tendency Bias

Two of the most organizationally costly rating biases are leniency bias (the tendency to rate all employees higher than their actual performance warrants, driven by conflict avoidance) and central tendency bias (the tendency to cluster all ratings around the midpoint, avoiding both high and low extremes). Both biases produce rating distributions that fail to differentiate performance effectively, making it impossible to use review data for meaningful compensation differentiation or talent identification. One common corrective is a forced distribution system, which requires fixed proportions of employees at each rating level. Manager calibration sessions are a less distorting alternative: they produce comparable differentiation without the morale costs.

Performance Review vs. Related Evaluation Processes

| Process | Frequency | Evaluator | Primary Purpose | Formality |
|---|---|---|---|---|
| Performance Review | Annual / Semi-Annual | Manager | Evaluation, compensation, development | Formal |
| 360-Degree Feedback | Annual / Ad hoc | Peers, reports, manager | Development, blind spot identification | Semi-formal |
| Continuous Feedback | Ongoing | Manager and peers | Real-time performance guidance | Informal |
| Performance Improvement Plan | As needed | Manager and HR | Documented underperformance management | Formal |
| Probationary Review | End of probation | Manager | Go/no-go assessment for new hires | Formal |

The critical distinction between a performance review and continuous feedback is not frequency but purpose. A performance review is a formal, documented assessment with defined consequences for compensation and career progression. Continuous feedback is a developmental conversation without formal documentation or consequences. Both are necessary; organizations that use only continuous feedback lose the calibration and accountability mechanisms that formal processes provide.

What the Experts Say

The problem with performance reviews is not that we do them. It is that we do them once a year, with all the weight of a year of accumulated feedback, after all the moments when that feedback would have been most valuable have already passed.

– Dr. Tomas Chamorro-Premuzic, Professor of Business Psychology, University College London; Author of Why Do So Many Incompetent Men Become Leaders?

How to Measure Performance Review Effectiveness

Formulas

Review Calibration Accuracy (%) = Pearson Correlation (Formal Rating, 12-Month Outcome) × 100

Rating Distribution Spread = Standard Deviation of Performance Ratings Across Rated Population

Manager Rating Consistency Index = Variance in Manager Average Ratings Across Equivalent Employee Cohorts
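As a rough illustration, the three formulas above could be computed along the following lines. This is a sketch under stated assumptions, not a reference implementation: ratings and outcomes are assumed to be numeric scores, the spread and variance use the population form (the formulas do not say whether population or sample statistics are intended), and all function names and sample data are hypothetical.

```python
from math import sqrt
from statistics import mean, pstdev, pvariance


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def review_calibration_accuracy(ratings, outcomes):
    """Pearson correlation of formal rating vs. 12-month outcome, times 100."""
    return pearson(ratings, outcomes) * 100


def rating_distribution_spread(ratings):
    """Standard deviation of ratings (population form is an assumption)."""
    return pstdev(ratings)


def manager_rating_consistency_index(ratings_by_manager):
    """Variance of each manager's average rating (lower means more consistent)."""
    manager_means = [mean(r) for r in ratings_by_manager.values()]
    return pvariance(manager_means)


# Hypothetical data: five employees rated on a 1-5 scale.
formal_ratings = [2, 3, 3, 4, 5]
outcomes_12m = [2.5, 2.8, 3.6, 3.9, 4.4]  # independently assessed later

accuracy = review_calibration_accuracy(formal_ratings, outcomes_12m)
spread = rating_distribution_spread(formal_ratings)
consistency = manager_rating_consistency_index(
    {"manager_a": [3, 4, 3], "manager_b": [4, 5, 5]}
)

print(f"Calibration accuracy: {accuracy:.1f}%")
print(f"Rating spread (SD):   {spread:.2f}")
print(f"Consistency index:    {consistency:.3f}")
```

Because a correlation can be negative, the first metric can fall below zero, so the result is worth inspecting rather than reading as a pure percentage.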

Benchmarks by Review Maturity

| Review Maturity | Avg. Calibration Accuracy | Rating Distribution SD |
|---|---|---|
| Unstructured, no calibration | 35-45% | Less than 0.5 |
| Structured, limited calibration | 52-62% | 0.5-0.8 |
| Structured with calibration sessions | 65-74% | 0.8-1.1 |
| Continuous plus structured and calibrated | 75-85% | 1.0-1.3 |

Key Strategies for Improving Performance Review Quality

How Can AI and Automation Support Performance Reviews?

Continuous Performance Signal Capture

AI-powered performance management platforms can aggregate signals of employee performance from multiple data sources, including project management systems, peer feedback tools, customer satisfaction data, and goal tracking platforms, to construct a continuous performance profile. This reduces the cognitive burden on managers during formal review periods and reduces recency bias by making performance across the full review period visible rather than just the most recent months.

Automated Calibration Support

Machine learning models can analyze preliminary rating distributions across managers, flagging cases where a manager’s rating pattern is a statistical outlier relative to organizational norms and relative to objective performance indicators for the same employees. This automated calibration support surfaces potential leniency bias, severity bias, or demographic rating differentials for discussion in calibration sessions rather than leaving those patterns to be identified manually.
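To make the outlier idea concrete, here is a deliberately simple sketch that substitutes a z-score check for the machine learning models described above. It compares each manager's average rating with the average across managers and flags those that sit far from the norm. The manager names, the ratings, and the 1.5 threshold are all hypothetical, and the check does not account for objective performance indicators, which a real system would need before concluding that a manager is lenient rather than leading a strong team.

```python
from statistics import mean, pstdev


def flag_outlier_managers(ratings_by_manager, threshold=1.5):
    """Return {manager: z_score} for managers whose average rating deviates
    from the cross-manager mean by at least `threshold` standard deviations.

    The threshold is an assumption; with few managers, z-scores are bounded,
    so a lower threshold may be needed.
    """
    manager_means = {m: mean(r) for m, r in ratings_by_manager.items()}
    averages = list(manager_means.values())
    org_mean = mean(averages)
    org_sd = pstdev(averages)
    if org_sd == 0:
        return {}  # every manager has the same average; nothing to flag

    flags = {}
    for manager, avg in manager_means.items():
        z = (avg - org_mean) / org_sd
        if abs(z) >= threshold:
            flags[manager] = round(z, 2)
    return flags


# Hypothetical preliminary ratings on a 1-5 scale.
preliminary = {
    "mgr_a": [3, 3, 4, 3],
    "mgr_b": [3, 4, 3, 3],
    "mgr_c": [3, 3, 3, 4],
    "mgr_d": [5, 5, 5, 4],  # noticeably higher than peers
}

flags = flag_outlier_managers(preliminary)
print(flags)
```

A positive z-score suggests possible leniency and a negative one possible severity; either is a prompt for discussion in a calibration session, not a verdict.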

AI-Assisted Review Writing

Natural language AI tools can assist managers in drafting structured, evidence-based performance review narratives by synthesizing the continuous performance data collected over the review period. This AI-assisted writing support reduces the time managers spend on review documentation, improves narrative consistency across the organization, and reduces the probability of legally problematic review language by flagging subjective characterizations before submission.

Sentiment and Engagement Analysis

AI-powered survey and feedback analysis tools can identify the degree to which employees experience their performance reviews as fair, useful, and developmental, enabling HR leaders to measure review quality from the employee perspective rather than only from completion rate metrics. Employee sentiment analysis of review feedback provides a leading indicator of the review program’s retention and engagement impact.

Performance Reviews, Equity, and the Ethics of People Decisions

Bias in Performance Ratings and Its Career Impact

Research on performance rating patterns consistently demonstrates demographic differentials that are not explained by actual performance differences. Studies of large corporate performance datasets find that women and employees from underrepresented racial and ethnic groups receive systematically lower ratings than white male counterparts with equivalent performance indicators, particularly in subjective dimensions such as “leadership potential” and “executive presence.” Because performance ratings directly determine compensation growth and promotion eligibility, rating bias has compounding career and financial consequences. Structured rating frameworks with specific behavioral anchors for each performance level reduce but do not eliminate demographic rating differentials.

The Language of Performance and Structural Exclusion

Textual analysis of performance review narratives reveals systematic differences in the language used to evaluate employees from different demographic groups. Employees from underrepresented groups are more frequently described in terms of individual personality attributes such as “collaborative,” “warm,” or “dependable,” while employees from majority groups are more frequently described in terms of achievement and strategic impact. These language differences, even when the underlying rating is equivalent, create qualitatively different career records that influence promotion decisions. AI-powered review language analysis can surface these patterns at scale.

Review Equity and DEI Outcomes

An organization’s commitment to DEI is ultimately tested in its performance review system. If diverse employees receive equivalent ratings to their majority-group peers with equivalent output, the review system is functioning equitably. If they receive systematically lower ratings, the review system is a mechanism through which structural inequity is formalized and amplified into compensation and promotion consequences. Performance review equity audits, which apply the same statistical methodology as pay equity audits to rating distributions, are an underutilized tool for identifying and correcting this pattern before it reaches the compensation and promotion stages.
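A first pass at such an audit can be sketched as a comparison of average ratings between groups among employees with equivalent output. The example below is a simplified illustration only: pay equity audits generally rely on regression with several control variables, whereas this sketch merely stratifies by a single hypothetical "output band". Group labels, bands, and ratings are invented for illustration.

```python
from collections import defaultdict
from statistics import mean


def rating_gaps_by_band(records, reference_group):
    """Compute mean rating gaps versus a reference group within each output band.

    records: iterable of (output_band, group, rating) tuples.
    Returns {(band, group): mean_rating - reference_mean_rating}.
    """
    by_band = defaultdict(lambda: defaultdict(list))
    for band, group, rating in records:
        by_band[band][group].append(rating)

    gaps = {}
    for band, groups in by_band.items():
        if reference_group not in groups:
            continue  # no comparison possible in this band
        ref_mean = mean(groups[reference_group])
        for group, ratings in groups.items():
            if group != reference_group:
                gaps[(band, group)] = mean(ratings) - ref_mean
    return gaps


# Hypothetical records: (output band, demographic group, rating on a 1-5 scale).
records = [
    ("high", "A", 4), ("high", "A", 5),
    ("high", "B", 4), ("high", "B", 4),
    ("mid", "A", 3),
    ("mid", "B", 3),
]

gaps = rating_gaps_by_band(records, reference_group="A")
for (band, group), gap in sorted(gaps.items()):
    print(f"band={band} group={group} gap={gap:+.2f}")
```

A consistently negative gap for a group across bands is the pattern worth investigating further, ideally with larger samples and a significance test before drawing conclusions.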

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| Managers treating reviews as a compliance exercise rather than a development conversation | Redesign the review structure to separate compensation and development conversations; train managers on the development facilitation skills the conversation requires |
| Rating distributions that cluster at “meets expectations” with minimal differentiation | Introduce distribution guidelines supported by calibration sessions; provide managers with peer comparison data during the calibration process |
| Employees feeling the review does not reflect their actual performance over the full year | Implement continuous documentation requirements; require managers to reference specific documented examples in the review narrative |

Real-World Case Studies

Case Study 1: The Professional Services Firm

A 900-person professional services firm identified that its voluntary attrition rate among employees rated “exceeds expectations” was 22%, significantly higher than the 11% attrition rate among employees rated “meets expectations.” Investigation revealed that high performers were departing because their review ratings were not translating into differentiated compensation or accelerated promotion timelines. The firm redesigned its performance review to include a formal “high performer action plan” for all employees rated above a defined threshold, including explicit compensation commitments and documented development investment. High performer attrition fell to 14% in the following review cycle.

Case Study 2: The Technology Company

A mid-size technology company redesigned its annual performance review process to include both a manager assessment and a structured self-assessment, with explicit calibration against team averages for each competency dimension. The calibration process identified that one department had a manager rating pattern that was statistically 1.4 standard deviations more lenient than the organizational average, producing a rating inflation that made it impossible to differentiate compensation in that team. After calibration coaching and revised guidelines, the department’s rating distribution normalized within two review cycles and cross-department compensation equity improved measurably.

Case Study 3: The Retail Organization

A national retail organization implemented mobile-first performance review tools that allowed store managers to complete reviews on their phones immediately after shift observations rather than during dedicated desktop time in weekly manager meetings. Review completion rate rose from 71% to 96%. The quality of review narratives, assessed by HR for specificity and evidence-basis, improved from an average score of 3.1 out of 5 to 4.2 out of 5. Employee satisfaction with the review process, measured in quarterly engagement surveys, improved from 44% positive to 67% positive within two review cycles.

Performance Review Metrics That Drive Actionable Decisions: What to Track

Performance Reviews Across the Employee Lifecycle

Probationary and Onboarding Phase

Probationary reviews serve a different function from ongoing performance reviews: they are a go/no-go assessment of whether the hiring decision was correct, and whether the new employee has the foundation to meet the role’s performance expectations. The quality of probationary reviews depends on the quality of the onboarding process that preceded them: employees whose first 90 days were well-structured produce more data-rich probationary reviews than those whose onboarding was unstructured and passive.

Active Performance Management Phase

The ongoing performance review cycle is where the majority of performance management value is created or lost. Annual reviews conducted without continuous feedback between cycles force managers into retrospective reconstruction of performance evidence, which is where recency bias and rating inconsistency are most pronounced. Organizations that build continuous feedback infrastructure alongside formal review processes produce review data that is more accurate, more defensible, and more useful as a development tool.

Promotion and Advancement Decisions

Performance reviews are the primary input to promotion and advancement decisions in most organizations. When review data is reliable, calibrated, and equitable, it produces promotion pipelines that reflect actual performance and potential. When review data is biased, inconsistent, or undifferentiated, promotion decisions reflect manager relationships and visibility rather than contribution, which produces both equity failures and organizational capability degradation over time.

Exit and Post-Departure Analysis

The final performance-relevant data point in an employee’s lifecycle is their exit and whether that exit was voluntary, involuntary, or developmental. Organizations that analyze exit patterns by review cohort, tracking whether employees who voluntarily departed were high, medium, or low rated, produce insights about whether their review-to-retention pipeline is functioning as intended. Voluntary departure of high-rated employees is the most actionable signal of review system dysfunction available to hiring managers and HR leaders.
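The cohort analysis described above amounts to a grouped attrition rate. Below is a minimal sketch, assuming each employee record carries a rating label and a flag for voluntary departure; the record shape and the counts are hypothetical, chosen to mirror the rates in the professional services case study earlier in this guide.

```python
from collections import Counter


def voluntary_attrition_by_rating(employees):
    """Return {rating_label: voluntary attrition rate} for each rating cohort.

    employees: iterable of (rating_label, left_voluntarily) tuples.
    """
    headcount = Counter()
    leavers = Counter()
    for rating, left_voluntarily in employees:
        headcount[rating] += 1
        if left_voluntarily:
            leavers[rating] += 1
    return {rating: leavers[rating] / headcount[rating] for rating in headcount}


# Hypothetical population: 100 employees in each rating cohort.
employees = (
    [("exceeds expectations", True)] * 22
    + [("exceeds expectations", False)] * 78
    + [("meets expectations", True)] * 11
    + [("meets expectations", False)] * 89
)

rates = voluntary_attrition_by_rating(employees)
for rating, rate in rates.items():
    print(f"{rating}: {rate:.0%} voluntary attrition")
```

When the highest-rated cohort shows the highest rate, as in this illustrative data, that is the dysfunction signal the paragraph above describes.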

The Real Cost of Poor Performance Review Quality

| Scenario | Review Quality | Annual Attrition (1,000 employees) | Annual Replacement Cost |
|---|---|---|---|
| No calibration, unstructured | Poor | 22% | $7.7M |
| Structured, limited calibration | Moderate | 16% | $5.6M |
| Structured with calibration sessions | Good | 12% | $4.2M |
| Continuous, structured, and calibrated | Best-in-class | 9% | $3.15M |

Replacement cost assumes $35,000 per departing employee. Attrition rates drawn from McKinsey performance management research benchmarks.

Related Terms

| Term | Definition |
|---|---|
| 360-Degree Feedback | A performance evaluation method that collects input from all directions in an employee’s professional circle, including peers, direct reports, and manager |
| Performance Improvement Plan (PIP) | A formal document outlining specific performance deficiencies and the expectations and timeline for improvement |
| Calibration Session | A structured meeting in which managers align on rating standards and review preliminary assessments to ensure consistency across the organization |
| Goal Setting | The process of defining specific, measurable performance expectations at the start of a review cycle against which performance will be evaluated |
| Rating Distribution | The statistical spread of performance ratings across an employee population, used to assess whether differentiation is occurring as intended |

Frequently Asked Questions

How often should performance reviews be conducted?

Annual reviews are the minimum; semi-annual is increasingly the standard for organizations prioritizing development. Quarterly formal check-ins, paired with continuous feedback, produce the strongest calibration accuracy and the lowest recency bias. The optimal frequency depends on role complexity and rate of change in performance expectations.

What is the difference between a performance review and a performance improvement plan?

A performance review is a standard evaluation of any employee’s performance relative to expectations, conducted on a regular cycle. A performance improvement plan (PIP) is a formal intervention document specifically for employees whose performance is below the threshold required for continued employment, outlining specific deficiencies, required improvements, and timelines.

Should performance reviews be tied directly to compensation decisions?

Directly tying reviews to compensation decisions in the same conversation reduces the developmental utility of that conversation. Best practice is to conduct the development review conversation separately from the compensation communication, with a temporal gap between the two so the development feedback is processed before compensation is introduced.

How do you handle biased performance ratings?

Calibration sessions are the primary intervention. Requiring managers to present ratings alongside specific behavioral evidence, in a group context where peers can challenge assessments, reduces individual manager bias more effectively than awareness training. Statistical monitoring of rating distributions for demographic differentials provides the organizational-level signal that individual calibration sessions may not surface.

Can performance reviews be done fairly for remote or distributed employees?

Yes, but it requires more deliberate documentation. Remote employees are at higher risk of visibility bias, meaning being underrated because of reduced proximity to the manager. Structured goal-setting, continuous documentation requirements, and explicit calibration protocols for remote workers offset much of this visibility disadvantage. Organizations that apply in-office review standards uniformly to distributed workforces consistently produce inequitable outcomes for remote employees.

Conclusion

The performance review is the most consequential conversation in the employee lifecycle, and the one most frequently conducted badly.

Organizations that invest in review quality through structured frameworks, calibration processes, continuous documentation, and data-driven insight consistently achieve better retention, quality of hire, and internal talent pipeline health than those that treat reviews as an administrative obligation. The cost of a poor performance review system is rarely visible on a quarterly P&L. It accumulates in the voluntary attrition of high performers, the promotion of underperformers, the erosion of organizational capability, and the employer brand damage that follows.

Treat performance review quality as a strategic infrastructure investment, and the returns will appear in the talent metrics that matter most.