A resume tells you what a candidate claims to know.

A skills assessment tells you what they can actually do. In a hiring ecosystem where credentials are increasingly easy to inflate and experience descriptions are optimized for automated screening rather than accuracy, the gap between what is written and what is real has never been wider. Skills assessments are how hiring teams close it.

A skills assessment is a structured evaluation tool designed to measure a candidate’s practical abilities, technical knowledge, or cognitive aptitude in relation to the specific demands of a role. It sits between the pre-screening stage and the final interview, giving hiring teams objective, comparable data on candidates before the most time-intensive parts of the process begin.

Used well, skills assessments feed directly into data-driven recruiting by replacing subjective impressions with measurable outputs. They also play a meaningful role in reducing bias in hiring, shifting the evaluation focus from where someone studied or who they worked for to what they can demonstrably deliver.

For organizations serious about quality of hire, skills assessments are one of the strongest predictive signals available. When integrated into a broader recruitment funnel alongside structured interviews and scorecards, they transform hiring from an educated guess into a genuinely evidence-based decision.

The core metric governing skills assessment effectiveness is the Assessment Predictive Validity Index (APVI): the correlation coefficient between candidate assessment scores and their verified 12-month performance ratings, measured across the organization’s historical hire data.

Assessment Predictive Validity Index (APVI) = Pearson r (Assessment Score, 12-Month Performance Rating)

A well-designed and correctly deployed skills assessment achieves an APVI of 0.35 to 0.55 – substantially higher than the 0.10 to 0.15 predictive validity of unstructured interviews. Assessment programs producing an APVI below 0.20 are either measuring the wrong competencies or measuring them with insufficient precision to justify their use in hiring decisions.
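As a rough illustration of how the APVI could be computed in practice, the sketch below calculates Pearson r between assessment scores and 12-month performance ratings and maps the result onto the bands described above. The function names, the score and rating scales, and the sample data are all hypothetical; a real program would pull these values from its own hire records and would need a much larger sample than eight hires.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cov / sqrt(var_x * var_y)


def interpret_apvi(apvi):
    """Map an APVI value onto the bands described in the paragraph above."""
    if apvi < 0.20:
        return "below 0.20: wrong competencies or insufficient precision"
    if apvi < 0.35:
        return "0.20 to 0.35: usable, but below the well-designed range"
    return "0.35 or higher: in or above the well-designed range"


# Hypothetical data: assessment scores (0-100) and 12-month ratings (1-5),
# one pair per hire. Illustrative only, not real results.
assessment_scores = [62, 71, 55, 88, 93, 47, 79, 66]
performance_ratings = [3.1, 3.4, 2.8, 4.2, 3.9, 3.0, 3.3, 3.6]

apvi = pearson_r(assessment_scores, performance_ratings)
print(f"APVI = {apvi:.2f} ({interpret_apvi(apvi)})")
```

Because a correlation from a handful of hires is very noisy, a calculation like this is only meaningful when it is run across the organization's accumulated hire data.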

What is Skills Assessment?

Skills assessment in hiring is a structured evaluation method that measures a candidate’s demonstrated abilities, technical knowledge, or behavioral competencies through standardized tools – rather than relying on self-reported experience in a resume or conversational claims in an interview. It is distinct from credential verification (which confirms what someone has studied) and from reference checking (which gathers third-party opinion) in that it produces direct, first-hand evidence of what a candidate can actually do.

Skills assessments are typically deployed at one or more stages in the hiring process: as a post-application filter before first-round interviews, as a structured component of a competency-based interview process, or as a final evaluation stage before an offer is made. The most effective programs treat assessment not as a gate but as a diagnostic tool – using assessment data to understand each candidate’s capability profile in depth, not just to assign a pass/fail score. When integrated with an assessment center approach, skills assessments provide multi-method evidence that produces significantly higher hiring accuracy than single-method evaluation.

Why Skills Assessment Is an Essential Competitive Advantage in 2026

The business case for skills assessment in hiring rests on a single underlying fact: credentials and experience are increasingly unreliable predictors of job performance, and the organizations that have replaced credential-based screening with competency-based assessment are consistently producing better hiring outcomes.

Start with what the research says. A meta-analysis published in the Journal of Applied Psychology and widely cited in talent acquisition literature found that structured skills assessments predict job performance with a validity coefficient of 0.35 to 0.54, compared to 0.10 to 0.15 for unstructured interviews and 0.18 to 0.27 for general mental ability tests. The practical implication is that validated skills assessments correlate with later job performance roughly three times as strongly as unstructured interviews do. At an average bad hire cost of $25,000 to $50,000 for mid-level roles, that predictive difference translates directly into bottom-line impact.

The credential reliability problem compounds the case. Research from Harvard Business Review indicates that degree inflation – the practice of requiring four-year degrees for roles that historically did not demand them – has expanded throughout the hiring market without evidence that educational pedigree predicts performance in those roles.

Organizations that screen on credentials rather than capabilities are systematically excluding a large segment of the qualified talent market: the candidates who have the skills the role requires but did not acquire them through the credential pathway the job description specifies. Skills assessment is the mechanism that corrects this exclusion – and the benefit is not just access to a broader talent pool but access to a more capable one, since capability and credential are empirically distinct.

A concrete data point from the technology sector: LinkedIn’s 2025 Global Talent Trends research found that 76% of talent professionals report that skills-based hiring produces better hiring outcomes than degree or experience-based screening for their highest-impact roles, yet only 44% of organizations have implemented structured skills assessment across their hiring processes. The gap between those who have implemented assessment and those who know they should is among the most actionable inefficiencies in talent acquisition today.

The ROI math is direct. A mid-size professional services firm making 50 hires per year at an average cost-per-hire of $8,000 and a bad hire cost of $30,000, with a current bad hire rate of 22%, carries an annual bad hire cost of $330,000. If implementing a structured skills assessment program reduces the bad hire rate by 30% – a conservative estimate given the assessment validity research – the saving is approximately $99,000 annually.

Against a skills assessment platform investment of $20,000 to $40,000 per year, the return is 2.5 to 5 times the investment in bad hire cost reduction alone, excluding the additional value of faster time-to-fill from better-calibrated shortlists and higher offer acceptance rates from more informed hiring conversations.
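The arithmetic behind this example can be reproduced in a few lines. The sketch below uses only the figures quoted above (50 hires, a 22% bad hire rate, a $30,000 bad hire cost, a 30% reduction, and a $20,000 to $40,000 platform cost); the function and variable names are illustrative assumptions.

```python
def annual_bad_hire_cost(hires_per_year, bad_hire_rate, cost_per_bad_hire):
    """Expected yearly cost of bad hires."""
    return hires_per_year * bad_hire_rate * cost_per_bad_hire


# Figures taken from the worked example in the text.
baseline_cost = annual_bad_hire_cost(50, 0.22, 30_000)
reduction_in_bad_hire_rate = 0.30  # relative reduction assumed in the text
annual_saving = baseline_cost * reduction_in_bad_hire_rate

print(f"Baseline bad hire cost: ${round(baseline_cost):,}")
print(f"Annual saving:          ${round(annual_saving):,}")

# Return multiple at the low and high ends of the platform cost range.
for platform_cost in (20_000, 40_000):
    multiple = annual_saving / platform_cost
    print(f"Platform cost ${platform_cost:,}: saving is {multiple:.2f}x the investment")
```

Run with these inputs, the multiples come out just under 5 and just under 2.5, which is where the "2.5 to 5 times" range comes from.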

For TA leaders, the organizational development opportunity is to reframe skills assessment not as a candidate filter but as a hiring quality infrastructure investment. The organizations that have done this – building validated, role-specific assessment programs with outcome feedback loops that measure APVI across their hire population – are consistently reporting higher quality-of-hire scores, lower early attrition, and higher hiring manager satisfaction with shortlist quality than those relying on resume screening and conversational interviews alone.

The Psychology Behind Skills Assessment

Cognitive Bias and Credential Reliance

Cognitive bias in hiring decisions is not the exception – it is the baseline. Research on unstructured interview processes consistently finds that interviewers form a strong first impression within the first five minutes and spend the remainder of the interview seeking information that confirms it. In the absence of structured assessment data, hiring decisions default to proxies that feel like competence signals: educational pedigree, employer brand recognition, and communication style.

These proxies are correlated with socioeconomic background, cultural context, and access to elite institutions far more strongly than they are correlated with actual job performance. Skills assessment provides an evidence base that interrupts this proxy-reliance pattern and gives decision-makers something more predictive to anchor their evaluations on.

Structured Evaluation and the Consistency Effect

Consistency in evaluation is the primary mechanism through which structured skills assessment improves hiring quality over unstructured interviews. When all candidates for a given role complete the same assessment tasks, evaluated against the same scoring rubric, the hiring decision is made on a comparable evidence base rather than on the idiosyncratic conversation that happened to unfold in a particular interview.

This consistency effect is especially powerful in hiring panels: when interviewers evaluate candidates on different questions with no shared scoring framework, their calibration is almost entirely impressionistic. When they evaluate candidates on the same assessment dimensions, calibration conversations become substantive rather than political.

Candidate Perception and Assessment Legitimacy

Candidates’ perception of skills assessment is more positive than many hiring teams assume. Research on candidate experience consistently finds that candidates rate structured, skills-based assessments as more fair and job-relevant than unstructured interviews – provided the assessment is clearly connected to the role requirements and does not feel like a barrier for its own sake.

The perception of fairness is a direct contributor to offer acceptance: candidates who feel evaluated on merit rather than on relationship or impression are more committed to the process and more likely to accept offers when they receive them. This fairness perception is one of the underappreciated business cases for skills assessment beyond its predictive validity.

Skills Assessment vs. Related Hiring Evaluation Methods

| Method | Evidence Type | Predictive Validity | Typical Stage | Primary Strength |
|---|---|---|---|---|
| Skills Assessment | Demonstrated capability | 0.35-0.54 | Post-application or pre-offer | Objective, role-specific evidence |
| Unstructured Interview | Self-reported, conversational | 0.10-0.15 | Any stage | Relationship building |
| Behavioral Interview | Claimed past behavior | 0.20-0.35 | Mid-process | Contextual depth |
| Assessment Center | Multi-method, observed behavior | 0.40-0.58 | Senior roles | Comprehensive evaluation |
| Reference Check | Third-party opinion | 0.15-0.25 | Pre-offer | External validation |
| Cognitive Ability Test | General mental ability | 0.18-0.27 | Post-application | Broad performance predictor |

The critical observation from the table is that skills assessment and assessment centers represent the two highest-validity methods available in standard hiring processes – and they are the two most underused relative to their predictive power. The methods most organizations rely on most heavily, including the unstructured interview, are the methods with the lowest predictive validity for job performance.

What the Experts Say

The future of hiring is not about who went to the best school or who held the most impressive titles. It is about who can actually do the work. Skills assessment is how organizations find out – before the hire, not after it.

– Claudio Fernandez-Araoz, Senior Adviser, Egon Zehnder; Author, “It’s Not the How or the What but the Who”

How to Measure Skills Assessment Effectiveness

Formulas

Assessment Predictive Validity Index (APVI) = Pearson r (Assessment Score, 12-Month Performance Rating)

Assessment Completion Rate (%) = (Candidates Completing Assessment / Candidates Invited) x 100

False Positive Rate (%) = (Above-Threshold Hires Who Underperform at 12m / Total Above-Threshold Hires) x 100
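The two percentage formulas above translate directly into code. The sketch below is a minimal version, assuming the counts have already been pulled from an applicant tracking system; the function names and the sample counts are hypothetical.

```python
def completion_rate(completed: int, invited: int) -> float:
    """Assessment Completion Rate (%) = completed / invited x 100."""
    if invited <= 0:
        raise ValueError("invited must be positive")
    if not 0 <= completed <= invited:
        raise ValueError("completed must be between 0 and invited")
    return 100 * completed / invited


def false_positive_rate(underperformers: int, above_threshold_hires: int) -> float:
    """False Positive Rate (%) = above-threshold hires who underperform
    at 12 months / total above-threshold hires x 100."""
    if above_threshold_hires <= 0:
        raise ValueError("above_threshold_hires must be positive")
    if not 0 <= underperformers <= above_threshold_hires:
        raise ValueError("underperformers must be between 0 and above_threshold_hires")
    return 100 * underperformers / above_threshold_hires


# Hypothetical counts for one hiring cycle.
print(f"Completion rate:     {completion_rate(168, 200):.1f}%")
print(f"False positive rate: {false_positive_rate(6, 50):.1f}%")
```

Both functions reject impossible inputs, such as more completions than invitations, so that data-entry errors surface early instead of producing a rate above 100%.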

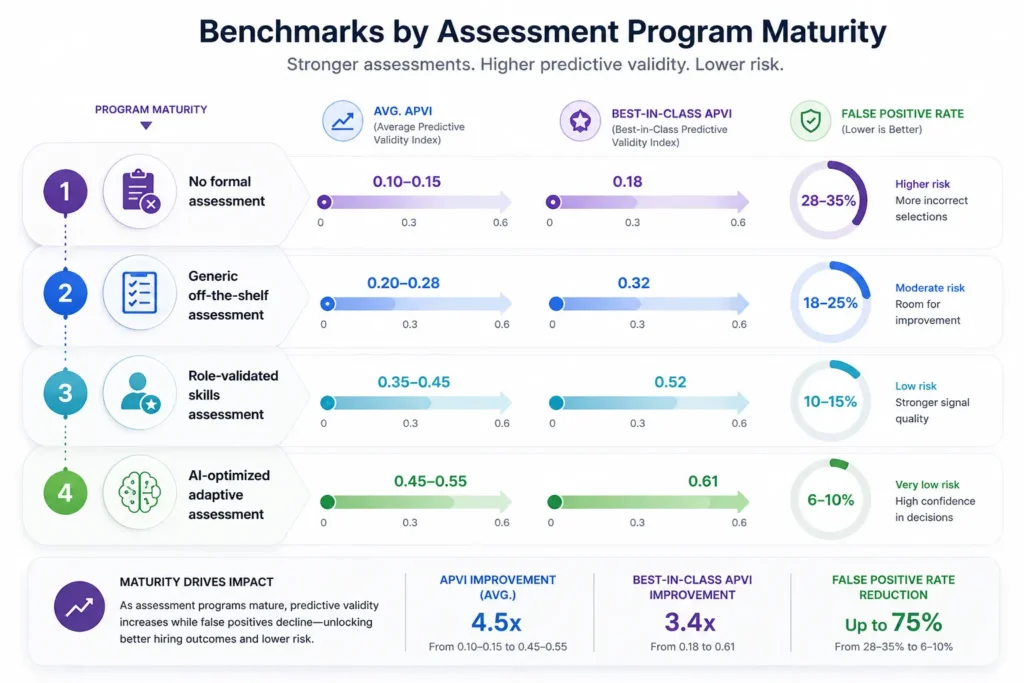

Benchmarks by Assessment Program Maturity:

| Program Maturity | Avg. APVI | Best-in-Class APVI | False Positive Rate |
|---|---|---|---|
| No formal assessment | 0.10-0.15 | 0.18 | 28-35% |
| Generic off-the-shelf assessment | 0.20-0.28 | 0.32 | 18-25% |
| Role-validated skills assessment | 0.35-0.45 | 0.52 | 10-15% |
| AI-optimized adaptive assessment | 0.45-0.55 | 0.61 | 6-10% |

Key Strategies for Effective Skills Assessment

How Can AI and Automation Support Skills Assessment?

Adaptive Assessment Design

AI-powered adaptive assessment platforms adjust question difficulty and domain coverage dynamically based on candidate responses, producing a more precise capability measurement in less candidate time than fixed-format assessments. This adaptive design reduces assessment fatigue – one of the primary drivers of candidate drop-off during assessment stages – while improving measurement precision for candidates at both the high and low ends of the capability distribution. Adaptive assessment also makes it significantly harder to game the test through preparation, since the question sequence is not predictable.

AI-Powered Scoring and Pattern Recognition

For open-ended assessment tasks – writing samples, case study responses, code reviews – natural language processing and machine learning scoring models can analyze responses against validated rubrics with inter-rater reliability comparable to expert human scorers, at a fraction of the time cost. This automation enables organizations to include open-ended, high-signal assessment tasks in high-volume screening processes that previously could only accommodate multiple-choice formats. The result is a higher-validity screen at the top of the funnel without the human resource cost that previously made it impractical. Integrating these outputs with AI resume screening data produces a richer candidate profile before the first human conversation.

Bias Detection in Assessment Data

AI tools applied to assessment outcomes can identify systematic patterns of demographic differential scoring across assessment types, role families, and evaluator groups. This analytical layer surfaces assessment bias that would otherwise remain invisible in aggregate data – for example, identifying that a specific assessment type produces statistically significant differential scores by gender that are not explained by actual performance differences. Early detection of these patterns allows assessment programs to be recalibrated before they produce discriminatory hiring outcomes at scale.

Candidate Experience Optimization

AI-powered assessment platforms can analyze candidate behavior data during assessment completion – time on task, question revisit patterns, interface interaction – to identify assessment design problems that are driving abandonment or reduced performance quality. This real-time quality monitoring allows continuous improvement of the assessment experience without waiting for post-hoc candidate surveys, producing higher completion rates and more accurate data from the candidates who matter most.

Skills Assessment and Workforce Equity

Credential Bias and Skills-Based Inclusion

The shift from credential-based to skills-based screening is one of the most significant structural equity interventions available to organizations through their hiring practices. Educational credential requirements disproportionately exclude candidates from lower socioeconomic backgrounds, first-generation college students, career changers, and self-taught professionals – groups that are systematically underrepresented in many high-value roles not because of capability deficits but because of credential access gaps.

Skills assessment that evaluates demonstrated competency rather than educational pedigree directly counters this exclusion, expanding the accessible talent pool while simultaneously improving predictive validity. This is the rare DEI intervention that also improves hiring quality, not one that requires trading one off against the other.

Standardized Assessment and the Reduction of Affinity Bias

Unstructured interviews are the primary mechanism through which affinity bias – the tendency to evaluate more favorably candidates who resemble the evaluator – enters the hiring process. When a hiring decision is made primarily on interview impression, the implicit factors driving that impression include communication style, cultural background, and interpersonal rapport – all of which are more strongly correlated with demographic similarity to the evaluator than with job performance.

Standardized skills assessment, evaluated against explicit rubrics, reduces affinity bias by providing a shared evidence base that all hiring decision-makers evaluate against the same criteria. This is why blind hiring programs that remove identity signals from assessment scoring consistently produce more diverse shortlists than those that preserve them.

Equitable Assessment Design and Adverse Impact Analysis

Not all skills assessments are equitable by design. Tests that include culturally specific knowledge, context-dependent language, or time pressure formats that disadvantage candidates from non-native language backgrounds can produce adverse impact – statistically disproportionate exclusion of protected groups that is not justified by the predictive validity of the test for the role.

Organizations implementing skills assessment programs should conduct adverse impact analyses on assessment outcomes by demographic group before full deployment, and recalibrate or replace assessments where adverse impact is identified. The legal and reputational risk of deploying an assessment with unexamined adverse impact is significant and avoidable with proper validation methodology.
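One simple way to start an adverse impact analysis is to compare pass rates across groups. The sketch below computes each group's selection rate and its ratio to the highest-rate group, then flags any group whose ratio falls below 0.8. That 0.8 cut-off is the "four-fifths" rule of thumb from US employment selection guidelines; the article does not prescribe a specific test, so treat the threshold, the function names, and the sample counts as assumptions. A flag here is a prompt for further review, not a legal or statistical conclusion.

```python
def selection_rates(outcomes):
    """outcomes maps group name -> (candidates passing, candidates assessed)."""
    rates = {}
    for group, (passed, assessed) in outcomes.items():
        if assessed <= 0:
            raise ValueError(f"group {group!r} has no assessed candidates")
        rates[group] = passed / assessed
    return rates


def impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any passing candidates")
    return {group: rate / highest for group, rate in rates.items()}


def flag_adverse_impact(outcomes, threshold=0.8):
    """Groups whose impact ratio falls below the threshold (assumed 0.8)."""
    ratios = impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical pilot data: (passed, assessed) per group.
pilot_outcomes = {
    "group_a": (48, 80),  # 60% pass rate
    "group_b": (27, 60),  # 45% pass rate
}

for group, ratio in impact_ratios(pilot_outcomes).items():
    print(f"{group}: impact ratio {ratio:.2f}")
print("Flagged for review:", flag_adverse_impact(pilot_outcomes))
```

With small groups, a ratio like this can swing widely on a few candidates, so it is usually paired with a significance test before an assessment is recalibrated or replaced.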

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| Candidates abandoning assessment before completion | Reduce assessment length to the minimum required for valid measurement; clearly explain the connection between assessment tasks and the role before candidates begin |
| Assessment results do not predict performance in practice | Validate the assessment against actual performance data from past hires in equivalent roles; replace assessments with APVI below 0.25 |
| Hiring managers overriding low assessment scores based on interview impression | Build assessment score review into the structured hiring debrief; require documented justification for any hiring decision that contradicts assessment outcome |
| DEI concerns about differential assessment performance by group | Conduct adverse impact analysis before deployment; prioritize work-sample and situational judgment formats, which show lower differential performance than abstract reasoning tests |

Real-World Case Studies

Case Study 1: The Financial Services Company

A 1,200-person financial services company was experiencing a 28% bad hire rate for its analyst cohort – defined as underperformance against peer cohort at the 12-month mark. An audit of the hiring process found that analyst selection was based almost entirely on GPA, university ranking, and a 30-minute unstructured interview.

The company introduced a skills assessment combining a structured data analysis case study (45 minutes) and a written communication exercise (30 minutes), scored against validated rubrics derived from the performance profiles of its top-quartile analysts. Bad hire rate for the cohort fell from 28% to 11% over two hiring cycles. Annual savings from reduced bad hire and early attrition costs: approximately $280,000 for a cohort of 40 hires.

Case Study 2: The Technology Scale-Up

A Series B technology company with a rapidly growing engineering team was using technical coding challenges as its sole assessment method, administered via a general-purpose platform not calibrated to its specific engineering context. Assessment completion rates were 58%, meaning more than 40% of invited candidates dropped out before they could be evaluated.

The company redesigned its assessment program using work-sample exercises that replicated actual sprint tasks from its own codebase, reduced the assessment time from 3 hours to 75 minutes, and added a brief context-setting video from the engineering lead. Assessment completion rates rose from 58% to 84%. APVI for the new assessment calculated against 12-month performance data: 0.47, versus 0.21 for the previous format.

Case Study 3: The Retail Chain

A national retail chain redesigned its store manager assessment program to be entirely mobile-compatible after discovering that 65% of candidates were attempting assessments on mobile devices but the assessment platform was not optimized for mobile. The mobile-incompatible format produced a disproportionate completion drop-off among candidates who were younger, had lower income levels, and were more likely to rely on smartphones as their primary internet device – precisely the demographic the chain was trying to attract into management pipelines.

After mobile optimization, completion rates rose from 52% to 81%. Diversity of the store manager pipeline – measured as proportion from underrepresented groups – improved by 18 percentage points within one hiring cycle.

Building a Skills Assessment Tracking System: What to Measure?

Skills Assessment Across the Hiring Lifecycle

Sourcing and Screening Stage

Skills assessment at the sourcing and screening stage functions as a quality filter that concentrates human evaluator time on candidates who have demonstrated relevant capability rather than those who merely appear qualified on paper. At this stage, assessments are typically brief, role-family-specific, and designed to identify clear capability thresholds rather than nuanced capability profiles. Combining behavioral interview data with structured assessment scores at this stage produces a stronger shortlist than either method achieves alone, because the two capture different dimensions of performance-relevant capability.

Interview and Deep Evaluation Stage

At the interview stage, skills assessment shifts from filtering to profiling. Assessment data collected prior to the interview informs which competency areas require deeper exploration in the interview conversation – converting the interview from a general capability screen into a targeted investigation of the dimensions that are most uncertain or most predictive for the specific role.

Hiring managers who receive a structured assessment profile alongside a candidate’s application consistently report higher-quality debrief conversations and more confident hiring decisions than those evaluating candidates without prior assessment data.

Offer and Onboarding Stage

Assessment data gathered during hiring is a valuable input to onboarding design – identifying the development areas where a new hire’s assessment profile suggests investment will accelerate time-to-performance. Organizations that connect assessment outcomes to onboarding plans produce faster ramp-up times and lower 90-day attrition rates than those that treat assessment as a hiring-stage filter with no downstream application. The assessment profile is also a useful baseline for early performance conversations between new hires and their managers, providing a shared language for the development priorities identified before the role began.

Post-Hire Performance Management

The long-term value of skills assessment data accumulates through its connection to post-hire performance outcomes. Organizations that track APVI systematically over time build an increasingly accurate model of the assessment signals that best predict performance in each role family – enabling continuous improvement of assessment design in ways that are grounded in actual organizational performance data rather than external validity research. This closed-loop assessment improvement cycle is one of the primary competitive advantages that sophisticated talent organizations build over time, and one that becomes more valuable with each hiring cycle.

The Real Cost of Hiring Without Skills Assessment

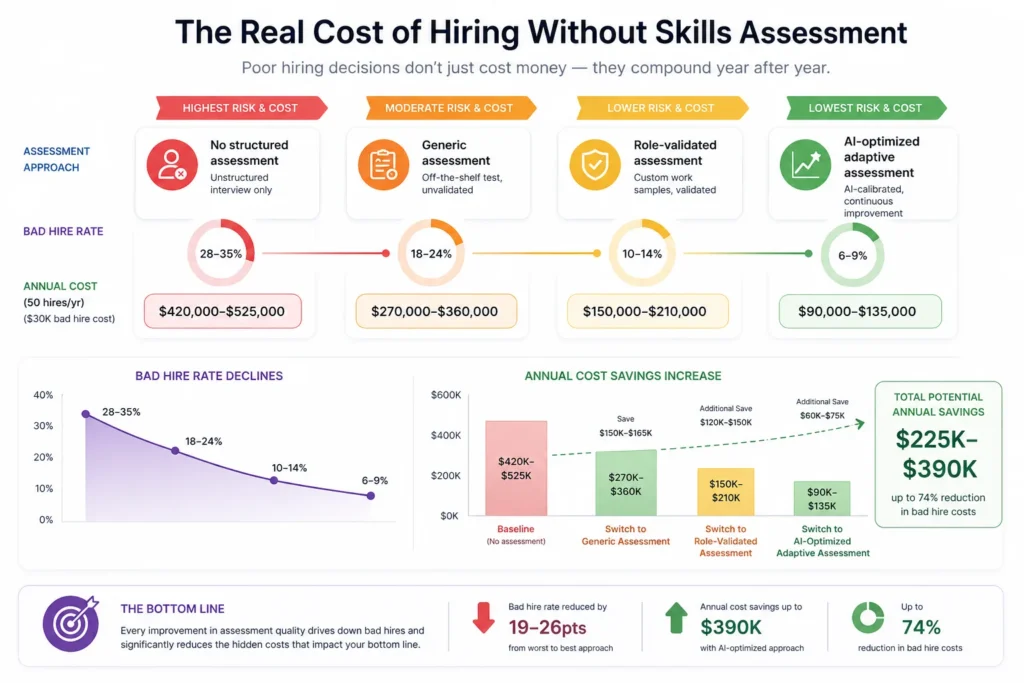

| Scenario | Assessment Approach | Bad Hire Rate | Annual Cost (50 hires/yr, $30K bad hire cost) |
|---|---|---|---|
| No structured assessment | Unstructured interview only | 28-35% | $420,000-$525,000 |
| Generic assessment | Off-the-shelf test, unvalidated | 18-24% | $270,000-$360,000 |
| Role-validated assessment | Custom work samples, validated | 10-14% | $150,000-$210,000 |
| AI-optimized adaptive assessment | AI-calibrated, continuous improvement | 6-9% | $90,000-$135,000 |

Bad hire cost assumed at $30,000 per instance including replacement recruitment, onboarding, and productivity loss during tenure of underperforming hire.
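The cost ranges in the table follow from multiplying hires per year by the bad hire rate and the per-instance cost. The sketch below regenerates them from the table's own inputs, which makes it easy to substitute an organization's own hiring volume or cost assumption. The function name and data layout are illustrative; rates are kept as whole percentages so the arithmetic stays exact.

```python
def annual_cost_range(low_pct, high_pct, hires_per_year=50, cost_per_bad_hire=30_000):
    """Annual bad hire cost at the low and high ends of a bad hire rate range.

    Rates are whole-number percentages, e.g. 28 for 28%.
    """
    low = hires_per_year * low_pct * cost_per_bad_hire // 100
    high = hires_per_year * high_pct * cost_per_bad_hire // 100
    return low, high


# Bad hire rate ranges (in percent) taken from the table above.
scenarios = {
    "No structured assessment": (28, 35),
    "Generic assessment": (18, 24),
    "Role-validated assessment": (10, 14),
    "AI-optimized adaptive assessment": (6, 9),
}

for name, (low_pct, high_pct) in scenarios.items():
    low, high = annual_cost_range(low_pct, high_pct)
    print(f"{name}: ${low:,}-${high:,}")
```

Changing `hires_per_year` or `cost_per_bad_hire` rescales every row, which is a quick way to test how sensitive the business case is to those two assumptions.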

Related Terms

| Term | Definition |
|---|---|
| Predictive Validity | The statistical relationship between an assessment score and a future outcome such as job performance, measured as a correlation coefficient |
| Work Sample Test | An assessment that requires candidates to complete tasks representative of the actual work of the role being hired for |
| Situational Judgment Test (SJT) | An assessment presenting realistic work scenarios and asking candidates to choose between alternative responses, measuring judgment and decision-making |
| Adverse Impact | A statistically significant difference in selection rates between protected demographic groups that is not justified by legitimate job-related criteria |
| Competency Framework | A structured model defining the knowledge, skills, behaviors, and attributes required for performance in a role or role family |

Frequently Asked Questions

What is the difference between skills assessment and a competency-based interview?

A competency-based interview asks candidates to describe past behaviors as evidence of competencies. A skills assessment asks candidates to demonstrate current capabilities through structured tasks. Both assess competency, but the assessment produces observed evidence while the interview produces claimed evidence. The two methods are more predictive in combination than either is alone.

How long should a skills assessment be?

Research on assessment completion rates shows that assessments under 45 minutes maintain candidate completion rates above 80% for most role levels. Assessments above 90 minutes see significant drop-off, particularly among currently employed (passive) candidates who have limited discretionary time. Design the minimum assessment that provides sufficient predictive signal for the role, not the most comprehensive one technically possible.

Do skills assessments disadvantage candidates from underrepresented groups?

It depends on the assessment design. Generic cognitive ability tests and abstract reasoning assessments show documented differential performance by demographic group. Work-sample assessments, situational judgment tests, and role-specific technical tasks show substantially lower differential performance. Conducting an adverse impact analysis before deployment is essential for any assessment used in a screening role.

Can skills assessment replace the interview?

No, but it can significantly reduce the number of interviews needed. Assessment data allows organizations to make faster, more informed decisions at earlier stages – reducing the average number of interviews per hire from 5 to 3 or fewer. It cannot replace the judgment, relationship-building, and nuanced evaluation that a well-structured interview provides, but it makes those interviews more productive by focusing them on the right questions.

How is assessment validity measured and maintained over time?

Validity is measured by calculating the correlation (APVI) between assessment scores and 12-month performance ratings for all hires who completed the assessment. Maintaining validity requires recalibrating assessments when role requirements change, when business context shifts materially, or when APVI measurements show decline below the 0.25 threshold. Organizations should calculate APVI at least annually for any assessment used in hiring decisions affecting more than 20 hires per year.

Conclusion

Skills assessment is the most underused high-validity tool in the talent acquisition toolkit. In a hiring environment where credentials are increasingly poor proxies for capability, where bias in hiring costs organizations access to the broadest possible talent market, and where AI-powered sourcing has solved the volume problem without solving the quality problem, the organizations that invest in validated, role-specific assessment programs are the ones building a structural hiring advantage.

The evidence for their impact is not theoretical – it is measurable in APVI data, bad hire rates, early attrition numbers, and hiring manager satisfaction scores. The question for every talent acquisition leader is not whether skills assessment works. It is how long they can afford to hire without it.