Two candidates. Two interviewers. Two completely different conversations.

If that is how your hiring process works, the decision at the end of it is not really a comparison; it is a guess dressed up as one. Structured interviews exist to fix exactly that, bringing consistency, fairness, and genuine predictive power to one of the most consequential steps in the entire hiring process.

A structured interview is a format where every candidate is asked the same predetermined questions, evaluated against the same criteria, and scored using the same framework. It removes the conversational drift that unstructured interviews are prone to and connects directly to competency-based interview methodology, where questions are designed to surface specific, role-relevant behaviors rather than general impressions.

The impact on bias in hiring is significant. When every candidate faces the same questions in the same order, personal rapport and first impressions have far less room to quietly override objective assessment. Paired with a well-designed interview scorecard, structured interviews generate evaluation data that is both defensible and genuinely comparable across candidates.

For teams building a data-driven recruiting function, structured interviews are not just a fairness tool. They are a data collection mechanism that improves quality of hire over time by revealing which questions and criteria actually predict on-the-job performance.

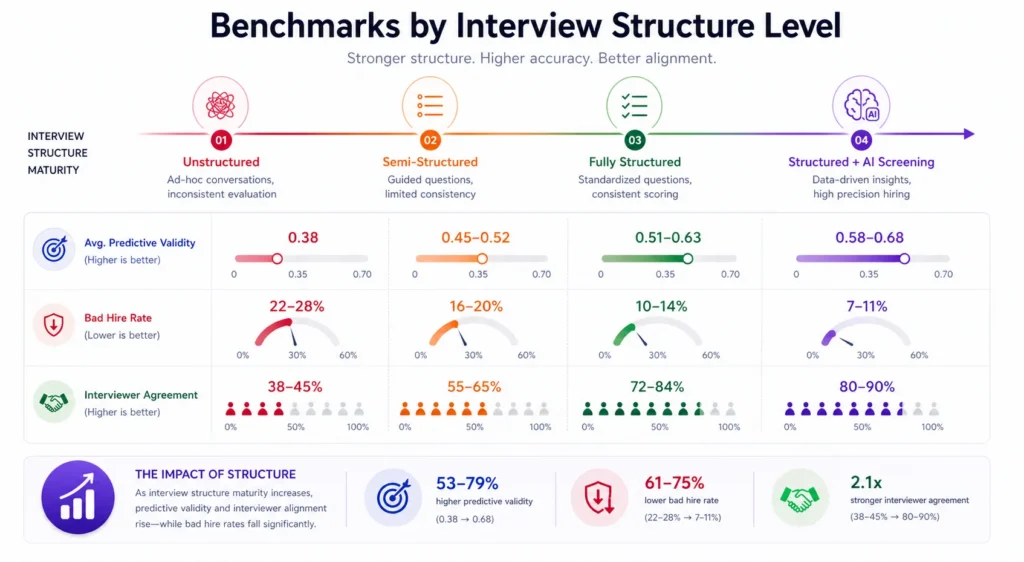

The core metric governing structured interview effectiveness is the Predictive Validity Coefficient: the statistical correlation between structured interview scores and subsequent job performance ratings. A coefficient of 0.50 or above is considered strong in organizational psychology. Structured interviews consistently achieve coefficients of 0.51 to 0.63, compared to 0.38 for unstructured formats.

Predictive Validity Coefficient = Correlation (Interview Score, 12-Month Performance Rating)
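As a minimal sketch of the formula above, the coefficient can be computed as a Pearson correlation over matched pairs of interview score and 12-month rating. The function name `predictive_validity` and the sample numbers are illustrative assumptions, not data from any study cited here.

```python
from math import sqrt


def predictive_validity(interview_scores, performance_ratings):
    """Pearson correlation between interview scores and later performance ratings."""
    if len(interview_scores) != len(performance_ratings):
        raise ValueError("each hire needs one interview score and one rating")
    n = len(interview_scores)
    if n < 2:
        raise ValueError("need at least two hires to compute a correlation")

    mean_x = sum(interview_scores) / n
    mean_y = sum(performance_ratings) / n
    dx = [x - mean_x for x in interview_scores]
    dy = [y - mean_y for y in performance_ratings]

    covariance = sum(a * b for a, b in zip(dx, dy))
    var_x = sum(a * a for a in dx)
    var_y = sum(b * b for b in dy)
    if var_x == 0 or var_y == 0:
        raise ValueError("scores or ratings have no variation")
    return covariance / sqrt(var_x * var_y)


# Hypothetical data: one entry per hire, in the same order in both lists.
scores = [3.2, 4.1, 2.8, 4.6, 3.9, 3.0]
ratings = [3.0, 4.0, 3.1, 4.4, 3.5, 2.9]

r = predictive_validity(scores, ratings)
print(f"Predictive validity coefficient: {r:.2f}")
```

With only a handful of hires the coefficient is very noisy, so a figure like this is best read per role family once enough hires have a 12-month rating.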

What is a Structured Interview?

A structured interview is a formal interview method in which all candidates for a specific role are assessed using the same questions, asked in the same sequence, with responses rated against predefined scoring criteria established before interviewing begins. This approach ensures comparability of evaluations across candidates and reduces the influence of interviewer bias on hiring decisions.

The structured interview is distinct from other interview formats in one fundamental way: it systematically removes the interviewer’s discretion from question selection. Interviewers still exercise judgment, but they do so within a defined framework rather than improvising the evaluation from scratch. The result is a process that is simultaneously more fair, more consistent, and more predictive of actual job performance than its unstructured counterpart.

Why Structured Interviews Are a Competitive Advantage in Modern Hiring

The case for structured interviews is one of the most well-supported findings in organizational psychology, and one of the least implemented practices in actual hiring. Understanding why that gap exists is as important as understanding how much it costs organizations.

The foundational research is unambiguous. Schmidt and Hunter’s landmark meta-analysis examining decades of personnel research found that structured interviews had markedly higher predictive validity for job performance than unstructured interviews: 0.51 versus 0.38, which is close to double the share of performance variance explained. More recent analyses have confirmed and extended this finding.

Research published by the American Psychological Association found that structured interviews predicted job performance significantly more accurately than unstructured formats across virtually all role types, industries, and seniority levels. You can explore Google’s re:Work guide on structured interviewing for a practitioner-level breakdown of how the method translates into real hiring processes.

Yet LinkedIn’s Global Talent Trends research found that only 35% of organizations use structured interviews as standard practice for all roles. The remaining 65% rely primarily on conversational, unstructured formats, despite overwhelming evidence that these formats are less accurate, less fair, and more legally vulnerable.

Why the gap? Three reasons dominate. First, structured interviews require upfront investment: building question sets, developing scoring rubrics, and training interviewers takes time that most hiring teams feel they cannot spare. Second, interviewers frequently resist the format because it feels mechanical, reducing the conversational latitude they prefer. Third, organizations that have never measured the cost of a bad hire have no benchmark against which to calculate the ROI of switching to a structured method.

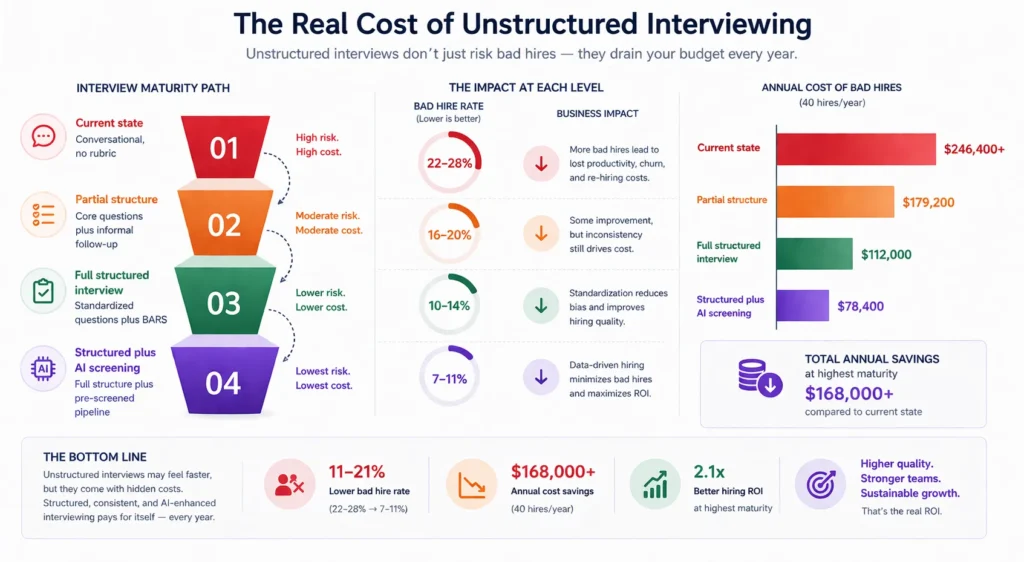

The ROI calculation is not complex. Consider a professional services firm making 40 hires per year at an average seniority of senior associate, with a cost-per-hire of approximately $9,000 and a bad hire cost of $28,000 per instance. If their current unstructured interview process produces a bad hire rate of 22%, consistent with industry averages for unstructured formats, the annual cost of hiring errors is approximately $246,400.

Research on structured interviewing consistently finds that switching to a structured format reduces bad hire rates by 30 to 50%. At a 40% reduction, the same firm’s bad hire cost falls to approximately $147,840, a saving of nearly $100,000 annually. The cost of implementing structured interviews across 40 roles, including question development, rubric building, and interviewer training, is typically under $20,000 for a firm of this size. The return is approximately 5:1 in the first year.
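The arithmetic in this example can be reproduced with a few lines. This is a sketch using only the figures quoted above; the $20,000 implementation cost is the upper bound given in the text, and the variable names are illustrative.

```python
def annual_bad_hire_cost(hires_per_year, bad_hire_rate, cost_per_bad_hire):
    """Expected yearly cost of hiring errors: hires x error rate x cost per error."""
    return hires_per_year * bad_hire_rate * cost_per_bad_hire


hires = 40
cost_per_bad_hire = 28_000
baseline_rate = 0.22          # unstructured process
reduction = 0.40              # assumed relative reduction after switching
implementation_cost = 20_000  # upper bound quoted in the text

baseline = annual_bad_hire_cost(hires, baseline_rate, cost_per_bad_hire)
improved = annual_bad_hire_cost(hires, baseline_rate * (1 - reduction), cost_per_bad_hire)
saving = baseline - improved
roi = saving / implementation_cost

print(f"Baseline bad-hire cost: ${baseline:,.0f}")  # $246,400
print(f"After structuring:      ${improved:,.0f}")  # $147,840
print(f"Annual saving:          ${saving:,.0f}")    # $98,560
print(f"First-year return:      {roi:.1f}:1")       # 4.9:1
```

Changing `reduction` to 0.30 or 0.50 shows how sensitive the return is to the assumed improvement in bad hire rate.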

The competitive advantage of structured interviews extends beyond the internal P&L. Candidates evaluate the quality of the interview process as a signal about the quality of the organization. Research consistently finds that candidates rate structured interviews as more fair and professional than unstructured ones, even when they find them more demanding. In a competitive talent market where candidate experience is a genuine differentiator, a well-executed structured interview communicates organizational quality that an improvised conversation simply cannot.

For TA leaders, the practical implication is clear. Structured interviewing should be the organizational default, not an option reserved for executive hires or diversity-sensitive processes. Organizations that implement structured interviews at scale and pair them with AI-powered screening layers consistently produce higher Quality of Hire scores, lower early attrition, and stronger employer brand outcomes than those relying on interviewer intuition.

The Psychology Behind Structured Interview Design

Cognitive Load and Evaluator Fatigue

Unstructured interviews place maximum cognitive demand on the interviewer, who must simultaneously generate questions, listen to responses, assess answers, and manage conversational flow. This cognitive overload consistently degrades evaluation quality, particularly later in an interview day. Structured interviews distribute this cognitive load by removing question generation from the in-room task list. The interviewer’s full cognitive resource is available for listening, probing, and scoring, which produces more accurate and more consistent evaluations across a full interview schedule.

Anchoring and First Impression Dominance

Research on hiring decision psychology consistently finds that interviewers form a strong initial impression of a candidate within the first few minutes and then use the remaining interview time to confirm that impression rather than genuinely evaluate the candidate. Structured interviews counteract this anchoring effect by requiring evaluators to score specific responses against predetermined criteria, making it harder for a strong opening impression to carry a weak substantive answer. The behavioral interview methodology that underpins most structured formats is specifically designed to redirect interviewer attention from impression to behavioral evidence.

Interviewer Consistency and the Reliability Problem

A fundamental problem with unstructured interviews is that the same candidate evaluated by two different interviewers will receive systematically different assessments, not because the candidate performed differently but because the interviewers asked different questions and weighted different attributes. Structured interviews solve this reliability problem by ensuring all interviewers evaluate the same behaviors against the same criteria, making scores comparable across evaluators and making calibration conversations meaningful rather than impressionistic.

Structured Interview vs. Related Interview Formats

| Format | Question Standardization | Scoring Method | Predictive Validity | Primary Use |
|---|---|---|---|---|
| Structured Interview | All questions predefined | Rubric-scored | High (0.51-0.63) | All role levels |
| Unstructured Interview | Interviewer-generated | Holistic impression | Moderate (0.38) | Common in practice |
| Semi-Structured Interview | Core questions fixed, follow-up flexible | Partial rubric | Moderate-High (0.45-0.55) | Senior/complex roles |
| Behavioral Interview | Past-behavior questions, predefined | STAR-scored rubric | High (0.51+) | Competency assessment |
| Situational Interview | Hypothetical scenarios, predefined | Anchored rubric | High (0.50+) | Future-behavior assessment |
| Panel Interview | May or may not be structured | Variable | Depends on structure | Senior/committee decisions |

The critical distinction between a structured interview and a competency-based interview is that competency-based interviews define the competencies being assessed but do not always standardize the specific questions used across all candidates. A structured interview adds the constraint of full question standardization, making it more reliable but requiring more upfront design investment.

What the Experts Say

Structured interviews are the single most cost-effective improvement any hiring organization can make. The research has been clear for decades. The questions are predefined, the scoring is anchored, and the predictive validity is nearly double that of conversational interviews. The only reason not to use them is that they require discipline, and discipline is harder than conversation.

– Frank L. Schmidt, Professor Emeritus of Management and Organizations, University of Iowa; co-author of the landmark meta-analysis on personnel selection methods.

How to Measure Structured Interview Effectiveness

Formula

Predictive Validity Coefficient = Correlation (Interview Score, 12-Month Performance Rating)

Interview-to-Offer Rate (%) = (Offers Extended / Candidates Interviewed) × 100

Structured Interview Completion Rate (%) = (Interviews with Full Rubric Completion / Total Interviews Conducted) × 100

Interviewer Agreement Rate (%) = (Cases with Score Variance Under 1 Point / Total Multi-Interviewer Cases) × 100
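The three rate formulas can be sketched as follows. One assumption is worth flagging: "score variance under 1 point" is read here as the gap between the highest and lowest interviewer score for a candidate, not statistical variance. All counts and scores are hypothetical.

```python
def percentage(part, whole):
    """Return part / whole as a percentage, guarding against an empty denominator."""
    if whole <= 0:
        raise ValueError("denominator must be positive")
    return part / whole * 100


def interviewer_agreement_rate(cases, max_spread=1.0):
    """Share of multi-interviewer cases whose scores differ by less than max_spread.

    ASSUMPTION: "variance" is interpreted as max score minus min score.
    """
    # Only candidates scored by two or more interviewers count toward the rate.
    multi = [scores for scores in cases if len(scores) >= 2]
    agreeing = sum(1 for scores in multi if max(scores) - min(scores) < max_spread)
    return percentage(agreeing, len(multi))


# Hypothetical counts for one hiring cycle.
offer_rate = percentage(10, 45)       # offers extended / candidates interviewed
completion_rate = percentage(48, 50)  # fully completed rubrics / interviews held

# Hypothetical overall scores per candidate, one entry per interviewer.
cases = [[4.0, 4.5], [3.0, 4.5, 3.5], [2.0, 2.5, 2.0], [5.0]]
agreement = interviewer_agreement_rate(cases)

print(f"Interview-to-offer rate:    {offer_rate:.1f}%")
print(f"Rubric completion rate:     {completion_rate:.1f}%")
print(f"Interviewer agreement rate: {agreement:.1f}%")
```

The single-interviewer case (`[5.0]`) is excluded from the agreement denominator, which matches the "Total Multi-Interviewer Cases" wording in the formula.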

Benchmarks by Interview Structure Level

| Structure Level | Avg. Predictive Validity | Bad Hire Rate | Interviewer Agreement |
|---|---|---|---|
| Unstructured | 0.38 | 22-28% | 38-45% |
| Semi-Structured | 0.45-0.52 | 16-20% | 55-65% |
| Fully Structured | 0.51-0.63 | 10-14% | 72-84% |
| Structured + AI Screening | 0.58-0.68 | 7-11% | 80-90% |

Key Strategies for Implementing Structured Interviews

How Can AI and Automation Support Structured Interviews?

AI-Generated Interview Question Sets

Natural language AI tools can generate role-specific, competency-mapped question sets from a job description in minutes, producing structured interview guides that previously required hours of TA specialist time. The AI output still requires human review and refinement, but it removes the blank-page problem that prevents many hiring teams from building structured formats for every open role across the business.

Automated Interview Score Aggregation

AI-powered applicant tracking systems can aggregate rubric scores from multiple interviewers, calculate inter-rater reliability metrics, flag high-variance assessments for calibration review, and produce composite candidate rankings without manual data compilation. This automation converts structured interview data into actionable shortlist intelligence rather than leaving it scattered across disconnected evaluation forms.
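The aggregation step described here does not depend on any particular ATS. A minimal sketch, assuming each interviewer submits one overall rubric score per candidate, might look like the following. The candidate and interviewer identifiers, the use of population standard deviation as the spread measure, and the 1.0 threshold are all illustrative assumptions.

```python
from statistics import mean, pstdev


def summarize(scores_by_candidate, spread_threshold=1.0):
    """Build a ranked shortlist with a calibration flag for high-disagreement cases."""
    rows = []
    for candidate, scores in scores_by_candidate.items():
        values = list(scores.values())
        spread = pstdev(values)  # disagreement between interviewers
        rows.append({
            "candidate": candidate,
            "composite": mean(values),
            "spread": spread,
            "needs_calibration": spread > spread_threshold,
        })
    # Highest composite score first.
    return sorted(rows, key=lambda row: row["composite"], reverse=True)


# Hypothetical rubric scores on a 1-5 scale: candidate -> interviewer -> score.
scores_by_candidate = {
    "cand_a": {"int_1": 4.0, "int_2": 4.5, "int_3": 4.0},
    "cand_b": {"int_1": 2.0, "int_2": 5.0, "int_3": 3.0},
    "cand_c": {"int_1": 3.0, "int_2": 3.0, "int_3": 3.5},
}

shortlist = summarize(scores_by_candidate)
for row in shortlist:
    flag = "  <- review in calibration" if row["needs_calibration"] else ""
    print(f"{row['candidate']}: composite {row['composite']:.2f}, "
          f"spread {row['spread']:.2f}{flag}")
```

In this sample, `cand_b` ranks second on composite score but is flagged because the three interviewers disagree widely, which is the kind of case a debrief should examine before the ranking is trusted.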

Bias Signal Detection in Scoring Patterns

Machine learning models trained on historical interviewer scoring data can identify systematic bias patterns, such as a specific interviewer consistently scoring candidates from a particular demographic lower on subjective competencies. Surfacing these patterns for calibration conversations enables organizations to address bias in hiring structurally rather than relying on individual interviewer awareness or annual training programs.

Video Interview Integration and Response Analysis

AI-powered video interview platforms can deliver structured interview questions in a standardized format, ensuring that every candidate receives identical question presentation and timing. Some platforms offer NLP-based response analysis as a supplementary input, flagging competency indicators in candidate language for interviewer review, though human evaluation remains the primary assessment mechanism for role-critical competencies.

Structured Interviews as an Equity Tool: Beyond Compliance

Structural Fairness vs. Intentional Fairness

The most important insight about structured interviews and equity is that fairness in hiring does not primarily come from interviewer intentions; it comes from interview structures. Research on inclusive hiring practices consistently demonstrates that well-intentioned interviewers using unstructured formats still produce demographically skewed outcomes, while interviewers with no explicit DEI training using fully structured formats produce significantly more equitable results. Structure is not a substitute for DEI commitment, but it is a more reliable mechanism for producing equitable outcomes than individual commitment alone.

Standardization and Underrepresented Candidate Attrition

Unstructured interviews disproportionately disadvantage candidates from underrepresented groups for three connected reasons:

- They reward communication styles that align with the majority interviewer’s cultural norms and expectations

- They allow affinity bias to operate through conversational flow and subjective rapport assessment

- They lack evaluative anchors that would require an interviewer to justify a low score with behavioral evidence rather than intuition

Structured interviews address all three mechanisms by requiring consistent question presentation, rubric-based scoring, and documented behavioral evidence for every evaluation decision across every candidate.

Rubric Language and Representation

Even within structured formats, the language of the scoring rubric can embed bias. Rubrics that anchor high scores to communication styles, leadership archetypes, or professional behaviors more common in majority populations will produce biased outcomes even when question standardization is in place. DEI-aware structured interview design reviews rubric language for cultural assumptions and ensures that behavioral anchors reflect the full range of ways a competency can be effectively demonstrated by candidates from diverse professional backgrounds.

Common Challenges and Solutions

| Challenge | Solution |
|---|---|
| Interviewers find the structured format too rigid and resist adoption | Involve interviewers in question development; demonstrate the scoring benefit through calibration sessions; allow limited follow-up probing within the defined structure |
| Structured interview guides become outdated as roles evolve | Build a formal annual review of all guides into the TA calendar; trigger an automatic review whenever a job description is updated |
| Inter-rater reliability remains low despite rubric training | Conduct live calibration sessions using recorded or written sample responses; require written score justification for all rubric items before group debrief |
| Legal challenges to structured scoring outcomes | Ensure all evaluation criteria map directly to role-relevant competencies; retain completed rubric documentation for all candidates for a minimum of two years post-hire |

Real-World Case Studies

Case Study 1: The Technology Company

A 500-person technology company with a high-volume engineering hiring need found that its interview-to-offer rate had fallen to 1-in-9 over twelve months, indicating either poor screening or deeply inconsistent evaluation. An audit revealed that each engineering interviewer was using a different set of informal questions, making it impossible to compare candidates across interview slots. The company implemented a fully structured interview process for all engineering roles, with a five-competency guide and BARS (Behaviorally Anchored Rating Scale) scoring rubrics. Interview-to-offer rate improved to 1-in-4.5 within two hiring cycles, and 12-month engineering performance ratings for new hires improved by 22% compared to the prior cohort.

Case Study 2: The Financial Services Firm

A financial services firm conducting a diversity audit found that female candidates were advancing through initial screening at equal rates to male candidates but converting from hiring manager interview to offer at significantly lower rates. Analysis of hiring manager interview notes found that unstructured evaluations consistently referenced subjective attributes such as confidence and executive presence using language that did not correspond to documented role requirements. The firm implemented structured interviews for all director-level and above hiring, with rubrics requiring behavioral evidence for all competency scores. Female candidate conversion from interview to offer equalized within three hiring cycles.

Case Study 3: The Healthcare Network

A regional healthcare network implemented structured interview guides for nursing and allied health hiring, replacing informal conversational interviews that had produced a 90-day attrition rate of 18% across clinical staff. To maximize adoption, the TA team built the guides directly into their mobile-optimized ATS, so interviewers could score candidates on their phones immediately after each conversation. Interview guide completion rate reached 96% within the first quarter of implementation. Post-hire 90-day attrition for nursing staff fell from 18% to 9% over the first year following full structured interview rollout.

Measuring What Matters: Your Structured Interview Tracking Framework

Structured Interviews Across the Hiring Lifecycle

Pre-Search: Structured Interview Guide Development

The structured interview process begins before the first candidate is contacted. As part of the search design phase, the hiring manager and TA specialist identify the three to five core competencies the role requires, develop two to three structured questions per competency, and build a BARS rubric before any sourcing activity begins. This upfront investment of typically two to four hours per role pays back in faster, more consistent evaluation once candidates enter the active interview process.
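One way to keep guides consistent with these ranges is to store each guide as data and check it before sourcing starts. The sketch below is illustrative only: the class names, the sample role, the questions, and the anchor wording are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Competency:
    name: str
    questions: list[str]
    # BARS anchors: score level -> what a response at that level looks like.
    anchors: dict[int, str] = field(default_factory=dict)


@dataclass
class InterviewGuide:
    role: str
    competencies: list[Competency] = field(default_factory=list)

    def problems(self) -> list[str]:
        """List the ways this guide departs from the ranges described above."""
        issues = []
        if not 3 <= len(self.competencies) <= 5:
            issues.append(f"{len(self.competencies)} competencies; expected 3 to 5")
        for comp in self.competencies:
            if not 2 <= len(comp.questions) <= 3:
                issues.append(f"{comp.name}: {len(comp.questions)} question(s); expected 2 to 3")
            if not comp.anchors:
                issues.append(f"{comp.name}: no rubric anchors defined")
        return issues


# Hypothetical anchors reused across competencies to keep the example short.
anchors = {
    1: "Vague or generic answer with no concrete example",
    3: "Concrete example with a clear action and outcome",
    5: "Concrete example, measurable outcome, and reflection on what changed",
}

guide = InterviewGuide(
    role="Senior Associate",
    competencies=[
        Competency("Client communication", [
            "Tell me about a time you had to deliver unwelcome news to a client.",
            "Describe a time you explained a complex issue to a non-specialist.",
        ], anchors),
        Competency("Prioritization", [
            "Describe a week when competing deadlines collided.",
        ], anchors),
        Competency("Analytical rigor", [
            "Walk me through an analysis where your first conclusion was wrong.",
            "Tell me about a decision you made with incomplete data.",
        ], anchors),
    ],
)

for issue in guide.problems():
    print(issue)
```

Here the check reports that "Prioritization" has only one question, which is the kind of gap that is cheaper to catch at design time than in the interview room.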

Screening Stage: Structured Screening Conversations

Structured interviewing principles apply at the screening stage as well as the formal interview stage. Structured phone screens or video screens, using a consistent three to four question set with simple scoring criteria, dramatically improve the quality of candidates advanced to the hiring manager interview. An interview scorecard built for the screening stage ensures that screening decisions are documented and comparable, not based on post-call impressions that fade within hours.

Evaluation Stage: Multi-Interviewer Structured Assessment

For roles above a defined seniority threshold, structured interviews should be conducted by multiple interviewers, with each interviewer assigned to assess different competency areas. This distributes the evaluation workload, reduces the influence of any single interviewer’s biases, and produces a more comprehensive candidate assessment than any single evaluator can provide. The debrief session, using independently completed rubric scores as the starting point, converts individual assessments into a collective, evidence-based hiring decision.

Post-Hire: Closing the Validity Loop

The structured interview’s value as an organizational learning tool depends on closing the feedback loop between interview scores and hire outcomes. TA teams that track the correlation between structured interview composite scores and 12-month performance ratings for each role family can identify which competency questions are genuinely predictive and which are not, enabling continuous improvement of interview guide quality over successive hiring cycles.

The Real Cost of Unstructured Interviewing

| Scenario | Interview Format | Bad Hire Rate | Annual Cost (40 hires/year) |
|---|---|---|---|
| Current state | Conversational, no rubric | 22-28% | $246,400+ |
| Partial structure | Core questions plus informal follow-up | 16-20% | $179,200 |
| Full structured interview | Standardized questions plus BARS | 10-14% | $112,000 |
| Structured plus AI screening | Full structure plus pre-screened pipeline | 7-11% | $78,400 |

Bad hire cost estimated at $28,000 per instance, including replacement cost and productivity loss. Annual costs calculated at the lower bound of each format’s bad hire rate range (for example, 40 hires × 22% × $28,000 = $246,400).
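Because each format is given as a rate range, the annual cost depends on which point in the range is used. The sketch below computes the cost at the low end, midpoint, and high end of each range from the table, so any single figure can be checked against the range it came from. The names are illustrative.

```python
HIRES_PER_YEAR = 40
COST_PER_BAD_HIRE = 28_000

# Bad hire rate ranges taken from the table above.
RATE_RANGES = {
    "Conversational, no rubric": (0.22, 0.28),
    "Core questions plus informal follow-up": (0.16, 0.20),
    "Standardized questions plus BARS": (0.10, 0.14),
    "Full structure plus pre-screened pipeline": (0.07, 0.11),
}


def annual_cost(bad_hire_rate):
    """Expected yearly cost of bad hires at a given bad hire rate."""
    return HIRES_PER_YEAR * bad_hire_rate * COST_PER_BAD_HIRE


for interview_format, (low, high) in RATE_RANGES.items():
    midpoint = (low + high) / 2
    print(f"{interview_format}: "
          f"low ${annual_cost(low):,.0f}, "
          f"midpoint ${annual_cost(midpoint):,.0f}, "
          f"high ${annual_cost(high):,.0f}")
```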

Related Terms

| Term | Definition |
|---|---|
| Behavioral Interview | An interview format using past-behavior questions to assess how a candidate has demonstrated specific competencies in previous roles |
| Situational Interview | An interview format using hypothetical scenarios to assess how a candidate would approach role-relevant challenges |
| Competency-Based Interview | An interview design approach that maps questions to specific competencies required for role success |
| BARS (Behaviorally Anchored Rating Scale) | A scoring rubric that anchors each score level to specific examples of candidate response quality |
| Inter-Rater Reliability | The degree of agreement between different interviewers evaluating the same candidate using the same criteria |
| Predictive Validity | The statistical correlation between an assessment score and a subsequent measure of job performance |
| Interview Scorecard | A structured evaluation form used to document and score candidate responses during or immediately after an interview |

Frequently Asked Questions

What is the difference between a structured interview and an unstructured interview?

A structured interview uses the same predefined questions for every candidate, evaluated against a consistent scoring rubric. An unstructured interview allows the interviewer to generate questions in real time based on conversational flow. Structured interviews have markedly higher predictive validity for subsequent job performance than unstructured formats (0.51 to 0.63 versus 0.38 in the benchmarks above).

How many questions should a structured interview include?

Research on structured interview design recommends two to three questions per competency, covering three to five competencies per interview. A typical structured interview for a professional role includes eight to fifteen questions total, which can be covered in sixty to ninety minutes without rushing evaluations.

Can structured interviews be used for senior executive roles?

Yes, and they are particularly valuable at the executive level where hiring decisions carry the highest cost. Executive structured interviews typically use a combination of behavioral and situational questions at higher complexity, with rubrics developed in consultation with the board or executive committee to reflect the specific leadership demands of the role.

Do candidates prefer structured or unstructured interviews?

Research consistently finds that candidates rate structured interviews as more fair, regardless of outcome. Candidates who receive offers through structured processes report higher confidence that the decision was merit-based. Candidates who are not selected through structured processes report higher willingness to reapply in the future, because the structure signals the evaluation was conducted seriously.

How do you train interviewers to use structured interview rubrics?

Effective rubric training has three components: a review of the competency definitions and question rationale; a practice scoring session using recorded or written sample candidate responses; and a calibration conversation where interviewers compare practice scores and align on what distinguishes each rating level. This training typically requires ninety minutes to two hours and should be repeated whenever rubrics are meaningfully updated.

Conclusion

The structured interview is not a bureaucratic constraint on good hiring; it is the mechanism that makes good hiring replicable.

Organizations relying on interviewer intuition and conversational rapport are not making more human hiring decisions. They are making less accurate ones, at greater legal risk, with greater demographic inequity, and with higher bad hire costs. The research on structured interviews is among the clearest in organizational science: standardized questions, predefined rubrics, and calibrated scoring consistently outperform improvised conversations on every meaningful measure of hire quality.

The SHRM research and guidance on structured interviewing confirms the same conclusion that organizational psychologists have reached for decades. The organizations that treat structured interviewing as a core talent acquisition infrastructure investment, rather than a formality reserved for regulated industries, are the ones that build hiring capability that compounds over time.

Build the guide before you meet the candidate. Score before you debrief. Close the feedback loop after every hire. The discipline pays for itself faster than almost any other investment in talent acquisition quality.